Author(s): Rashmi

Originally published on Towards AI.

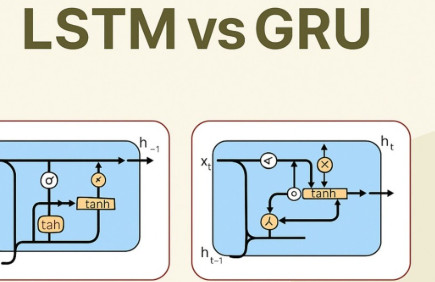

LSTM vs GRU: Architecture, Performance and Use Cases

Imagine you’re reading a long book and trying to remember the main plot points.

This article compares the Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) architectures: their structural components and how each works, how they perform, and where they are used in practice. It covers scenarios where LSTM excels thanks to its more complex control over a separate cell state, and highlights GRU's advantages in speed and efficiency, especially in real-time applications. It also offers implementation guidance, including when to choose each architecture and how both fit into modern machine learning workflows, and closes with recommendations for applications such as natural language processing and time series analysis.
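To make the structural difference concrete, here is a minimal sketch in plain Python (not the author's code) of one time step of each cell, using the standard textbook formulations. The LSTM uses forget, input, and output gates plus a candidate update acting on a separate cell state `c`. The GRU (following Cho et al., 2014) uses update and reset gates acting directly on the hidden state. The helper names (`init_gate`, `lstm_step`, `gru_step`, `param_count`) are illustrative assumptions. Each gate is assumed to have one weight matrix over the concatenated `[h_prev; x]` and one bias vector, and the weights are random, so the outputs say nothing beyond shapes and parameter counts.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def affine(W, b, v):
    # Computes W @ v + b for list-of-lists W and list vectors b, v.
    return [sum(w * x for w, x in zip(row, v)) + bi for row, bi in zip(W, b)]

def init_gate(hidden, inputs, rng):
    # Hypothetical helper: one weight matrix over [h_prev; x] plus one bias vector per gate.
    W = [[rng.uniform(-0.1, 0.1) for _ in range(hidden + inputs)] for _ in range(hidden)]
    return W, [0.0] * hidden

def lstm_step(p, x, h_prev, c_prev):
    hx = h_prev + x  # list concatenation: [h_prev; x]
    f = [sigmoid(z) for z in affine(*p["f"], hx)]    # forget gate
    i = [sigmoid(z) for z in affine(*p["i"], hx)]    # input gate
    o = [sigmoid(z) for z in affine(*p["o"], hx)]    # output gate
    g = [math.tanh(z) for z in affine(*p["g"], hx)]  # candidate cell update
    # The separate cell state: keep part of the old memory, write part of the new.
    c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, g)]
    h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
    return h, c

def gru_step(p, x, h_prev):
    hx = h_prev + x
    z = [sigmoid(v) for v in affine(*p["z"], hx)]  # update gate
    r = [sigmoid(v) for v in affine(*p["r"], hx)]  # reset gate
    rh = [rk * hk for rk, hk in zip(r, h_prev)]    # reset applied to the old state
    n = [math.tanh(v) for v in affine(*p["n"], rh + x)]  # candidate state
    # No separate cell state: interpolate between the old state and the candidate.
    return [zk * hk + (1 - zk) * nk for zk, hk, nk in zip(z, h_prev, n)]

def param_count(p):
    return sum(len(W) * len(W[0]) + len(b) for W, b in p.values())

def count_formula(hidden, inputs, gates):
    # gates * (hidden * (hidden + inputs) weights + hidden biases)
    return gates * (hidden * (hidden + inputs) + hidden)

rng = random.Random(0)
H, X = 8, 4  # hidden size and input size (arbitrary illustrative values)
lstm = {k: init_gate(H, X, rng) for k in "fiog"}  # four gate blocks
gru = {k: init_gate(H, X, rng) for k in "zrn"}    # three gate blocks

h, c = [0.0] * H, [0.0] * H
hg = [0.0] * H
seq = [[rng.uniform(-1, 1) for _ in range(X)] for _ in range(5)]
for x in seq:
    h, c = lstm_step(lstm, x, h, c)
    hg = gru_step(gru, x, hg)

print(param_count(lstm), param_count(gru))  # 416 312
```

With hidden size 8 and input size 4, each gate block holds 8 × 12 weights plus 8 biases, so the LSTM's four blocks total 416 parameters and the GRU's three total 312. For any hidden size, the GRU carries three quarters of the LSTM's per-step weights, which is one source of the speed and efficiency advantage the article attributes to it. Frameworks may count slightly differently: PyTorch's `nn.LSTM` and `nn.GRU`, for example, keep two bias vectors per gate.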

Read the entire blog for free on Medium.
