Reinforcement Learning for Enterprise Agents

For the complete technical report, click here. Interested in trying Databricks Custom RL on your enterprise agent? Click here.

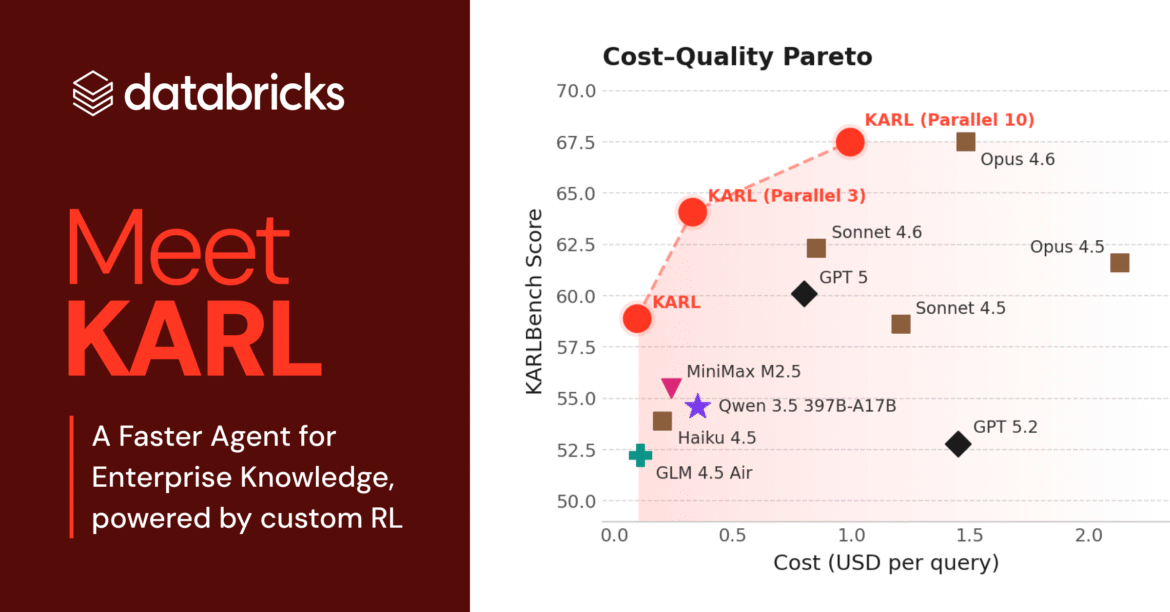

The improved reasoning capabilities of current models have led to an increase in the number of agents deployed for cognitive tasks, such as writing code, answering questions about enterprise data, and automating common workflows. While the models used in enterprise operations are very powerful, they are also extremely expensive, and inference costs are beginning to rise unsustainably for many use cases.

In this post and the related technical report, we describe our experience using reinforcement learning (RL) to build a custom model powering a use case that is a key part of our Agent Bricks product. This example demonstrates that, for relatively low cost, it is possible to create custom models that strictly dominate frontier models on all three important dimensions: inference cost, latency, and quality. Our findings are consistent with other industry observations, such as Cursor's Composer model, where RL-based optimization was able to significantly improve both speed and quality compared to alternatives.
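To make "strictly dominates" concrete, here is a minimal sketch of one reading of the term: a model dominates another only if it is better on every one of the three dimensions at once. The `ModelProfile` type, the field names, and all numbers are hypothetical placeholders chosen for illustration; they are not measurements from the technical report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical summary of a model on the three dimensions discussed above."""
    name: str
    cost_per_query: float  # inference cost, lower is better
    latency_s: float       # end-to-end latency in seconds, lower is better
    quality: float         # task quality score, higher is better


def strictly_dominates(a: ModelProfile, b: ModelProfile) -> bool:
    """Return True only if `a` beats `b` on cost, latency, and quality simultaneously."""
    return (
        a.cost_per_query < b.cost_per_query
        and a.latency_s < b.latency_s
        and a.quality > b.quality
    )


# Placeholder values for illustration only, not real benchmark results.
custom = ModelProfile("custom-rl-model", cost_per_query=0.5, latency_s=2.0, quality=0.80)
frontier = ModelProfile("frontier-model", cost_per_query=2.0, latency_s=6.0, quality=0.75)

print(strictly_dominates(custom, frontier))
```

Under this definition a tie on any single dimension means no strict dominance, which is a deliberately strict reading of the claim.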

KARL: a fast, robust, cheap knowledge agent for Databricks users

The model we trained, which we call KARL, addresses a critical enterprise capability, grounded reasoning: answering questions by searching documents, finding facts, cross-referencing information, and reasoning over dozens or hundreds of steps. Many Databricks products, such as the Agent Bricks Knowledge Assistant, require grounded reasoning. Unlike math and coding, grounded reasoning tasks are difficult to verify, because there is often no single correct answer. In situations like this, it is especially difficult to guide reinforcement learning to a good solution.

Using the RL techniques and infrastructure developed at Databricks, KARL matches the performance of the world’s most powerful proprietary models at a fraction of the serving cost and latency, including on new grounded reasoning tasks it had never seen before. (See the technical report for complete details.) We did this with only a few thousand GPU hours of training and completely synthetic data. In internal testing with human users, KARL provided better and more comprehensive responses than our existing products and the latest frontier models. This research is making its way into the Databricks agents you use today, like Agent Bricks, grounding answers in your unstructured and structured data in the Databricks Lakehouse.

A reusable RL pipeline for Databricks customers

We’re excited to share that the same RL pipeline and infrastructure we used to build KARL (and the other agents we’ll talk about soon) is now available to Databricks customers who want to improve model performance and reduce costs for their high-volume agent workloads. Almost all real-world enterprise tasks are difficult to verify, so KARL paves the way for a better experience not only for Databricks users but also for customers who want to build their own custom RL models for their popular agents. Our Custom RL private preview, supported by Serverless GPU Compute, enables you to use the KARL infrastructure to create a more efficient, domain-specific version of your agent. If you have an AI agent that is growing rapidly and are interested in optimizing it with RL, sign up here to express your interest in this preview.