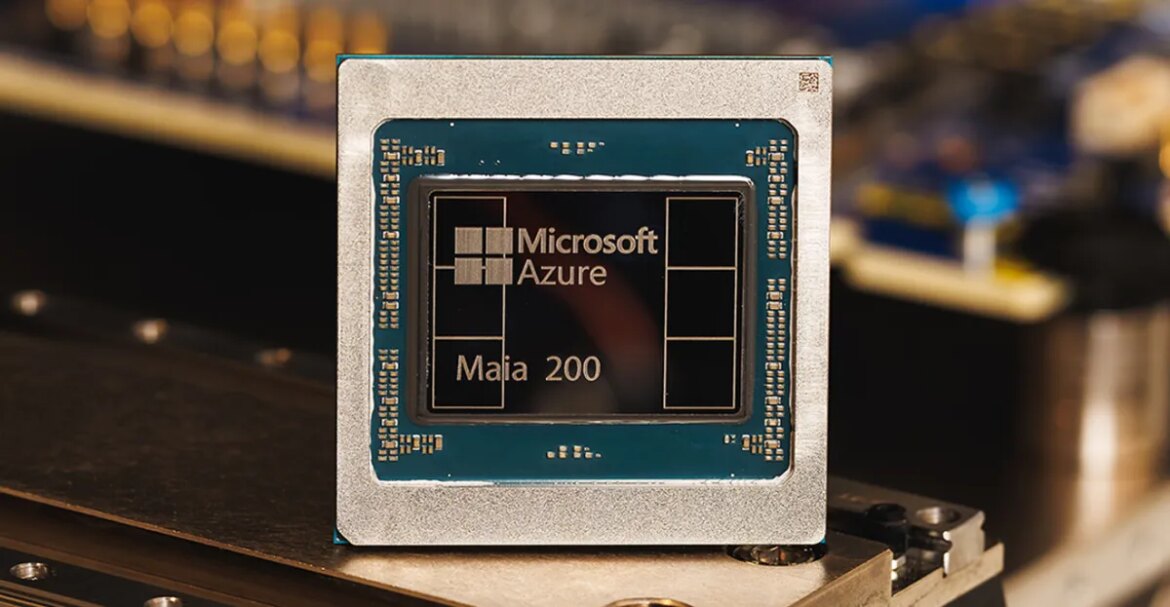

Microsoft is today announcing the Maia 200, the successor to its first in-house AI chip. Built on TSMC’s 3nm process, Microsoft says its Maia 200 AI accelerator delivers “up to 3x the FP4 performance of the third-generation Amazon Trainium and FP8 performance above Google’s seventh-generation TPU.”

Each Maia 200 chip has more than 100 billion transistors, all designed to handle massive AI workloads. “Maia 200 can easily run the largest models today, with ample room for even larger models in the future,” says Scott Guthrie, executive vice president of Microsoft’s cloud and AI division.

Microsoft will use Maia 200 to host OpenAI’s GPT-5.2 models and others for Microsoft Foundry and Microsoft 365 Copilot. “The Maia 200 is the most efficient inference system Microsoft has ever deployed, with up to 30 percent better performance per dollar than the latest generation hardware in our fleet today,” says Guthrie.

Microsoft’s willingness to compare its performance with that of its closest Big Tech competitors marks a change from 2023, when it first launched the Maia 100 and did not want to draw direct comparisons with the AI cloud capabilities of Amazon and Google. However, both Google and Amazon are working on next-generation AI chips. Amazon is even working with Nvidia to integrate its upcoming Trainium4 chip with NVLink 6 and Nvidia’s MGX rack architecture.

Microsoft’s superintelligence team will be the first to use its Maia 200 chips, and the company is also inviting academics, developers, AI labs, and open-source model project contributors to an early preview of the Maia 200 software development kit. Microsoft is starting to deploy these new chips today in its Azure US Central data center region, with additional regions to follow.