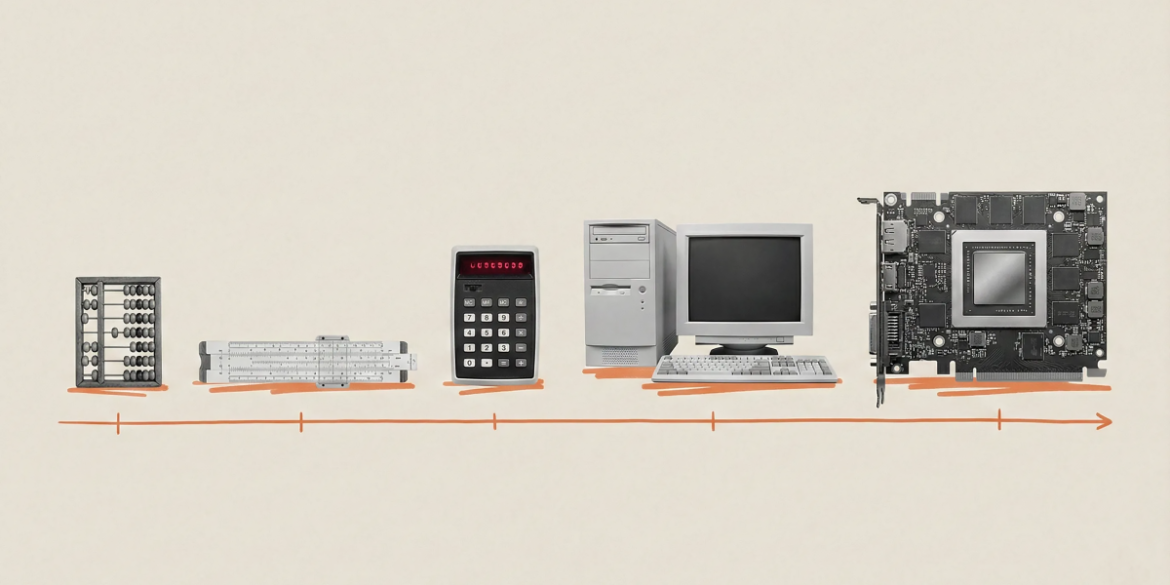

We evolved for a linear world. If you walk for an hour, you cover a certain distance. Walk for two hours and you’ll cover twice the distance. This intuition served us well on the savannah. But it fails catastrophically when faced with AI and the exponential trends at its core.

Since I started working on AI in 2010, the amount of training compute going into frontier AI models has increased 1 trillion times – from about 10¹⁴ flops (floating-point operations, the main unit of computation) for early systems to more than 10²⁶ for the largest models today. That is an explosion. Everything else in AI follows from this fact.
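As a quick check on that arithmetic, the sketch below computes the growth between the two figures and the average yearly multiplier it would imply. The 16-year span is an assumption for illustration (2010 to roughly the present), and the function names are hypothetical.

```python
import math


def growth_factor(start_flops: float, end_flops: float) -> float:
    """Total multiplicative growth between two training-compute budgets."""
    return end_flops / start_flops


def implied_annual_multiplier(total_growth: float, years: float) -> float:
    """Constant yearly multiplier that compounds to total_growth over `years`."""
    return total_growth ** (1.0 / years)


total = growth_factor(1e14, 1e26)
# Assumption: a 16-year span; the article does not state the exact end date.
yearly = implied_annual_multiplier(total, years=16)

print(f"total growth: about 10^{math.log10(total):.0f}")
print(f"implied average yearly multiplier: about {yearly:.1f}x")
```

Under that assumed span, a trillion-fold increase works out to a multiplier of between five and six times per year, compounded.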

Skeptics keep predicting walls. And they keep getting it wrong as this epic, generational compute ramp-up continues. Often, they point out that Moore’s Law is slowing down. They also cite a lack of data, or limits on energy.

But when you look at the combined forces driving this revolution, the exponential trend seems quite predictable. To understand why, it’s worth looking at the complex and rapidly growing reality beneath the headlines.

Think of AI training as a room full of people working on calculators. For years, adding computational power meant adding more people with calculators to that room. Most of the time those workers sat idle, drumming their fingers on the desk, waiting for the numbers for their next calculation to arrive. Every pause was wasted capacity. Today’s revolution goes beyond more and better calculators (although it delivers those too); it’s really about making sure all those calculators never stop, and that they work well together.

Three advances are now coming together to enable this. First, the basic calculators became faster. Nvidia’s chips have delivered a more than seven-fold increase in raw performance in just six years, from 312 teraflops in 2020 to 2,250 teraflops today. Our own Maia 200 chip, launched this January, delivers 30% better performance per dollar than any other hardware in our fleet. Second, the numbers arrive faster because of a technology called HBM, or high-bandwidth memory, which stacks chips vertically like tiny skyscrapers; the latest generation, HBM3, triples the bandwidth of its predecessor, feeding data to the processors fast enough to keep them busy all the time. Third, the room of people with calculators became an office, and then an entire campus or city. Technologies like NVLink and InfiniBand connect hundreds of thousands of GPUs into warehouse-sized supercomputers that act as single cognitive entities. A few years ago this was impossible.

All these gains compound to deliver dramatically more computation. Where in 2020 it took 167 minutes to train a language model on eight GPUs, it now takes less than four minutes on equivalent modern hardware. To put this in perspective: Moore’s Law would predict only about a 5x improvement over this period. We saw 50x. We’ve gone from two GPUs training AlexNet, the image recognition model that kicked off the modern boom in deep learning in 2012, to today’s largest clusters of over 100,000 GPUs, each individually far more powerful than its predecessors.
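The comparison with Moore’s Law can be sketched in a few lines. This is an illustration under stated assumptions, not the calculation behind the figures above: the 5-year span and the 24-month doubling period are assumed, and the function names are hypothetical.

```python
def speedup(old_minutes: float, new_minutes: float) -> float:
    """How many times faster the new training run is than the old one."""
    return old_minutes / new_minutes


def moores_law_factor(years: float, doubling_years: float = 2.0) -> float:
    """Improvement predicted by one doubling every `doubling_years` years."""
    return 2.0 ** (years / doubling_years)


# "Less than four minutes" makes this a lower bound on the observed speedup.
observed = speedup(167, 4)
# Assumptions: a 5-year span and a 24-month doubling period.
predicted = moores_law_factor(years=5)

print(f"observed speedup: at least {observed:.0f}x")
print(f"Moore's Law prediction: about {predicted:.1f}x")
```

With exactly four minutes the observed speedup comes to roughly 42x; a training time of about 3.3 minutes would correspond to the 50x figure. Either way, it is far beyond what transistor scaling alone would predict.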

Then there is the revolution in software. Research from Epoch AI suggests that the computation required to reach a given performance level halves approximately every eight months, much faster than Moore’s Law’s traditional 18-to-24-month doubling. The cost of serving some recent models has fallen by up to 900 times on an annualized basis. AI is becoming ever cheaper to deploy.
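To compare these rates on the same footing, the sketch below converts each doubling (or halving) period into an equivalent yearly improvement factor. It treats a halving of required compute as a doubling of efficiency; the function name is hypothetical.

```python
def annual_factor(months_per_doubling: float) -> float:
    """Yearly improvement factor implied by one doubling every N months."""
    return 2.0 ** (12.0 / months_per_doubling)


# Halving the compute needed every 8 months is treated as doubling efficiency.
algorithmic = annual_factor(8)
moore_fast = annual_factor(18)
moore_slow = annual_factor(24)

print(f"algorithmic efficiency: about {algorithmic:.2f}x per year")
print(f"Moore's Law (18 months): about {moore_fast:.2f}x per year")
print(f"Moore's Law (24 months): about {moore_slow:.2f}x per year")
```

An eight-month halving works out to roughly 2.8x per year, against roughly 1.4x to 1.6x per year for the traditional Moore’s Law range.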

The near-term numbers are just as striking. Consider that leading labs are growing capacity by approximately 4 times annually. Since 2020, the computation used to train frontier models has increased 5x every year. Global AI-relevant compute is projected to reach 100 million H100-equivalents by 2027, a tenfold increase in three years. Put it all together and we’re expecting a 1,000-fold increase in effective compute by the end of 2028. It is plausible that by 2030 we will be bringing an additional 200 gigawatts of compute online every year – similar to the total energy use of the UK, France, Germany and Italy.

What do we get from all this? I believe this will drive the transformation from chatbots to nearly human-level agents – semi-autonomous systems capable of writing code for days, completing projects lasting weeks and months, making calls, negotiating contracts, managing logistics, etc. Forget basic helpers that answer questions. Think of teams of AI workers who brainstorm, collaborate, and execute. We are only at the beginning of this transition, and its implications extend far beyond technology. Every industry built on cognitive work will be transformed.

The obvious constraint here is energy. A refrigerator-sized AI rack consumes 120 kilowatts, equivalent to 100 homes. But this hunger is colliding with another exponential: solar costs have fallen almost 100-fold in 50 years; battery prices have fallen 97% in three decades. A path to clean scaling is emerging.

The capital is being deployed. The engineering is delivering. $100 billion clusters, 10-gigawatt power draws, warehouse-scale supercomputers… these aren’t science fiction anymore. Ground is now being broken on these projects in America and around the world. As a result, we are moving toward true cognitive abundance. At Microsoft AI, this is the world our Superintelligence Lab is planning and building for.

Skeptics accustomed to a linear world will continue to predict diminishing returns. They will continue to be surprised. The compute explosion is the technology story of our time, full stop. And this is still only the beginning.

Mustafa Suleyman is the CEO of Microsoft AI.