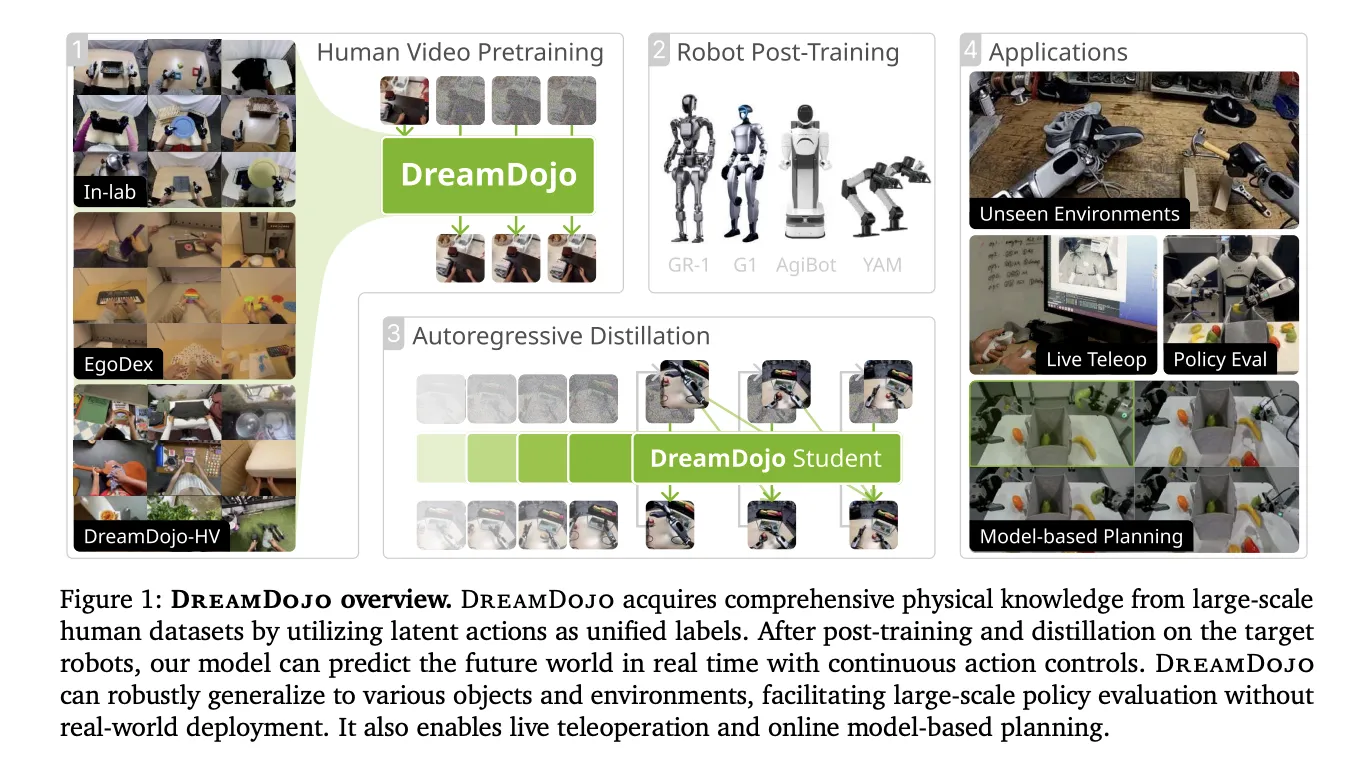

Building simulators for robots has been a long-standing challenge. Traditional engines require manually coded physics and complete 3D models. NVIDIA is changing this with DreamDojo, a fully open-source, generalizable robot world model. Instead of using a physics engine, DreamDojo ‘dreams’ the results of robot actions directly in pixels.

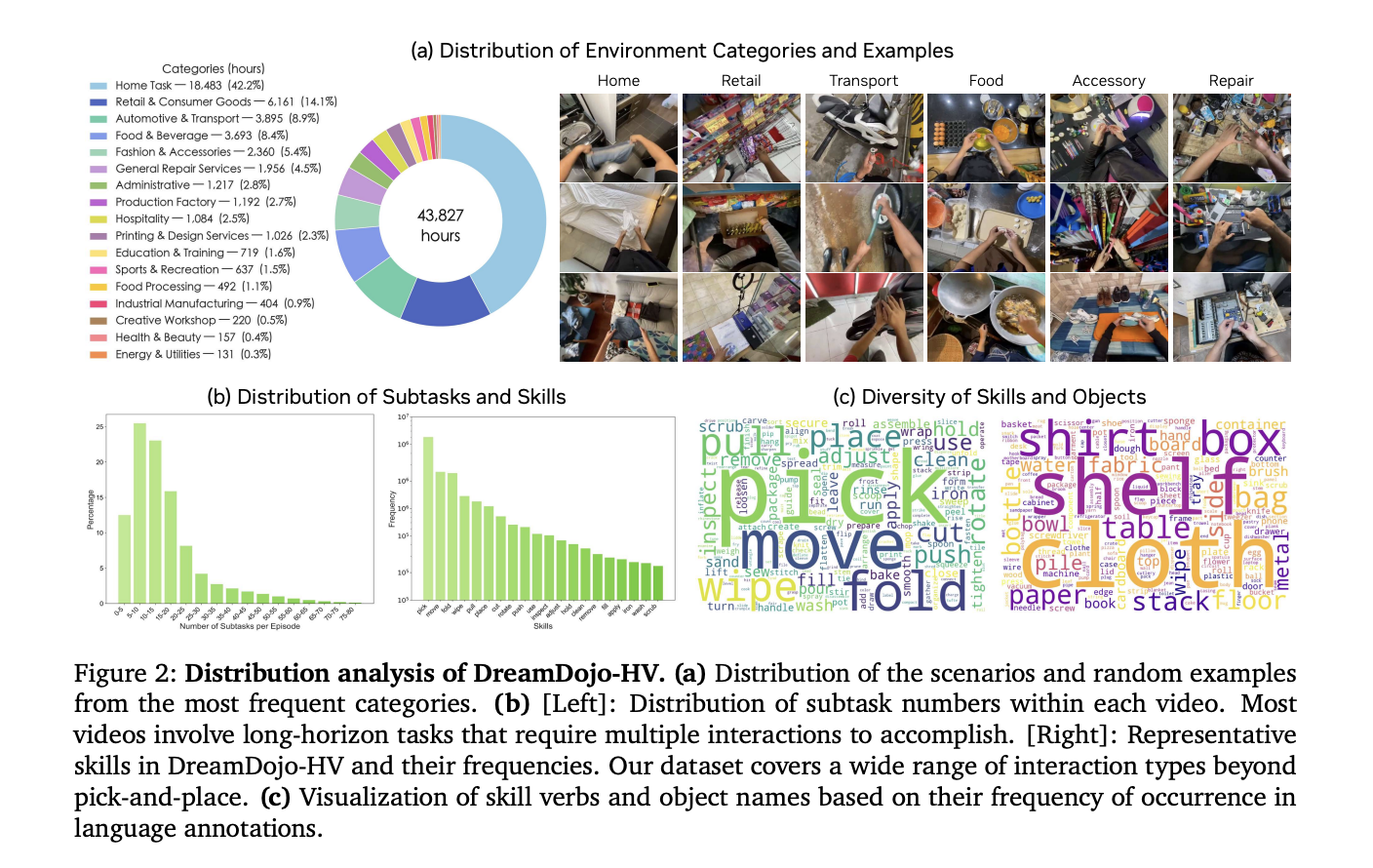

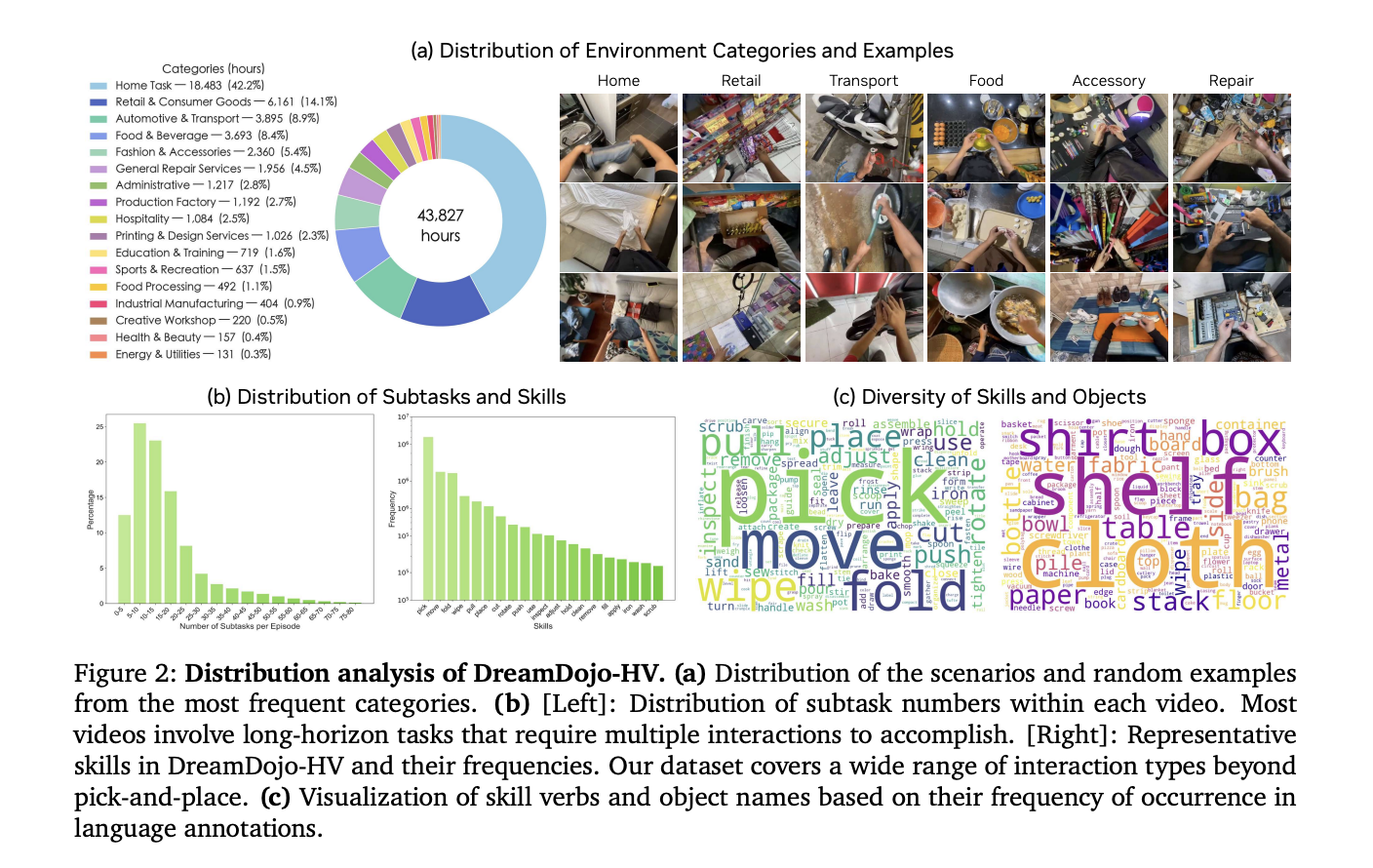

Scaling robotics with 44k+ hours of human experience

The biggest hurdle for AI in robotics is data. Collecting robot-specific data is expensive and slow. DreamDojo addresses this by learning from 44k+ hours of egocentric human video. This dataset, called DreamDojo-HV, is the largest of its kind for world model pretraining.

- It contains 6,015 unique tasks across 1M+ trajectories.

- The data contains 9,869 unique scenes and 43,237 unique objects.

- Pre-training used 100,000 NVIDIA H100 GPU hours to create 2B and 14B model variants.

Humans have already mastered complex physics, like pouring liquids or folding clothes. DreamDojo uses this human data to give robots a ‘common sense’ understanding of how the world works.

Bridging the gap with latent actions

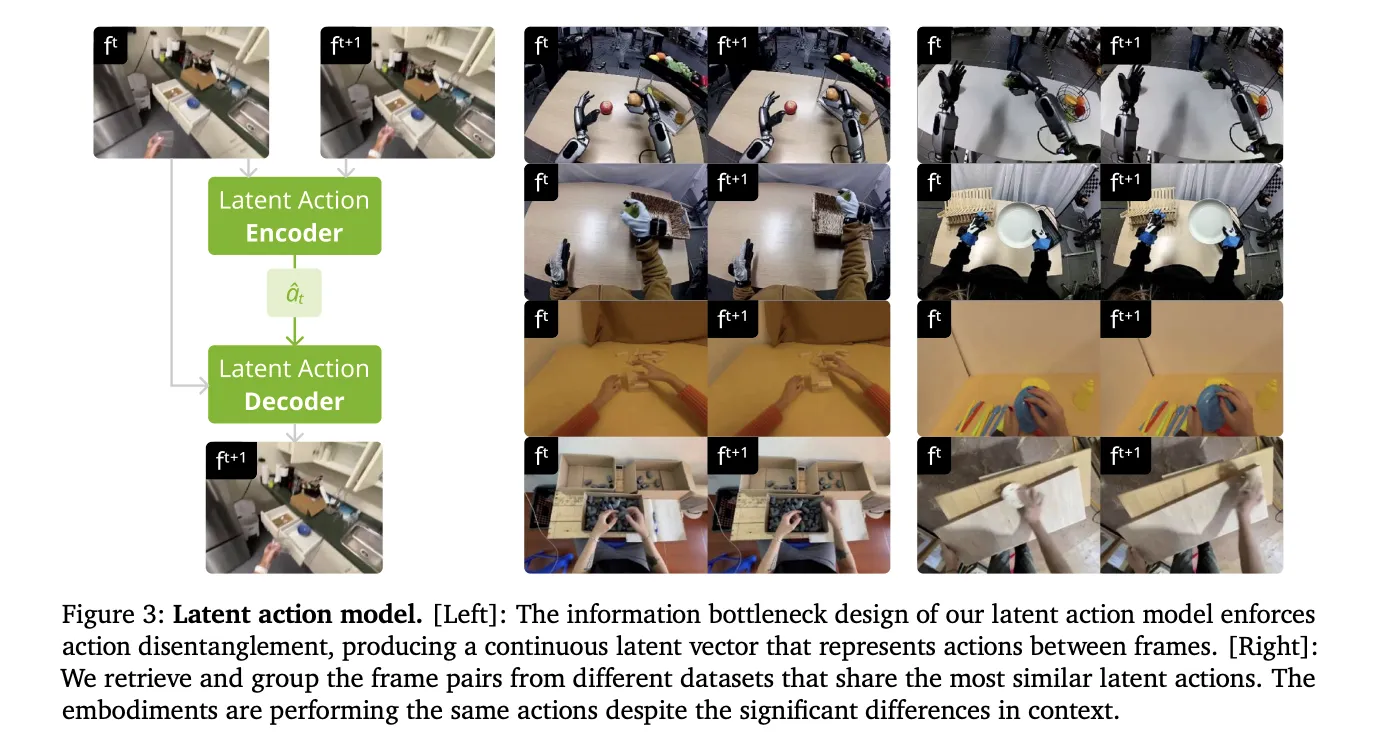

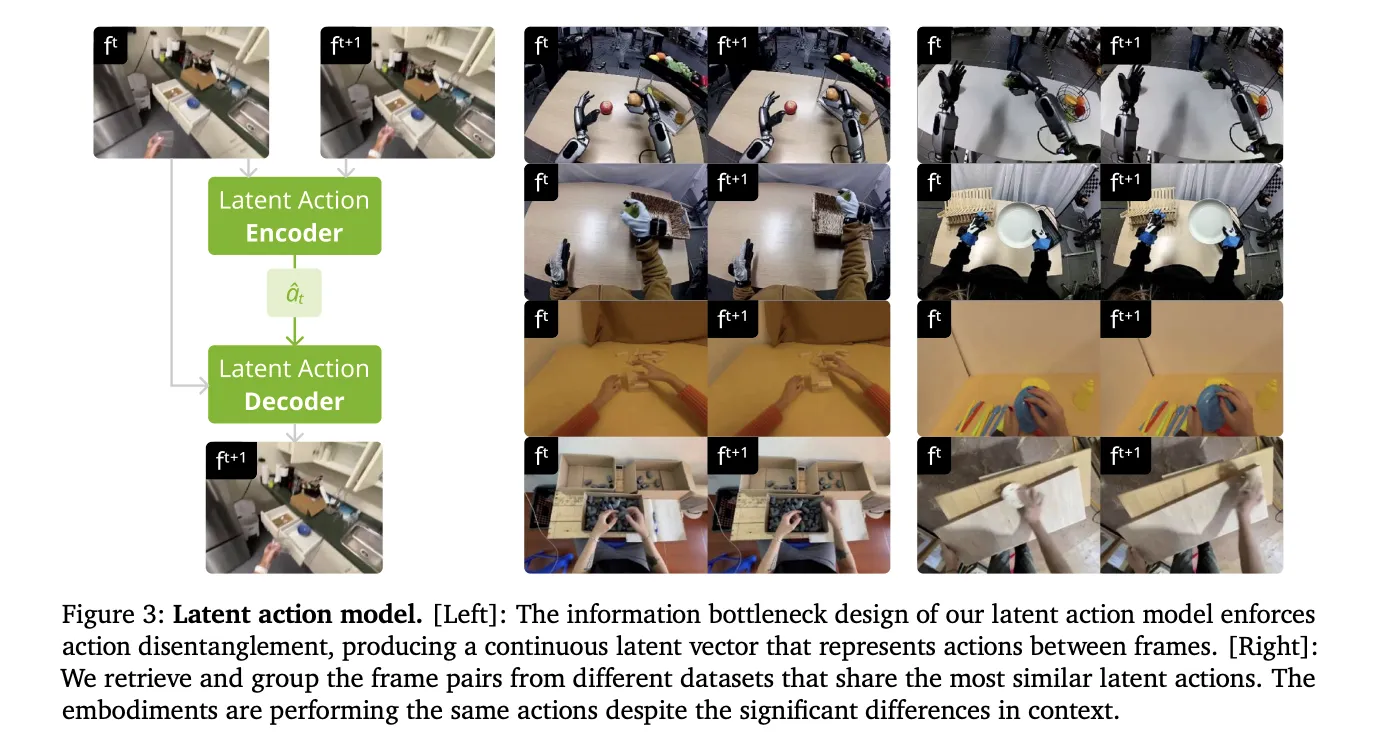

Human videos do not come with robot motor commands. To make these videos ‘robot-readable’, NVIDIA’s research team introduced continuous latent actions. This system uses a spatiotemporal Transformer VAE to extract actions directly from pixels.

- The VAE encoder takes 2 consecutive frames and outputs a 32-dimensional latent vector.

- This vector represents the most significant motion between frames.

- The design creates an information barrier that separates the action from the visual context.

- This allows models to learn physics from humans and apply them to different robot bodies.
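The encoder interface described above can be sketched in a few lines. This is a minimal illustration, not the DreamDojo implementation: a random linear map stands in for the spatiotemporal Transformer, frames are flat lists of pixel values, and the names `ToyLatentActionEncoder` and `kl_to_standard_normal` are hypothetical. Only the two-frame input and the 32-dimensional latent come from the article.

```python
import math
import random

LATENT_DIM = 32  # latent size stated in the article


class ToyLatentActionEncoder:
    """Linear stand-in (assumption) for the spatiotemporal Transformer VAE encoder."""

    def __init__(self, num_pixels, seed=0):
        self.rng = random.Random(seed)
        in_dim = 2 * num_pixels  # two consecutive frames, concatenated
        scale = 1.0 / math.sqrt(in_dim)
        self.w_mu = [[self.rng.gauss(0.0, scale) for _ in range(in_dim)]
                     for _ in range(LATENT_DIM)]
        self.w_logvar = [[self.rng.gauss(0.0, scale) for _ in range(in_dim)]
                         for _ in range(LATENT_DIM)]

    def encode(self, frame_t, frame_t1):
        """Return the mean and log-variance of the latent action distribution."""
        x = list(frame_t) + list(frame_t1)
        mu = [sum(w * v for w, v in zip(row, x)) for row in self.w_mu]
        logvar = [sum(w * v for w, v in zip(row, x)) for row in self.w_logvar]
        return mu, logvar

    def sample(self, frame_t, frame_t1):
        """Draw one latent action with the reparameterization trick."""
        mu, logvar = self.encode(frame_t, frame_t1)
        return [m + math.exp(0.5 * lv) * self.rng.gauss(0.0, 1.0)
                for m, lv in zip(mu, logvar)]


def kl_to_standard_normal(mu, logvar):
    """KL divergence from N(mu, sigma^2) to N(0, I), the usual VAE regularizer."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv for m, lv in zip(mu, logvar))


# Hypothetical data: two tiny "frames" of 48 pixel values each.
rng = random.Random(1)
frame_a = [rng.random() for _ in range(48)]
frame_b = [rng.random() for _ in range(48)]
encoder = ToyLatentActionEncoder(num_pixels=48)
latent_action = encoder.sample(frame_a, frame_b)
```

In a standard VAE, the small latent size together with the KL term limits how much information the latent can carry, which is one plausible reading of how the bottleneck keeps motion and discards appearance.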

Better physics through architecture

DreamDojo is built on the Cosmos-Predict2.5 latent video diffusion model. It uses the WAN2.2 tokenizer, which has a temporal compression ratio of 4. The team improved the architecture with 3 key features:

- Relative actions: The model uses joint deltas instead of absolute poses. This makes it easier for the model to generalize to different trajectories.

- Chunked action injection: It injects 4 consecutive actions into each latent frame. This aligns actions with the tokenizer’s compression ratio and fixes causality confusion.

- Temporal consistency loss: A new loss function matches the predicted frame velocities to ground-truth transitions. This reduces visual artifacts and keeps objects physically consistent.
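The three changes above can be illustrated with a short sketch. The function names are hypothetical, and the loss is written as a plain mean squared error between frame-to-frame differences, which is an assumption about its form rather than the exact loss used in DreamDojo. Only the joint deltas, the chunk size of 4, and the idea of matching frame velocities come from the article.

```python
def to_relative_actions(joint_poses):
    """Convert absolute joint poses [T][J] into per-step joint deltas [T-1][J]."""
    return [
        [curr_j - prev_j for prev_j, curr_j in zip(prev, curr)]
        for prev, curr in zip(joint_poses, joint_poses[1:])
    ]


def chunk_actions(actions, chunk_size=4):
    """Group consecutive actions so each latent frame receives `chunk_size` of them."""
    if len(actions) % chunk_size != 0:
        raise ValueError("number of actions must be a multiple of chunk_size")
    return [actions[i:i + chunk_size] for i in range(0, len(actions), chunk_size)]


def temporal_consistency_loss(pred_frames, true_frames):
    """Mean squared error between predicted and ground-truth frame velocities.

    A "velocity" here is the difference between consecutive frames (assumed form).
    """
    if len(pred_frames) != len(true_frames) or len(pred_frames) < 2:
        raise ValueError("need two equally long sequences of at least 2 frames")
    total, count = 0.0, 0
    for t in range(len(pred_frames) - 1):
        for p0, p1, g0, g1 in zip(pred_frames[t], pred_frames[t + 1],
                                  true_frames[t], true_frames[t + 1]):
            total += ((p1 - p0) - (g1 - g0)) ** 2
            count += 1
    return total / count


# Hypothetical 2-joint trajectory with 5 poses, giving 4 deltas and 1 chunk.
poses = [[0.0, 1.0], [0.5, 1.0], [1.0, 1.5], [1.0, 2.0], [2.0, 2.0]]
deltas = to_relative_actions(poses)
chunks = chunk_actions(deltas, chunk_size=4)
```

Because the tokenizer compresses 4 video frames into one latent frame, grouping actions in fours gives each latent frame exactly the actions that produced it.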

Distillation for 10.81 fps real-time interaction

A simulator is only useful if it is fast. Standard diffusion models require too many denoising steps for use in real time. The NVIDIA team used a Self Forcing distillation pipeline to solve this.

- Distillation training was conducted on 64 NVIDIA H100 GPUs.

- The ‘Student’ model reduces denoising from 35 steps to 4 steps.

- The final model achieves a real-time speed of 10.81 FPS.

- It is stable for a continuous rollout of 60 seconds (600 frames).
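A quick back-of-the-envelope calculation puts these figures in context. The sketch below uses only the numbers quoted above; the variable names are illustrative, and the step ratio counts denoising steps only, so it is not a measured wall-clock speedup.

```python
TEACHER_STEPS = 35     # denoising steps before distillation
STUDENT_STEPS = 4      # denoising steps after distillation
FPS = 10.81            # reported speed of the distilled model
ROLLOUT_FRAMES = 600   # reported stable rollout length

# Reduction in denoising steps per generated frame.
step_reduction = TEACHER_STEPS / STUDENT_STEPS

# Time available per frame at the reported speed, in milliseconds.
frame_budget_ms = 1000.0 / FPS

# Wall-clock duration of a 600-frame rollout generated at 10.81 FPS.
rollout_seconds = ROLLOUT_FRAMES / FPS

print(f"step reduction: {step_reduction:.2f}x")
print(f"per-frame budget: {frame_budget_ms:.1f} ms")
print(f"600-frame rollout: {rollout_seconds:.1f} s")
```

The 60-second figure corresponds to 600 frames at a nominal 10 frames per second; generated at 10.81 FPS, the same 600 frames take slightly less than a minute to produce.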

Unlocking downstream applications

DreamDojo’s speed and accuracy enable many advanced applications for AI engineers.

1. Credible Policy Evaluation

Testing robots in the real world is risky. DreamDojo serves as a high-fidelity simulator for benchmarking.

- Its simulated success rates show a Pearson correlation of 𝑟 = 0.995 with real-world outcomes.

- The Mean Maximum Rank Violation (MMRV) is only 0.003.
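Both metrics compare per-policy success rates measured in simulation against those measured on real hardware. The sketch below shows one way to compute them. The MMRV formula follows a commonly used definition (for each policy, the largest real-world performance gap among the pairs that the simulator ranks in the wrong order, averaged over policies), which is assumed here rather than taken from the DreamDojo release. The success rates are made-up illustration values.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def mmrv(sim, real):
    """Mean Maximum Rank Violation (assumed definition, see lead-in).

    A pair (i, j) is a violation when the simulator and the real world disagree
    on which policy is better; its size is the real-world gap |real[i] - real[j]|.
    """
    n = len(sim)
    worst_per_policy = []
    for i in range(n):
        violations = [
            abs(real[i] - real[j])
            if (sim[i] < sim[j]) != (real[i] < real[j]) else 0.0
            for j in range(n) if j != i
        ]
        worst_per_policy.append(max(violations, default=0.0))
    return sum(worst_per_policy) / n


# Hypothetical success rates for three policies (not from the paper).
sim_success = [0.2, 0.6, 0.5]
real_success = [0.1, 0.5, 0.7]

r = pearson_r(sim_success, real_success)
violation = mmrv(sim_success, real_success)
```

A high Pearson 𝑟 means simulated success tracks real success closely, while a low MMRV means the simulator rarely misorders policies whose real-world performance differs by much.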

2. Model-Based Planning

Robots can use DreamDojo to ‘look ahead’. A robot can simulate multiple action sequences and choose the best one.

- In a fruit-packing task, this improved real-world success rates by 17%.

- Compared to random sampling, this provided a 2-fold increase in success.
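The ‘look ahead’ idea can be sketched as a simple sample-and-score loop: propose several candidate action sequences, roll each one out in the world model, and keep the best. Everything below is a toy under stated assumptions. `toy_world_model` is a hypothetical 1-D stand-in for the learned video model, and `score` is a hypothetical distance-to-goal function; the article does not specify how DreamDojo rollouts are scored.

```python
import random


def toy_world_model(state, action):
    """Hypothetical stand-in for the world model: a 1-D position update."""
    return state + action


def score(state, goal):
    """Hypothetical scoring function: closer to the goal is better."""
    return -abs(goal - state)


def plan(state, goal, num_candidates=64, horizon=5, seed=0):
    """Sample candidate action sequences, simulate each, and return the best."""
    rng = random.Random(seed)
    best_sequence, best_score = None, float("-inf")
    for _ in range(num_candidates):
        sequence = [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        simulated = state
        for action in sequence:
            simulated = toy_world_model(simulated, action)  # imagined rollout
        candidate_score = score(simulated, goal)
        if candidate_score > best_score:
            best_sequence, best_score = sequence, candidate_score
    return best_sequence, best_score


best_sequence, best_score = plan(state=0.0, goal=2.0)
```

With `num_candidates=1` the planner reduces to executing a single random sample, which is the kind of baseline the 2-fold comparison above refers to.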

3. Live Teleoperation

Developers can teleoperate virtual robots in real time. The NVIDIA team demonstrated this using a PICO VR controller and a local desktop with an NVIDIA RTX 5090. It allows safe and fast data collection.

Model Performance Summary

| Metric | DreamDojo-2B | DreamDojo-14B |
| --- | --- | --- |
| Physics accuracy | 62.50% | 73.50% |
| Action following | 63.45% | 72.55% |
| FPS (distilled) | 10.81 | N/A |

NVIDIA has released all the weights, training code, and evaluation benchmarks. This open-source release allows you to post-train DreamDojo on your robot data today.

Key takeaways

- Massive scale and diversity: DreamDojo is pre-trained on DreamDojo-HV, the largest egocentric human video dataset to date, featuring 44,711 hours of footage across 6,015 unique tasks and 9,869 scenes.

- Unified latent action proxy: To overcome the lack of action labels in human videos, the model uses continuous latent actions extracted by a spatiotemporal Transformer VAE, which act as a hardware-agnostic control interface.

- Optimized training and architecture: The model achieves high-fidelity physics and precise controllability using relative action transformations, chunked action injection, and a specialized temporal consistency loss.

- Real-time performance via distillation: Through a Self Forcing distillation pipeline, the model is accelerated to 10.81 FPS, enabling interactive applications such as live teleoperation and stable, long-horizon simulations of 1 minute.

- Reliable for downstream tasks: DreamDojo serves as an accurate simulator for policy evaluation, showing a 0.995 Pearson correlation with real-world success rates, and can improve real-world performance by 17% when used for model-based planning.
