The gap between proprietary frontier models and highly transparent open-source models is narrowing faster than ever. NVIDIA has officially pulled back the curtain on Nemotron 3 Super, a staggering 120 billion parameter reasoning model specifically engineered for complex multi-agent applications.

Released today, Nemotron 3 Super fits between the lightweight 30 billion parameter Nemotron 3 Nano and the highly anticipated 500 billion parameter Nemotron 3 Ultra, which arrives later in 2026. Providing up to 7x more throughput and double the accuracy of its previous generation, this model is a huge leap forward for developers who refuse to compromise between intelligence and inference efficiency.

The ‘Five Wonders’ of Nemotron 3 Super

Nemotron 3 Super’s unprecedented performance is driven by five key technological breakthroughs:

- Hybrid MoE Architecture: The model combines memory-efficient Mamba layers with high-accuracy Transformer layers. By activating only a fraction of its parameters to generate each token, it achieves a 4x increase in KV and SSM cache usage efficiency.

- Multi-Token Prediction (MTP): The model can predict multiple future tokens simultaneously, making inference up to 3 times faster on complex reasoning tasks.

- 1-million-token context window: With a context length up to 7 times larger than the previous generation, the model lets developers drop massive technical reports or entire codebases directly into its memory, eliminating the need for re-reasoning in multi-step workflows.

- Latent MoE: This allows the model to compress information and activate four experts for the same compute cost. Without this innovation, the model would need to be 35 times larger to reach the same accuracy level.

- NeMo RL Gym Integration: Through interactive reinforcement learning pipelines, the model learns from dynamic feedback loops instead of just static text, effectively doubling its intelligence index.
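To make "activating only a fraction of the parameters" concrete, here is a minimal sketch of generic top-k expert routing, the basic mechanism behind mixture-of-experts layers. It is not NVIDIA's implementation: the toy scalar experts, the router scores, and the function names are all hypothetical and chosen only for illustration.

```python
import math

def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over the selected experts only, so their weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(x, experts, router_logits, k):
    """Weighted sum of the k selected experts; the others are never evaluated."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))

# Hypothetical toy setup: 8 scalar "experts", 2 of them active per token.
experts = [lambda x, scale=scale: scale * x for scale in range(1, 9)]
router_logits = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.5, 0.3]

routes = top_k_route(router_logits, k=2)
output = moe_forward(1.0, experts, router_logits, k=2)
active_fraction = 2 / len(experts)  # share of experts used for this token
```

In this toy case only a quarter of the experts run for the token, which is where the compute savings of a sparse model come from.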

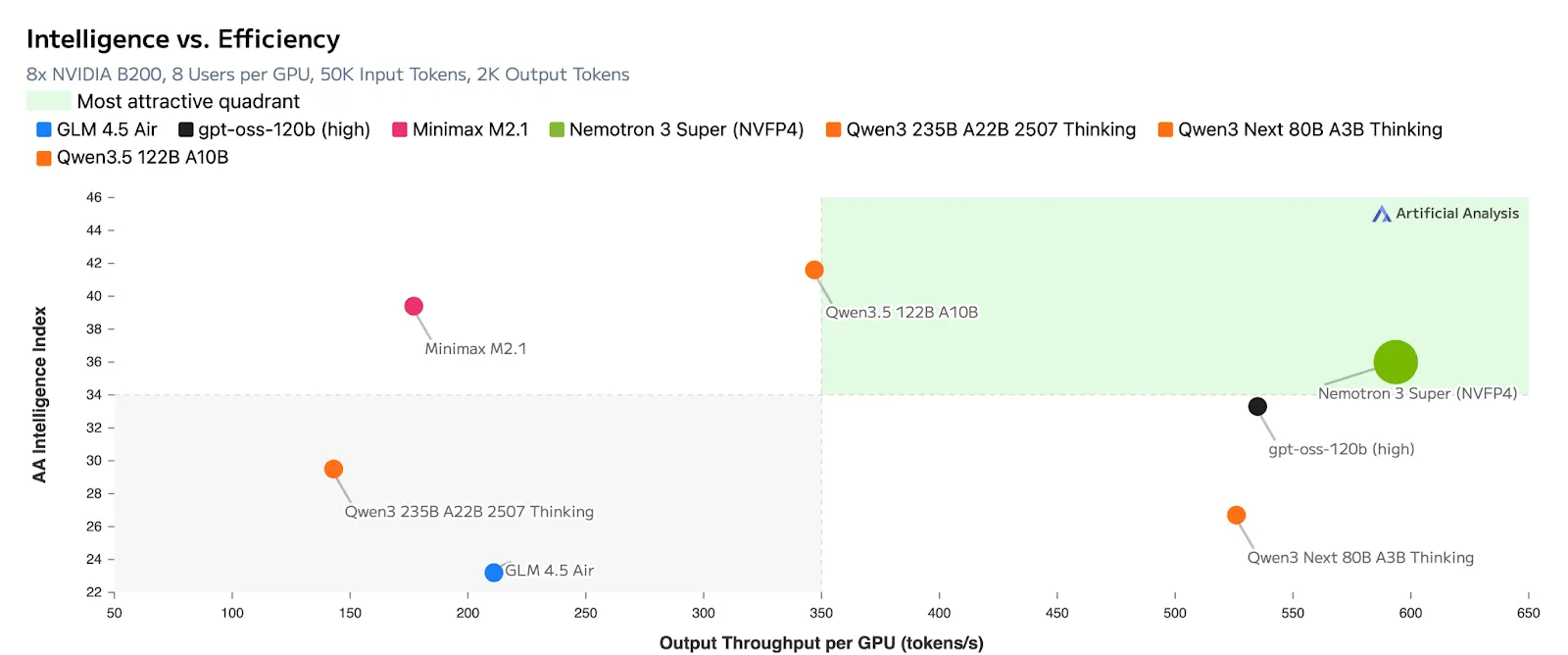

All these breakthroughs lead to incredible efficiency in terms of output tokens per GPU

Why Nemotron 3 Super is the ultimate engine for multi-agent AI

Nemotron 3 Super is not just a standard large language model; it is specifically positioned as a reasoning engine designed to plan, verify, and execute complex tasks within a broader system of specialized models. Here’s why its architecture makes it a game-changer for multi-agent workflows:

- High Throughput for Deep Reasoning: The model’s 7x higher throughput effectively expands its search space. Because it can process and generate tokens faster, it can explore significantly more trajectories and evaluate better responses. This allows developers to run deeper reasoning on the same compute budget, which is essential for building sophisticated, autonomous agents.

- Zero “re-reasoning” in long workflows: In multi-agent systems, agents constantly pass context back and forth. The 1-million token context window allows the model to maintain massive amounts of state, such as an entire codebase or a long, multi-step agent conversation history, directly in its memory. This eliminates the latency and cost of forcing the model to reprocess the context at each step.

- Agent-specific training environment: Instead of relying only on static text datasets, the model’s pipeline was enhanced with over 15 interactive reinforcement learning environments. By training in dynamic simulation loops (such as dedicated environments for software engineering agents and tool-augmented search), Nemotron 3 Super learned the optimal trajectory to complete an autonomous task.

- Advanced Tool Calling Capabilities: In real-world multi-agent applications, models need to perform tasks, not just provide textual feedback. Through its reasoning, Nemotron 3 Super has proven to be highly proficient at tool calling, successfully navigating vast pools of available functions, such as dynamically selecting from over 100 different tools in complex cybersecurity workflows.
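The throughput argument in the first bullet comes down to simple arithmetic: at a fixed time budget, the number of reasoning trajectories an agent can explore scales with tokens per second. The sketch below illustrates this. The baseline throughput, time budget, and trajectory length are made-up numbers; only the 7x multiplier comes from the article.

```python
def trajectories_within_budget(tokens_per_second, budget_seconds, tokens_per_trajectory):
    """How many complete trajectories fit into a fixed wall-clock budget."""
    return (tokens_per_second * budget_seconds) // tokens_per_trajectory

# Hypothetical workload: 60-second budget, 2,000 tokens per trajectory.
BASELINE_TOKENS_PER_SECOND = 1_000   # assumed baseline, not a measured figure
SPEEDUP = 7                          # "up to 7x" throughput claim from the article

baseline = trajectories_within_budget(BASELINE_TOKENS_PER_SECOND, 60, 2_000)
faster = trajectories_within_budget(BASELINE_TOKENS_PER_SECOND * SPEEDUP, 60, 2_000)

print(f"baseline: {baseline} trajectories, with 7x throughput: {faster}")
```

Under these assumptions the same budget covers 210 trajectories instead of 30, which is the sense in which higher throughput widens the search space.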

Open source and training scale

NVIDIA is not just releasing weights; it is open-sourcing the model’s entire stack, which includes training datasets, libraries, and reinforcement learning environments.

Because of this level of transparency, Artificial Analysis places Nemotron 3 Super in its ‘Most Attractive Quadrant’, noting that it achieves the highest openness score while maintaining leading accuracy alongside proprietary models. The foundation of this intelligence is a completely redesigned pipeline trained on 10 trillion curated tokens, complemented by an additional 9 to 10 billion tokens focused strictly on advanced coding and reasoning tasks.

Developer Control: Introducing ‘Logic Budget’

While the raw parameter counts and benchmark scores are impressive, the NVIDIA team understands that real-world enterprise developers need precise control over latency, user experience, and compute costs. To solve the classic intelligence-versus-speed dilemma, Nemotron 3 Super introduces highly flexible reasoning modes that put an unprecedented level of control into the hands of the developer, directly through its API.

Instead of forcing a one-size-fits-all output, developers can dynamically adjust precisely how hard the model ‘thinks’ based on the specific task at hand:

- Full reasoning (default): The model is free to use its maximum capabilities, exploring deep search spaces and multi-step trajectories to solve the most complex, agentic problems.

- ‘Logic Budget’: This is a complete game-changer for latency-sensitive applications. Developers can explicitly limit the thinking time or computation allowance of the model. By setting a strict logic budget, the model optimizes its internal search space to produce the best possible answer within that exact constraint.

- ‘Low Effort Mode’: Not every prompt requires intensive, multi-agent analysis. When a user needs a simple, concise answer (such as a standard summary or basic Q&A) without deep reasoning, this toggle turns Nemotron 3 Super into a lightning-fast responder, saving huge amounts of computation and time.
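The article does not show the actual API surface for these modes, so the following is only a sketch of how an application might model the three options when building a request. Everything here is hypothetical: the `reasoning` field, its `mode` and `budget_tokens` keys, the model identifier, and the choice to express the budget in tokens are assumptions made for illustration, not documented parameters.

```python
from enum import Enum
from typing import Optional

class ReasoningMode(Enum):
    FULL = "full"              # default: unconstrained reasoning
    BUDGET = "budget"          # reasoning capped by an explicit allowance
    LOW_EFFORT = "low_effort"  # short, direct answers

def build_request(prompt: str,
                  mode: ReasoningMode = ReasoningMode.FULL,
                  budget_tokens: Optional[int] = None) -> dict:
    """Build a chat-style request body with hypothetical reasoning controls."""
    if mode is ReasoningMode.BUDGET:
        if budget_tokens is None or budget_tokens <= 0:
            raise ValueError("BUDGET mode needs a positive budget_tokens value")
    elif budget_tokens is not None:
        raise ValueError("budget_tokens only applies to BUDGET mode")

    request = {
        "model": "nemotron-3-super",          # placeholder identifier
        "messages": [{"role": "user", "content": prompt}],
        "reasoning": {"mode": mode.value},    # hypothetical field name
    }
    if mode is ReasoningMode.BUDGET:
        request["reasoning"]["budget_tokens"] = budget_tokens  # hypothetical
    return request

# A latency-sensitive call and a simple Q&A call.
capped = build_request("Triage this stack trace.", ReasoningMode.BUDGET, 512)
quick = build_request("Summarize this paragraph.", ReasoningMode.LOW_EFFORT)
```

Validating the mode and budget together in one place keeps an agent framework from sending contradictory settings, whatever the real parameter names turn out to be.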

‘Golden’ configuration

Tuning reasoning models can often be a frustrating process of trial and error, but the NVIDIA team has given clear guidance for this release. To achieve the best performance across all of these dynamic modes, NVIDIA recommends a global configuration of Temperature 1.0 and Top P 0.95.

According to the NVIDIA team, locking in these hyperparameter settings ensures that the model maintains the right balance of creative exploration and logical precision, whether it is running in the constrained low-effort mode or in unconstrained reasoning deep-dives.
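For readers unfamiliar with these two knobs, the sketch below shows what temperature and top-p (nucleus) sampling do to a next-token distribution. It is a generic, standard-library illustration of the technique with made-up logits, not code from the model's inference stack. The defaults mirror the recommended values of 1.0 and 0.95.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; temperature 1.0 leaves them unscaled."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def nucleus_indices(probs, top_p=0.95):
    """Smallest set of most-likely tokens whose cumulative probability reaches top_p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    return kept

def sample_top_p(logits, temperature=1.0, top_p=0.95, rng=random):
    """Sample one token index from the renormalized nucleus."""
    probs = softmax(logits, temperature)
    kept = nucleus_indices(probs, top_p)
    weights = [probs[i] for i in kept]
    return rng.choices(kept, weights=weights, k=1)[0]

# Made-up logits for a 4-token vocabulary.
logits = [5.0, 4.0, 0.0, -5.0]
kept = nucleus_indices(softmax(logits), top_p=0.95)
token = sample_top_p(logits, rng=random.Random(0))
```

With these logits the two most likely tokens already cover more than 95% of the probability mass, so the unlikely tail is never sampled, while temperature 1.0 keeps the relative odds between the surviving tokens unchanged.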

Real-world applications and availability

Nemotron 3 Super is already proving its potential in demanding enterprise applications:

- Software development: It handles junior-level pull requests and outperforms leading proprietary models in problem localization, successfully finding the exact line of code causing the bug.

- Cybersecurity: The model excels at navigating complex security ISV workflows with its advanced tool-calling logic.

- Sovereign AI: Organizations globally in regions such as India, Vietnam, South Korea and Europe are using Nemotron architectures to create specialized, localized models tailored to specific regions and regulatory frameworks.

Nemotron 3 Super is released in BF16, FP8, and NVFP4 quantizations, with NVFP4 required to run the model on DGX Spark.
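A back-of-the-envelope estimate shows why the 4-bit format matters on a small machine. The sketch below computes weight memory for a 120 billion parameter model at each precision. It ignores quantization scale factors, KV/SSM caches, and activations, and the 128 GB memory figure for DGX Spark is an assumption that does not come from this article.

```python
BITS_PER_PARAM = {"BF16": 16, "FP8": 8, "NVFP4": 4}

def weight_memory_gb(num_params, bits_per_param):
    """Approximate weight storage in gigabytes (10^9 bytes), weights only."""
    return num_params * bits_per_param / 8 / 1e9

NUM_PARAMS = 120e9             # parameter count stated in the article
ASSUMED_DEVICE_MEMORY_GB = 128 # assumption: DGX Spark unified memory

estimates = {name: weight_memory_gb(NUM_PARAMS, bits)
             for name, bits in BITS_PER_PARAM.items()}

for name, gigabytes in estimates.items():
    headroom = ASSUMED_DEVICE_MEMORY_GB - gigabytes
    print(f"{name}: ~{gigabytes:.0f} GB of weights, headroom {headroom:.0f} GB")
```

Under these assumptions BF16 weights do not fit at all, FP8 weights leave almost no room for caches and activations, and only the 4-bit weights leave comfortable headroom.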

View the models on Hugging Face. You can find details in the research paper and the technical/developer blog.

Thanks to the NVIDIA AI team for the thought leadership/resources for this article. This content/article has been endorsed and sponsored by the NVIDIA AI team.

Jean-Marc is a successful AI business executive. He leads and accelerates development of AI driven solutions and started a computer vision company in 2006. He is a recognized speaker at AI conferences and holds an MBA from Stanford.