NVIDIA has announced the release of Nemotron-Cascade 2, an open-weight 30B Mixture-of-Experts (MoE) model with 3B active parameters. The model focuses on maximizing ‘intelligence density’, delivering advanced reasoning capabilities at a fraction of the parameter scale used by frontier models. Nemotron-Cascade 2 is the second open-weight LLM to achieve gold-medal-level performance at the 2025 International Mathematical Olympiad (IMO), the International Olympiad in Informatics (IOI), and the ICPC World Finals.

Targeted Performance and Strategic Trade-offs

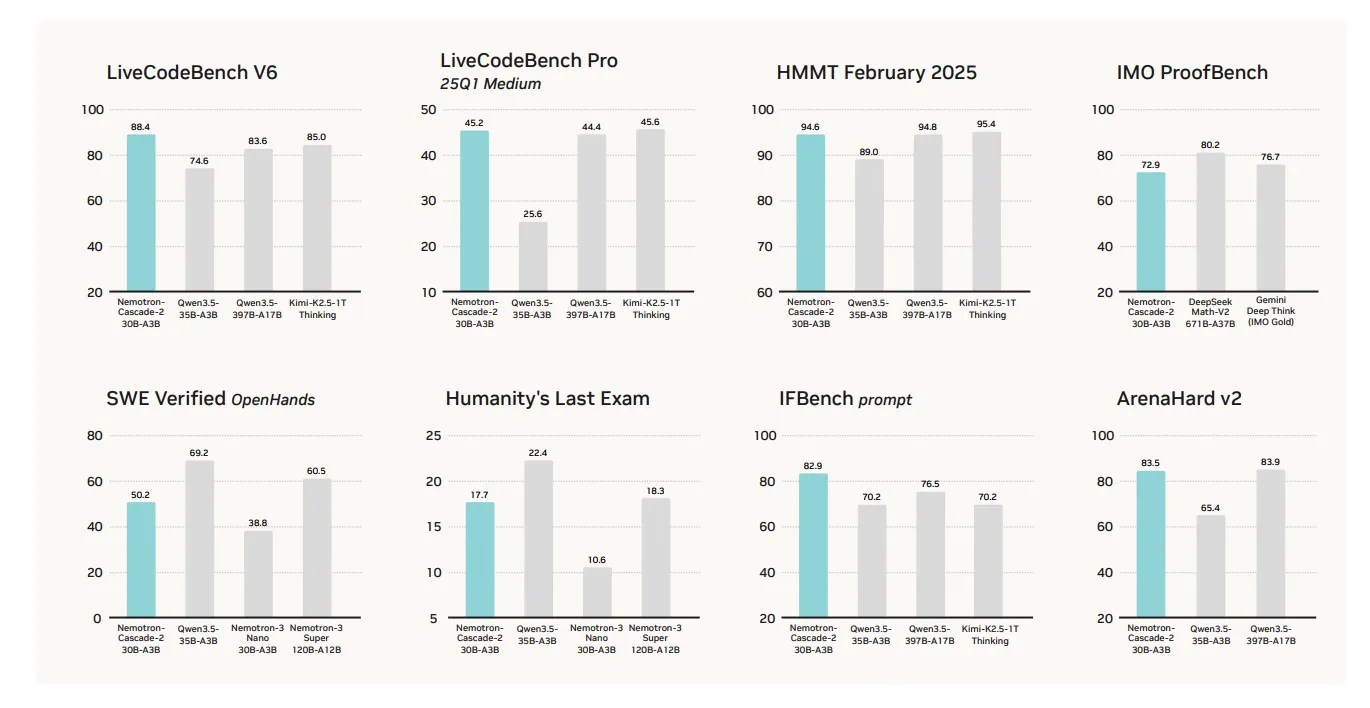

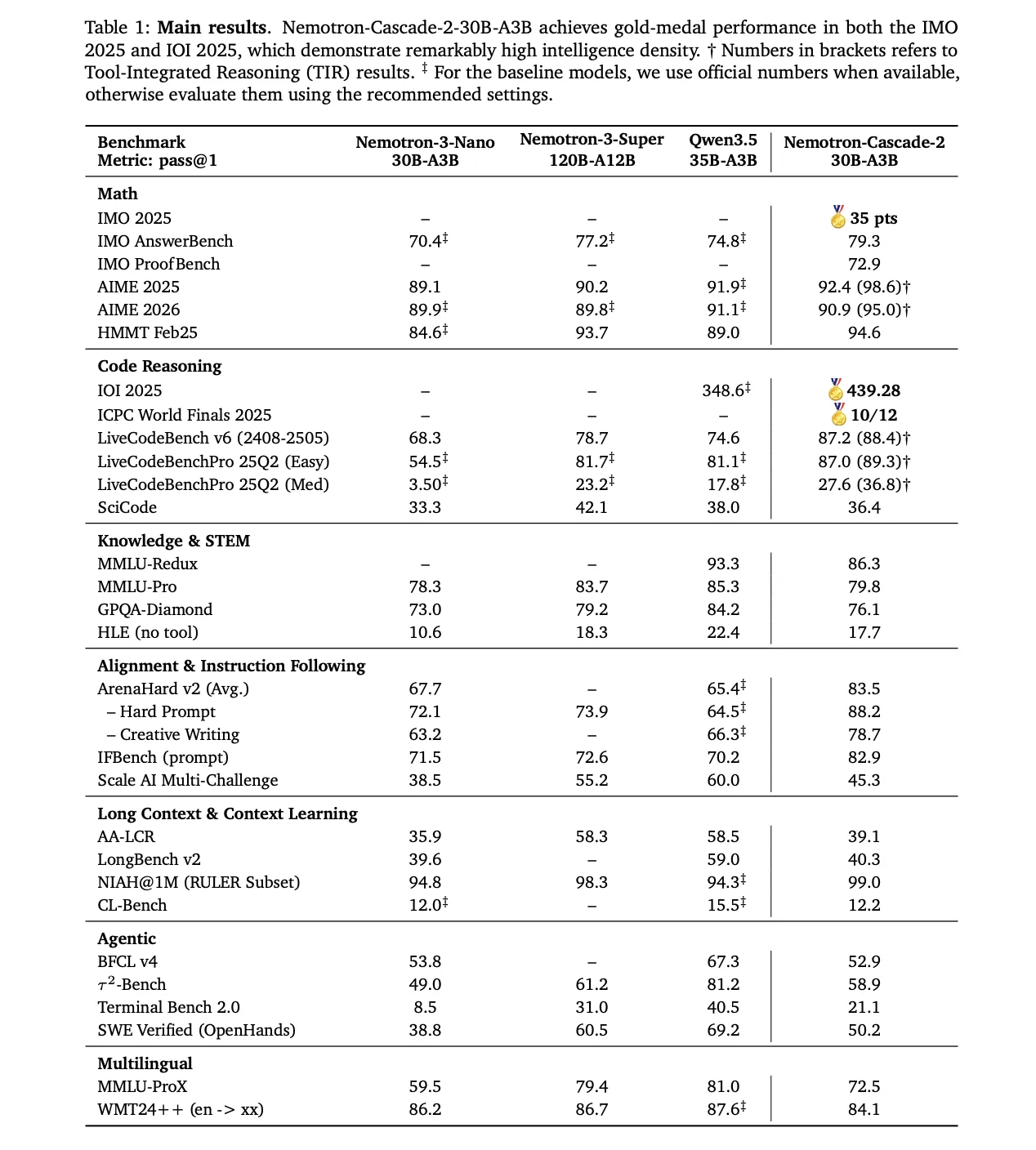

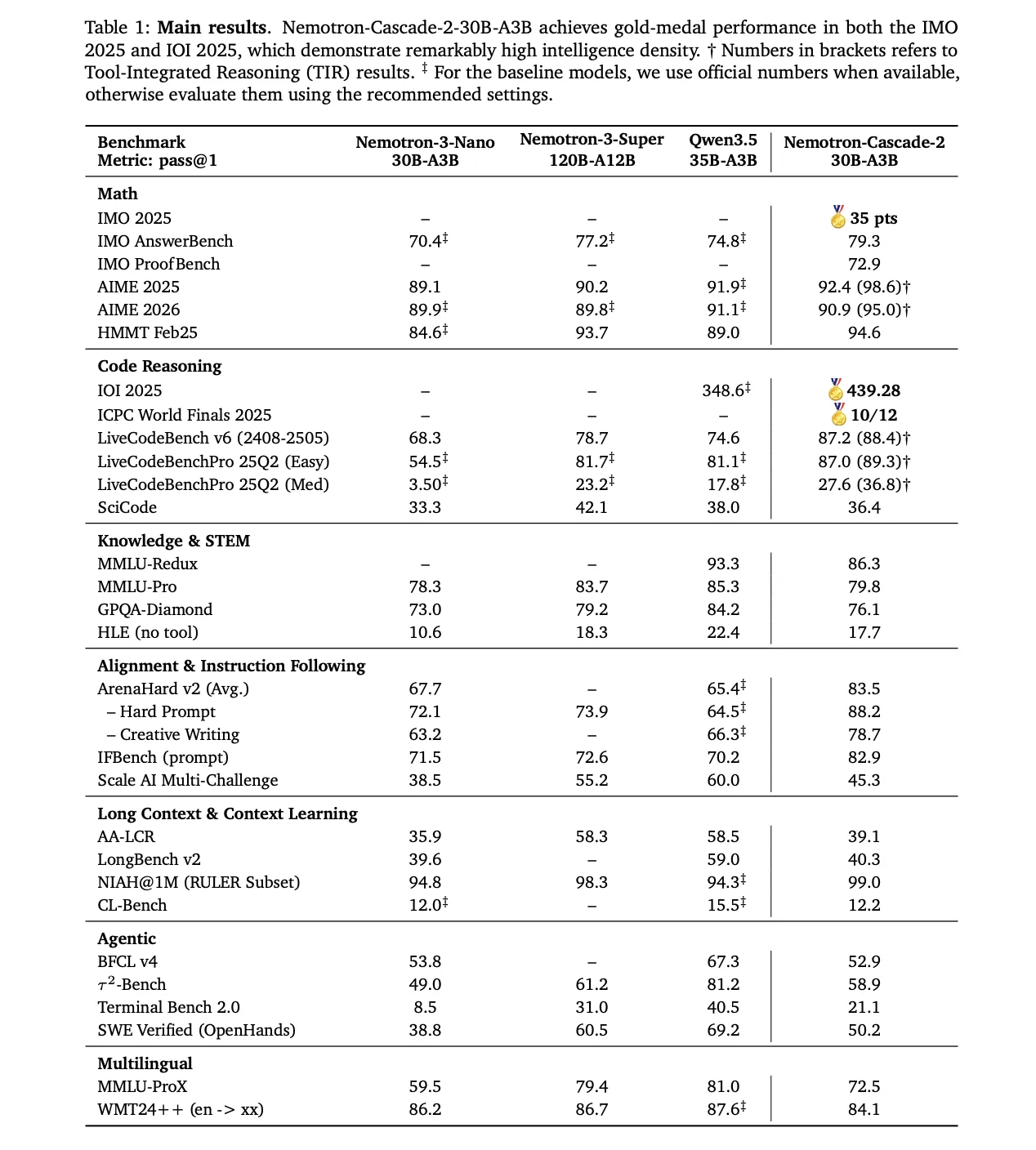

The primary value proposition of Nemotron-Cascade 2 is its strong performance in mathematical reasoning, coding, alignment, and instruction following. While it achieves state-of-the-art results in these reasoning-intensive domains, it is not a ‘blanket win’ across all benchmarks.

The model excels in several targeted categories compared with the recently released Qwen3.5-35B-A3B (February 2026) and the larger Nemotron-3-Super-120B-A12B:

- Mathematical reasoning: outperforms Qwen3.5-35B-A3B on AIME 2025 (92.4 vs. 91.9) and HMMT February 2025 (94.6 vs. 89.0).

- Coding: leads on LiveCodeBench v6 (87.2 vs. 74.6) and IOI 2025 (439.28 vs. 348.6+).

- Alignment and instruction following: scores significantly higher on ArenaHard v2 (83.5 vs. 65.4+) and IFBench (82.9 vs. 70.2).

Technical Architecture: Cascade RL and Multi-Domain On-Policy Distillation (MOPD)

The model’s reasoning capabilities stem from its post-training pipeline, which starts from the Nemotron-3-Nano-30B-A3B-Base model.

1. Supervised Fine-Tuning (SFT)

During SFT, the NVIDIA research team used a carefully curated dataset in which samples were packed into sequences of up to 256K tokens. The dataset includes:

- 1.9M Python reasoning traces and 1.3M Python tool-calling samples for competitive coding.

- 816K samples for mathematical natural language proofs.

- A specialized software engineering (SWE) blend consisting of 125K agentic and 389K agentless samples.
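Packing concatenates several shorter samples into one long sequence so that less of the context window is spent on padding. The article does not say which packing algorithm was used, so the following is only a minimal sketch assuming a greedy first-fit-decreasing strategy over token counts. `pack_samples` and `MAX_TOKENS` are hypothetical names, and reading "256K" as 262,144 tokens is an assumption.

```python
from typing import List

MAX_TOKENS = 256 * 1024  # assumption: "256K" interpreted as 262,144 tokens


def pack_samples(lengths: List[int], max_tokens: int = MAX_TOKENS) -> List[List[int]]:
    """Greedy first-fit-decreasing packing.

    Takes the token count of each sample and returns bins of sample indices
    whose total length stays at or below max_tokens. Samples longer than
    max_tokens are skipped here (a real pipeline might truncate or split).
    """
    bins: List[List[int]] = []  # sample indices per packed sequence
    remaining: List[int] = []   # free token budget per packed sequence
    # Visit the longest samples first so large items claim space early.
    order = sorted(range(len(lengths)), key=lambda i: lengths[i], reverse=True)
    for idx in order:
        n = lengths[idx]
        if n > max_tokens:
            continue
        for b, free in enumerate(remaining):
            if n <= free:  # first existing bin with enough room
                bins[b].append(idx)
                remaining[b] -= n
                break
        else:  # no bin had room: open a new packed sequence
            bins.append([idx])
            remaining.append(max_tokens - n)
    return bins


# Toy usage with a tiny budget so the result is easy to inspect.
packed = pack_samples([6, 4, 5, 5], max_tokens=10)
print(packed)
```

In practice a packed sequence also needs attention masking or position resets so that samples do not attend to each other; that part is omitted here.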

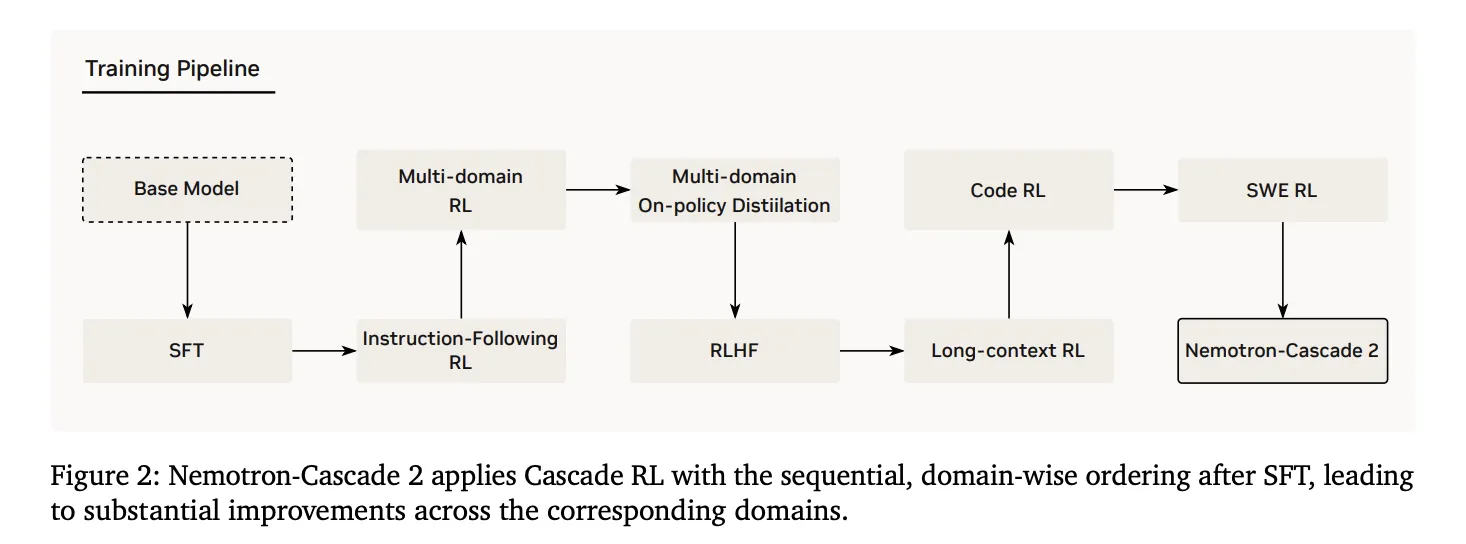

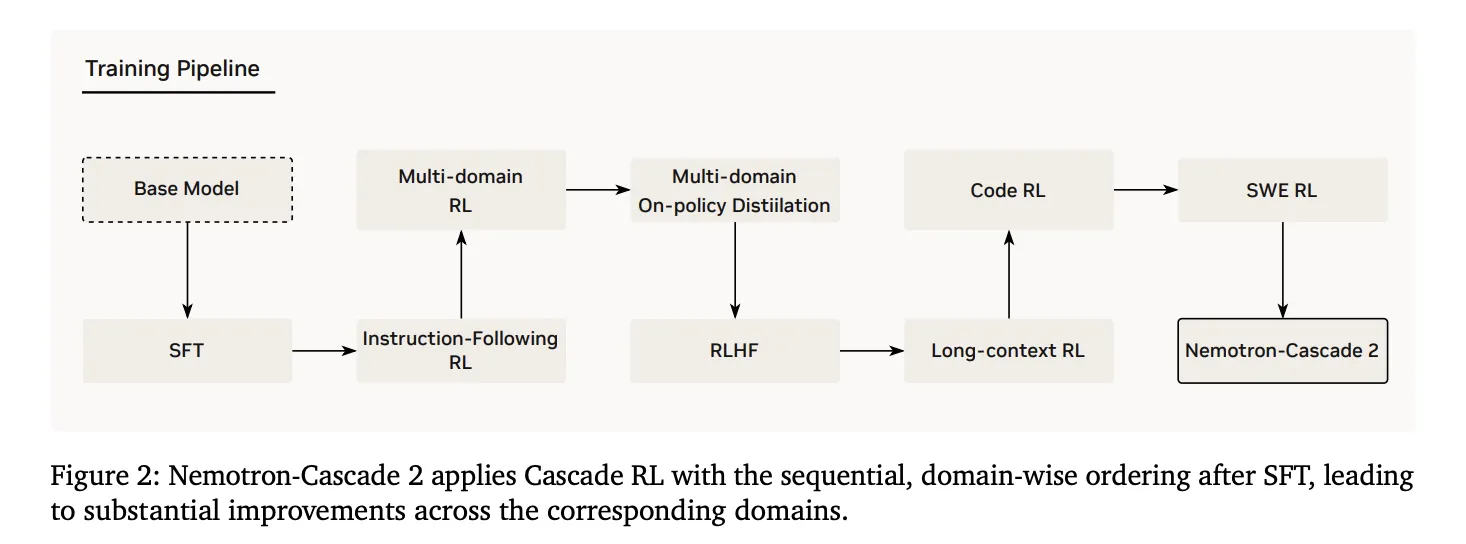

2. Cascade Reinforcement Learning

Following SFT, the model underwent Cascade RL, which applies sequential, domain-wise training. This helps prevent catastrophic forgetting by allowing hyperparameters to be tailored to specific domains without destabilizing others. The pipeline includes stages for instruction following (IF-RL), multi-domain RL, RLHF, long-context RL, and specialized code and SWE RL.
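The sequential, domain-wise structure can be pictured as a loop in which each stage starts from the checkpoint produced by the previous one and carries its own hyperparameters. The sketch below is a toy illustration, not NVIDIA's training code: the stage names come from the article, but their exact order, every numeric value, and the names `StageConfig`, `run_cascade`, and `toy_train_stage` are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Checkpoint = Tuple[str, ...]  # toy stand-in: the lineage of stages applied so far


@dataclass(frozen=True)
class StageConfig:
    name: str
    learning_rate: float    # placeholder, not a value from the paper
    max_response_len: int   # placeholder, not a value from the paper


# Stage names come from the article; the order and all numbers are assumptions.
CASCADE: List[StageConfig] = [
    StageConfig("IF-RL", 2e-6, 8_192),
    StageConfig("Multi-domain RL", 1e-6, 16_384),
    StageConfig("RLHF", 1e-6, 8_192),
    StageConfig("Long-context RL", 5e-7, 65_536),
    StageConfig("Code RL", 1e-6, 32_768),
    StageConfig("SWE RL", 5e-7, 65_536),
]


def toy_train_stage(ckpt: Checkpoint, cfg: StageConfig) -> Checkpoint:
    """Stand-in for a real RL run: only records that the stage was applied."""
    return ckpt + (cfg.name,)


def run_cascade(
    sft_ckpt: Checkpoint,
    stages: List[StageConfig],
    train_stage: Callable[[Checkpoint, StageConfig], Checkpoint],
) -> Tuple[Checkpoint, List[str]]:
    """Train one domain at a time; each stage starts from the previous output."""
    ckpt, history = sft_ckpt, []
    for cfg in stages:
        ckpt = train_stage(ckpt, cfg)  # per-domain hyperparameters live in cfg
        history.append(cfg.name)
    return ckpt, history


final_ckpt, stage_order = run_cascade(("SFT",), CASCADE, toy_train_stage)
print(" -> ".join(final_ckpt))
```

Keeping the hyperparameters in a per-stage config is what lets one domain be tuned without touching the settings of the others.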

3. Multi-Domain On-Policy Distillation (MOPD)

A key innovation in Nemotron-Cascade 2 is the integration of MOPD during the Cascade RL process. MOPD uses the best-performing intermediate ‘teacher’ models, which are already derived from the same SFT initialization, to provide a dense token-level distillation advantage. This advantage is defined mathematically as:

$$a_{t}^{\mathrm{MOPD}}=\log \pi^{\mathrm{domain}_{t}}(y_{t}\mid s_{t})-\log \pi^{\mathrm{train}}(y_{t}\mid s_{t})$$
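Read term by term, the advantage at position t is the log-probability the domain teacher assigns to the sampled token minus the log-probability the policy being trained assigns to it. The sketch below computes it from two lists of per-token log-probabilities. Interpreting the two policies as "teacher" and "student" is a reading of the formula, and `mopd_advantages` is a hypothetical helper name.

```python
import math
from typing import List, Sequence


def mopd_advantages(
    teacher_logprobs: Sequence[float],
    student_logprobs: Sequence[float],
) -> List[float]:
    """Per-token advantage: log pi_teacher(y_t | s_t) - log pi_student(y_t | s_t).

    Both inputs hold the log-probability each model assigns to the token the
    student actually sampled at every position of one response.
    """
    if len(teacher_logprobs) != len(student_logprobs):
        raise ValueError("teacher and student must score the same tokens")
    return [t - s for t, s in zip(teacher_logprobs, student_logprobs)]


# Toy probabilities for a three-token response (illustrative values only).
teacher = [math.log(p) for p in (0.9, 0.5, 0.2)]
student = [math.log(p) for p in (0.6, 0.5, 0.4)]
for position, adv in enumerate(mopd_advantages(teacher, student)):
    print(position, round(adv, 3))
```

A positive value means the teacher finds the sampled token more likely than the student does, a negative value means it finds the token less likely, and zero means the two models agree at that position.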

The research team found that MOPD is significantly more sample-efficient than sequence-level reward algorithms such as Group Relative Policy Optimization (GRPO). For example, on AIME25, MOPD reached teacher-level performance (92.0) within 30 steps, while GRPO reached only 91.0 after the same number of steps.
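To see what "sequence-level" means in this comparison, the sketch below computes a GRPO-style group-relative advantage: each sampled response gets one scalar, its reward normalized against the other responses to the same prompt, and every token in that response shares the value. This is a simplified illustration rather than the paper's implementation. The use of the population standard deviation, the `eps` term, and the helper names are assumptions.

```python
from statistics import mean, pstdev
from typing import List


def grpo_sequence_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """One advantage per response: (reward - group mean) / (group std + eps)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; implementations vary
    return [(r - mu) / (sigma + eps) for r in rewards]


def broadcast_to_tokens(advantage: float, num_tokens: int) -> List[float]:
    """Every token in a response receives the same sequence-level advantage."""
    return [advantage] * num_tokens


# Four sampled responses to one prompt, scored 1.0 (correct) or 0.0 (wrong).
group_rewards = [1.0, 0.0, 0.0, 1.0]
advantages = grpo_sequence_advantages(group_rewards)
print([round(a, 3) for a in advantages])
print(broadcast_to_tokens(advantages[0], num_tokens=3))
```

Because the signal is a single number per response, it cannot say which tokens helped or hurt, whereas the token-level distillation advantage varies across positions within one response.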

Inference Features and Agent Interaction

Nemotron-Cascade 2 supports two primary operating modes through its chat template:

- Thinking mode: initiated by a single

- Non-thinking mode: activated by adding an empty

For agentic tasks, the model uses a structured tool-calling protocol within the system prompt. Available tools are listed within

By focusing on ‘intelligence density’, Nemotron-Cascade 2 demonstrates that specialized reasoning abilities once considered the exclusive domain of frontier-scale models are achievable at the 30B scale through domain-specific reinforcement learning.
