OpenAI recently introduced GPT-5.3-Codex, a new agentic coding model that extends Codex beyond writing and reviewing code to a wide range of tasks on computers. The model combines the frontier coding performance of GPT-5.2-Codex with the reasoning and professional knowledge capabilities of GPT-5.2 in a single system, and it runs up to 25% faster for Codex users thanks to improvements in infrastructure and inference.

For dev folks, GPT-5.3-Codex is positioned as a coding agent that can execute long-running tasks involving research, tool use, and complex execution, while remaining steerable ‘like a colleague’ during a run.

Frontier Agent Capabilities and Benchmark Results

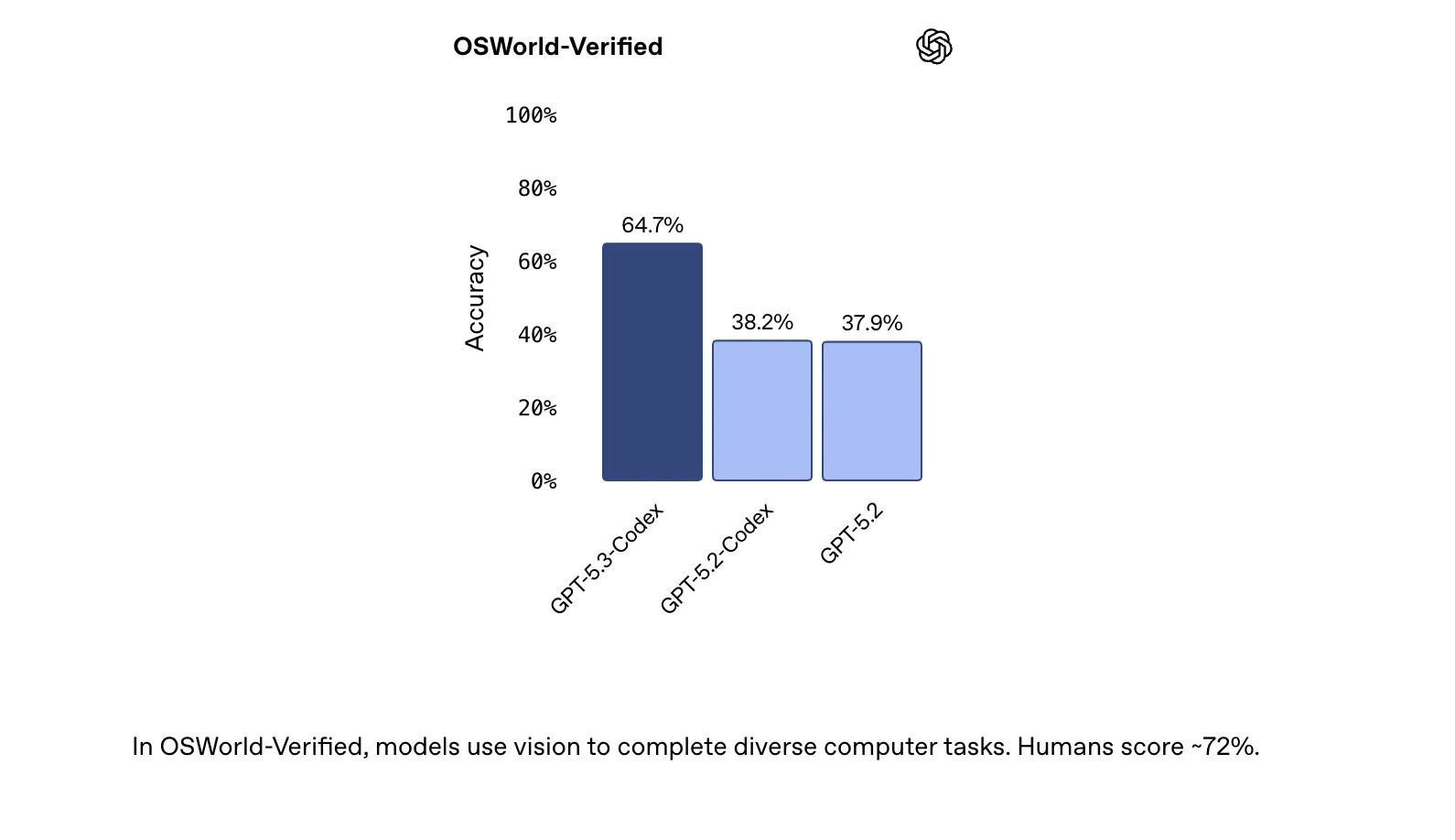

OpenAI evaluates GPT-5.3-Codex on four key benchmarks that target real-world coding and agentic behavior: SWE-Bench Pro, Terminal-Bench 2.0, OSWorld-Verified, and GDPval.

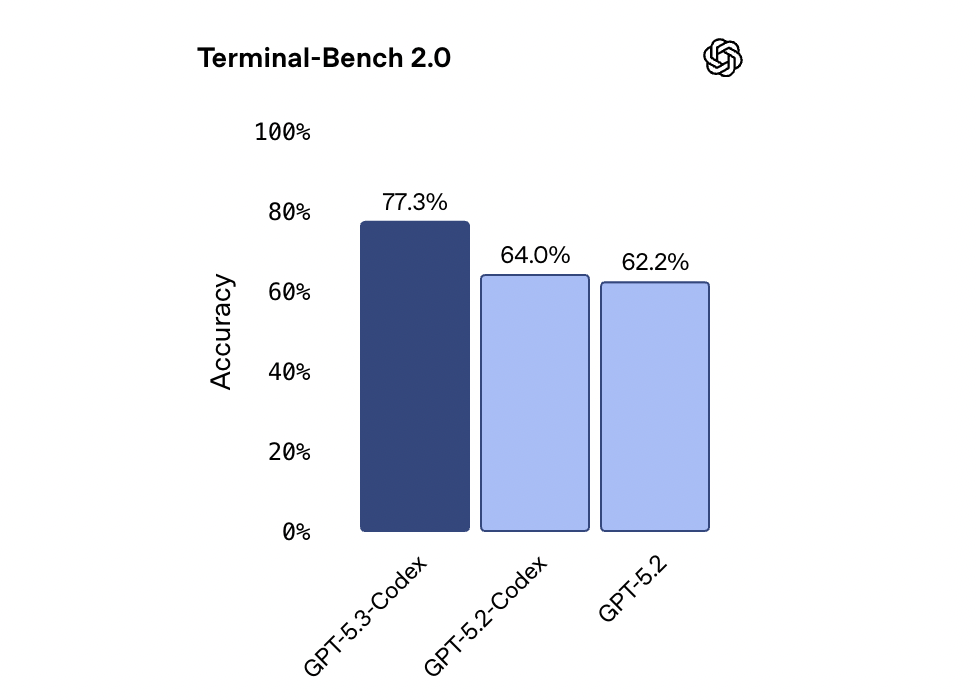

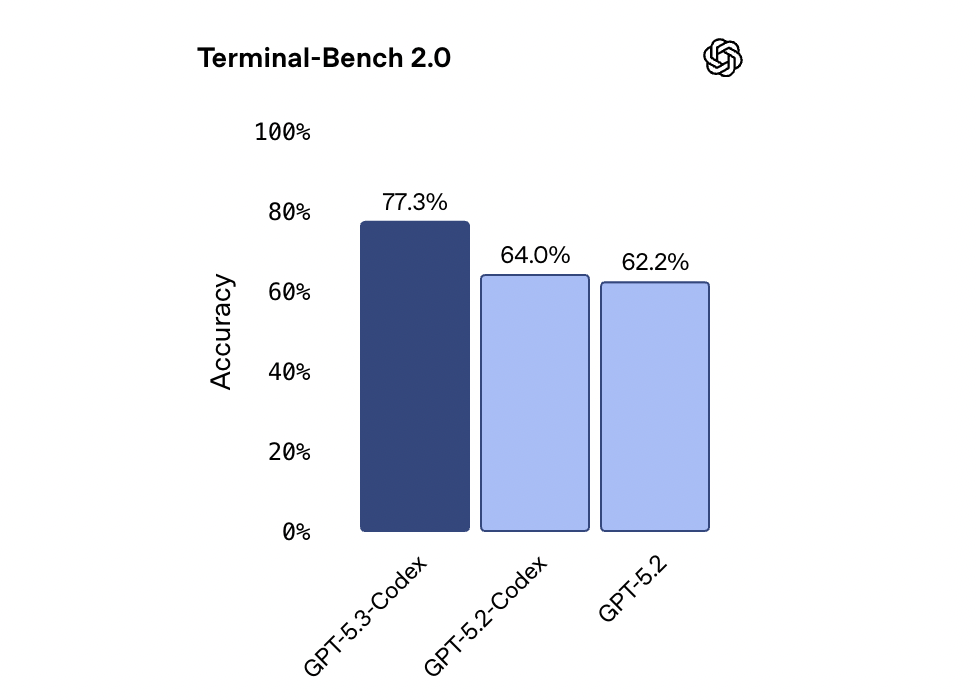

On SWE-Bench Pro, a contamination-resistant benchmark built from real GitHub issues and pull requests in 4 languages, GPT-5.3-Codex reaches 56.8% with xhigh reasoning effort. This is slightly better than GPT-5.2-Codex and GPT-5.2 at the same effort level. Terminal-Bench 2.0, which measures the terminal skills required for coding agents, shows a larger gap: GPT-5.3-Codex reaches 77.3%, significantly higher than previous models.

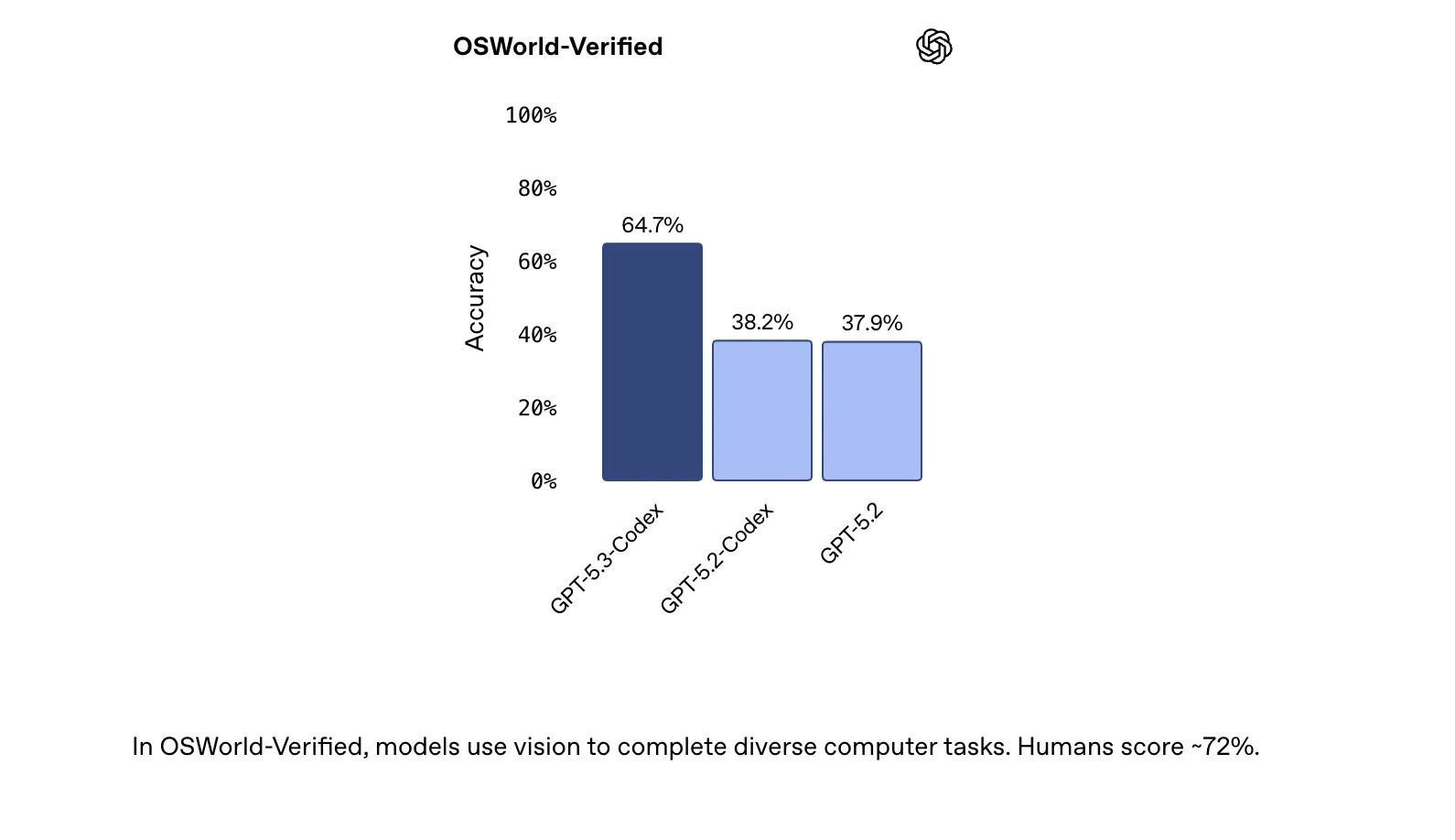

On OSWorld-Verified, an agentic computer-use benchmark where agents complete productivity tasks in a visual desktop environment, GPT-5.3-Codex reaches 64.7%. Humans score around 72% on this benchmark, which gives a rough human-level reference point.

For professional knowledge work, GPT-5.3-Codex is evaluated with GDPval, an evaluation launched in 2025 that measures performance on well-specified tasks across 44 occupations. GPT-5.3-Codex wins or ties on 70.9% of GDPval tasks, matching GPT-5.2 at high reasoning effort. These tasks include creating presentations, spreadsheets, and other work products that align with typical professional workflows.

A notable system detail is that GPT-5.3-Codex achieves its results with fewer tokens than previous models, allowing users to “build more” within the same context and cost budget.
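To make the "win or tie" figure concrete, here is a minimal sketch of how such a rate could be computed from per-task outcomes. The outcome labels (`"win"`, `"tie"`, `"loss"`) and the function name are assumptions for illustration only; the article does not describe how GDPval comparisons are graded or stored.

```python
from collections import Counter

# Hypothetical outcome labels; not taken from the GDPval release.
VALID_OUTCOMES = ("win", "tie", "loss")


def win_or_tie_rate(outcomes):
    """Return the fraction of tasks where the model output won or tied."""
    counts = Counter(outcomes)
    total = sum(counts[label] for label in VALID_OUTCOMES)
    if total == 0:
        raise ValueError("no graded outcomes to summarize")
    return (counts["win"] + counts["tie"]) / total


if __name__ == "__main__":
    sample = ["win", "tie", "loss", "win"]
    print(f"win-or-tie rate: {win_or_tie_rate(sample):.1%}")
```

Under this reading, a 70.9% score would mean that in roughly seven of every ten graded tasks the model's output was judged at least as good as the comparison output.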

Beyond Coding: GDPval and OSWorld

OpenAI emphasizes that software developers, designers, product managers, and data scientists perform a variety of tasks beyond code generation. GPT-5.3-Codex is designed to assist with the software lifecycle: debugging, deployment, monitoring, writing PRDs, editing copy, user research, testing, and running metrics.

Using custom skills similar to those from prior GDPval experiments, GPT-5.3-Codex produces finished work products. Examples from the official OpenAI blog include a financial advice slide deck, a retail training document, an NPV analysis spreadsheet, and a fashion presentation. Each GDPval task is designed by a domain professional and reflects realistic work from that occupation.

On OSWorld, GPT-5.3-Codex demonstrates more robust computer-use capabilities than earlier GPT models. OSWorld-Verified requires the model to use vision to complete a variety of tasks in a desktop environment, which closely matches how agents operate real applications and tools rather than just generating text.

An interactive companion in the Codex app

As models become more capable, the main challenge OpenAI identifies is human supervision and control of multiple agents working in parallel. The Codex app is designed to make managing and directing agents easier, and with GPT-5.3-Codex it gains more interactive behavior.

Codex now provides frequent updates during runs so users can see important decisions and progress. Instead of waiting for a single final output, users can ask questions, discuss approaches, and steer the model in real time. GPT-5.3-Codex explains what it’s doing and responds to feedback while keeping context. This ‘follow-up behavior’ can be configured in the Codex app settings.

A model that helped train and deploy itself

GPT-5.3-Codex is the first model in this family that helped build itself. OpenAI used early versions of GPT-5.3-Codex to debug its own training, manage deployments, and diagnose test results and evaluations.

The OpenAI research team used Codex to create applications that monitor and debug training runs, track patterns in the training process, analyze interaction quality, propose improvements, and observe behavioral differences relative to prior models. The engineering team used Codex to optimize and adapt the serving harness, identify context rendering bugs, explore the root causes of low cache hit rates, and dynamically scale the GPU cluster to maintain stable latency under traffic surges.

During alpha testing, a researcher asked GPT-5.3-Codex to measure the additional work completed per turn and the impact on productivity. The model created regex-based classifiers to estimate the frequency of clarifications, positive and negative reactions, and task progress, then ran these on session logs and generated a report. Codex also helped create new data pipelines and rich visualizations when standard dashboard tools were inadequate, and it summarized insights from thousands of data points in less than 3 minutes.
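The regex-based classification described above can be sketched roughly as follows. This is an illustrative example only: the patterns, label names, and log format are assumptions, since the article does not disclose the classifiers the model actually wrote.

```python
import re
from collections import Counter

# Hypothetical patterns for each signal; the real classifiers are not public.
CLASSIFIERS = {
    "clarification": re.compile(
        r"\b(what do you mean|can you clarify|not sure what)\b", re.IGNORECASE
    ),
    "positive": re.compile(r"\b(thanks|great|perfect|works now)\b", re.IGNORECASE),
    "negative": re.compile(
        r"\b(wrong|broken|doesn't work|still failing)\b", re.IGNORECASE
    ),
    "progress": re.compile(
        r"\b(tests? pass(ed|ing)?|merged|fixed|done)\b", re.IGNORECASE
    ),
}


def classify_turn(text):
    """Return the set of labels whose pattern matches this turn."""
    return {label for label, pattern in CLASSIFIERS.items() if pattern.search(text)}


def summarize(turns):
    """Return, per label, the fraction of turns that matched it."""
    counts = Counter()
    for turn in turns:
        counts.update(classify_turn(turn))
    total = len(turns)
    return {
        label: (counts[label] / total if total else 0.0) for label in CLASSIFIERS
    }


if __name__ == "__main__":
    # Assumed log format: one user message per list entry.
    session = [
        "Thanks, that works now",
        "Can you clarify the second step?",
        "Still failing on CI",
        "All tests passed",
    ]
    for label, rate in summarize(session).items():
        print(f"{label}: {rate:.0%}")
```

Keyword patterns like these are cheap to run over large logs but only approximate user intent, so the resulting rates are best read as estimates.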

Cybersecurity Capabilities and Safeguards

GPT-5.3-Codex is the first model that OpenAI has classified as ‘High capability’ for cybersecurity-related tasks under its Preparedness Framework and the first model that has been directly trained to identify software vulnerabilities. OpenAI says it has no definitive evidence that the model can automate end-to-end cyberattacks, and it is taking a precautionary approach by deploying its most comprehensive cybersecurity safety stack to date.

Mitigations include safety training, automated monitoring, trusted access for advanced capabilities, and enforcement pipelines incorporating threat intelligence. OpenAI is launching a ‘Trusted Access for Cyber’ pilot, expanding the private beta of Aardvark, a security research agent, and providing free codebase scanning for widely used open-source projects like Next.js, where Codex was used to identify recently disclosed vulnerabilities.

Key Takeaways

- Unified frontier model for coding and work: GPT-5.3-Codex combines the coding strength of GPT-5.2-Codex with the reasoning and professional knowledge capabilities of GPT-5.2 in a single agentic model, and runs up to 25% faster in Codex.

- State-of-the-art on coding and agent benchmarks: The model sets new highs on SWE-Bench Pro (56.8% at xhigh) and Terminal-Bench 2.0 (77.3%), reaches 64.7% on OSWorld-Verified, and wins or ties on 70.9% of GDPval tasks, often with fewer tokens than previous models.

- Supports long-horizon web and app development: Using a ‘develop web games’ skill and generic follow-up prompts like ‘fix bugs’ and ‘improve games’, GPT-5.3-Codex autonomously developed complex racing and diving games over millions of tokens, demonstrating sustained multi-step development capability.

- Helped with its own training and deployment: Early versions of GPT-5.3-Codex were used for debugging training runs, analyzing behavior, customizing the serving stack, creating custom pipelines, and summarizing alpha logs at scale, making it the first Codex model to help build itself.

- High-capability cyber model with trusted access: GPT-5.3-Codex is the first OpenAI model with a ‘High capability’ rating for cyber and the first model directly trained to identify software vulnerabilities. OpenAI has paired this with the Trusted Access for Cyber pilot, an expanded Aardvark beta, and free codebase scanning for projects like Next.js.
