Apache Spark Structured Streaming powers large-scale, long-running, mission-critical data pipelines, from streaming ETL to analytics and machine learning. But as operational use cases evolved, teams started demanding something more: sub-second latency for applications like fraud detection, personalization, anomaly detection, real-time alerting, and reporting.

Historically, meeting these ultra-low latency requirements has meant introducing specialized systems alongside Spark. With the introduction of real-time mode in Spark Structured Streaming, that tradeoff is no longer necessary. In this blog, we explore how Spark simplifies real-time streaming architectures for common use cases like feature engineering, eliminates long-standing operational complexity, and delivers industry-leading performance.

Real-time streaming no longer requires running multiple separate systems

The ability to process and act on data in real time is now a core requirement. Modern applications, especially AI agents, rely on a constant stream of fresh context to function. If the underlying data is incomplete or stale, the user experience suffers. Real-time performance is essential not only for traditional use cases like fraud detection, but also for everyday interactions in which users expect accurate, up-to-date responses. In this environment, latency directly impacts revenue, customer trust, and competitive advantage.

Data teams building real-time streaming applications have historically had to manage two separate data processing stacks: Apache Spark™ for large-scale analytics and specialized systems like Apache Flink® or Kafka Streams for sub-second, latency-sensitive applications. This fragmentation forces teams to maintain duplicate codebases, manage separate governance models, and hire specialized talent to tune and maintain engine-specific infrastructure.

Launched in Public Preview in August 2025, Real-Time Mode (RTM) for Apache Spark Structured Streaming is designed to eliminate this friction. By fundamentally evolving the Spark execution engine, we have removed the need for a second system. Engineers can now address the entire spectrum of use cases with the same Spark API, from high-throughput ETL to low-latency real-time apps. This means less time managing infrastructure and more time to focus on the business use case.

Spark can now process events in milliseconds: up to 92% faster than Flink

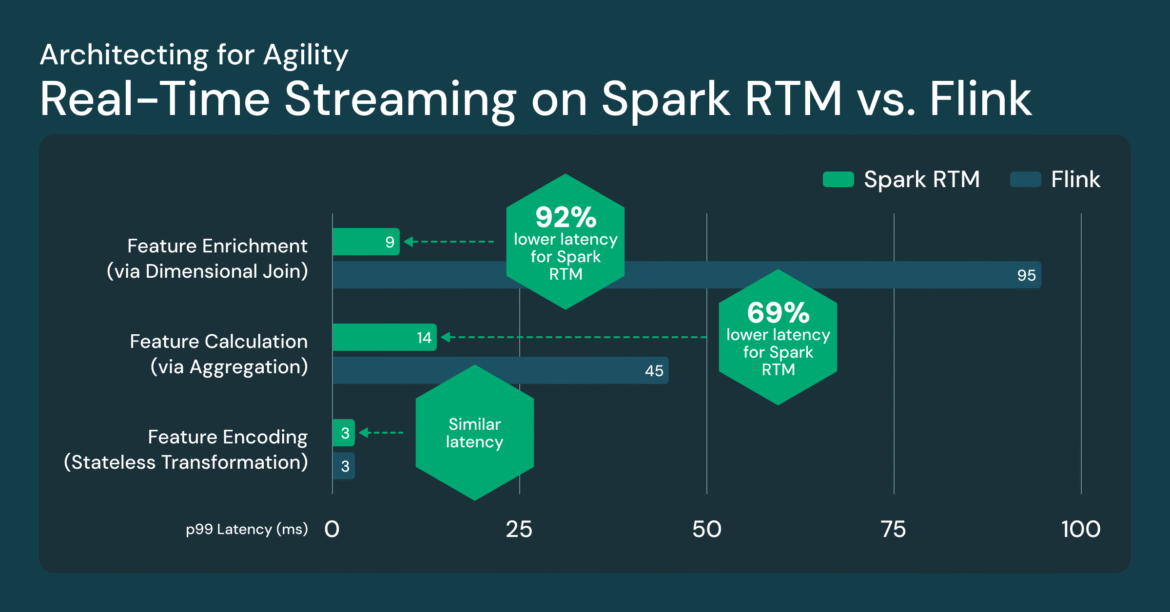

Real-Time Mode (RTM) introduces a new, optimized execution engine that enables Spark to deliver consistent sub-second latency. To evaluate its performance, we ran a side-by-side comparison of Spark RTM and Apache Flink, based on the real-time feature computation workloads we typically see in production. These feature computation patterns are representative of most low-latency ETL use cases, such as fraud detection, personalization, and operational analytics.

We evaluated three common feature patterns:

- Feature Encoding (Stateless Transformation): Reducing input rows and encoding them

- Feature Enhancement (via Join): Joining a stream with a static table

- Feature Count (via Aggregation): GroupBy + Count Aggregation
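To make the three patterns concrete, here is a minimal, in-memory Scala sketch. It is neither Spark code nor the benchmark workload. The `Txn` record, its fields, the filtering rule, and the amount buckets are all hypothetical. The sketch only shows what each pattern does to a stream of events:

- a stateless per-event transformation;
- a lookup against a static table;
- a running count per key, which must keep state between events.

```scala
object FeaturePatterns {
  // Hypothetical input record; field names are illustrative only.
  case class Txn(userId: String, merchant: String, amount: Double)
  case class Encoded(userId: String, merchant: String, amountBucket: Int)
  case class Enriched(userId: String, amountBucket: Int, category: String)

  // Hypothetical bucketing rule: 0 below 10, 1 below 100, 2 otherwise.
  def bucket(amount: Double): Int =
    if (amount < 10) 0 else if (amount < 100) 1 else 2

  // 1. Feature encoding (stateless): each event is handled on its own.
  // Rows with non-positive amounts are dropped; the rest are normalized and encoded.
  def encode(t: Txn): Option[Encoded] =
    if (t.amount <= 0) None
    else Some(Encoded(t.userId.trim.toLowerCase, t.merchant.trim, bucket(t.amount)))

  // 2. Feature enhancement (join): look each event up in a static table.
  def enrich(e: Encoded, merchantCategory: Map[String, String]): Enriched =
    Enriched(e.userId, e.amountBucket, merchantCategory.getOrElse(e.merchant, "unknown"))

  // 3. Feature count (aggregation): groupBy(userId) + count, emitted after every event.
  // The Map is the state that has to survive from one event to the next.
  def runningCounts(events: Seq[Enriched]): Seq[(String, Long)] =
    events.foldLeft((Map.empty[String, Long], Vector.empty[(String, Long)])) {
      case ((counts, out), e) =>
        val n = counts.getOrElse(e.userId, 0L) + 1L
        (counts.updated(e.userId, n), out :+ (e.userId -> n))
    }._2
}
```

In Structured Streaming, these roughly correspond to a filter and select over a streaming DataFrame, a stream-static join, and a streaming `groupBy(...).count()`. In that case the engine, not your code, maintains the per-key counts.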

The results show that Spark’s evolved architecture delivers a latency profile comparable to specialized streaming frameworks.

This performance is enabled by three key technological innovations in RTM:

- Continuous Data Flow: Data is processed as it arrives rather than in discrete, periodic micro-batches.

- Pipeline Scheduling: Stages run concurrently, so downstream tasks can process data as soon as it is available instead of waiting for the upstream stage to finish.

- Streaming Shuffle: Data is passed directly between tasks, bypassing the latency constraints of traditional disk-based shuffles.
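The difference between stage-at-a-time and pipelined execution can be shown with a plain Scala analogy: a strict `List` versus a lazy `Iterator`. This is a toy under stated assumptions, not a description of how Spark actually schedules stages.

- **Strict version:** every record finishes stage 1 before any record reaches stage 2.
- **Pipelined version:** each record flows through both stages before the next one starts.

```scala
import scala.collection.mutable.ArrayBuffer

// Analogy only: not Spark's scheduler. The log records the order in which work happens.
object PipelineDemo {
  // Stage-at-a-time: List.map is strict, so all stage-1 work completes
  // before stage 2 sees its first record.
  def stageAtATime(records: List[Int]): List[String] = {
    val log = ArrayBuffer.empty[String]
    val stage1 = records.map { r => log += s"s1:$r"; r * 10 }
    stage1.foreach { r => log += s"s2:$r" }
    log.toList
  }

  // Pipelined: Iterator.map is lazy, so each record is pulled through
  // stage 1 and immediately handed to stage 2.
  def pipelined(records: List[Int]): List[String] = {
    val log = ArrayBuffer.empty[String]
    val stage1 = records.iterator.map { r => log += s"s1:$r"; r * 10 }
    stage1.foreach { r => log += s"s2:$r" }
    log.toList
  }
}
```

With two input records, the strict version logs `s1:1, s1:2, s2:10, s2:20`. The pipelined version logs `s1:1, s2:10, s1:2, s2:20`, so the first result reaches stage 2 without waiting for the rest of stage 1. That wait is what pipeline scheduling is meant to avoid.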

Together, these turn Spark into a high-performance, low-latency engine capable of handling the most demanding operational use cases.

Teams operate less infrastructure and move faster with Spark

While raw speed is essential, the real value of real-time mode lies in its ability to eliminate the operational complexity that typically prevents the creation of ultra-low latency pipelines. Spark RTM significantly simplifies your architecture through three main benefits. To make this concrete, we describe these in the context of real-time machine learning applications.

Reduce “logic drift” between training and inference: Real-time ML, like fraud detection, requires a seamless handoff between high-throughput batch processing (for model training) and low-latency streaming (for live inference). Spark is the preferred choice of data scientists for model training, so switching to Flink for inference means reimplementing the business logic. You end up with one version of the logic in Spark for training and a completely different codebase in Flink for production. Duplicating business logic this way is error-prone and can lead to logic drift, where your model is trained on one reality but scores on another. With Spark RTM, the same transformation code serves both training and inference, enabling you to produce features faster and with greater accuracy.
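Here is a minimal sketch of the "one definition, two paths" idea, in plain Scala and under stated assumptions. The `Txn` and `Features` types and the 1,000 threshold are hypothetical. The feature function is written once and reused by two paths:

- a batch path, for training;
- a per-event path, for inference.

Both paths therefore produce identical features by construction. In Spark, the analogous approach is a single `DataFrame => DataFrame` function applied to both a `spark.read` and a `spark.readStream` DataFrame.

```scala
object SharedLogic {
  // Hypothetical record and feature types.
  case class Txn(cardId: String, amount: Double)
  case class Features(cardId: String, isLarge: Boolean, amountCents: Long)

  // The business logic, defined exactly once. The 1,000 threshold is illustrative.
  def toFeatures(t: Txn): Features =
    Features(t.cardId, t.amount >= 1000.0, math.round(t.amount * 100))

  // Batch path: the full history at once, as you would use for model training.
  def trainingSet(history: Seq[Txn]): Seq[Features] =
    history.map(toFeatures)

  // Streaming path: events arrive one at a time and each result is emitted immediately.
  def score(events: Iterator[Txn])(emit: Features => Unit): Unit =
    events.foreach(e => emit(toFeatures(e)))
}
```

Because neither path carries its own copy of the logic, a change to `toFeatures` reaches training and inference together, which is the property that prevents drift.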

On-demand freshness with a single-line code change: Business needs are rarely static. A feature pipeline that starts with a 1-minute SLA today may require sub-second latency tomorrow as model freshness requirements evolve. Conversely, for many use cases, slower-running pipelines (for example, daily or hourly batches) are significantly more cost-effective when immediate freshness is not required. Spark gives you room to grow and scale along with your product, so you can advance your feature engineering strategy with a single-line code change. For example, you can set your trigger to AvailableNow to run the pipeline on a daily or hourly schedule. When business needs change, you can transition to continuous, ultra-low-latency streaming by switching to real-time mode: .trigger(RealTimeTrigger.apply()). Achieving the same in Flink is a manual process: you often need to retune parallelism and coordinate shutting down and restarting compute resources to match the new processing frequency.
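In Spark, the single-line change described above is the argument passed to `.trigger(...)` on the streaming writer; the query logic itself does not change. The sketch below models that idea in plain Scala rather than Spark, so nothing in it is a Spark or Databricks API.

- The hypothetical `Mode` parameter stands in for the trigger.
- `Scheduled` plays the role of an AvailableNow-style run that emits one result per run.
- `RealTime` emits an updated result after every event.

```scala
// Conceptual analogy (not Spark API): the aggregation logic is fixed and only
// the hypothetical `Mode` argument changes how often results are emitted.
object TriggerAnalogy {
  sealed trait Mode
  case object Scheduled extends Mode // like a scheduled AvailableNow run: one emit per run
  case object RealTime extends Mode  // like real-time mode: emit after every event

  // Fixed business logic: running count of events per key.
  private def update(state: Map[String, Int], key: String): Map[String, Int] =
    state.updated(key, state.getOrElse(key, 0) + 1)

  // Returns every snapshot of the counts that would be emitted downstream.
  def run(keys: Seq[String], mode: Mode): Seq[Map[String, Int]] = mode match {
    case Scheduled => Seq(keys.foldLeft(Map.empty[String, Int])(update))
    case RealTime  => keys.scanLeft(Map.empty[String, Int])(update).tail
  }
}
```

Both modes share the same `update` function and end in the same final state; only the emission cadence differs. Switching between them is a one-argument change, which mirrors the trigger switch described in the text.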

Accelerate Growth: RTM is built on the same Spark API your team already knows. It eliminates the friction of maintaining multiple systems, allowing you to move faster by building and scaling real-time applications in a single, consistent environment.

Customers running multiple real-time applications on Spark

Early adopters are using RTM to power a range of low-latency applications across industries.

Fraud detection: A leading digital asset platform calculates dynamic risk features, such as velocity checks and aggregate spend patterns, from Kafka streams, updating its online feature store in under 200 milliseconds to prevent fraudulent transactions at the point of sale.

Personalized experiences: An e-commerce platform calculates real-time intent features based on the user’s current session, allowing the model to refresh recommendations as the user interacts with a product.

IoT monitoring: A transportation and logistics company uses live telemetry to detect anomalies, moving from reactive to proactive decision-making in milliseconds.

DraftKings, one of North America’s largest sportsbook and fantasy sports services, uses RTM to power the feature calculations for its fraud detection models.

“In live sports betting, fraud detection requires extreme velocity. The introduction of real-time mode with the TransformWithState API in Spark Structured Streaming has been a game changer for us. We have achieved substantial improvements in both latency and pipeline design, and for the first time, have built integrated feature pipelines for ML training and online inference, achieving ultra-low latency that was not previously possible.” -Maria Marinova, Senior Lead Software Engineer, DraftKings

Start building with Spark real-time mode

The era of choosing between “easy” and “fast” is over. Why manage two engines, two security models, and two sets of specialized skills when one engine now does it all? RTM delivers the sub-second speed your real-time applications demand, with the architectural simplicity your team deserves. By eliminating the “operational tax” of a second stack, you can finally focus on creating value rather than managing infrastructure.

Are you ready to eliminate the complexity of your real-time stack?

- Dive into the details: Read the RTM documentation to understand the full technical specifications, supported sources and sinks, and example queries. You’ll find everything you need to enable the new trigger and configure your streaming workloads.

- See it in action: To go deeper into the engineering behind RTM, check out this in-depth technical session that walks through the design and implementation.