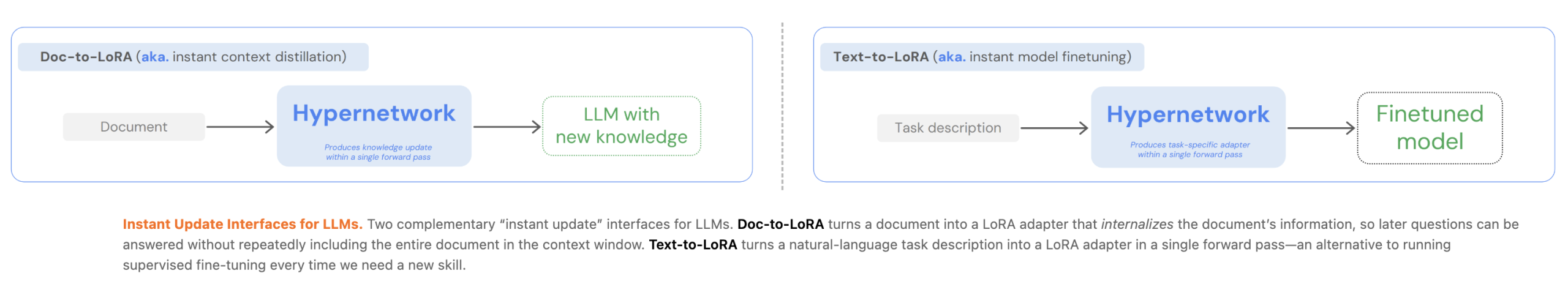

Customizing large language models (LLMs) currently presents a significant engineering trade-off between the flexibility of In-Context Learning (ICL) and the efficiency of Context Distillation (CD) or Supervised Fine-Tuning (SFT). Tokyo-based Sakana AI has proposed a new approach to overcome these constraints through cost amortization. In two recent papers, the team introduced Text-to-LoRA (T2L) and Doc-to-LoRA (D2L), lightweight hypernetworks that meta-learn to generate Low-Rank Adaptation (LoRA) matrices in a single forward pass.

Engineering Hurdle: Latency vs. Memory

For AI developers, the primary limitation of standard LLM customization is computational overhead:

- In-Context Learning (ICL): While convenient, ICL suffers from quadratic attention cost and linear KV-cache growth, which increase latency and memory consumption as prompts become longer.

- Context Distillation (CD): CD transfers information into model parameters, but per-prompt distillation is often impractical due to high training cost and update latency.

- Supervised Fine-Tuning (SFT): Requires task-specific datasets and expensive retraining whenever the information changes.

Sakana AI’s methods amortize these costs by paying a one-time meta-training cost. Once trained, the hypernetwork can quickly adapt the base LLM to new tasks or documents without additional backpropagation.
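To make the amortization argument concrete, here is a minimal Python sketch that computes a break-even point: after how many new tasks or documents a one-time meta-training cost is paid back by cheaper per-task adaptation. All cost values are hypothetical placeholders (for example, GPU-seconds), not figures from the papers.

```python
import math


def total_cost(n_tasks, one_time_cost, per_task_cost):
    """Total cost of adapting to n_tasks, given a fixed up-front cost."""
    return one_time_cost + n_tasks * per_task_cost


def break_even_tasks(meta_training_cost, hyper_cost_per_task, baseline_cost_per_task):
    """Smallest task count at which the hypernetwork is no more expensive than the baseline.

    Returns None if the hypernetwork is not cheaper per task, so it never breaks even.
    """
    if baseline_cost_per_task <= hyper_cost_per_task:
        return None
    saving_per_task = baseline_cost_per_task - hyper_cost_per_task
    return math.ceil(meta_training_cost / saving_per_task)
```

With a hypothetical meta-training cost of 1,000 units, a per-task hypernetwork cost of 1 unit, and a per-task distillation cost of 51 units, `break_even_tasks(1000, 1, 51)` returns 20; every task beyond that point is pure savings.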

Text-to-LoRA (T2L): Adaptation Through Natural Language

Text-to-LoRA (T2L) is a hypernetwork designed to adapt LLMs on the fly using only a natural language description of the task.

Architecture and Training

T2L uses a task encoder to extract a vector representation from the textual description. This representation, combined with learnable module and layer embeddings, is processed through a series of MLP blocks to generate the low-rank A and B matrices for the target LLM.
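The generation step described above can be sketched in NumPy as follows. This is a toy illustration under stated assumptions, not Sakana AI's implementation: the sizes, the module names `q_proj`/`v_proj`, the two-layer MLP, and the random task embedding (standing in for the text encoder's output) are all hypothetical. Each (module, layer) pair gets its own A and B from one shared MLP; in practice these calls could be batched into a single forward pass.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen small for illustration only.
EMB = 32        # size of the task, module, and layer embeddings
HIDDEN = 128    # width of the MLP hidden layer
D_MODEL = 64    # input/output width of each adapted weight matrix
RANK = 4        # LoRA rank
N_LAYERS = 8
MODULES = ("q_proj", "v_proj")  # hypothetical target modules

# Learnable lookup tables (random here; they would be learned during meta-training).
module_emb = {m: rng.normal(size=EMB) for m in MODULES}
layer_emb = rng.normal(size=(N_LAYERS, EMB))

# Shared MLP mapping the combined embedding to the flattened A and B factors.
W1 = rng.normal(scale=0.1, size=(3 * EMB, HIDDEN))
W2 = rng.normal(scale=0.1, size=(HIDDEN, 2 * RANK * D_MODEL))


def generate_lora(task_emb, module, layer):
    """Return LoRA factors A (RANK x D_MODEL) and B (D_MODEL x RANK)."""
    x = np.concatenate([task_emb, module_emb[module], layer_emb[layer]])
    h = np.maximum(x @ W1, 0.0)  # ReLU hidden layer
    out = h @ W2
    A = out[: RANK * D_MODEL].reshape(RANK, D_MODEL)
    B = out[RANK * D_MODEL :].reshape(D_MODEL, RANK)
    return A, B


# Stand-in for the task encoder's embedding of a natural language description.
task_emb = rng.normal(size=EMB)
adapters = {
    (module, layer): generate_lora(task_emb, module, layer)
    for module in MODULES
    for layer in range(N_LAYERS)
}
A, B = adapters[("q_proj", 0)]
delta_W = B @ A  # low-rank weight update added to the frozen base weight
```

Because `delta_W` is the product of a 64x4 and a 4x64 matrix, its rank is at most 4, which is what keeps the generated adapter small.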

The system can be trained through two primary schemes:

- LoRA reconstruction: Distilling existing, pre-trained LoRA adapters into the hypernetwork.

- Supervised Fine-Tuning (SFT): Optimizing the hypernetwork end-to-end on multi-task datasets.
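The difference between the two schemes lies mainly in the training objective. The sketch below contrasts them under simple assumptions: reconstruction is modeled as a mean squared error between generated and target LoRA factors, and SFT as next-token cross-entropy on the adapted model's logits. The exact losses used in the T2L paper may differ; these function names are hypothetical.

```python
import numpy as np


def reconstruction_loss(pred_A, pred_B, target_A, target_B):
    """LoRA reconstruction (assumed MSE): match the factors of an existing adapter."""
    return float(np.mean((pred_A - target_A) ** 2) + np.mean((pred_B - target_B) ** 2))


def sft_loss(logits, targets):
    """SFT: mean next-token cross-entropy of the adapted model on task data.

    logits has shape (n_tokens, vocab_size); targets holds the correct token ids.
    """
    shifted = logits - logits.max(axis=-1, keepdims=True)  # for numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    return float(-np.mean(log_probs[np.arange(len(targets)), targets]))
```

The reconstruction objective needs a library of pre-trained adapters to imitate, whereas the SFT objective only needs task data, since gradients flow from the task loss back through the generated adapter into the hypernetwork.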

Research indicates that SFT-trained T2L generalizes better to unseen tasks because it learns to cluster related functionalities in weight space. In benchmarks, T2L matches or outperforms task-specific adapters on tasks such as GSM8K and ARC-Challenge, while reducing adaptation cost by more than 4x compared to 3-shot ICL.

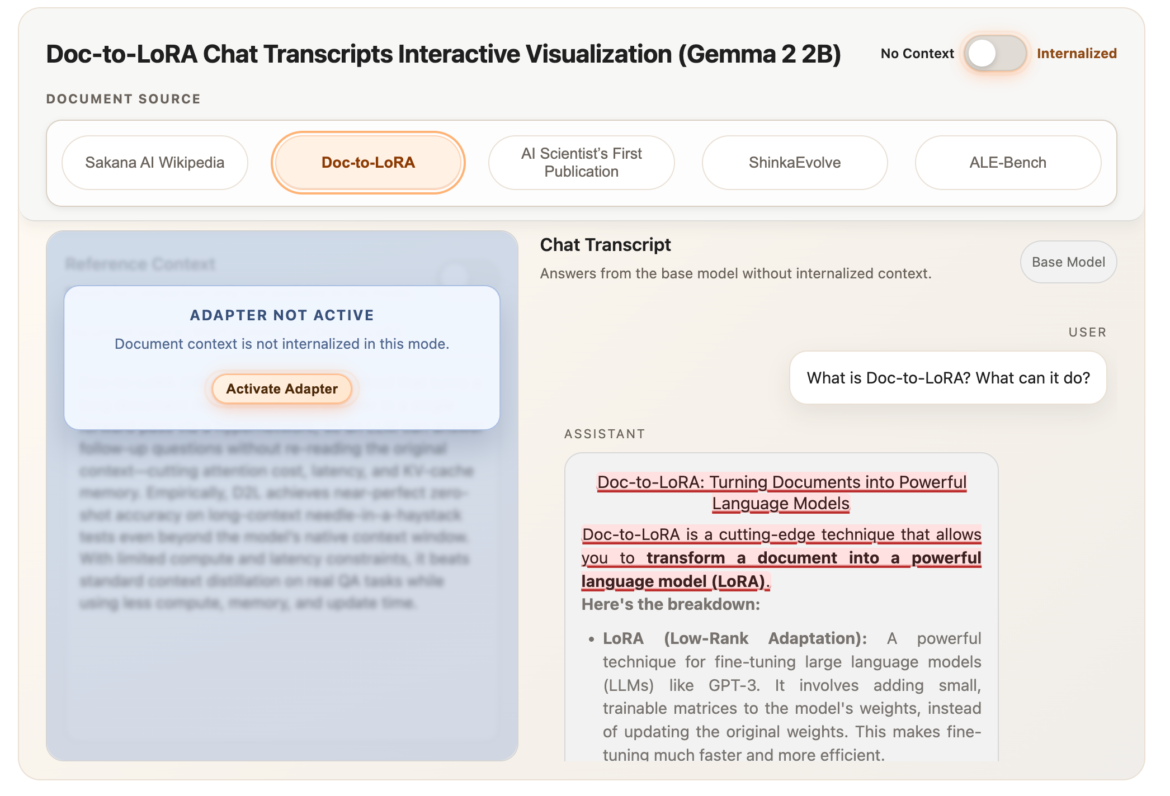

Doc-to-LoRA (D2L): Internalizing the Context

Doc-to-LoRA (D2L) extends this concept to document internalization. It enables the LLM to answer subsequent queries about a document without re-consuming the original context, effectively removing the document from the active context window.

Perceiver-Based Design

D2L uses a Perceiver-style cross-attention architecture. It maps variable-length token activations (Z) from the base LLM to a fixed-size LoRA adapter.
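The key property of a Perceiver-style design is that a fixed set of learned latent queries cross-attends over an input of any length, so the output size does not depend on the input length. The NumPy sketch below illustrates that idea only; it is a simplified single-head attention with no layer norms or MLP blocks, and all sizes and weight names are hypothetical rather than taken from D2L. Here the number of latents doubles as the LoRA rank.

```python
import numpy as np

rng = np.random.default_rng(0)

D_ACT = 64   # hypothetical width of the base-LLM activations Z
N_LAT = 8    # hypothetical number of learned latent queries (used as the LoRA rank)
D_IN = 64    # hypothetical input width of the adapted weight
D_OUT = 64   # hypothetical output width of the adapted weight

latents = rng.normal(size=(N_LAT, D_ACT))  # learned queries (random here)
W_k = rng.normal(scale=D_ACT ** -0.5, size=(D_ACT, D_ACT))
W_v = rng.normal(scale=D_ACT ** -0.5, size=(D_ACT, D_ACT))
W_a = rng.normal(scale=D_ACT ** -0.5, size=(D_ACT, D_IN))
W_b = rng.normal(scale=D_ACT ** -0.5, size=(D_ACT, D_OUT))


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def perceiver_to_lora(Z):
    """Map activations Z of shape (T, D_ACT), for any T, to fixed-size A and B."""
    K = Z @ W_k                              # (T, D_ACT)
    V = Z @ W_v                              # (T, D_ACT)
    scores = latents @ K.T / np.sqrt(D_ACT)  # (N_LAT, T)
    H = softmax(scores, axis=-1) @ V         # (N_LAT, D_ACT): the length T is gone
    A = H @ W_a                              # (N_LAT, D_IN)
    B = (H @ W_b).T                          # (D_OUT, N_LAT)
    return A, B


A_short, B_short = perceiver_to_lora(rng.normal(size=(10, D_ACT)))
A_long, B_long = perceiver_to_lora(rng.normal(size=(1000, D_ACT)))
```

A 10-token input and a 1,000-token input both produce an 8x64 `A` and a 64x8 `B`, which is why the hypernetwork's output shape stays fixed regardless of document length.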

To handle documents longer than those seen during training, D2L uses a chunking mechanism. Long contexts are split into K contiguous segments, each processed independently to produce a per-segment adapter. These adapters are then concatenated along the rank dimension, allowing D2L to generate higher-rank LoRAs for longer inputs without changing the output shape of the hypernetwork.
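The chunk-and-concatenate step can be sketched as below. Stacking the A factors row-wise and the B factors column-wise yields one adapter of rank K·r whose update equals the sum of the per-segment updates B_i A_i. The chunk length, the rank, and the random stand-in for the hypernetwork are hypothetical, and whether D2L applies any extra scaling when merging is not stated here.

```python
import numpy as np


def split_into_chunks(tokens, max_len):
    """Split a token sequence into contiguous segments of at most max_len tokens."""
    return [tokens[i : i + max_len] for i in range(0, len(tokens), max_len)]


def concat_along_rank(adapters):
    """Merge per-segment (A_i, B_i) pairs into one higher-rank adapter.

    Each A_i has shape (r, d_in) and each B_i has shape (d_out, r);
    the merged adapter has rank K * r for K segments.
    """
    A = np.concatenate([a for a, _ in adapters], axis=0)  # (K*r, d_in)
    B = np.concatenate([b for _, b in adapters], axis=1)  # (d_out, K*r)
    return A, B


rng = np.random.default_rng(0)
tokens = list(range(10_000))              # stand-in for a long tokenized document
chunks = split_into_chunks(tokens, 4096)  # hypothetical per-chunk limit -> 3 chunks

# Stand-in for the hypernetwork: one random rank-8 adapter per chunk.
per_chunk = [(rng.normal(size=(8, 64)), rng.normal(size=(64, 8))) for _ in chunks]
A, B = concat_along_rank(per_chunk)
```

Since `B @ A` equals the sum of `b @ a` over the chunks, each segment contributes its own low-rank update, and the per-chunk hypernetwork output shape never changes; only the number of stacked blocks grows.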

Performance and Memory Efficiency

On a Needle-in-a-Haystack (NIAH) retrieval task, D2L maintained near-perfect zero-shot accuracy at context lengths more than 4x the base model’s native context window.

- Memory Footprint: For a 128K-token document, the base model requires 12 GB of VRAM for the KV cache, whereas the internalized D2L model handles the same document using less than 50 MB.

- Update Latency: D2L internalizes information in the sub-second regime (<1 s), whereas traditional CD can take 40 to 100 seconds.
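The memory gap follows from how the two representations scale. A KV cache stores a key and a value vector per layer, per KV head, and per token, so it grows linearly with document length; a LoRA adapter's size is set by its rank and the matrices it adapts. The back-of-the-envelope sketch below uses a hypothetical configuration loosely in the range of a ~2B-parameter model with 16-bit storage; it is not D2L's base model and is not meant to reproduce the reported 12 GB and 50 MB figures.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, n_tokens, bytes_per_value=2):
    """Keys and values (factor 2) for every layer, KV head, and token."""
    return 2 * n_layers * n_kv_heads * head_dim * n_tokens * bytes_per_value


def lora_bytes(rank, d_in, d_out, n_adapted_matrices, bytes_per_value=2):
    """A (rank x d_in) plus B (d_out x rank) for each adapted weight matrix."""
    return rank * (d_in + d_out) * n_adapted_matrices * bytes_per_value


# Hypothetical config: 26 layers, 4 KV heads of width 256, 128K tokens, 16-bit values.
kv = kv_cache_bytes(n_layers=26, n_kv_heads=4, head_dim=256, n_tokens=128 * 1024)
# Hypothetical adapter: rank 8 on one 2304x2304 matrix per layer.
lora = lora_bytes(rank=8, d_in=2304, d_out=2304, n_adapted_matrices=26)
print(f"KV cache: {kv / 2**30:.1f} GiB, LoRA: {lora / 2**20:.2f} MiB")
# prints "KV cache: 13.0 GiB, LoRA: 1.83 MiB"
```

Doubling `n_tokens` doubles the KV cache, while the adapter size stays fixed for a given rank; with chunking, it grows only with the number of segments concatenated along the rank dimension.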

Cross-Modal Transfer

A notable finding of the D2L research is its ability to perform zero-shot internalization of visual information. Using a Vision-Language Model (VLM) as the context encoder, D2L mapped visual activations into the parameters of a text-only LLM. This allowed the text model to classify images from the ImageNet dataset with 75.03% accuracy, despite never seeing image data during its primary training.

Key Takeaways

- Amortized Customization via Hypernetworks: Both methods use lightweight hypernetworks to meta-learn the adaptation process, enabling near-instant, sub-second generation of LoRA adapters for new tasks or documents after a one-time meta-training cost.

- Significant Memory and Latency Reduction: Doc-to-LoRA encapsulates context into parameters, reducing KV-cache memory consumption from 12 GB to less than 50 MB for long documents and cutting update latency from minutes to less than a second.

- Effective Long-Context Generalization: Using a Perceiver-based architecture and a chunking mechanism, Doc-to-LoRA can internalize information at sequence lengths more than 4x the base LLM’s native context window with near-perfect accuracy.

- Zero-Shot Task Adaptation: Text-to-LoRA can generate specialized LoRA adapters for completely unseen tasks based solely on natural language descriptions, matching or exceeding the performance of task-specific ‘oracle’ adapters.

- Cross-Modal Knowledge Transfer: The Doc-to-LoRA architecture enables zero-shot internalization of visual information from a vision-language model (VLM) into a text-only LLM, allowing the latter to classify images with high accuracy without seeing pixel data during its primary training.

Check out the Doc-to-LoRA paper and code, and the Text-to-LoRA paper and code.