Author(s): Shreyash Shukla

Originally published on Towards AI.

“Reference Window” Economy

In the world of large language models (LLM), attention is a finite currency. While the context window is expanding, the “lost in the middle” phenomenon continues to be an architectural challenge. research from Stanford University shows that as the amount of retrieved context increases, the model’s ability to accurately extract specific constraints degrades significantly (Lost in the middle: how language models use long references).

This creates a serious problem for enterprise analytics. The standard industry approach – Retrieval-Augmented Generation (RAG) – often involves filling the prompt with hundreds CREATE TABLE Statements (DDL) in the hope that the LLM will figure it out. this leads to “Reference rot.” The model becomes overwhelmed with irrelevant columns and foreign keys, losing focus on the user’s actual question.

Furthermore, relying on raw schema introduces “The raw schema fallacy.” describes a DDL statement structureNo Material. This tells the agent that a column is named status exists, but not what values reside inside it. Does this include “active/passive”? “Open/Closed”? “1/0”? Without this knowledge, the agent is forced to hallucinate the filter, generating SQL that is syntactically correct but semantically dead. datacamp Note that this lack of semantic context is the primary driver of 20-40% failure rate In text-to-SQL applications (State of Data and AI Literacy 2024).

To solve this, we need to discard the “idle RAG”. “At the Right Time” Architecture that provides rich, targeted context only for the specific tables involved.

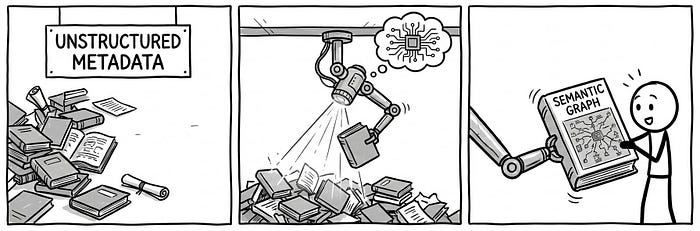

Pillar 1 – Enterprise Semantic Graph

The first pillar of accuracy is Enterprise Semantic Graph. We cannot rely on static documentation or manual wiki pages, which become out of date as soon as they are written. Instead, we should treat it SQL ETL script itself As a source of truth.

parsing the script create From the tables, we create a structured, JSON-based map of the data universe. This takes us away from a flat list of tables semantic layer – a concept that databricks Logic is essential to AI, as it “translates raw data into business concepts” (Importance of semantic layer for AI).

This structure allows the agent to navigate data lineage – Not only understanding that a table exists, but also identifying its upstream dependencies and downstream consumers. Importantly, it provides the agent with verified argument. How to Calculate “Churn” Instead of guessing, the agent reads simplified_sql_logic Straight from the metadata, ensuring the math is the same as official reporting.

Artwork: Knowledge Object

Instead of indexing just the schema, we index the logic.

{

"table_name": "revenue_daily_snapshot",

"lineage": {

"upstream_tables": (

{ "table_name": "raw_bookings"},

{ "table_name": "currency_conversion_rates" }

)

},

"metrics": (

{

"name": "Net_Revenue_Retention",

"definition": "Revenue from existing customers excluding new sales.",

"simplified_sql_logic": "SUM(renewal_revenue) + SUM(upsell_revenue) - SUM(churn_revenue)",

"key_filters_and_conditions": ("is_active_contract = TRUE", "region != 'TEST'")

}

)

}

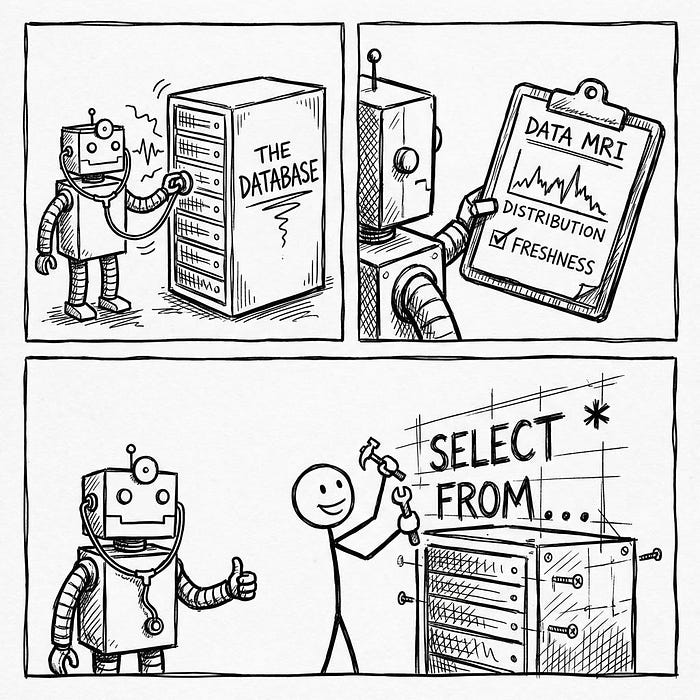

Column 2 – Finding statistical shape

The second pillar of accuracy is statistical shape detection. While the semantic graph provides map of data, provides shape detection locality. To write accurate SQL, an agent needs to know the statistical signatures of data columns before attempting to query them.

Without it, LLM declines “Cardinality Trap.” For example, if a user asks “Group customers by type” and this customer_type The columns actually contain unique IDs (higher cardinality) rather than categories (lower cardinality), resulting in GROUP BY The query may crash the database cluster. so Gartner predicts that organizations adopting “active metadata” analysis will Reduce delivery time of new data assets by up to 70% (Using Active Metadata for Data Management). By actively scanning for data size, the agent removes the friction of trial-and-error queries.

To prevent this, our architecture pre-calculates a “size definition” for each important column. The agent refers to these metrics “just-in-time” to verify its logic:

The Artifact: The Shape Definition

Before writing a line of SQL, the agent consults these pre-calculated prompts:

DISTINCT VALUE COUNT: The agent checks a column to decide whether it is safe for it or notGROUP BYShould be treated as a clause (low cardinality) or an identifier (high cardinality).FREQUENT VALUES OCCURRENCES: It prevents “price hallucination”. If the user asks for “United States” data, the agent checks this list to see if the actual value is in the database.'USA','US'Or'United States'.QUANTILES&MIN/MAX VALUE: These allow the agent to detect outliers. If a revenue figure is outside the 99th percentile, the agent may flag it as an anomaly rather than reporting it as a trend.ROW COUNT: These act as “health checks”. IfROW_COUNTThere has been a 50% drop since yesterday, the agent knows the data pipeline is broken.

This approach is consistent with IDC The conclusion is that “mature data intelligence organizations” – those that actively manage metadata context – achieve Three times better business results compared to your peers (IDC Snapshot: Data Intelligence Maturity Drives Three Times Better Business Results).

Result – Deterministic SQL Generation

When we add these two columns – the Enterprise Semantic Graph And statistical shape detection – We achieve a fundamental change in the way agents operate. we proceed from there probabilistic text generation To Deterministic SQL Assembly.

In a standard LLM workflow, the model guesses the query based on the patterns it learned during training. In our architecture, the agent works like a compiler. It doesn’t guess; It assembles the query based on verified constraints:

- Selection (Map): Semantic graph clearly identifies

my_company_data.revenueAs to the correct table for “sales”, rejecting similar-sounding but irrelevant tables. - Filtering (area): Shape Detector confirms that

regionColumn contains'NA'No'North America'to ensureWHEREThe where clause actually returns the data. - Logic (Rule): The knowledge object provides the exact formula for “net revenue”, which prevents the agent from having to invent his own math.

This “constraint-based” approach reflects development self-driving carsWhich rely on high-definition maps (semantic layer) and real-time sensor data (shape detection) to navigate safely. according to databricksThis shift toward compound AI systems – where models are guided by external tools and valid data – is the only viable path forward. state-of-the-art accuracy In enterprise applications (Changes in Compound AI system).

from generation to logic

The era of “chatting with data” is over. To build an agent that a CEO can trust, we must treat the “prompt” not as a magic mantra, but as a software engineering problem.

By engineering a semantic layer that provides “just-in-time” context and a shape detection layer that provides statistical reality, we stop asking LLMs to Memorization world and start teaching it inspection it. This is the difference between an agent that generates text and an agent that reasons about data.

We will examine this in the next article device-driven spine – Unique orchestration architecture that allows agents to use these powerful tools in real time.

create a complete system

This is part of the article cognitive agent architecture series. We’re going through the engineering required to go from a basic chatbot to a secure, deterministic enterprise advisor.

To see the full roadmap – including Semantic Graph (Brain), Gap Analysis (Discernment)And Sub-Agent Ecosystem (Organization) – See master index below:

Cognitive Agent Architecture: From Chatbot to Enterprise Advisor

Published via Towards AI