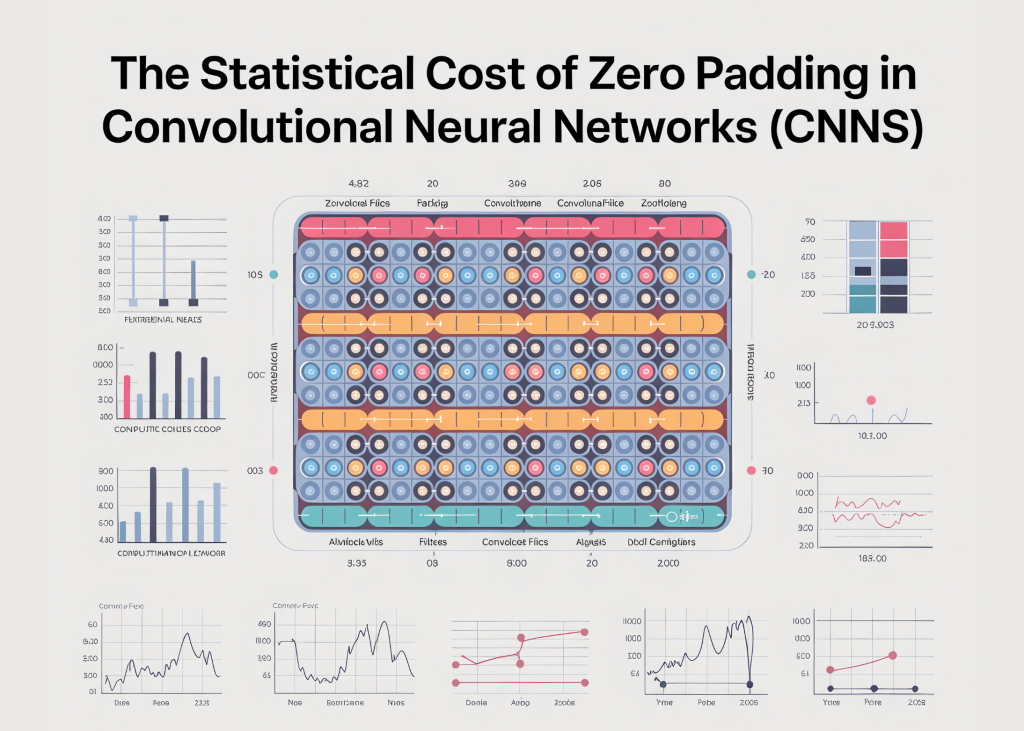

What is zero padding?

Zero padding is a technique used in convolutional neural networks where extra pixels with zero values are added around the boundaries of an image. This allows the convolutional kernel to slide over edge pixels and helps control how much the spatial dimensions of the feature map shrink after convolution. Padding is commonly used to preserve feature map size and enable deeper network architectures.
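The shrinkage described above follows the standard output-size relation for a convolution: for input size n, kernel size k, padding p per side, and stride s, the output size is floor((n + 2p - k) / s) + 1. A minimal sketch, with `conv_output_size` being a hypothetical helper name used only for illustration:

```python
def conv_output_size(n, k, p=0, s=1):
    """Output size along one axis for input size n, kernel size k,
    padding p on each side, and stride s (hypothetical helper)."""
    return (n + 2 * p - k) // s + 1


# A 3x3 kernel without padding trims one pixel from each side.
print(conv_output_size(224, 3))       # 222
# One pixel of padding per side keeps the size unchanged.
print(conv_output_size(224, 3, p=1))  # 224
```

Stacking many unpadded layers therefore shrinks the feature map steadily, which is why padding is attractive for deep architectures.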

Hidden issue with zero padding

From a signal processing and statistical perspective, zero padding is not a neutral operation. Injecting zeros at image boundaries creates artificial discontinuities that are not present in the original data. These sharp transitions act like strong edges, causing convolutional filters to respond to the padding rather than to meaningful image content. As a result, the model sees different statistics at the boundaries than at the center, subtly breaking translation equivariance and skewing feature activations near image edges.
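The effect is easy to see in one dimension. The sketch below is an illustrative toy example, not part of the experiment that follows: it zero-pads a perfectly flat signal, which contains no edges at all, and applies a 1D Laplacian-style kernel. The signal length, pad width, and kernel are arbitrary choices.

```python
import numpy as np

# A flat signal: no edges anywhere in the real data.
signal = np.full(8, 0.5)

# Zero padding adds two zeros on each side.
padded = np.pad(signal, 2, mode="constant", constant_values=0)

# 1D Laplacian-style kernel (symmetric, so convolution equals correlation).
kernel = np.array([-1.0, 2.0, -1.0])
response = np.convolve(padded, kernel, mode="valid")

# The response is zero everywhere except at the positions that
# straddle the seam between real samples and padded zeros.
print(response)
```

Every nonzero value in `response` comes from the padding, since the underlying signal is constant.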

How does zero padding change feature activation?

Install dependencies

pip install numpy matplotlib pillow scipy

import numpy as np

import matplotlib.pyplot as plt

from PIL import Image

from scipy.ndimage import correlate

from scipy.signal import convolve2d

Importing the image

img = Image.open('/content/Gemini_Generated_Image_dtrwyedtrwyedtrw.png').convert('L') # Load as Grayscale

img_array = np.array(img) / 255.0 # Normalize to [0, 1]

plt.imshow(img, cmap="gray")

plt.title("Original Image (No Padding)")

plt.axis("off")

plt.show()

In the above code, we first load the image from disk using PIL and explicitly convert it to grayscale, since convolution and edge-detection analysis are easier to reason about in a single intensity channel. The image is then converted to a NumPy array and normalized to the range [0, 1] so that pixel values represent meaningful signal magnitudes rather than raw byte intensities. For this experiment, we use an image of a chameleon generated using Nano Banana 3, chosen because it is a real, textured object placed well within the frame, so any strong response at the image borders can clearly be attributed to padding rather than to actual visual edges.

Padding the image with zeros

pad_width = 50

padded_img = np.pad(img_array, pad_width, mode="constant", constant_values=0)

plt.imshow(padded_img, cmap="gray")

plt.title("Zero-Padded Image")

plt.axis("off")

plt.show()

In this step, we apply zero padding to the image by adding a border of fixed width on all sides using NumPy's pad function. The parameter mode="constant" with constant_values=0 explicitly fills the padded area with zeros, effectively surrounding the original image with a black frame. This operation does not add new visual information; instead, it introduces a sharp intensity discontinuity at the boundary between the real pixels and the padded pixels.

Implementing Edge Detection Kernel

edge_kernel = np.array([[-1, -1, -1],

[-1, 8, -1],

[-1, -1, -1]])

# Apply the kernel to both images (for this symmetric kernel, correlation and convolution give the same result)

edges_original = correlate(img_array, edge_kernel)

edges_padded = correlate(padded_img, edge_kernel)

Here, we use a simple Laplacian-style edge detection kernel, designed to respond strongly to high-frequency content such as sudden intensity changes and edges. We apply the same kernel to both the original image and the zero-padded image using correlation. Since the filter remains unchanged, any difference in output can only be attributed to padding. Note that scipy.ndimage.correlate handles the outer border of its input with mode="reflect" by default, so the strong responses in the padded image come from the seam between real pixels and the zero frame inside padded_img, not from SciPy's own boundary handling. These responses are not caused by real image features, but by the artificial zero-valued border introduced through zero padding.
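To put a rough number on this effect, the following sketch compares the mean absolute filter response on the outermost ring of real pixels with the response in the interior. It is an illustration under stated assumptions: `demo_img` is a hypothetical synthetic stand-in (mid-gray with mild noise) for the chameleon image, and the image size, noise level, and pad width are arbitrary.

```python
import numpy as np
from scipy.ndimage import correlate

rng = np.random.default_rng(0)

# Hypothetical stand-in for img_array: mid-gray texture with mild noise.
demo_img = np.clip(0.5 + 0.02 * rng.standard_normal((64, 64)), 0.0, 1.0)

pad = 8
demo_padded = np.pad(demo_img, pad, mode="constant", constant_values=0)

kernel = np.array([[-1, -1, -1],
                   [-1, 8, -1],
                   [-1, -1, -1]])
response = np.abs(correlate(demo_padded, kernel))

# Interior: real pixels that do not touch the zero frame.
interior = np.zeros(demo_padded.shape, dtype=bool)
interior[pad + 1:-pad - 1, pad + 1:-pad - 1] = True

# Ring: the one-pixel-wide band of real pixels touching the zero frame.
ring = np.zeros(demo_padded.shape, dtype=bool)
ring[pad:-pad, pad:-pad] = True
ring &= ~interior

border_mean = response[ring].mean()
interior_mean = response[interior].mean()
print(f"border: {border_mean:.3f}, interior: {interior_mean:.3f}")
```

On this synthetic image the border ring responds several times more strongly than the interior, even though the image content is statistically the same everywhere.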

Visualizing padding artifacts and distribution shifts

fig, axes = plt.subplots(2, 2, figsize=(12, 10))

# Show Padded Image

axes[0, 0].imshow(padded_img, cmap='gray')

axes[0, 0].set_title("Zero-Padded Image\n(Artificial 'Frame' added)")

# Show Filter Response (The Step Function Problem)

axes[0, 1].imshow(edges_padded, cmap='magma')

axes[0, 1].set_title("Filter Activations\n(Extreme firing at the artificial border)")

# Show Distribution Shift

axes[1, 0].hist(img_array.ravel(), bins=50, color="blue", alpha=0.6, label="Original")

axes[1, 0].set_title("Original Pixel Distribution")

axes[1, 0].set_xlabel("Intensity")

axes[1, 1].hist(padded_img.ravel(), bins=50, color="red", alpha=0.6, label="Padded")

axes[1, 1].set_title("Padded Pixel Distribution\n(Massive spike at 0.0)")

axes[1, 1].set_xlabel("Intensity")

plt.tight_layout()

plt.show()

In the top left, the zero-padded image shows a uniform black frame added around the original chameleon image. This frame does not come from the data itself; it is an artificial construct introduced purely for architectural convenience. In the top right, the edge filter response reveals the consequence: although there is no meaningful edge at the image boundary, the filter activates strongly along the padded border. This occurs because the transition from actual pixel values to zero creates a sharp step function, which is exactly the kind of signal edge detectors are designed to amplify.

The bottom row highlights a deeper statistical issue. The histogram of the original image shows a smooth, natural distribution of pixel intensities. In contrast, the padded image distribution displays a huge spike at intensity 0.0, which represents the injected zero-valued pixels. This spike indicates a clear distribution shift introduced by padding alone.
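The size of that spike can be estimated from the geometry alone. The sketch below computes the fraction of pixels in the padded array that were injected by padding; `padded_zero_fraction` is a hypothetical helper, and the 1024 x 1024 image size is an assumption for illustration, since the actual image dimensions are not stated here.

```python
import numpy as np


def padded_zero_fraction(height, width, pad):
    """Fraction of pixels in the padded array that come from the zero frame
    (hypothetical helper; ignores zeros already present in the image)."""
    total = (height + 2 * pad) * (width + 2 * pad)
    return 1.0 - (height * width) / total


# Assumed image size of 1024 x 1024 with the pad width of 50 used above.
print(f"{padded_zero_fraction(1024, 1024, 50):.3f}")

# Cross-check on a small array of ones, where every zero comes from padding.
small = np.pad(np.ones((10, 10)), 2, mode="constant", constant_values=0)
print(np.isclose((small == 0).mean(), padded_zero_fraction(10, 10, 2)))
```

Under that size assumption, roughly one pixel in six of the padded array is an injected zero, which is why the spike dominates the histogram.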

Conclusion

Zero padding may seem like a harmless architectural choice, but it silently injects strong assumptions into the data. By placing zeros next to actual pixel values, it creates artificial step functions that convolutional filters interpret as meaningful edges. Over time, the model begins to associate boundaries with specific patterns, introducing spatial bias and breaking the original promise of translation equivariance.

More importantly, zero padding alters the statistical distribution at image boundaries, causing edge pixels to follow a different activation regime than interior pixels. From a signal processing perspective, this is not a minor detail but a structural distortion.

For production-grade systems, padding strategies such as reflection or replication are often preferred, as they maintain statistical continuity across boundaries and prevent the model from learning artifacts that were never present in the original data.
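As a rough comparison of these alternatives, the sketch below pads the same image with NumPy's "constant" (zeros), "reflect", and "edge" (replication) modes and measures the mean absolute filter response on the outermost ring of real pixels. It is a sketch under assumptions: `demo_img` is a hypothetical synthetic stand-in for a real image, and `seam_response` is an illustrative helper, not a library function.

```python
import numpy as np
from scipy.ndimage import correlate

rng = np.random.default_rng(0)

# Hypothetical stand-in image: mid-gray texture with mild noise.
demo_img = np.clip(0.5 + 0.02 * rng.standard_normal((64, 64)), 0.0, 1.0)

kernel = np.array([[-1, -1, -1],
                   [-1, 8, -1],
                   [-1, -1, -1]])


def seam_response(image, pad, mode):
    """Mean absolute filter response on the outermost ring of real pixels
    after padding with the given np.pad mode (illustrative helper)."""
    padded = np.pad(image, pad, mode=mode)  # "constant" pads with zeros by default
    response = np.abs(correlate(padded, kernel))
    core = response[pad:-pad, pad:-pad]     # region covering the real pixels
    ring = np.ones(core.shape, dtype=bool)
    ring[1:-1, 1:-1] = False                # keep only the outermost ring
    return core[ring].mean()


results = {mode: seam_response(demo_img, 8, mode)
           for mode in ("constant", "reflect", "edge")}
for mode, value in results.items():
    print(f"{mode:>8}: {value:.3f}")
```

On this synthetic image, reflection and replication keep the seam response at roughly the same level as ordinary image texture, while zero padding produces a much larger response.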

I am a Civil Engineering graduate (2022) from Jamia Millia Islamia, New Delhi, and I have a keen interest in Data Science, especially Neural Networks and their application in various fields.