In the high-stakes world of AI infrastructure, the industry has operated under a singular assumption: flexibility is king. We build general-purpose GPUs because AI models change every week, and we need programmable silicon that can adapt to the next research breakthrough.

But Talas, a Toronto-based startup, believes that this flexibility is exactly what is holding AI back. According to the Talas team, if we want AI to become as common and cheap as plastic, we need to stop ‘simulating’ intelligence on general-purpose computers and start ‘casting’ it directly into silicon.

The Problem: The ‘Memory Wall’ and the GPU Tax

The current cost of running Large Language Models (LLMs) is driven by a physical constraint: the memory wall.

Traditional processors (GPUs) are built around an instruction set architecture (ISA) and keep compute and memory separate. When you run an inference pass on a model like Llama-3, the chip spends the bulk of its time and energy shuttling weights from high-bandwidth memory (HBM) to the processing cores. This ‘data movement tax’ accounts for approximately 90% of power consumption in modern AI data centres.
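To see why weight movement dominates, a back-of-the-envelope estimate helps. The sketch below assumes single-stream decoding, where every generated token requires reading all model weights from memory once, so memory bandwidth sets a ceiling on tokens per second. The function name and the inputs (8 billion parameters, 2 bytes per FP16 weight, roughly 3,350 GB/s of HBM bandwidth for an H100 SXM) are illustrative assumptions, not figures from Talas.

```python
def max_decode_tokens_per_second(params_billion, bytes_per_param, mem_bandwidth_gb_s):
    """Upper bound on single-stream decode speed when weight reads are the bottleneck.

    Assumes every token requires streaming the full weight set from memory once
    and ignores KV-cache traffic, compute time, and batching.
    """
    # 1e9 parameters * bytes per parameter = gigabytes of weights
    weight_gb = params_billion * bytes_per_param
    return mem_bandwidth_gb_s / weight_gb


# Assumed inputs: ~8B parameters, FP16 weights, ~3,350 GB/s HBM bandwidth.
ceiling = max_decode_tokens_per_second(8.0, 2.0, 3350.0)
print(f"Bandwidth-bound ceiling: {ceiling:.0f} tokens/s")
```

Under these assumptions the ceiling comes out at roughly 200 tokens per second for one user, no matter how fast the arithmetic units are. Batching many users raises aggregate throughput, but the per-user limit stays tied to how quickly weights can be fetched.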

Talas’ solution is radical: end the memory-fetch cycle. Using a proprietary automated design flow, Talas translates the computational graph of a specific model directly into the physical layout of the chip. In their HC1 (Hardcore 1) chip, the model’s weights and architecture are literally carved into the wiring of the silicon.
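A loose software analogy may make the idea more concrete. The toy sketch below is not Talas’ design flow, which is proprietary and targets physical layout; it only contrasts a generic routine that receives weights as data at run time with a ‘cast’ routine whose weights are written into the generated code as constants. The names `generic_dot` and `cast_dot` are hypothetical.

```python
def generic_dot(weights, x):
    # General-purpose style: weights are data that must be fetched on every call.
    return sum(w * xi for w, xi in zip(weights, x))


def cast_dot(weights):
    """Generate a function specialised to one fixed weight vector.

    The weights appear as literals in the generated source, so the returned
    function takes only the input vector and never looks the weights up.
    """
    terms = " + ".join(f"({w!r} * x[{i}])" for i, w in enumerate(weights))
    source = f"def hardwired(x):\n    return {terms or '0.0'}\n"
    namespace = {}
    exec(source, namespace)  # compile the specialised function
    return namespace["hardwired"], source


weights = [0.5, -1.0, 2.0]
x = [2.0, 3.0, 4.0]

hardwired, source = cast_dot(weights)
print(source)
print(generic_dot(weights, x), hardwired(x))
```

Both functions return the same result, but the specialised one can no longer run any other set of weights. That trade of flexibility for fixed structure is the one Talas is making, with wires instead of source code.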

The Hardcore Model: 17,000 Tokens per Second

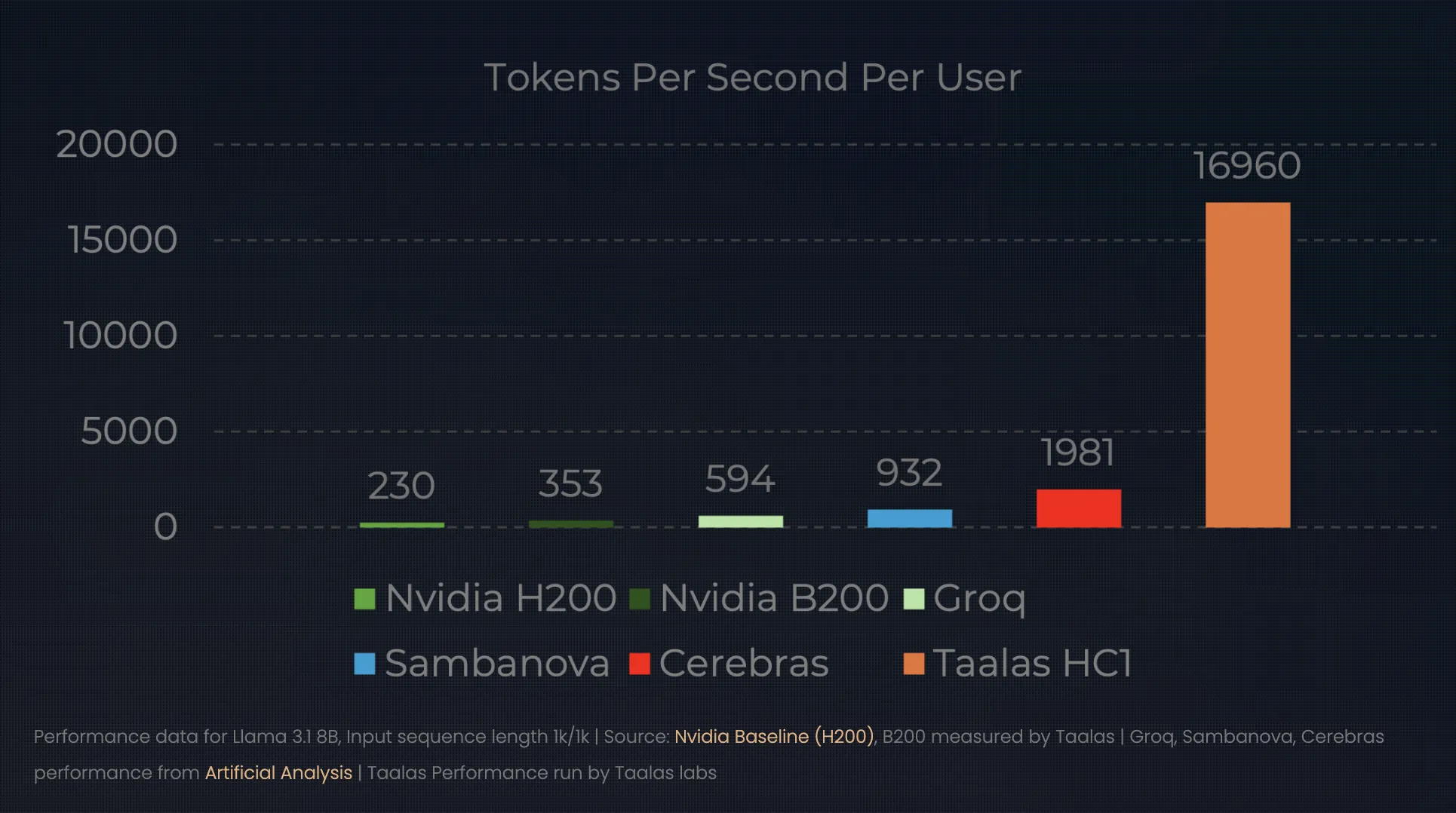

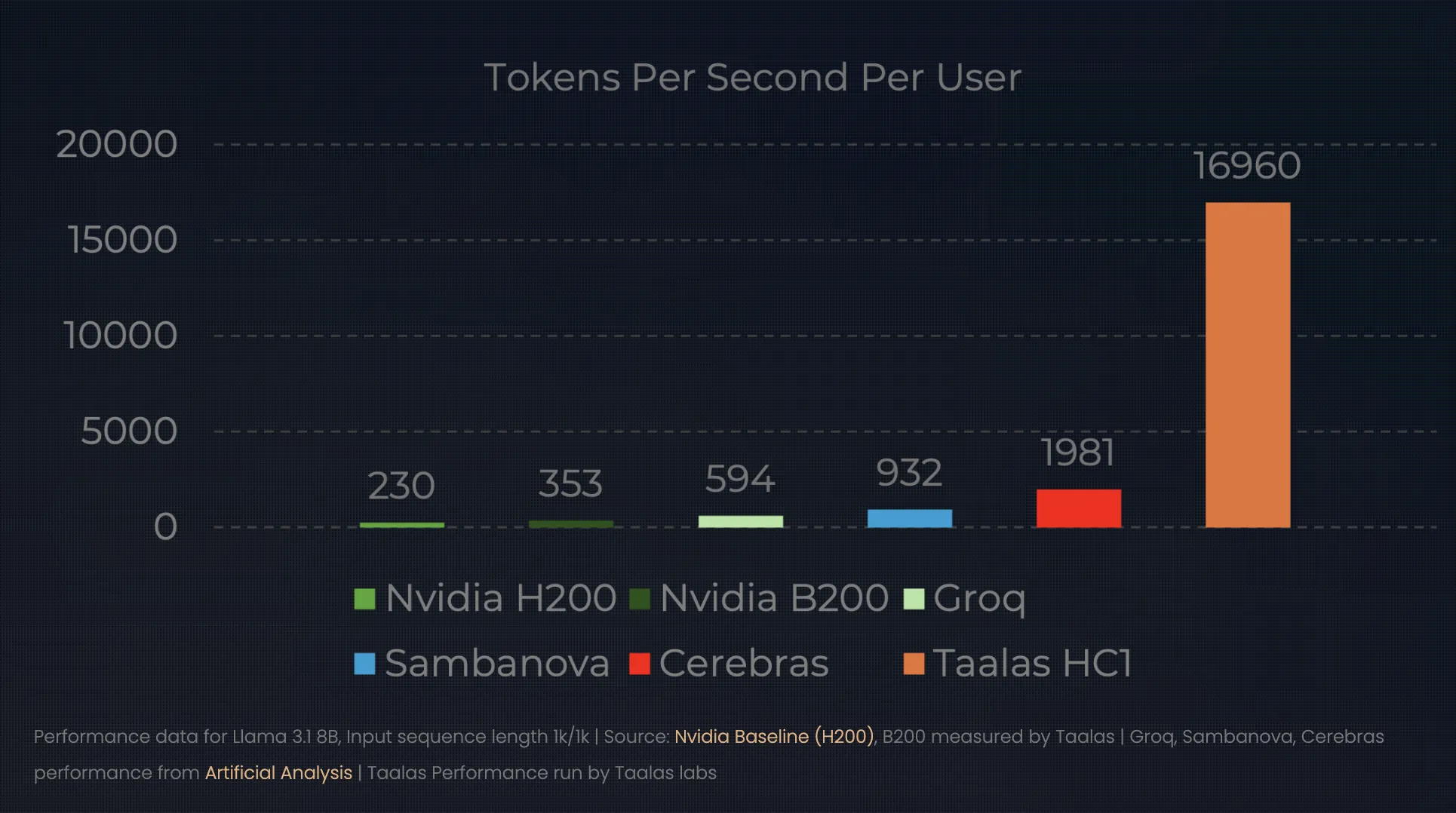

The results of this ‘direct-to-silicon’ approach redefine the performance frontier for inference. In its latest unveiling, Talas demonstrated its HC1 running the Llama 3.1 8B model. While a top-tier NVIDIA H100 can serve a user at a rate of ~150 tokens per second, the HC1 serves a staggering 16,000 to 17,000 tokens per second.

This changes the ‘unit economics’ of AI:

- Performance: A single HC1 chip can outperform a small GPU data center in terms of raw throughput for a specific model.

- Efficiency: Talas claims a 1,000x improvement in efficiency (performance-per-watt and performance-per-dollar) compared to conventional chips.

- Infrastructure: Since the weights are hardwired, there is no need for external HBM or complex liquid cooling systems. A standard air-cooled rack can hold ten of these 250W cards, providing the power of an entire GPU cluster in a single server box.
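The figures quoted above can be combined into a rough per-server picture. The sketch below uses only numbers stated in this article (about 17,000 tokens per second per HC1, about 150 tokens per second per user on an H100, ten 250W cards per server) and assumes, for illustration, that throughput scales linearly across cards and that each card draws its full 250W. The constant and function names are hypothetical.

```python
HC1_TOKENS_PER_S = 17_000   # upper end of the quoted HC1 range
H100_TOKENS_PER_S = 150     # quoted per-user rate on an H100
CARD_POWER_W = 250          # quoted power per HC1 card
CARDS_PER_SERVER = 10       # quoted cards per air-cooled server


def server_summary(cards=CARDS_PER_SERVER):
    """Aggregate throughput and power, assuming linear scaling across cards."""
    tokens_per_s = cards * HC1_TOKENS_PER_S
    power_w = cards * CARD_POWER_W
    return {
        "per_user_speedup": HC1_TOKENS_PER_S / H100_TOKENS_PER_S,
        "server_tokens_per_s": tokens_per_s,
        "server_power_w": power_w,
        "tokens_per_joule": tokens_per_s / power_w,
    }


for name, value in server_summary().items():
    print(f"{name}: {value:,.1f}")
```

On these assumptions a ten-card server delivers about 170,000 tokens per second from 2.5 kW, or 68 tokens per joule at the card level, and the per-user speedup over the quoted H100 figure is roughly 113x. The claimed 1,000x efficiency gain also folds in cost and system-level power, which this arithmetic does not model.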

Breaking the 60-Day Barrier: Automated Foundry

The obvious ‘catch’ for the AI developer is flexibility. If you hardwire a model into a chip today, what happens when a better model comes out tomorrow? Historically, an ASIC (Application-Specific Integrated Circuit) took two years and millions of dollars to design.

Talas’ answer is automation. They have created a compiler-like foundry system that takes a model’s weights and produces a chip design in about a week. By focusing on a streamlined manufacturing workflow, in which only the top metal masks of the silicon change, they have reduced the ‘weights-to-silicon’ turnaround time to just two months.

This allows a ‘seasonal’ hardware cycle. A company can fine-tune a frontier model in the spring and deploy thousands of specialized, ultra-efficient inference chips by the summer.

Market Transformation: From Shovels to Stamps

This change marks a significant moment in the AI hype cycle. We are moving from the ‘research and training’ phase, where GPUs are essential for their flexibility, to the ‘deployment and inference’ phase, where cost-per-token is the only metric that matters.

If Talas is successful, the AI market will split into two distinct tiers:

- General-purpose training: Led by NVIDIA and AMD, this tier provides the massive, flexible clusters needed for exploring and training new architectures.

- Model-specific inference: Led by ‘foundries’ like Talas, which take those proven architectures and ‘print’ them in cheap, ubiquitous silicon for everything from smartphones to industrial sensors.

Key Takeaways

- ‘Hardwired’ paradigm shift: Talas is moving from Software-Defined AI (models running on general-purpose GPUs) to Hardware-Defined AI. By ‘baking’ a specific model’s weights and architecture directly into the silicon, they eliminate the need for traditional instruction-set overhead, effectively making the model the processor itself.

- Death of the memory wall: Traditional AI hardware wastes ~90% of its energy moving data between memory and compute. Talas’ HC1 (Hardcore 1) chip eliminates the ‘memory wall’ by physically wiring the model parameters into the metal layers of the chip, removing the need for expensive high-bandwidth memory (HBM).

- 1,000x efficiency leap: By removing the ‘programmability tax’, Talas claims a 1,000x improvement in performance-per-watt and performance-per-dollar. In practice, this means the HC1 can reach 17,000 tokens per second on the Llama 3.1 8B model, outperforming a standard GPU rack while using much less power.

- Automated ‘direct-to-silicon’ foundries: To solve the problem of model obsolescence, Talas uses a proprietary automated design flow. This cuts the time taken to build a custom AI chip from years to mere weeks, thereby allowing companies to ‘print’ their sophisticated models in silicon on a seasonal basis.

- Commodity AI future: This technology signals a shift from ‘cloud-first’ to ‘device-native’ AI. As inference becomes a cheap, hardwired commodity, AI will move away from centralized servers to local, low-power hardware, from smartphones to industrial sensors, with near-zero latency and no subscription costs.
