The Quen team recently released quen3-coder-next, an open-source language model designed for coding agents and native development. It sits on top of the Qwen3-Next-80B-A3B backbone. The model uses a sparse mixture-of-experts (MOE) architecture with hybrid attention. It has a total of 80B parameters, but only 3B parameters are active per token. The goal is to match the performance of very large active models while keeping inference costs low for long coding sessions and agent workflows.

The model is deployed for agentic coding, browser-based tools, and IDE copilots rather than simple code completion. Qwen3-Coder-Next is trained with a large collection of executable tasks and reinforcement learning so that it can plan, call tools, run code, and recover from runtime failures over long periods of time.

Architecture: Hybrid Attention Plus Sparse MOE

The research team describes it as a hybrid architecture that combines Gated DeltaNet, Gated Attention, and MOE.

The main configuration points are:

- Types: Causal language models, pre-training and post-training.

- Parameters: Total 80B, 79B non-embeddings.

- Active parameters: 3B per token.

- Layers: 48.

- Hidden dimension: 2048.

- Layout: 12 repetitions

3 × (Gated DeltaNet → MoE)After1 × (Gated Attention → MoE).

The gated attention block uses 16 query heads and 2 key-value heads with head dimension 256 and rotary position embedding of dimension 64. Gated DeltaNet uses 32 linear-attention heads for block values and 16 for queries and keys with head dimension 128.

The MoE layer has 512 experts, with 10 experts and 1 shared expert active per token. Every expert uses an intermediate dimension of 512. This provides strong capabilities for design specialization, while keeping active computation close to the 3B dense model footprint.

Agent Training: Executable Tasks and RL

The Quen team describes quen3-coder-next as ‘agent-trained at scale’ on top of quen3-next-80b-a3b-base. The training pipeline extensively uses executable task synthesis, interaction with the environment, and reinforcement learning.

It highlights approximately 800K verifiable tasks along with the executable environment used during training. These functions provide concrete signals for long-horizon reasoning, device sequencing, test execution, and recovery from failed runs. This aligns with a SWE-bench-style workflow rather than pure static code modeling.

Benchmarks: SWE-Bench, Terminal-Bench, and Adder

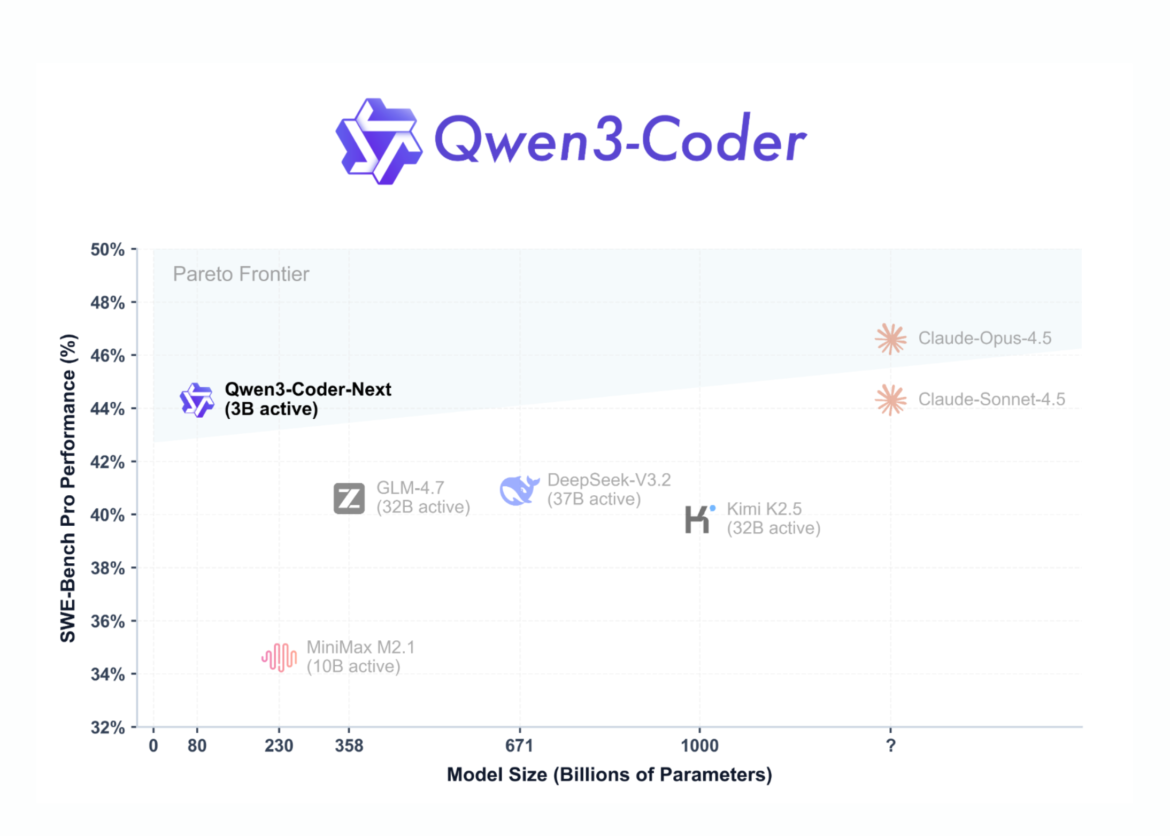

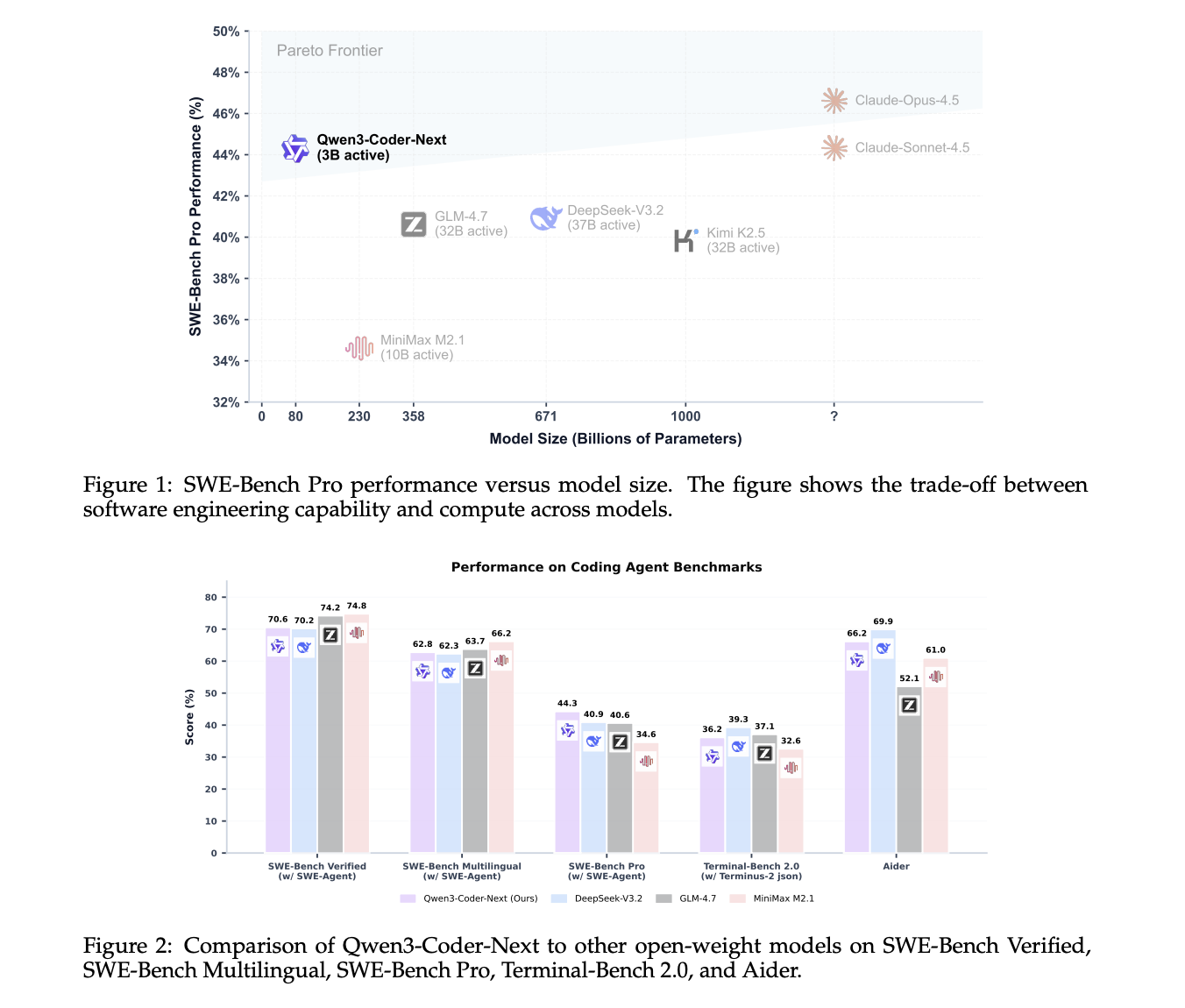

On SWE-Bench, verified using the SWE-Agent scaffold, Qwen3-Coder-Next has a score of 70.6. The score of DeepSeq-v3.2 on 671b parameters is 70.2, and the score of GLM-4.7 on 358b parameters is 74.2. On SWE-Bench Multilingual, Quen3-Coder-Next reaches 62.8, which is very close to DeepSeq-v3.2 at 62.3 and GLM-4.7 at 63.7. On the more challenging SWE-Bench Pro, Qwen3-Coder-Next scores 44.3, above DeepSeek-V3.2 with 40.9 and GLM-4.7 with 40.6.

On Terminal-Bench 2.0 with the Terminus-2 JSON scaffold, Qwen3-coder-next has a score of 36.2, which is again competitive with larger models. On the Adder benchmark, it reaches 66.2, which is close to the best models in its class.

These results support the Quen team’s claim that Quen3-Coder-Next achieves performance equivalent to models with 10–20× more active parameters, especially in coding and agentic settings.

Tool access and agent integration

Qwen3-Coder-Next is tuned for tool calling and integration with coding agents. The model is designed to plug into IDE and CLI environments such as quen-code, cloud-code, cline, and other agent frontends. 256K context lets these systems hold large codebases, logs, and conversations in a single session.

Qwen3-Coder-Next only supports non-thinking mode. Both official model cards and Unsloth Documentation stress that it does not arise

Deploy: SGLang, vLLM, and local GGUF

For server deployment, the Quen team recommends SGLang and vLLM. In SGLang, users go sglang>=0.5.8 with --tool-call-parser qwen3_coder And the default reference length of 256K tokens. In VLLM, users move vllm>=0.15.0 with --enable-auto-tool-choice And the same tool parser. Both setups highlight an OpenAI-compatible /v1 endpoint.

For local deployment, Unsloth provides GGUF quantization Qwen3-coder-next and a complete llama.cpp and llama-server workflow. The 4-bit quantified version requires approximately 46 GB of RAM or integrated memory, while the 8-bit requires approximately 85 GB. The Unsloth guide recommends a reference size of up to 262,144 tokens, with 32,768 tokens as a practical default for smaller machines.

unsloth guide It also shows how to connect Qwen3-Coder-Next to local agents emulating OpenAI codecs and cloud code. These examples rely on Llama-server with an OpenAI-compliant interface and reuse the agent prompt template while swapping the model name to Qwen3-Coder-Next.

key takeaways

- MoE architecture with less active computation: The sparse MoE design in Qwen3-Coder-Next has a total of 80B parameters, but only 3B parameters are active per token, which reduces inference cost while keeping high capacity for specialized experts.

- Hybrid focus stack for long-horizon coding: The model uses a hybrid layout of Gated DeltaNet, Gated Attention, and MOE blocks on 48 layers with 2048 hidden shapes, optimized for code editing and long-horizon reasoning in agent workflows.

- Agent training with executable tasks and RL: Qwen3-Coder-Next is trained on large-scale executable tasks and reinforcement learning on top of Qwen3-Next-80B-A3B-Base, so it can plan, call tools, run tests, and recover from failures instead of just completing short code snippets.

- Competitive performance on SWE-bench and terminal-bench: Benchmarks show that Quen3-Coder-Next reaches strong scores on SWE-Bench Verified, SWE-Bench Pro, SWE-Bench Multilingual, Terminal-Bench 2.0, and Adder, often matching or surpassing much larger MOE models with 10–20× more active parameters.

- Practical deployment for agents and local use: The model supports 256K context, non-thinking mode, OpenAI-compliant APIs via SGLang and vLLM, and GGUF volume for llama.cpp, making it suitable for IDE agents, CLI tools, and local private coding co-pilots under Apache-2.0.

check it out paper, repo, model weight And technical details. Also, feel free to follow us Twitter And don’t forget to join us 100k+ ml subreddit and subscribe our newsletter. wait! Are you on Telegram? Now you can also connect with us on Telegram.