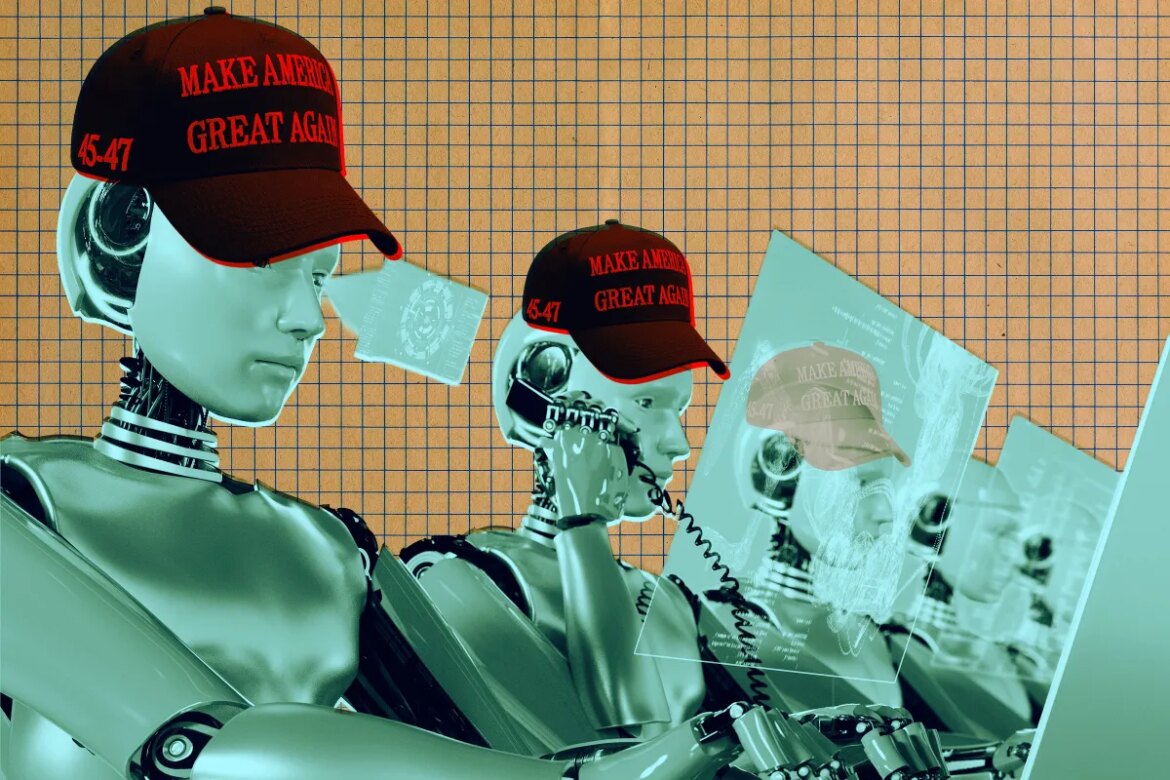

Illustration by Tag Hartman-Simkins/Futurism. Source: Getty Images

The Department of Defense may be the first government agency to introduce a department-wide AI chatbot, but the Department of Transportation is about to be the first to draft actual binding rules with the technology.

According to a new investigation by ProPublica, the top transportation agency has used Google Gemini to help write new rules affecting aviation, automotive, railroad and maritime safety. In an internal communication from DoT lawyer Daniel Cohen, the plan was presented to agency staff with a demonstration of AI’s “potential to revolutionize the way we make rules.”

Cohen enthused that the AI demonstration “will showcase the exciting new AI tools available to DOT rule writers that will help us do our jobs better and faster.”

What’s troubling is that the agency’s focus was clearly on whether the AI was fast, even if it wasn’t particularly accurate.

“We don’t need a perfect rule on XYZ. We don’t even need a very good rule on XYZ,” said Gregory Zerzan, DoT general counsel, according to ProPublica. “We want the best,” he said. “We are flooding the area.”

Additionally, Zerzan said enthusiasm for the DoT AI tool is at an all-time high, noting that Donald Trump is “very excited about this initiative.”

Six DoT staffers who spoke to ProPublica anonymously said that writing regulations can take months and sometimes years due to the complexities involved. But at the demonstration in December, a presenter told them Google’s Gemini could reduce that to “minutes or seconds.”

Zerzan, for his part, told DoT staff that the goal is to be able to implement a new regulation in as little as 30 days. “It should take you no more than 20 minutes to get the draft rules from Gemini,” he told regulators.

Asked for his opinion by ProPublica, Mike Horton, DoT’s former chief AI officer, compared the plan to “a high school intern making your rules.”

For anyone concerned about keeping trains on the tracks and planes in the skies, this is an incredibly troubling development. Large language models (LLMs) like Gemini are prone to errors called hallucinations. Gemini has been linked to a number of embarrassing incidents, such as hallucinating weddings that don’t exist or giving dangerous medical misinformation.

And, without going into detail, in April of last year Gemini users were unsettled when the chatbot suddenly started spitting out things that read like disturbing psychological breakdowns, which is the last thing you want when dealing with federal regulations on vital activities like air traffic control.
