In this article, you will learn how vector databases and graph RAGs differ as memory architectures for AI agents, and when each approach is a better fit.

Topics we’ll cover include:

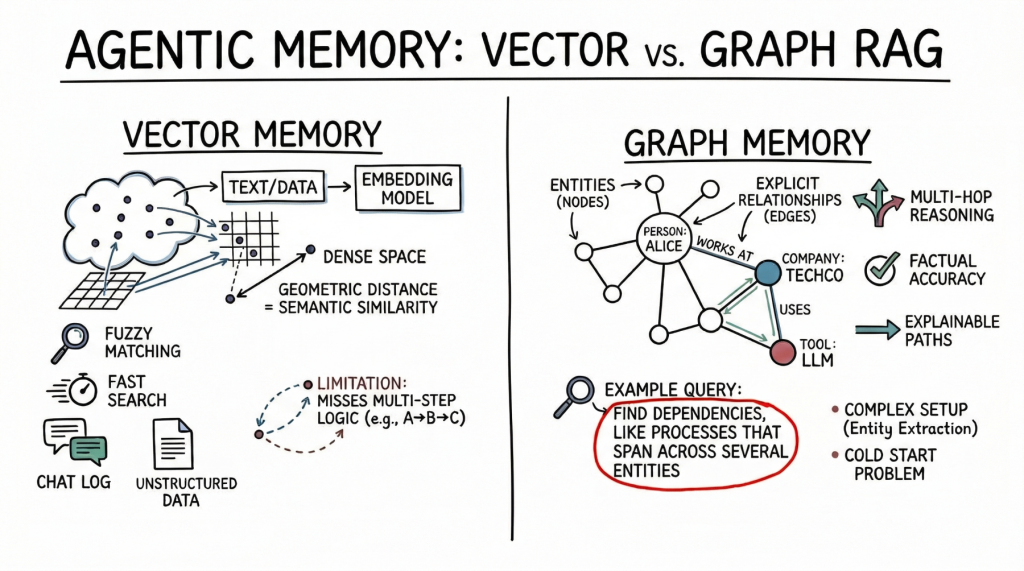

- How vector databases store and retrieve semantically similar unstructured information.

- How graph RAG represents entities and relationships for accurate, multi-hop retrieval.

- How to choose between these approaches, or combine them in a hybrid agent-memory architecture.

With that in mind, let’s get straight to it.

Vector Database vs Graph RAG for Agent Memory: When to Use Which

Image by author

Introduction

AI agents need long-term memory to be truly useful in complex, multi-step workflows. An agent without memory is essentially a stateless function that resets its context with each interaction. As we move toward autonomous systems that manage tasks continuously (such as coding assistants that track project architecture or research agents that compile ongoing literature reviews), the question of how to store, retrieve, and update context becomes central.

Vector databases, which use dense embeddings for semantic search, are currently the industry standard for this task. However, as agents need to handle more complex logic, graph RAG, an architecture that combines knowledge graphs with large language models (LLMs), is gaining traction as a structured memory architecture.

At a glance, vector databases are ideal for broad similarity matching and unstructured data retrieval, while graph RAG excels when context windows are limited and when multi-hop relationships, factual accuracy, and complex hierarchical structures are required. The difference comes down to flexible matching on the vector side versus graph RAG’s ability to reason through explicit relationships and preserve accuracy under tight constraints.

To clarify their respective roles, this article explores the underlying theory, practical strengths, and limitations of both approaches to agent memory. In doing so, it provides a practical framework to guide the choice of system, or combination of systems, to be deployed.

Vector Database: The Basis of Semantic Agent Memory

Vector databases represent memory as dense mathematical vectors, or embeddings, in a high-dimensional space. An embedding model maps text, images, or other data into arrays of floating-point numbers such that semantically similar inputs produce vectors that lie close together, so geometric closeness stands in for semantic similarity.
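To make “geometric closeness” concrete, here is a minimal sketch of cosine similarity, one common closeness measure (dot product and Euclidean distance are also used). The three-dimensional vectors are made-up toy values, not output from a real embedding model.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("a zero vector has no direction")
    return dot / (norm_a * norm_b)


# Toy 3-dimensional "embeddings" (made up); real models use hundreds or thousands of dimensions.
cat = [0.9, 0.1, 0.0]
kitten = [0.85, 0.15, 0.05]
invoice = [0.0, 0.2, 0.95]

print(f"cat vs kitten:  {cosine_similarity(cat, kitten):.3f}")
print(f"cat vs invoice: {cosine_similarity(cat, invoice):.3f}")
```

With these toy values, the cat/kitten pair scores close to 1.0 and the cat/invoice pair close to 0, which is the kind of signal a vector database ranks results by.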

AI agents primarily use this approach to store unstructured text. A common use case is storing conversation history, which lets the agent recall what the user previously asked by searching its memory bank for semantically related past exchanges. Agents also use vector stores to retrieve relevant documents, API documentation, or code snippets based on the underlying meaning of the user’s prompt, which is far more robust than relying on exact keyword matching.
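The recall pattern can be sketched as a tiny in-memory store. This is illustrative only: `toy_embed`, `ConversationMemory`, and its `remember`/`recall` methods are hypothetical names, and the bag-of-words “embedding” only measures word overlap, so a real deployment would swap in an actual embedding model and a vector database.

```python
import math
import re
from collections import Counter


def toy_embed(text: str) -> Counter:
    # HYPOTHETICAL stand-in for an embedding model: a sparse bag-of-words vector.
    # It captures shared words only, not meaning.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ConversationMemory:
    """Minimal in-memory store of past conversation turns (illustrative only)."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def remember(self, text: str) -> None:
        self._items.append((text, toy_embed(text)))

    def recall(self, query: str, k: int = 2) -> list[str]:
        """Return the k stored turns most similar to the query."""
        q = toy_embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


memory = ConversationMemory()
memory.remember("User asked how to configure the database connection pool")
memory.remember("User prefers short answers with code examples")
memory.remember("User reported a timeout in the payment service")

print(memory.recall("what did we say about the database pool?", k=1))
```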

Vector databases are a strong default for agent memory. They offer fast search across billions of vectors, typically through approximate nearest-neighbor indexes, and developers generally find them easier to set up than structured databases. To integrate a vector store, you split the text into chunks, generate an embedding for each chunk, and index the results. These databases also handle fuzzy matching well, tolerating typos and paraphrases without requiring strict queries.
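The split, embed, and index steps might look like this as a minimal sketch. Fixed word windows with overlap are one common chunking strategy among several (sentence- or token-based splitting are alternatives); the overlap helps preserve context that straddles a chunk boundary. `chunk_words`, `build_index`, and `fake_embed` are hypothetical names, and `fake_embed` is a placeholder for a real embedding model call.

```python
from typing import Callable


def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into windows of `size` words; consecutive windows share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # this window already reached the end
            break
    return chunks


def build_index(doc_id: str, text: str, embed: Callable[[str], list[float]],
                size: int = 50, overlap: int = 10) -> list[dict]:
    """Chunk, embed, and collect records ready to upsert into a vector store."""
    return [
        {"id": f"{doc_id}#{i}", "text": chunk, "embedding": embed(chunk)}
        for i, chunk in enumerate(chunk_words(text, size, overlap))
    ]


# HYPOTHETICAL placeholder embedding; a real pipeline would call an embedding model here.
def fake_embed(chunk: str) -> list[float]:
    return [float(len(chunk.split()))]


text = " ".join(f"w{i}" for i in range(12))
index = build_index("doc1", text, fake_embed, size=5, overlap=2)
for record in index:
    print(record["id"], "|", record["text"])
```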

But semantic search has limitations for advanced agent memory. Vector databases often cannot follow multi-step logic. For example, if an agent needs to find the link between entity A and entity C, but the stored data only shows that A connects to B and that B connects to C, a simple similarity search may miss the bridging fact, because neither stored passage on its own closely resembles a question about A and C.

These databases also struggle when retrieval returns large amounts of text or noisy results. With dense, interconnected facts (from software dependencies to company organizational charts), they can return information that is related but irrelevant, filling the agent’s context window with less useful data.

Graph RAG: Structured Reference and Relational Memory

Graph RAG addresses the limitations of semantic search by combining knowledge graphs with LLMs. In this paradigm, memory is structured as discrete entities represented as nodes (for example, a person, a company, or a technology), and the explicit relationships between them are represented as edges (for example, “serves” or “uses”).
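As an illustrative sketch of this data model, a knowledge graph can be held as typed nodes plus labeled, directed edges. `Edge`, `KnowledgeGraph`, and the example entities are hypothetical; production systems would typically use a graph database rather than in-memory dictionaries.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Edge:
    source: str
    relation: str  # e.g. "uses", "serves"
    target: str


class KnowledgeGraph:
    """Directed graph with typed nodes and labeled edges (illustrative, in-memory)."""

    def __init__(self) -> None:
        self.node_types: dict[str, str] = {}
        self._outgoing: dict[str, list[Edge]] = defaultdict(list)

    def add_node(self, name: str, node_type: str) -> None:
        self.node_types[name] = node_type

    def add_edge(self, source: str, relation: str, target: str) -> None:
        # Both endpoints must already exist as nodes.
        for node in (source, target):
            if node not in self.node_types:
                raise KeyError(f"unknown node: {node}")
        self._outgoing[source].append(Edge(source, relation, target))

    def neighbors(self, node: str, relation: Optional[str] = None) -> list[str]:
        """Targets of outgoing edges, optionally filtered by relation type."""
        return [e.target for e in self._outgoing.get(node, [])
                if relation is None or e.relation == relation]


kg = KnowledgeGraph()
kg.add_node("Alice", "person")
kg.add_node("Acme Corp", "company")
kg.add_node("Globex", "company")
kg.add_node("PostgreSQL", "technology")
kg.add_edge("Alice", "works_at", "Acme Corp")
kg.add_edge("Acme Corp", "uses", "PostgreSQL")
kg.add_edge("Acme Corp", "serves", "Globex")

print(kg.neighbors("Acme Corp", "uses"))
```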

Agents using graph RAG build and update a structured world model. As they gather new information, they extract entities and relationships and add them to the graph. When searching memory, they follow explicit paths to find exactly the context they need.
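The ingest-then-query loop can be sketched as follows. Real systems usually prompt an LLM to extract entities and relationships; here, purely as a stand-in, a hypothetical regular expression pulls out `<Entity> <relation> <Entity>` triples for three fixed relation phrases. `GraphMemory`, `extract_triples`, and the service names are illustrative.

```python
import re

# HYPOTHETICAL stand-in for LLM-based extraction: entities are capitalized words and
# the relation must be one of three fixed phrases.
RELATION_PATTERN = re.compile(r"\b([A-Z][\w-]*)\s+(uses|serves|depends on)\s+([A-Z][\w-]*)")


def extract_triples(text: str) -> list[tuple[str, str, str]]:
    """Return (subject, relation, object) triples, normalizing 'depends on' to 'depends_on'."""
    return [(s, rel.replace(" ", "_"), o) for s, rel, o in RELATION_PATTERN.findall(text)]


class GraphMemory:
    """Illustrative agent memory that accumulates extracted triples."""

    def __init__(self) -> None:
        self.triples: set[tuple[str, str, str]] = set()

    def ingest(self, text: str) -> int:
        """Extract triples from new text, add them, and return how many were new."""
        new = set(extract_triples(text)) - self.triples
        self.triples |= new
        return len(new)

    def lookup(self, subject: str, relation: str) -> list[str]:
        """Follow one explicit edge type from a subject."""
        return sorted(o for s, r, o in self.triples if s == subject and r == relation)


memory = GraphMemory()
added_first = memory.ingest("Checkout uses Redis. Checkout depends on Payments.")
added_second = memory.ingest("Payments uses Stripe. Checkout uses Redis.")
print(added_first, added_second)
print(memory.lookup("Checkout", "depends_on"))
```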

The main strength of Graph RAG is precision. Because retrieval follows explicit relationships rather than semantic proximity alone, the risk of pulling in spurious context is lower. If a relationship does not exist in the graph, the agent cannot infer it from the graph alone.
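One way to see the difference: nearest-neighbor search always returns its best match, even when nothing stored actually answers the question, whereas an explicit graph lookup returns an empty result when the relationship was never recorded. The sketch below uses made-up toy vectors and triples, and the function names are hypothetical.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def vector_top1(query: list[float], index: dict[str, list[float]]) -> tuple[str, float]:
    # Nearest-neighbor search always returns its best match, relevant or not.
    best = max(index, key=lambda doc: cosine(query, index[doc]))
    return best, cosine(query, index[best])


def graph_lookup(edges: set[tuple[str, str, str]], subject: str, relation: str) -> list[str]:
    # An explicit lookup returns nothing if the relation was never recorded.
    return sorted(t for s, r, t in edges if s == subject and r == relation)


# Toy data (made up): `query` stands for "What did Alice approve?"
index = {"q3_budget_memo": [0.9, 0.1, 0.0], "team_offsite_notes": [0.1, 0.9, 0.2]}
query = [0.6, 0.3, 0.7]
edges = {("Alice", "manages", "Bob"), ("Bob", "approved", "Q3 budget")}

print(vector_top1(query, index))
print(graph_lookup(edges, "Alice", "approved"))
print(graph_lookup(edges, "Bob", "approved"))
```

The vector search hands back the budget memo (which records Bob’s approval, not Alice’s), while the graph lookup for Alice returns `[]`, an unambiguous signal the agent can act on.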

Graph RAG excels at complex reasoning and is ideal for answering structured questions. To find which of a manager’s direct reports approved a budget, you trace a path through the reporting and approval chains: a simple graph traversal, but a difficult task for vector search. Explainability is another major advantage. The retrieval path is a clear, auditable sequence of nodes and edges, not an opaque similarity score, which matters for enterprise applications that require compliance and transparency.
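The budget-approval question decomposes into two explicit hops, and a breadth-first search can return the connecting path itself as an explanation. The org-chart triples and the function names `approver_among_reports` and `find_path` are hypothetical examples.

```python
from collections import deque
from typing import Optional

Triple = tuple[str, str, str]

EDGES: list[Triple] = [
    ("Dana", "manages", "Bob"),
    ("Dana", "manages", "Carol"),
    ("Carol", "approved", "Q3 marketing budget"),
    ("Bob", "approved", "Q2 travel budget"),
]


def approver_among_reports(edges: list[Triple], manager: str, budget: str) -> list[str]:
    # Hop 1: the manager's direct reports.
    reports = {t for s, r, t in edges if s == manager and r == "manages"}
    # Hop 2: which of those reports approved the budget.
    return sorted(s for s, r, t in edges if s in reports and r == "approved" and t == budget)


def find_path(edges: list[Triple], start: str, goal: str) -> Optional[list[Triple]]:
    """Breadth-first search over directed edges; returns the shortest chain of triples, or None."""
    adjacency: dict[str, list[tuple[str, str]]] = {}
    for s, r, t in edges:
        adjacency.setdefault(s, []).append((r, t))
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for relation, nxt in adjacency.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [(node, relation, nxt)]))
    return None


print(approver_among_reports(EDGES, "Dana", "Q3 marketing budget"))
path = find_path(EDGES, "Dana", "Q3 marketing budget")
print(" -> ".join(f"{s} [{r}] {t}" for s, r, t in path))
```

The printed path is the audit trail: each step names the nodes and the edge type that connected them.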

On the downside, Graph RAG introduces significant implementation complexity. It demands robust entity-extraction pipelines to parse raw text into nodes and edges, which often require carefully tuned prompts, rules, or specialized models. Developers must also design and maintain an ontology or schema, which can be rigid and difficult to evolve as new domains emerge. The cold-start problem is also prominent: unlike a vector database, which is useful as soon as you embed text, a knowledge graph requires substantial upfront effort before it can answer complex queries.
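To illustrate why a schema adds both rigor and rigidity, here is a minimal sketch of validating extracted triples against a hypothetical ontology in which each relation type declares which node types it may connect. `SCHEMA` and `validate_triple` are illustrative names.

```python
from typing import Optional

# HYPOTHETICAL ontology: each relation type declares (subject type, object type).
SCHEMA: dict[str, tuple[str, str]] = {
    "works_at": ("person", "company"),
    "uses": ("company", "technology"),
    "serves": ("company", "company"),
}


def validate_triple(triple: tuple[str, str, str], node_types: dict[str, str],
                    schema: dict[str, tuple[str, str]] = SCHEMA) -> Optional[str]:
    """Return None if the triple fits the schema, otherwise a reason for rejecting it."""
    subject, relation, obj = triple
    if relation not in schema:
        return f"unknown relation '{relation}'"
    expected_subject, expected_object = schema[relation]
    if node_types.get(subject) != expected_subject:
        return f"'{subject}' must be a {expected_subject}"
    if node_types.get(obj) != expected_object:
        return f"'{obj}' must be a {expected_object}"
    return None


node_types = {"Alice": "person", "Acme": "company", "PostgreSQL": "technology"}
for triple in [("Alice", "works_at", "Acme"), ("Acme", "uses", "Alice"), ("Acme", "acquired", "PostgreSQL")]:
    print(triple, "->", validate_triple(triple, node_types) or "ok")
```

Any relation the schema does not yet know, such as `acquired`, is rejected until someone extends the ontology, which is the maintenance burden this paragraph describes.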

Comparison Framework: When to Use Which

When architecting memory for an AI agent, keep in mind that vector databases excel at handling unstructured, high-dimensional data and are suited to similarity search, while graph RAG is better for representing entities and explicit relationships when those relationships matter. The choice should be driven by the underlying structure of the data and the expected query patterns.

Vector databases are ideally suited for completely unstructured data – huge knowledge bases built from chat logs, general documentation, or raw text. They are excellent when the query is intended to explore broad topics, such as “Find me concepts similar to X” or “What have we discussed regarding topic Y?” From a project-management perspective, they offer low setup costs and good general accuracy, making them the default choice for early-stage prototyping and general-purpose assistants.

In contrast, graph RAG is better suited to data with an underlying structure or semi-structured relationships, such as financial records, codebase dependencies, or complex legal documents. It is the appropriate architecture when questions demand precise, explicit answers, such as “How is X related to Y?” or “What are all the dependencies of this specific component?” The high setup cost and ongoing maintenance overhead of graph RAG systems are justified by their precision on specific connections, where vector search would return loosely related context and leave the agent to overgeneralize, hallucinate, or fail.
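A question like “What are all the dependencies of this specific component?” maps directly onto a transitive graph traversal. Here is a minimal sketch over a hypothetical service dependency graph stored as an adjacency list; the search tracks visited nodes so it terminates even on cyclic dependencies, and a component that shows up in its own result is part of a cycle.

```python
def all_dependencies(graph: dict[str, list[str]], component: str) -> set[str]:
    """Every direct and indirect dependency of `component` (iterative depth-first search)."""
    found: set[str] = set()
    stack = list(graph.get(component, []))
    while stack:
        dep = stack.pop()
        if dep not in found:  # skip nodes already visited, so cycles cannot loop forever
            found.add(dep)
            stack.extend(graph.get(dep, []))
    return found


# HYPOTHETICAL dependency graph: service -> services it depends on directly.
SERVICES = {
    "api": ["auth", "orders"],
    "orders": ["db", "payments"],
    "payments": ["db", "stripe-client"],
    "auth": ["db"],
}

print(sorted(all_dependencies(SERVICES, "api")))
```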

However, the future of advanced agent memory lies not in choosing one or the other, but in a hybrid architecture. Leading agentic systems are increasingly combining both approaches. A common design uses a vector database for the initial retrieval stage, performing a semantic search to locate the most relevant entry nodes within a large knowledge graph. Once those entry points are identified, the system moves on to graph traversal, extracting the exact relational context associated with those nodes. This hybrid pipeline combines the broad, fuzzy recall of vector embeddings with the strict, deterministic precision of graph traversal.
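The two-stage hybrid pipeline can be sketched end to end with toy data. Everything here is hypothetical: the node embeddings are hand-picked three-dimensional vectors standing in for real model output, and `entry_nodes`, `expand`, and `hybrid_retrieve` are illustrative names. Stage 1 ranks nodes by cosine similarity; stage 2 walks outgoing edges up to `max_hops` away from the entry points.

```python
import math
from collections import deque

# Toy data (HYPOTHETICAL): node embeddings would come from an embedding model in practice.
NODE_EMBEDDINGS = {
    "PaymentService": [0.9, 0.1, 0.0],
    "Postgres": [0.2, 0.8, 0.1],
    "FrontendApp": [0.1, 0.1, 0.9],
}
EDGES = {
    "FrontendApp": [("calls", "PaymentService")],
    "PaymentService": [("reads_from", "Postgres"), ("calls", "FraudCheck")],
    "FraudCheck": [("reads_from", "Postgres")],
}


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def entry_nodes(query_vec: list[float], k: int = 1) -> list[str]:
    # Stage 1: semantic search picks the k most similar nodes as entry points.
    ranked = sorted(NODE_EMBEDDINGS, key=lambda n: cosine(query_vec, NODE_EMBEDDINGS[n]), reverse=True)
    return ranked[:k]


def expand(seeds: list[str], max_hops: int = 2) -> list[tuple[str, str, str]]:
    # Stage 2: breadth-first traversal over outgoing edges collects explicit facts.
    facts, seen = [], set(seeds)
    queue = deque((s, 0) for s in seeds)
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue
        for relation, target in EDGES.get(node, []):
            facts.append((node, relation, target))
            if target not in seen:
                seen.add(target)
                queue.append((target, depth + 1))
    return facts


def hybrid_retrieve(query_vec: list[float], k: int = 1, max_hops: int = 2) -> list[tuple[str, str, str]]:
    return expand(entry_nodes(query_vec, k), max_hops)


for fact in hybrid_retrieve([0.8, 0.2, 0.1]):
    print(fact)
```

For a query vector close to `PaymentService`, the traversal returns the facts that the payment service reads from Postgres and calls FraudCheck, which also reads from Postgres: relational context that similarity search alone would not assemble.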

Conclusion

Vector databases remain the most practical starting point for general-purpose agent memory due to their ease of deployment and strong semantic matching capabilities. For many applications, from customer support bots to basic coding assistants, they provide adequate context retrieval.

However, as we move toward autonomous agents capable of enterprise-grade workflows, in which agents must reason over complex dependencies, ensure factual accuracy, and explain their reasoning, graph RAG becomes a critical enabler.

Developers are well advised to take a layered approach: start agent memory with a vector database for basic conversational grounding. As the agent’s reasoning requirements grow and approach the practical limits of semantic search, selectively introduce knowledge graphs to structure high-value entities and key operational relationships.