Author(s): Form

Originally published on Towards AI.

By Swaroop Hadke

1. Introduction: The Hidden Web of Network Effects

Standard A/B testing is dangerous in two-sided markets. If you treat a ride-hailing app or delivery network like a standard e-commerce store, you’re not just getting noisy data – you’re often actively destroying your network.

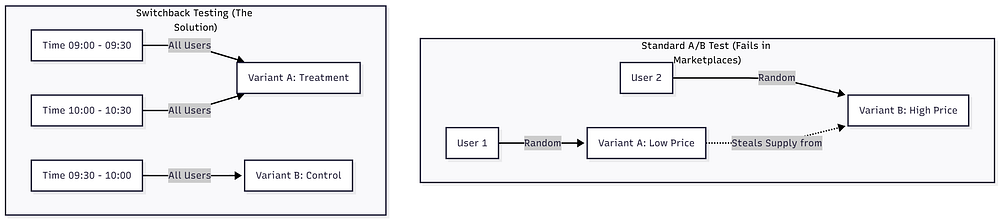

In a typical A/B test (e.g., testing a “Buy Now” button color), we rely on SUTVA (the Stable Unit Treatment Value Assumption). It assumes that the treatment assigned to user A does not affect the outcome of user B.

In a marketplace, SUTVA rarely holds.

Here’s the scenario that breaks standard testing: You want to test a new dynamic pricing algorithm designed to lower prices and boost conversions.

- Group A (Treatment) sees low prices. They naturally flood the system with orders.

- Group B (Control) sees regular prices.

Because your supply (drivers/couriers) is limited, Group A consumes all available drivers. When Group B users try to book, they face long wait times or “no driver available” errors. Group B’s performance worsens artificially because of Group A’s activity.

If you look at the raw data, your algorithm looks like a massive success. In fact, you have simply stolen resources from your control group. This phenomenon is called interference, and to solve it we need to stop randomizing by user and start randomizing by time.

This is where switchback testing becomes a necessity, not a luxury.
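To make the interference mechanism concrete, here is a minimal toy sketch using only the standard library. Everything in it is a hypothetical illustration rather than part of the project's code: the `completed_per_user` helper, the 1,000 users, the 350 drivers, and the order probabilities are all assumed values. It compares what a user-level A/B test reports against what happens when the whole market runs a single variant.

```python
import random

def completed_per_user(order_probs, n_drivers, rng):
    """Simulate one period with a shared driver pool.

    order_probs: one entry per user, the probability that the user places an order.
    Returns a 0/1 flag per user: did that user end up with a completed ride?
    """
    wants_ride = [i for i, p in enumerate(order_probs) if rng.random() < p]
    rng.shuffle(wants_ride)                # requests arrive in random order
    served = set(wants_ride[:n_drivers])   # supply runs out after n_drivers rides
    return [1 if i in served else 0 for i in range(len(order_probs))]

rng = random.Random(42)
N_USERS, N_DRIVERS = 1000, 350
P_CONTROL, P_TREATMENT = 0.6, 0.9  # hypothetical order probabilities
HALF = N_USERS // 2

# User-level A/B test: both groups compete for the SAME driver pool.
probs = [P_TREATMENT] * HALF + [P_CONTROL] * HALF
flags = completed_per_user(probs, N_DRIVERS, rng)
ab_treatment = sum(flags[:HALF]) / HALF
ab_control = sum(flags[HALF:]) / HALF

# Ground truth: the whole market on one variant at a time.
all_control = sum(completed_per_user([P_CONTROL] * N_USERS, N_DRIVERS, rng)) / N_USERS
all_treatment = sum(completed_per_user([P_TREATMENT] * N_USERS, N_DRIVERS, rng)) / N_USERS

print(f"A/B test:     treatment={ab_treatment:.3f}  control={ab_control:.3f}")
print(f"Full rollout: treatment={all_treatment:.3f}  control={all_control:.3f}")
```

In this toy setup the A/B comparison reports a large gap in completed rides per user, yet the full-rollout numbers are identical because drivers are the binding constraint. The apparent lift comes from treatment users taking rides away from control users.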

2. Solution: Changing the unit of randomization

The switchback test (or time-split test) solves the problem of interference by switching the entire market between “control” and “treatment” at specific time intervals.

Instead of User A getting one price and User B getting another price simultaneously, everyone in the market gets the treatment logic for a 30-minute window, and then everyone gets the control logic for the next 30 minutes.

This clusters the intervention within time blocks, allowing us to compare the performance of “treatment windows” with “control windows.”

Visualizing the Split

Here’s how the randomization logic differs.

By separating variants in time, we ensure that the available supply during the “control” period reflects (mostly) natural market conditions, not distorted by simultaneous competing algorithms.
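As a rough sketch of what window-level assignment can look like, the snippet below builds a two-week schedule of 30-minute windows and flips a coin for each one. The function name `build_switchback_schedule`, the start date, and the seed are assumptions made for illustration. The `window_start` and `variant` column names follow the ones used in this article's code. Independent coin flips are only one option; alternating or blocked assignment would be equally valid designs.

```python
import numpy as np
import pandas as pd

def build_switchback_schedule(start, days=14, window_minutes=30, seed=0):
    """Assign every time window to 'Control' or 'Treatment' at random."""
    rng = np.random.default_rng(seed)
    n_windows = days * 24 * 60 // window_minutes
    window_starts = pd.date_range(
        start=start, periods=n_windows, freq=f"{window_minutes}min"
    )
    # One draw per window: the whole market shares the variant for that window.
    variants = rng.choice(["Control", "Treatment"], size=n_windows)
    return pd.DataFrame({"window_start": window_starts, "variant": variants})

schedule = build_switchback_schedule("2024-01-01")  # hypothetical start date
print(schedule.head())
print(schedule["variant"].value_counts())
```

Every request that arrives inside a window inherits that window's variant, so no two users ever see competing algorithms at the same moment.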

3. Build: Simulating a Marketplace in Python

Testing experimental frameworks on live production traffic is risky (and expensive). To prove the efficacy of this architecture, I created a Python-based experiment engine.

The core of this project is the MarketplaceSimulator class. It models the delicate balance of a two-sided economy:

- Drivers (Supply): prioritize higher earnings (surge pricing).

- Users (Demand): prioritize low cost.

The goal of the simulation was to test a “surge pricing” algorithm. The hypothesis? Increasing the price slightly will reduce user conversion, but will increase driver acceptance so much that the overall outcome, the Order Completion Rate (OCR), will be better.

Below is the main logic of my simulation engine. It shows how the system “switches” behavior depending on the variant assigned to the current time window.

    # Simplified logic from simulation.py
    # (`schedule` and `base_price` are assumed to be defined earlier in the file)
    import numpy as np

    for _, row in schedule.iterrows():
        variant = row['variant']  # 'Control' or 'Treatment' based on time window

        # ... (Demand generation logic omitted for brevity) ...

        # The Core Trade-off Mechanism
        if variant == 'Treatment':
            # Treatment: Surge Pricing applied
            # Result: Users convert less, but Drivers accept more
            price = base_price * np.random.uniform(1.0, 1.2)
            driver_acceptance_prob = 0.85
            user_conversion_prob = 0.70
        else:
            # Control: Base Pricing
            # Result: Users convert more, but Drivers are pickier
            price = base_price
            driver_acceptance_prob = 0.75
            user_conversion_prob = 0.75

        # Determine the Outcome
        # A ride is only 'Completed' if BOTH sides agree
        driver_found = bool(np.random.random() < driver_acceptance_prob)
        user_accepted = bool(np.random.random() < user_conversion_prob)
        is_completed = bool(driver_found and user_accepted)

In this simulation, the treatment solves a friction point for the marketplace (supply availability) but creates a friction point for the user (price).
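The trade-off can be checked with simple arithmetic. Because the simulation draws driver acceptance and user conversion independently, the expected OCR of each variant is the product of its two probabilities. The sketch below uses the probabilities from the simulation logic above; the `expected_ocr` helper and the dictionary layout are illustrative names, not part of the project.

```python
# Probabilities taken from the simulation logic in this article.
control = {"driver_acceptance": 0.75, "user_conversion": 0.75}
treatment = {"driver_acceptance": 0.85, "user_conversion": 0.70}

def expected_ocr(probs):
    # A ride completes only if both sides agree, and the two draws are independent.
    return probs["driver_acceptance"] * probs["user_conversion"]

ocr_control = expected_ocr(control)
ocr_treatment = expected_ocr(treatment)
relative_lift = ocr_treatment / ocr_control - 1

print(f"Control OCR:   {ocr_control:.4f}")
print(f"Treatment OCR: {ocr_treatment:.4f}")
print(f"Relative lift: {relative_lift:.2%}")
```

The expected OCR is 0.5625 for control and 0.595 for treatment, a relative lift of roughly 5.8%. That is the true effect baked into the simulator, and the one the analysis should recover.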

4. Mathematics: Why Aggregation is Important

If you run this simulation for two weeks, you can generate 10,000 individual ride requests.

- 5,000 treatment rides

- 5,000 control rides

A junior data scientist might be tempted to run a t-test on these 10,000 rows. This is statistically invalid.

Why? Because rides happening within the same 30-minute window are autocorrelated. If it starts raining at 9:15 in the morning, all rides in that window are affected. They are not independent samples.

To correctly calculate the p-value, we need to aggregate our data to the unit of randomization, which in a switchback test is the time window.

Instead of comparing 10,000 rides, we compare the means of n time windows (for example, 14 days = 336 hours = 672 windows of 30 minutes).
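One way to see the cost of ignoring this is an A/A simulation, where there is no true effect and any “significant” result is a false positive. The sketch below is a hypothetical illustration, not the project's code: the function name, the window counts, and the size of the per-window shock (a stand-in for the rain example) are all assumed. It runs the same Welch's t-test twice, once on individual rides and once on window means.

```python
import numpy as np
from scipy import stats

def false_positive_rates(n_sims=300, n_windows=100, rides_per_window=50, seed=0):
    """A/A simulation: no treatment effect, but rides share a per-window shock."""
    rng = np.random.default_rng(seed)
    row_hits = 0
    window_hits = 0
    for _ in range(n_sims):
        is_treat = rng.random(n_windows) < 0.5  # variant assigned per window
        # Shared completion probability per window (e.g. weather), same in both arms.
        window_p = np.clip(rng.normal(0.6, 0.15, n_windows), 0.05, 0.95)
        completed = rng.random((n_windows, rides_per_window)) < window_p[:, None]

        # Invalid: treat every ride as an independent sample.
        rows_t = completed[is_treat].ravel().astype(float)
        rows_c = completed[~is_treat].ravel().astype(float)
        p_rows = stats.ttest_ind(rows_t, rows_c, equal_var=False).pvalue
        row_hits += int(p_rows < 0.05)

        # Valid: one observation (the window's OCR) per unit of randomization.
        win_ocr = completed.mean(axis=1)
        p_windows = stats.ttest_ind(
            win_ocr[is_treat], win_ocr[~is_treat], equal_var=False
        ).pvalue
        window_hits += int(p_windows < 0.05)
    return row_hits / n_sims, window_hits / n_sims

row_fpr, window_fpr = false_positive_rates()
print(f"Row-level false positive rate:    {row_fpr:.2f}")
print(f"Window-level false positive rate: {window_fpr:.2f}")
```

Under these assumptions the row-level test flags “significant” differences far more often than the nominal 5%, while the window-level test stays close to it. The exact rates depend on how strong the shared shock is and how many rides fall in each window.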

Analysis Implementation

Here is how I implemented the aggregation and statistical testing in analysis.py. Notice how we group by window_start before running the test.

    from scipy import stats

    def analyze_experiment(df):
        # 1. CRITICAL: Aggregate metrics by the Window
        # (The Unit of Randomization)
        window_metrics = df.groupby(['window_start', 'variant']).agg({
            'request_id': 'count',
            'is_completed': 'sum',
            'order_value': 'sum'
        }).reset_index()

        # Calculate Ratios per window (e.g., Order Completion Rate)
        window_metrics['ocr'] = window_metrics['is_completed'] / window_metrics['request_id']

        # 2. Separate Groups
        control_windows = window_metrics[window_metrics['variant'] == 'Control']
        treatment_windows = window_metrics[window_metrics['variant'] == 'Treatment']

        # 3. Statistical Test (Welch's t-test on Window Means)
        # We test the means of the *windows*, not the individual rides.
        t_stat, p_value = stats.ttest_ind(
            treatment_windows['ocr'],
            control_windows['ocr'],
            equal_var=False
        )

        return p_value, window_metrics

By aggregating to one observation per window, we analyze the data at the unit of randomization, which brings the samples much closer to the independence that Welch’s t-test assumes.

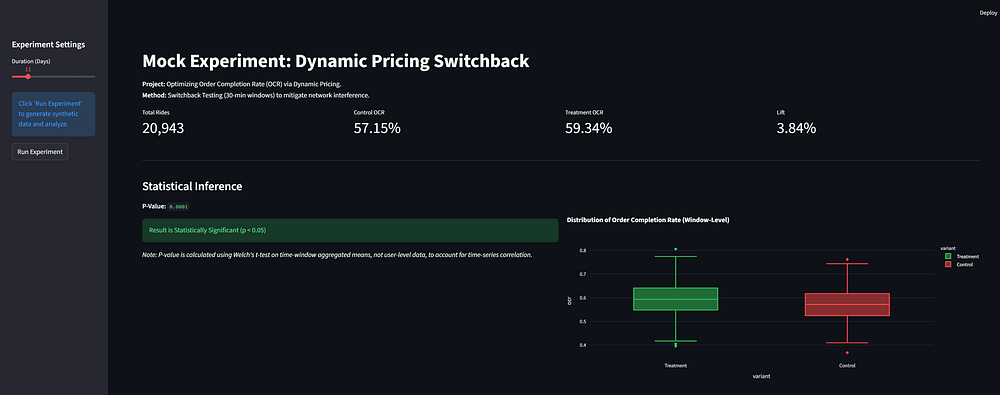

5. Visualizing the results

To make these results accessible to stakeholders, the final step of the project was to pipe these metrics into a Streamlit dashboard.

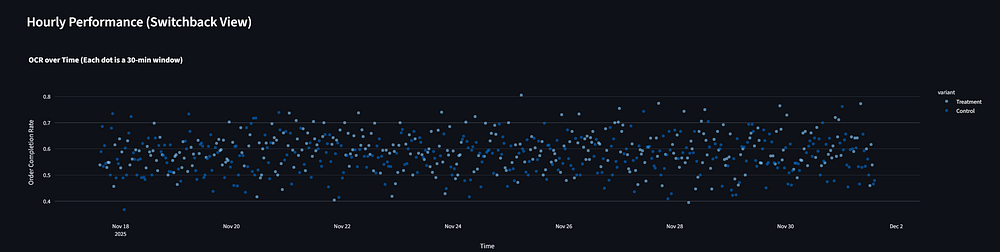

The dashboard visualizes:

- Global Lift: percentage improvement in Order Completion Rate (OCR) and Gross Merchandise Value (GMV).

- Significance: a clear indicator of whether the p-value is < 0.05.

- Time Series: a chart showing how the metrics fluctuated over the 14-day period.

6. Advanced Ideas and Limitations

While switchback testing solves the interference problem, it introduces a new one: carryover effects.

If you raise prices at 9:55 am (treatment) to attract drivers, those drivers are still likely to be in the area at 10:05 am, when the system returns to normal prices (control). The “control” window benefits from supplies accumulated by the “treatment” window.

Solution: Sophisticated experiment platforms implement a “burn-in” (or washout) period. When calculating metrics, we discard data from the first 5-10 minutes of each window. This allows market conditions to reset and stabilize before we start measuring the impact of the new variant.
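A burn-in filter can be a single step applied before aggregation. The sketch below is a minimal version under assumptions: the `drop_burn_in` helper and the `requested_at` timestamp column are hypothetical names, while `request_id`, `window_start`, and `variant` follow the columns used in the analysis code in this article.

```python
import pandas as pd

def drop_burn_in(df, burn_in_minutes=5):
    """Keep only requests made at least `burn_in_minutes` after their window began."""
    elapsed = df["requested_at"] - df["window_start"]
    return df[elapsed >= pd.Timedelta(minutes=burn_in_minutes)].copy()

# Four hypothetical requests inside a single 10:00-10:30 control window.
rides = pd.DataFrame({
    "request_id": [1, 2, 3, 4],
    "window_start": pd.to_datetime(["2024-01-01 10:00"] * 4),
    "requested_at": pd.to_datetime([
        "2024-01-01 10:02",
        "2024-01-01 10:05",
        "2024-01-01 10:17",
        "2024-01-01 10:29",
    ]),
    "variant": ["Control"] * 4,
})

clean = drop_burn_in(rides, burn_in_minutes=5)
print(clean["request_id"].tolist())
```

With a 5-minute burn-in, the request at 10:02 is discarded and the other three are kept. A longer burn-in removes more contaminated data but leaves fewer rides per window, so the right length depends on how quickly supply rebalances in the market being tested.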

7. Conclusion: Moving on from “move fast and break things.”

The creation of this simulation highlighted an important shift in our approach to data science in mature technology areas. In the early days, simple predictive modeling and “move fast” A/B testing were enough to catch the low-hanging fruit.

Today, marketplaces leave very little margin for error. The algorithms that manage pricing and dispatch are so interconnected that they cannot be tested with simple segmentation methods. We need to move toward causal inference engines that respect the physics of supply and demand.

The Python code I’ve shared here, especially the shift from row-level analysis to window-aggregated analysis, isn’t just a statistical safeguard; it is a requirement for validity. If you can’t trust your control group, you can’t trust your lift either. And in a business that operates at scale, a false positive on a pricing algorithm isn’t just a bad experiment: it’s millions in lost revenue.

This article is based on a portfolio project demonstrating causal inference in Python. The full source code for the simulation and analysis engine is available on my GitHub.
