Startups are under constant pressure to ship products that are simple, affordable, and scalable without compromising performance or user trust. For years, AI systems have relied heavily on cloud infrastructure to meet these demands.

This approach works, but it comes with real trade-offs: increased computation costs, latency, and growing concerns around data privacy.

Increasingly, machine learning workloads are moving away from centralized clouds and closer to where the data is generated, on the device itself. This shift toward edge computing is accelerating and reshaping the way startups design and launch their MVPs.

💡 Edge AI offers a practical middle ground, delivering strong performance, lower operating costs, and better privacy protections from day one.

What is Edge AI and why is it important for startups?

Edge AI refers to running machine learning models directly on devices such as smartphones, sensors, gateways, or edge servers instead of sending data to a remote cloud for processing (Xi et al., 2016).

Processing data locally enables low-latency, real-time decision making while reducing reliance on constant network connectivity. It also significantly reduces cloud compute and data transfer costs.

Recent advances in specialized hardware have made this approach feasible sooner than expected. Platforms like Apple’s Neural Engine, Google’s Edge TPU, and modern microcontrollers now provide enough on-device compute to support practical inference at scale (Zhang et al., 2021).

For startups, this matters because speed and cost discipline cannot be compromised. Cloud-based AI pipelines can become increasingly expensive, especially as usage grows.

Edge AI removes much of that overhead, allowing teams to deliver intelligent features without huge infrastructure bills.

Key Benefits of Edge AI for MVPs

- Low cost and low burn rate

Running inference on-device eliminates recurring cloud compute costs for many use cases. For early-stage startups, where runway and margins are tight, this can have a meaningful impact on sustainability (Government, 2020).

- Enhanced privacy and security

User expectations regarding privacy have changed, especially in areas governed by the GDPR and CCPA. Processing data locally reduces compliance risks and builds trust, especially in sensitive areas like healthcare or finance.

In an environment where users are wary of large cloud providers, local processing can become a competitive advantage rather than just a technical detail (Sicari et al., 2015).

- Real-time performance

Applications such as personalized recommendations, health monitoring, and predictive systems rely on fast response times. Cloud-based inference introduces unavoidable latency due to network round trips.

Edge AI avoids that bottleneck, enabling real-time decision making and a responsive experience that users notice immediately, even if they can’t articulate why (Zhang et al., 2021).

Why now? Strategic timing for Edge AI adoption

The time is especially ripe for edge-first MVPs. Consumer hardware is moving fast, and AI acceleration is now a core feature rather than a specialized capability.

Apple, Qualcomm, and Intel have introduced dedicated neural processing units in recent product roadmaps, designed to support fast, energy-efficient on-device inference and lower the barrier to edge deployment.

At the same time, development tooling has matured. Frameworks such as TensorFlow Lite, PyTorch Mobile, and ONNX Runtime reduce friction in deploying and maintaining models across different devices.

What once required highly specialized teams can now be handled by a small, focused engineering group, which matches the profile of most early-stage companies.

Challenges and how startups can overcome them

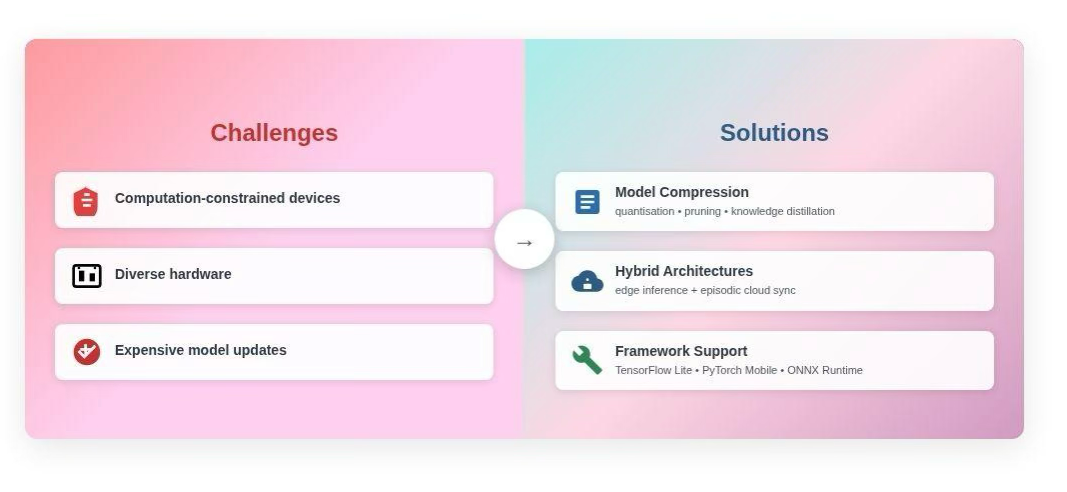

Edge AI comes with obstacles. Limited computation capacity, device fragmentation, and model update complexity are common concerns. However, these challenges are increasingly well understood and manageable.

Model compression techniques, such as quantization, pruning, and knowledge distillation, allow models to run efficiently on restricted hardware without unacceptable accuracy loss.
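To make the quantization idea concrete, here is a minimal sketch of affine int8 quantization in plain Python. It is an illustration only: the function names `quantize_int8` and `dequantize_int8` are hypothetical, and production toolchains apply the same idea per tensor or per channel with calibration data rather than on a flat list of floats.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float, int]:
    """Map float weights to int8 values; returns (values, scale, zero_point)."""
    # Extend the range to include 0.0 so that zero stays representable.
    w_min = min(min(weights), 0.0)
    w_max = max(max(weights), 0.0)
    if w_max == w_min:
        # All weights are zero: nothing to scale.
        return [0] * len(weights), 1.0, 0

    # 256 int8 levels span the float range [w_min, w_max].
    scale = (w_max - w_min) / 255.0
    zero_point = round(-128 - w_min / scale)

    # Round to the nearest level and clamp to the int8 range.
    values = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return values, scale, zero_point


def dequantize_int8(values: List[int], scale: float, zero_point: int) -> List[float]:
    """Recover approximate float weights from their int8 representation."""
    return [(v - zero_point) * scale for v in values]


if __name__ == "__main__":
    original = [-0.8, -0.1, 0.0, 0.3, 1.2]
    q, scale, zp = quantize_int8(original)
    restored = dequantize_int8(q, scale, zp)
    worst = max(abs(a - b) for a, b in zip(original, restored))
    print(f"int8 values: {q}")
    print(f"worst-case reconstruction error: {worst:.5f} (scale = {scale:.5f})")
```

Each weight shrinks from a 32-bit float to a single byte, and the reconstruction error stays on the order of one quantization step (`scale`). Whether that loss is acceptable depends on the model and has to be measured on real validation data.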

Hybrid architectures, which combine on-device inference with periodic cloud synchronization, provide a practical balance between performance and flexibility.
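One way to picture the hybrid pattern is a small client that always answers from the local model and uploads buffered results only when a sync succeeds. The sketch below is an assumption-laden illustration: `HybridEdgeClient`, `predict`, and `upload` are hypothetical names, and the model and network layer are passed in as plain callables rather than tied to any real SDK.

```python
import time
from collections import deque
from typing import Any, Callable, Deque, Dict, List


class HybridEdgeClient:
    """Answers from a local model and syncs buffered results in batches."""

    def __init__(
        self,
        predict: Callable[[Any], Any],               # hypothetical on-device model
        upload: Callable[[List[Dict[str, Any]]], bool],  # hypothetical cloud call
        sync_interval_s: float = 300.0,
        max_buffer: int = 1000,
    ) -> None:
        self.predict = predict
        self.upload = upload
        self.sync_interval_s = sync_interval_s
        # Bounded buffer: the oldest entries are dropped if the device stays offline.
        self.buffer: Deque[Dict[str, Any]] = deque(maxlen=max_buffer)
        self.last_sync = time.monotonic()

    def handle(self, features: Any) -> Any:
        """Run inference locally; the result never waits on the network."""
        result = self.predict(features)
        # Only the result is queued for sync, not the raw input data.
        self.buffer.append({"ts": time.time(), "result": result})
        if time.monotonic() - self.last_sync >= self.sync_interval_s:
            self.try_sync()
        return result

    def try_sync(self) -> bool:
        """Upload buffered results; keep them if the upload fails."""
        if not self.buffer:
            self.last_sync = time.monotonic()
            return True
        batch = list(self.buffer)
        try:
            ok = bool(self.upload(batch))
        except OSError:
            # Covers connection errors and timeouts; retry on a later call.
            ok = False
        if ok:
            for _ in range(len(batch)):
                self.buffer.popleft()
            self.last_sync = time.monotonic()
        return ok
```

The design choice worth noting is that `handle` returns the local result regardless of what happens to the sync, so a flaky connection degrades analytics freshness but not the user-facing feature.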

💡 Improved framework support continues to resolve many device-specific deployment issues, reducing operational burden.

When thoughtfully implemented, these approaches allow startups to benefit from edge deployments without accumulating long-term technical debt.

Edge AI and the New MVP Playbook

Traditional MVPs often prioritize speed to market through cloud-based services. Despite being faster to deploy, those systems can be expensive to scale and sensitive to latency or connectivity problems. Edge AI changes that equation.

By moving inference to the device, startups can create MVPs that:

- Operate reliably in low-connectivity environments, opening up access to underserved markets

- Protect user data by default, strengthening trust in regulated sectors

- Provide the immediate feedback needed for AR/VR, robotics, and wearable technologies

For example, a remote patient monitoring system deployed in rural areas can trigger alerts immediately when abnormal vital signs are detected, even without reliable Internet access.

Agricultural sensors away from central infrastructure can optimize irrigation in real time. These are practical benefits available today, not hypothetical scenarios.
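The patient-monitoring case can be sketched as a purely local check that compares each new reading with a rolling baseline and raises an alert without any network call. This is a toy illustration, not a clinical algorithm: the class name `VitalSignMonitor`, the z-score rule, and every default threshold are assumptions chosen for readability.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque


class VitalSignMonitor:
    """Flags readings that deviate sharply from a rolling local baseline."""

    def __init__(
        self,
        window: int = 30,          # number of recent readings in the baseline
        z_threshold: float = 3.0,  # hypothetical alert threshold
        min_samples: int = 10,     # readings required before alerting
        min_sigma: float = 1.0,    # floor to avoid dividing by ~0 on flat signals
    ) -> None:
        self.readings: Deque[float] = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.min_samples = min_samples
        self.min_sigma = min_sigma

    def check(self, value: float) -> bool:
        """Return True if the reading should trigger an immediate local alert."""
        is_alert = False
        if len(self.readings) >= self.min_samples:
            baseline = mean(self.readings)
            sigma = max(pstdev(self.readings), self.min_sigma)
            is_alert = abs(value - baseline) / sigma > self.z_threshold
        if not is_alert:
            # Keep anomalous readings out of the baseline.
            self.readings.append(value)
        return is_alert


if __name__ == "__main__":
    monitor = VitalSignMonitor()
    for bpm in [70, 71, 72, 73, 74] * 4:
        monitor.check(bpm)
    if monitor.check(140):
        print("alert: abnormal heart rate detected on-device")
```

Because the decision is made entirely on the device, the alert fires even when the uplink is down; the reading itself can be forwarded to the cloud later, once connectivity returns.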

Looking ahead: The edge as the new cloud

Industry forecasts point to continued decentralization of data processing. Gartner estimates that by 2025, 75% of enterprise-generated data will be processed outside of traditional centralized data centers (Gartner, 2021). This represents a structural shift in how intelligent systems are delivered, not just a marginal optimization.

For startups, the path is clear: Edge AI is not only a cost-saving measure; it is a strategic design choice. Teams that adopt an edge-first architecture early can differentiate on performance, privacy, and user experience, factors that increasingly determine whether an MVP gains traction or stalls.

As hardware improves and development tools become more accessible, edge computing is likely to become the default foundation for intelligent products rather than the exception.