Image by editor

# Introduction

procurement labeled data – that is, ground truth target labeled data – is a fundamental step for building most supervised machine learning models such as random forests, logistic regression, or neural network-based classifiers. Although a major difficulty in many real-world applications is obtaining sufficient amounts of labeled data, even after checking that box many times, there may be another significant challenge: class imbalance.

class imbalance Occurs when a labeled dataset contains classes with a very unequal number of observations, usually with one or more classes being greatly under-represented. This issue often gives rise to problems when building machine learning models. Put another way, training a predictive model like a classifier on imbalanced data leads to issues like biased decision boundaries, poor recall on the minority class, and deceptively high precision, which in practice means that the model performs well “on paper” but, once deployed, fails in the critical cases we care about most – fraud detection in bank transactions is a clear example of this, with about 99% of transactions being legitimate. Due to this the transaction datasets are extremely imbalanced.

Synthetic minority over-sampling technique (SMOTE) A data-centric resampling technique tackles this problem by generating new samples belonging to the minority class, for example fraudulent transactions, through interpolation techniques between existing real examples.

This article briefly introduces SMOTE and later explains how to implement it correctly, why it is often used incorrectly, and how to avoid these situations.

# What is SMOTE and how does it work

SMOTE is a data augmentation technique to address class imbalance problems in machine learning, especially in supervised models such as classifiers. In classification, when at least one class is significantly underrepresented compared to others, the model can easily be biased towards the majority class, leading to poor performance, especially when it comes to predicting rare classes.

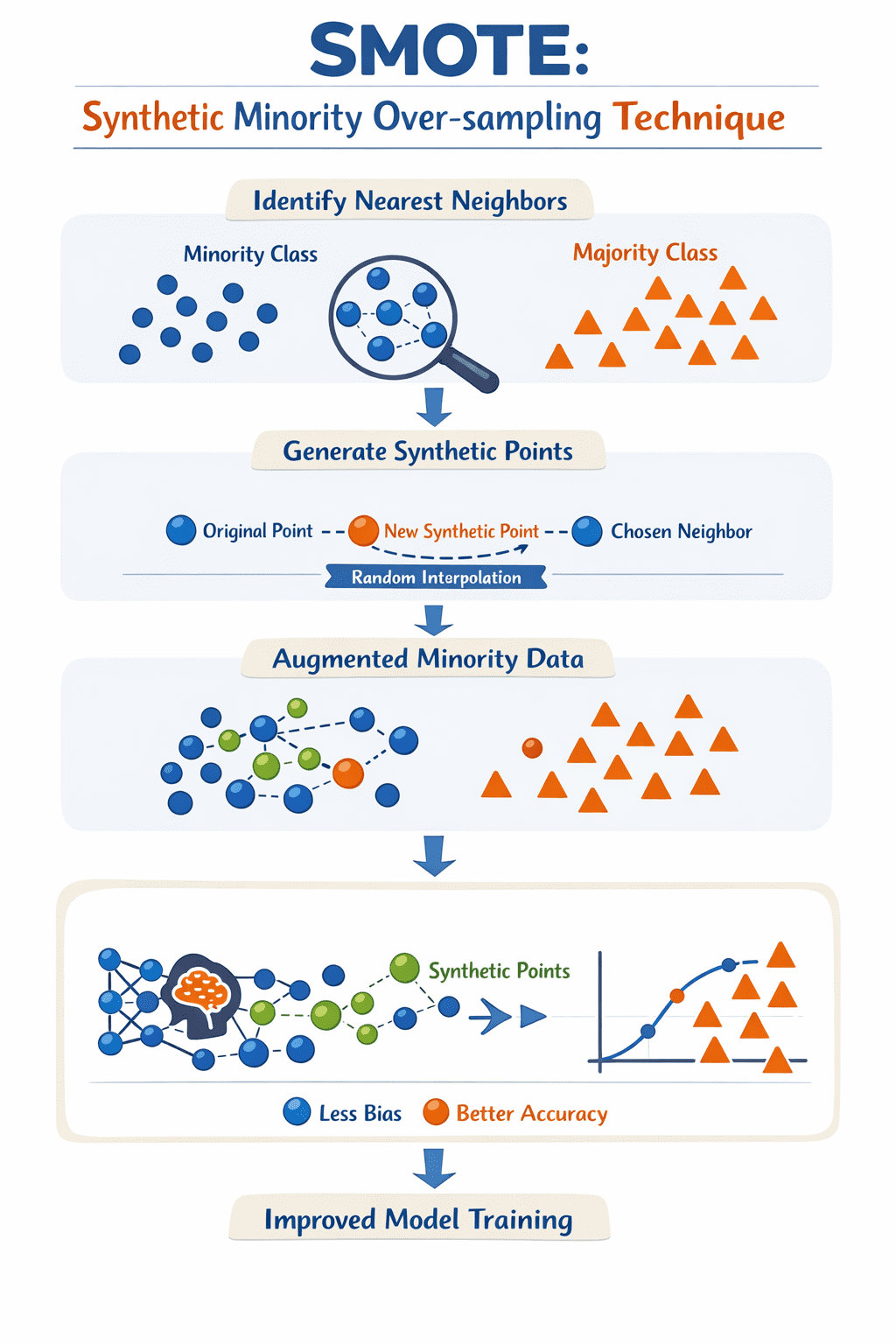

To address this challenge, SMOTE creates synthetic data examples for the minority class, not by simply copying existing examples, but by interpolating a sample between the minority class and its nearest neighbors in the space of available features: this process is, in essence, effectively “filling in” the gaps in the regions around which existing minority examples move, thus helping to balance the dataset as a result.

SMOTE iterates over each minority example, identifies its nearest neighbors, and then generates a new synthetic point along the “line” between the sample and a randomly chosen neighbor. The result of iteratively applying these simple steps is a new set of examples of the minority class, so that the process of training the model can be based on a richer representation of the minority class in the dataset, and resulting in a more effective, less biased model.

How does SMOTE work? Image by author

# Correctly implementing SMOTE in Python

To avoid the data leakage issues mentioned earlier, it is best to use a pipeline. unbalanced-learning The library provides a pipeline object that ensures that SMOTE is only applied to the training data during each step of cross-validation or during a simple hold-out split, leaving the test set untouched and representative of real-world data.

The following example shows how to integrate SMOTE machine learning Using Workflow scikit-learn And imblearn: :

from imblearn.over_sampling import SMOTE

from imblearn.pipeline import Pipeline

from sklearn.model_selection import train_test_split

from sklearn.ensemble import RandomForestClassifier

from sklearn.metrics import classification_report

# Split data into training and testing sets first

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

# Define the pipeline: Resampling then modeling

# The imblearn Pipeline only applies SMOTE to the training data

pipeline = Pipeline((

('smote', SMOTE(random_state=42)),

('classifier', RandomForestClassifier(random_state=42))

))

# Fit the pipeline on training data

pipeline.fit(X_train, y_train)

# Evaluate on the untouched test data

y_pred = pipeline.predict(X_test)

print(classification_report(y_test, y_pred))by using PipelineYou ensure that change occurs only within the training context. This prevents synthetic information from “bleeding” into your evaluation set, providing a more honest assessment of how your model will handle unbalanced classes in production.

# Common misuses of SMOTE

Let’s look at three common ways in which SMOTE is misused machine learning Workflows, and how to avoid these misuses:

- Applying SMOTE before splitting the dataset into training and testing sets: This is a very common error that inexperienced data scientists can make frequently (and in most cases accidentally). Creates new synthetic examples based on SMOTE all available dataAnd subsequently injecting synthetic points into both the training and testing partitions is a “not so perfect” recipe for artificially unrealistically increasing model evaluation metrics. The correct approach is simple: first split the data, then apply SMOTE only on the training set. Are you also thinking about implementing k-fold cross-validation? Even better.

- Over-balancing: Another common error is to blindly resample until there is an exact match between class proportions. In many cases, achieving that perfect balance is not only unnecessary, but may also be counterproductive and unrealistic given the domain or class structure. This is especially true in multiclass datasets with many sparse minority classes, where SMOTE may end up creating synthetic examples that exceed boundaries or lie in areas where no real data examples are found: in other words, noise may be inadvertently introduced, with potentially unwanted consequences such as model overfitting. The general approach is to work slowly and try to train your model with subtle, incremental increases in the proportion of the minority class.

- Ignoring the context of metrics and models: The overall accuracy metric of a model is an easy to obtain and interpretable metric, but it can also be a misleading and “hollow metric” that does not reflect your model’s inability to detect minority class cases. This is a significant issue in high-risk sectors such as banking and healthcare, with scenarios such as detecting rare diseases. Meanwhile, SMOTE can help improve the dependability on metrics like recall, but it can reduce its counterpart, precision, by introducing noisy synthetic samples that may be misaligned with business goals. To accurately evaluate not only your model, but also the effectiveness of SMOTE in its performance, focus on metrics such as joint recall, F1-score, Matthews correlation coefficient (MCC, the “summary” of the entire confusion matrix), or precision-recall area under the curve (PR-AUC). Similarly, consider alternative strategies such as class weighting or threshold tuning as part of the application of SMOTE to further increase effectiveness.

# concluding remarks

This article revolves around SMOTE: a commonly used technique to address class imbalance in building some machine learning classifiers based on real-world datasets. We’ve identified some common misuses of this technology and offered practical advice on how to avoid them.

ivan palomares carrascosa Is a leader, author, speaker and consultant in AI, Machine Learning, Deep Learning and LLM. He trains and guides others in using AI in the real world.