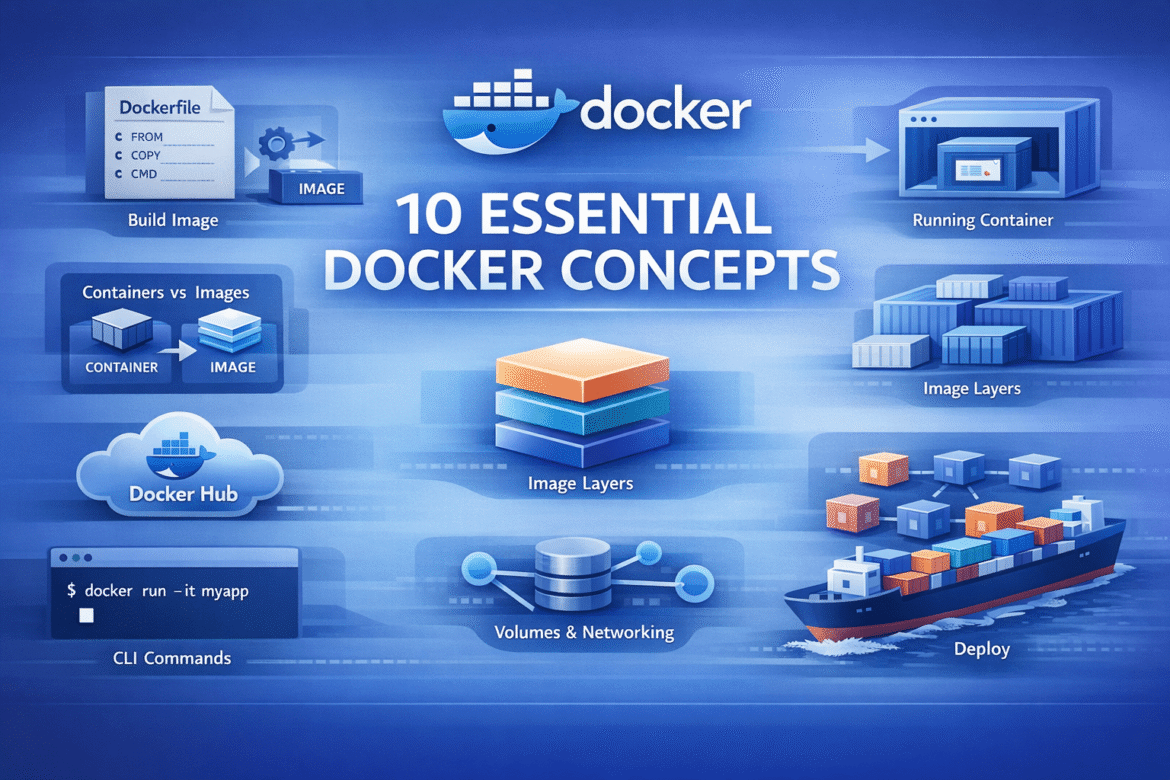

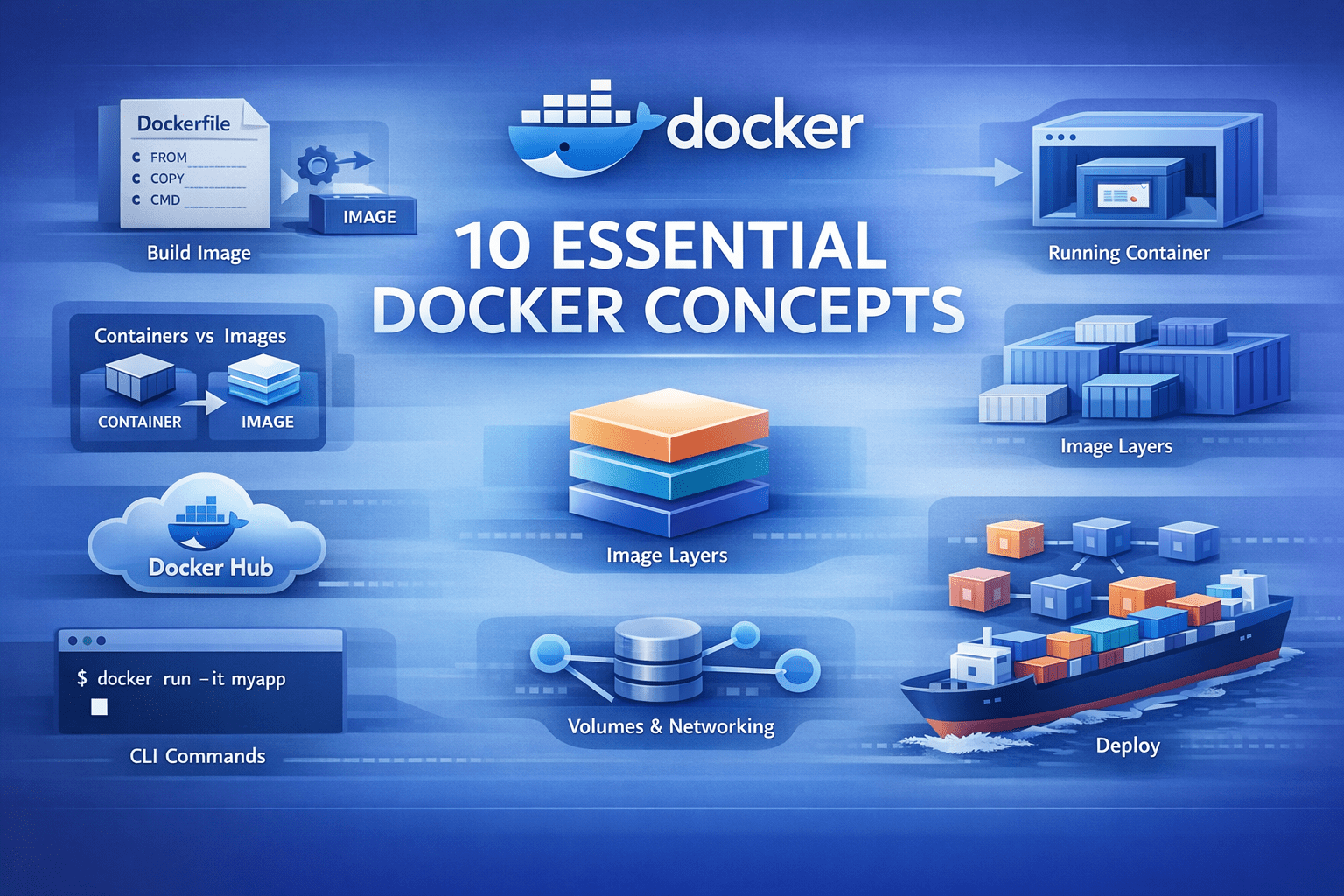

Image by author

# Introduction

Docker has simplified the way you build and deploy applications. But when you’re starting out learning Docker, the terminology can often be confusing. You’ll likely hear terms like “images,” “containers,” and “volumes” without really understanding how they fit together. This article will help you understand the basic Docker concepts you need to know.

Let’s get started.

# 1. Docker Image

A Docker image is an artifact that contains everything your application needs to run: code, runtime, libraries, environment variables, and configuration files.

Images are immutable. Once you create an image, it doesn’t change. This guarantees that your application runs the same on your laptop, your coworker’s machine, and in production, eliminating environment-specific bugs.

Here’s how you build an image from a Dockerfile. A Dockerfile is a recipe that defines how you build the image:

    docker build -t my-python-app:1.0 .

The `-t` flag tags your image with a name and version. The `.` tells Docker to look for a Dockerfile in the current directory. Once created, this image becomes a reusable template for your application.

# 2. Docker Container

The container is what you get when you run an image. It is an isolated environment where your application actually executes.

    docker run -d -p 8000:8000 my-python-app:1.0

The `-d` flag runs the container in the background. `-p 8000:8000` maps port 8000 on your host to port 8000 in the container, making your app accessible on `localhost:8000`.

You can run multiple containers from a single image, and they work independently. This lets you test different versions simultaneously or scale horizontally by running ten copies of the same application.

Containers are lightweight. Unlike virtual machines, they do not boot a full operating system. They share the host’s kernel and start up in seconds.
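One practical detail when you run several copies on one machine: every container can listen on port 8000 internally, but each one needs its own host port. Here is a rough Python sketch that builds the commands you might run. The helper name `run_commands` and the choice of consecutive host ports are assumptions made for illustration.

```python
def run_commands(image, copies, first_host_port=8000, container_port=8000):
    """Build one `docker run` command per copy, each with its own host port."""
    return [
        # The host port changes per copy; the container port stays the same.
        f"docker run -d -p {first_host_port + i}:{container_port} {image}"
        for i in range(copies)
    ]


for command in run_commands("my-python-app:1.0", copies=3):
    print(command)
# docker run -d -p 8000:8000 my-python-app:1.0
# docker run -d -p 8001:8000 my-python-app:1.0
# docker run -d -p 8002:8000 my-python-app:1.0
```

The snippet only prints the commands; you would still run them in a shell yourself.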

# 3. Dockerfile

A Dockerfile contains instructions for building an image. This is a text file that tells Docker how to set up your application environment.

Here is a Dockerfile for a Flask application:

    FROM python:3.11-slim

    WORKDIR /app

    COPY requirements.txt .

    RUN pip install --no-cache-dir -r requirements.txt

    COPY . .

    EXPOSE 8000

    CMD ["python", "app.py"]

Let’s break down each instruction:

- `FROM python:3.11-slim` – Start with a base image that has Python 3.11 installed. The slim version is smaller than the standard image.
- `WORKDIR /app` – Set the working directory to `/app`. All subsequent commands run from here.
- `COPY requirements.txt .` – Copy only the requirements file first, not your entire code yet.
- `RUN pip install --no-cache-dir -r requirements.txt` – Install Python dependencies. The `--no-cache-dir` flag keeps the image size small.
- `COPY . .` – Now copy the rest of your application code.
- `EXPOSE 8000` – Document that the app uses port 8000. This does not publish the port by itself; you still map it with `-p` when you run the container.
- `CMD ["python", "app.py"]` – Define the command to run when the container starts.

The order of these instructions is important for how long your build takes, which is why we need to understand layers.

# 4. Image Layers

Each instruction in the Dockerfile creates a new layer. These layers are stacked on top of each other to create the final image.

Docker caches each layer. When you rebuild an image, Docker checks whether each layer needs to be rebuilt. If nothing changed, it reuses the cached layer instead of rebuilding.

That’s why we copy `requirements.txt` before copying the entire application. Your dependencies change less frequently than your code. When you modify `app.py`, Docker reuses the cached layer that installs the dependencies and rebuilds only the layers from the code copy onward.

Here is the layer structure from our Dockerfile:

1. Base Python image (`FROM`)
2. Set working directory (`WORKDIR`)
3. Copy `requirements.txt` (`COPY`)
4. Install dependencies (`RUN pip install`)
5. Copy the application code (`COPY`)
6. Metadata about the port (`EXPOSE`)
7. Default command (`CMD`)

If you only change your Python code, Docker rebuilds only layers 5-7. Layers 1-4 come from the cache, which makes the build much faster. Understanding layers helps you write efficient Dockerfiles: place stable dependencies at the beginning and frequently changed files at the end.
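To make the caching rule concrete, here is a small Python model of it. This is a simplified sketch and not how Docker is implemented: it assumes one layer per instruction, assumes the project contains only `requirements.txt` and `app.py`, and treats a layer as a cache miss if its own input files changed or if any earlier layer missed. The names `LAYERS` and `layers_to_rebuild` are made up for this example.

```python
# Simplified model of layer caching (illustrative only, not Docker's real logic).
# Each entry: (instruction, files from the build context that it reads).
LAYERS = [
    ("FROM python:3.11-slim", set()),
    ("WORKDIR /app", set()),
    ("COPY requirements.txt .", {"requirements.txt"}),
    ("RUN pip install --no-cache-dir -r requirements.txt", set()),
    # Assumption: the project holds just these two files.
    ("COPY . .", {"requirements.txt", "app.py"}),
    ("EXPOSE 8000", set()),
    ('CMD ["python", "app.py"]', set()),
]


def layers_to_rebuild(changed_files):
    """Return the 1-based numbers of the layers that cannot come from the cache."""
    changed = set(changed_files)
    rebuild = []
    invalidated = False
    for number, (_instruction, inputs) in enumerate(LAYERS, start=1):
        # A layer misses the cache if its inputs changed,
        # or if any layer before it already missed.
        if invalidated or inputs & changed:
            invalidated = True
            rebuild.append(number)
    return rebuild


print(layers_to_rebuild(["app.py"]))            # [5, 6, 7]
print(layers_to_rebuild(["requirements.txt"]))  # [3, 4, 5, 6, 7]
print(layers_to_rebuild([]))                    # []
```

Notice how a change to `requirements.txt` invalidates the dependency install as well, while a change to `app.py` leaves it cached.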

# 5. Docker Volume

Containers are temporary. When you delete a container, everything inside it disappears, including the data created by your application.

Docker volumes solve this problem. They are directories that exist outside the container file system and persist even after the container is deleted.

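    docker run -d \
      -e POSTGRES_PASSWORD=secret \
      -v postgres-data:/var/lib/postgresql/data \
      postgres:15

This creates a named volume called `postgres-data` and mounts it at `/var/lib/postgresql/data` inside the container. (The official `postgres` image also needs `POSTGRES_PASSWORD` set in order to start, so it is included here.) Your database files survive container restarts and deletions.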

You can also mount directories from your host machine, which is useful during development:

    docker run -d \
      -v "$(pwd)":/app \
      -p 8000:8000 \
      my-python-app:1.0

This mounts your current directory into the container at `/app`. Changes you make to files on your host are immediately reflected in the container, enabling live development without rebuilding the image.

There are three types of mounts:

- Named volume (`postgres-data:/path`) – managed by Docker, best for production data
- Bind mount (`/host/path:/container/path`) – mounts any host directory, good for development
- tmpfs mount – stores data only in memory, useful for temporary files
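From the application’s point of view, a volume is just a directory. The sketch below shows a tiny Python function that keeps a run counter in a file. If the directory it writes to were a mounted volume, the count would survive deleting and recreating the container; on the container’s own file system it would start again from 1. The function name `record_run` and the file name `run_count.txt` are hypothetical, and a temporary directory stands in for the mount point so the example runs anywhere.

```python
import tempfile
from pathlib import Path


def record_run(data_dir):
    """Increment a counter stored in data_dir and return its new value."""
    counter_file = Path(data_dir) / "run_count.txt"
    counter_file.parent.mkdir(parents=True, exist_ok=True)
    previous = int(counter_file.read_text()) if counter_file.exists() else 0
    counter_file.write_text(str(previous + 1))
    return previous + 1


# A temporary directory plays the role of the volume mount point here.
with tempfile.TemporaryDirectory() as demo_dir:
    print(record_run(demo_dir))  # 1
    print(record_run(demo_dir))  # 2
```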

# 6. Docker Hub

Docker Hub is a public registry where people share Docker images. When you write `FROM python:3.11-slim`, Docker pulls that image from Docker Hub.

You can search for images with `docker search`, and pull them to your machine:

    docker pull redis:7-alpine

You can also push your own images to share with others or deploy to a server:

    docker tag my-python-app:1.0 username/my-python-app:1.0
    docker push username/my-python-app:1.0

Docker Hub hosts official images for popular software like PostgreSQL, Redis, Nginx, Python, and many others. These are typically maintained in collaboration with the upstream software maintainers and follow best practices.

For private projects, you can create private repositories on Docker Hub or use alternative registries such as Amazon Elastic Container Registry (ECR), Google Container Registry (GCR), or Azure Container Registry (ACR).
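Image names like `redis:7-alpine` and `username/my-python-app:1.0` all follow a `repository:tag` shape, and Docker falls back to the `latest` tag when you leave the tag off. The Python sketch below splits a reference into those two parts. It is deliberately simplified: it ignores digest references (`@sha256:...`), and the function name `split_image_reference` is made up for this example.

```python
def split_image_reference(reference):
    """Split 'repository[:tag]' into (repository, tag), defaulting to 'latest'."""
    last_slash = reference.rfind("/")
    last_colon = reference.rfind(":")
    # A colon only marks a tag if it comes after the last slash;
    # otherwise it belongs to a registry host such as localhost:5000.
    if last_colon > last_slash:
        return reference[:last_colon], reference[last_colon + 1:]
    return reference, "latest"


print(split_image_reference("redis:7-alpine"))              # ('redis', '7-alpine')
print(split_image_reference("username/my-python-app:1.0"))  # ('username/my-python-app', '1.0')
print(split_image_reference("python"))                      # ('python', 'latest')
```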

# 7. Docker Compose

Real applications require multiple services. A typical web app has a Python backend, a PostgreSQL database, a Redis cache, and maybe a worker process.

Docker Compose lets you define all these services in one YAML file and manage them together.

Create a `docker-compose.yml` file:

    version: '3.8'

    services:
      web:
        build: .
        ports:
          - "8000:8000"
        environment:
          - DATABASE_URL=postgresql://postgres:secret@db:5432/myapp
          - REDIS_URL=redis://cache:6379
        depends_on:
          - db
          - cache
        volumes:
          - .:/app

      db:
        image: postgres:15-alpine
        volumes:
          - postgres-data:/var/lib/postgresql/data
        environment:
          - POSTGRES_PASSWORD=secret
          - POSTGRES_DB=myapp

      cache:
        image: redis:7-alpine

    volumes:
      postgres-data:

Now start your entire application stack with one command, `docker-compose up -d`.

This starts three containers: `web`, `db`, and `cache`. Docker Compose handles networking automatically: the `web` service can reach the database at hostname `db` and Redis at hostname `cache`.

To stop everything, run `docker-compose down`.

To rebuild after code changes:

    docker-compose up -d --build

Docker Compose is essential for development environments. Instead of installing PostgreSQL and Redis on your machine, you run them in containers with a single command.

# 8. Container Network

When you run multiple containers, they need to talk to each other. Docker creates virtual networks that connect containers.

By default, Docker Compose creates a network for all the services defined in your `docker-compose.yml`. Containers use service names as hostnames. In our example, the web container connects to PostgreSQL at `db:5432` because `db` is the service name.
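Inside the web container, this shows up in the connection URLs your code reads from the environment. As a small sketch, the Python snippet below parses the `DATABASE_URL` and `REDIS_URL` values from the Compose file and pulls out the host and port. The helper name `service_endpoint` is an assumption for illustration, and the defaults simply repeat the Compose values so the snippet also runs outside a container.

```python
import os
from urllib.parse import urlsplit


def service_endpoint(url):
    """Extract (hostname, port) from a connection URL."""
    parts = urlsplit(url)
    return parts.hostname, parts.port


# Defaults mirror the values set for the web service in docker-compose.yml.
database_url = os.environ.get(
    "DATABASE_URL", "postgresql://postgres:secret@db:5432/myapp"
)
redis_url = os.environ.get("REDIS_URL", "redis://cache:6379")

# The hostnames are the Compose service names, not IP addresses.
print(service_endpoint(database_url))
print(service_endpoint(redis_url))
```

With the Compose values, the hostnames come out as `db` and `cache`, which Docker’s built-in DNS resolves to the right containers on the shared network.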

You can also create a custom network manually:

    docker network create my-app-network
    docker run -d --network my-app-network --name api my-python-app:1.0
    docker run -d --network my-app-network --name cache redis:7

Now the `api` container can reach Redis at `cache:6379`. Docker provides several network drivers; the ones you will use most often are:

- `bridge` – default network for containers on a single host

- `host` – the container uses the host’s network directly (no isolation)

- `none` – the container has no network access

Networks provide isolation. Containers on different networks cannot communicate unless they are explicitly connected. This is useful for security because you can separate your frontend, backend, and database networks.

To see all networks, run `docker network ls`.

To inspect a network and see which containers are connected, run:

    docker network inspect my-app-network

# 9. Environment Variables and Docker Secrets

Hardcoding configuration is asking for trouble. The password for your database should not be the same in development and production. Your API keys should definitely not reside in your codebase.

Docker handles this with environment variables. Pass them at runtime with the `-e` or `--env` flag, and your container gets the configuration it needs without baking values into the image.

Docker Compose makes it cleaner. Point to a `.env` file and keep your secrets out of version control. Swap in `.env.production` when you deploy, or define environment variables directly in your Compose file if they are not sensitive.

Docker secrets take this further for production environments, especially in Swarm mode. Unlike environment variables, which can be visible in logs or process listings, secrets are encrypted in transit and at rest, then mounted as files in the container. Only the services that need them get access. They are designed for passwords, tokens, certificates, and anything else that could be disastrous if leaked.

The pattern is simple: separate code from configuration. Use environment variables for standard configuration and secrets for sensitive data.
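On the application side, one common way to support both is to look for a secret file first and fall back to an environment variable. Here is a minimal Python sketch of that idea. It assumes the default `/run/secrets/<name>` mount location used for Docker secrets; the helper name `read_setting` and the convention of upper-casing the name for the environment lookup are choices made for this example.

```python
import os
import tempfile
from pathlib import Path


def read_setting(name, secrets_dir="/run/secrets"):
    """Return a setting from a secret file if present, else from the environment."""
    secret_file = Path(secrets_dir) / name
    if secret_file.is_file():
        # Secret files often end with a newline; strip it.
        return secret_file.read_text().strip()
    # Assumption: the matching environment variable is the upper-cased name.
    return os.environ.get(name.upper())


# A temporary directory stands in for /run/secrets in this demo.
with tempfile.TemporaryDirectory() as fake_secrets:
    (Path(fake_secrets) / "db_password").write_text("s3cret\n")
    print(read_setting("db_password", secrets_dir=fake_secrets))  # s3cret
```

The same code then works in development, where you set `DB_PASSWORD` in a `.env` file, and in production, where the value arrives as a mounted secret.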

# 10. Container Registry

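Docker Hub works fine for public images, but you don’t want your company’s application images to be publicly available. A container registry is private storage for your Docker images. Popular options include Amazon Elastic Container Registry (ECR), Google Container Registry (GCR), and Azure Container Registry (ACR).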

For each of the above options, you can follow a similar process to publish, pull, and use images. For example, you would do the following with ECR.

Your local machine or continuous integration and continuous deployment (CI/CD) system first proves its identity to ECR. This allows Docker to securely interact with your private image registry instead of the public one. The locally built Docker image is then given a fully qualified name that contains:

- AWS account registry address

- Repository name

- Image version

This step tells Docker where the image will reside in ECR. The image is then uploaded to the private ECR repository. Once pushed, the image is centrally stored, versioned, and available to authorized systems.
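For ECR, the fully qualified name follows the pattern `<account-id>.dkr.ecr.<region>.amazonaws.com/<repository>:<tag>`. The short Python sketch below assembles it from its parts. The function name `ecr_image_uri` is hypothetical, and the account ID, region, and repository shown are placeholders, not real values.

```python
def ecr_image_uri(account_id, region, repository, tag):
    """Build the fully qualified image name for a private ECR repository."""
    # Registry address: tied to one AWS account and one region.
    registry = f"{account_id}.dkr.ecr.{region}.amazonaws.com"
    return f"{registry}/{repository}:{tag}"


# Placeholder account ID and region, for illustration only.
print(ecr_image_uri("123456789012", "us-east-1", "my-python-app", "1.0"))
# 123456789012.dkr.ecr.us-east-1.amazonaws.com/my-python-app:1.0
```

This is the name you would pass to `docker tag` and `docker push`, in the same way as the Docker Hub example earlier.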

Production servers authenticate with ECR and download the image from the private registry. This keeps your deployment pipeline fast and secure. Instead of building images on a production server (slow and requiring source code access), you build once, push to the registry, and pull to all servers.

Many CI/CD systems integrate with container registries. For example, your GitHub Actions workflow builds the image and pushes it to ECR, and your Kubernetes cluster pulls it automatically.

# Wrapping Up

These ten concepts form the foundation of Docker. Here’s how they connect into a common workflow:

- Write a Dockerfile with instructions for your app, and build an image from the Dockerfile

- Run a container from an image

- Use volumes to retain data

- Set environment variables and secrets for configuration and sensitive information

- Create a `docker-compose.yml` for multi-service apps and let Docker networks connect your containers

- Push your image to a registry, then pull and run it anywhere

Start by containerizing a simple Python script. Add dependencies with a `requirements.txt` file. Then add a database using Docker Compose. Each step builds on previous concepts. Once you understand these basic principles, Docker is no longer complicated. It is just a tool that packages applications consistently and runs them in isolated environments.

Happy exploring!

Bala Priya C is a developer and technical writer from India. She likes to work in the fields of mathematics, programming, data science, and content creation. Her areas of interest and expertise include DevOps, data science, and natural language processing. She loves reading, writing, coding, and coffee! Currently, she is working on learning and sharing her knowledge with the developer community by writing tutorials, how-to guides, opinion pieces, and more. Bala also creates engaging resource overviews and coding tutorials.