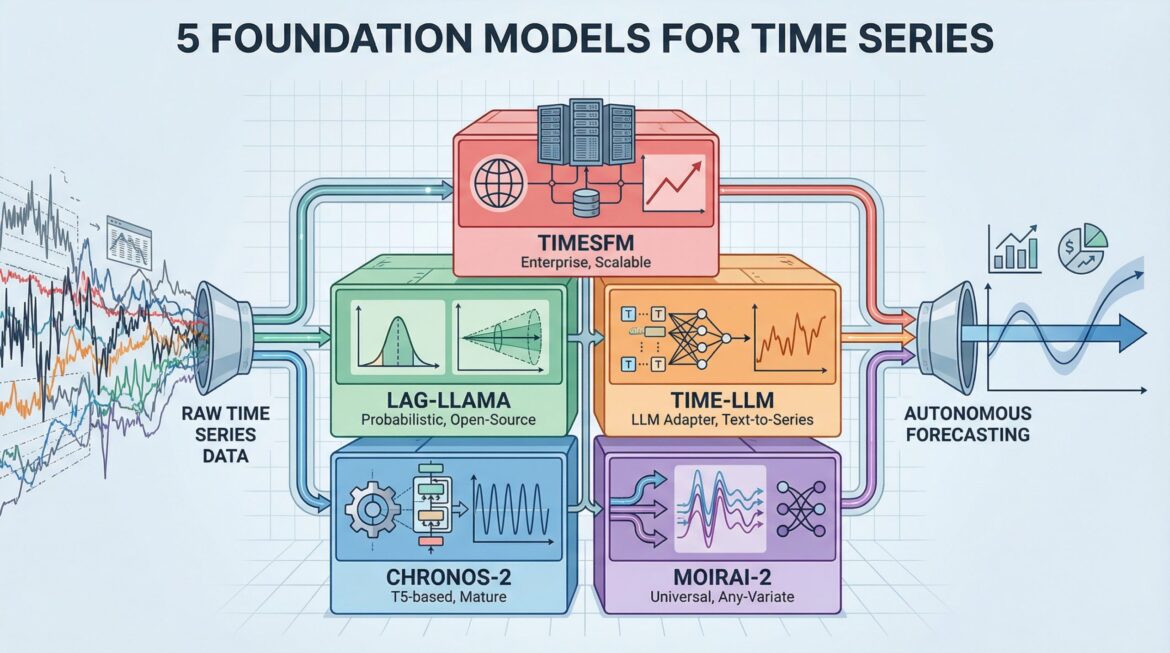

2026 Time Series Toolkit: 5 Foundation Models for Autonomous Forecasting

Image by author

Introduction

Most forecasting work involves building a custom model for each dataset: fit an ARIMA here, tune an LSTM there, wrestle with Prophet's hyperparameters. Foundation models flip this around. They are pre-trained on large amounts of time series data and can forecast new patterns without additional training, much as GPT can write about topics it has never explicitly seen. This list covers the five essential foundation models you need to know to build a production forecasting system in 2026.

The shift from task-specific models to foundation model orchestration changes the way teams approach forecasting. Instead of spending weeks tuning parameters and applying domain expertise to each new dataset, teams can start from pre-trained models that already capture common temporal patterns. The payoff is faster deployment, better generalization across domains, and lower computational costs, without extensive machine learning infrastructure.

1. Amazon Chronos-2 (Production-Ready Foundation)

Amazon Chronos-2 is the most mature option for teams moving to foundation model forecasting. This family of pre-trained Transformer models, based on the T5 architecture, tokenizes time series values through scaling and quantization, which lets it treat forecasting as a language modeling task. The October 2025 release expanded capabilities to support univariate, multivariate, and covariate-informed forecasting.
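
To make the scaling and quantization step concrete, here is a minimal sketch in plain Python. It is not the Chronos implementation: the mean-absolute scaling, the uniform bins, and the `LOW`, `HIGH`, and `NUM_BINS` values are assumptions chosen for illustration. The idea is only that each real value becomes a discrete token id that a language-model-style network can consume, and that token ids can be mapped back to approximate values.

```python
from bisect import bisect_right


def mean_scale(values):
    """Divide by the mean absolute value so series of different magnitudes share one range."""
    scale = sum(abs(v) for v in values) / len(values)
    scale = scale if scale > 0 else 1.0  # guard against an all-zero context
    return [v / scale for v in values], scale


def make_bin_edges(low, high, num_bins):
    """Return the num_bins - 1 interior edges of uniform bins over [low, high]."""
    width = (high - low) / num_bins
    return [low + width * i for i in range(1, num_bins)]


def quantize(scaled, edges):
    """Map each scaled value to the index of its bin (its token id).

    Values outside the bin range fall into the first or last bin.
    """
    return [bisect_right(edges, v) for v in scaled]


def dequantize(tokens, low, high, num_bins, scale):
    """Map token ids back to bin centers and undo the scaling."""
    width = (high - low) / num_bins
    return [(low + width * (t + 0.5)) * scale for t in tokens]


# Hypothetical settings for illustration only.
LOW, HIGH, NUM_BINS = -5.0, 5.0, 100

series = [120.0, 135.0, 150.0, 110.0, 95.0]
scaled, scale = mean_scale(series)
edges = make_bin_edges(LOW, HIGH, NUM_BINS)
tokens = quantize(scaled, edges)
reconstructed = dequantize(tokens, LOW, HIGH, NUM_BINS, scale)

print(tokens)
print([round(v, 1) for v in reconstructed])
```

The round trip is lossy: each reconstructed value can differ from the original by up to half a bin width times the scale, which is the price of turning a continuous signal into a finite vocabulary.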

The model provides state-of-the-art zero-shot predictions that consistently outperform tuned statistical models out of the box, processing 300+ predictions per second on a single GPU. With millions of downloads on Hugging Face and native integration with AWS tools like SageMaker and AutoGluon, Chronos-2 has the strongest documentation and community support among the foundation models. The architecture comes in five sizes, ranging from 9 million to 710 million parameters, so that teams can balance performance against computational constraints. Check the implementation on GitHub, review the technical approach in the research paper, or grab a pre-trained model from Hugging Face.

2. Salesforce MOIRAI-2 (Universal Forecaster)

Salesforce MOIRAI-2 tackles the practical challenge of handling unstructured, real-world time series data through its universal forecasting architecture. This decoder-only Transformer foundation model adapts to any data frequency, any number of variables, and any prediction length, all within a single framework. The model's "any-variate attention" mechanism dynamically adjusts to multivariate time series without requiring fixed input dimensions, distinguishing it from models designed for specific data structures.

MOIRAI-2 performs strongly on both in-distribution and zero-shot tasks and ranks high on the GIFT-Eval leaderboard among non-data-leaking models. Training on the LOTSA dataset, with 27 billion observations across nine domains, gives the model strong generalization to new forecasting scenarios. Teams benefit from fully open-source development with active maintenance, which makes it valuable for complex, real-world applications with many variables and irregular frequencies. The project's GitHub repository includes implementation details, while the technical paper and Salesforce blog post explain the universal forecasting approach. Pre-trained models are on Hugging Face.

3. Lag-Llama (Open-Source Backbone)

Lag-Llama brings probabilistic forecasting capabilities to foundation models through a decoder-only Transformer inspired by Meta's LLaMA architecture. Unlike models that generate only point forecasts, Lag-Llama generates full probability distributions with uncertainty intervals for each prediction step, which is the quantifiable uncertainty that decision-making processes require. The model uses lagged features as covariates and shows robust few-shot learning when fine-tuned on small datasets.
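
As a rough illustration of what per-step uncertainty intervals look like in practice, the sketch below turns a set of sampled forecast trajectories into lower, median, and upper bounds for each step. It assumes the forecaster's output is available as a list of sample paths; the random-walk samples here are a stand-in for model output, and the function names are hypothetical rather than part of any library.

```python
import random


def empirical_quantile(sorted_values, q):
    """Linear-interpolation quantile of an already sorted list, for 0 <= q <= 1."""
    position = q * (len(sorted_values) - 1)
    lower = int(position)
    upper = min(lower + 1, len(sorted_values) - 1)
    weight = position - lower
    return sorted_values[lower] * (1 - weight) + sorted_values[upper] * weight


def prediction_intervals(sample_paths, lower_q=0.1, upper_q=0.9):
    """Summarize sample paths as (lower, median, upper) for each forecast step."""
    horizon = len(sample_paths[0])
    summary = []
    for step in range(horizon):
        values = sorted(path[step] for path in sample_paths)
        summary.append((
            empirical_quantile(values, lower_q),
            empirical_quantile(values, 0.5),
            empirical_quantile(values, upper_q),
        ))
    return summary


# Stand-in for model output: 200 hypothetical sample paths over a 4-step horizon,
# drawn from a seeded random walk.
rng = random.Random(0)
sample_paths = []
for _ in range(200):
    level, path = 100.0, []
    for _ in range(4):
        level += rng.gauss(0.0, 2.0)
        path.append(level)
    sample_paths.append(path)

intervals = prediction_intervals(sample_paths)
for step, (low, median, high) in enumerate(intervals, start=1):
    print(f"step {step}: {low:.1f} <= {median:.1f} <= {high:.1f}")
```

With the defaults, each row is an 80% interval around the median. A downstream decision rule, such as a safety-stock calculation, can read the upper bound directly instead of relying on a single point forecast.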

Its fully open-source code and permissive licensing make Lag-Llama accessible to teams of any size, while its ability to run on CPU or GPU removes infrastructure barriers. Academic backing adds validation through publications at major machine learning conferences. For teams that prioritize transparency, reproducibility, and probabilistic outputs over raw performance metrics, Lag-Llama provides a reliable foundation model backbone. The GitHub repository contains the implementation code, and the research paper describes the probabilistic forecasting method.

4. Time-LLM (LLM Adapter)

Time-LLM takes a different approach by converting existing large language models into forecasting systems without modifying the original model weights. This reprogramming framework translates time series patches into text prototypes, allowing frozen LLMs such as GPT-2, LLaMA, or BERT to understand temporal patterns. The "Prompt-as-Prefix" technique injects domain knowledge through natural language, so teams can use their existing language model infrastructure for forecasting tasks.
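
The patching step that comes before reprogramming can be sketched in a few lines. This is only the slicing of a series into fixed-length windows, not the Time-LLM reprogramming layer itself; the function name and the `patch_len` and `stride` values are hypothetical and chosen for readability.

```python
def make_patches(series, patch_len, stride):
    """Slice a series into fixed-length windows that start every `stride` points.

    Trailing points that do not fill a complete patch are dropped.
    """
    if patch_len <= 0 or stride <= 0:
        raise ValueError("patch_len and stride must be positive")
    last_start = len(series) - patch_len
    return [series[start:start + patch_len] for start in range(0, last_start + 1, stride)]


# Hypothetical values; real patch lengths and strides are model hyperparameters.
series = [3, 5, 4, 6, 8, 7, 9, 11, 10, 12]
patches = make_patches(series, patch_len=4, stride=2)

for patch in patches:
    print(patch)
# [3, 5, 4, 6]
# [4, 6, 8, 7]
# [8, 7, 9, 11]
# [9, 11, 10, 12]
```

Each patch then plays the role a word token plays in text: the model sees a short sequence of local shapes rather than a long sequence of individual points.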

This adapter approach works well for organizations already running LLMs in production, as it eliminates the need to deploy and maintain separate forecasting models. The framework supports multiple backbone models, making it easy to switch between different LLMs when new versions become available. Time-LLM represents an "agentic AI" approach to forecasting, where general-purpose language understanding capabilities are transferred to temporal pattern recognition. Access the implementation through the GitHub repository, or review the methodology in the research paper.

5. Google TimesFM (The Big Tech Standard)

Google TimesFM provides enterprise-grade foundation model forecasting backed by one of the largest technology research organizations. This patch-based decoder-only model, pre-trained on 100 billion real-world time points from Google's internal dataset, delivers strong zero-shot performance across multiple domains with minimal configuration. The model design prioritizes large-scale production deployment, reflecting its origins in Google's internal forecasting workloads.

TimesFM has been battle-tested through extensive use in Google's production environments, which gives confidence to teams deploying the foundation model in business scenarios. The model balances performance and efficiency, avoiding the computational overhead of larger alternatives while maintaining competitive accuracy. Continued support from Google Research means ongoing development and maintenance, making TimesFM a reliable choice for teams looking for enterprise-grade foundation model capabilities. Access the model via the GitHub repository, review the architecture in the technical paper, or read the implementation details in the Google Research blog post.

Conclusion

Foundation models transform time series forecasting from a model training problem into a model selection challenge. Chronos-2 provides production maturity, MOIRAI-2 handles complex multivariate data, Lag-Llama provides probabilistic output, Time-LLM leverages existing LLM infrastructure, and TimesFM provides enterprise reliability. Evaluate each model against your specific needs around uncertainty quantification, multivariate support, infrastructure constraints, and deployment scale. Start with a zero-shot evaluation on representative datasets to identify which foundation model best meets your forecasting needs before investing in fine-tuning or custom development.
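
One way to structure that zero-shot evaluation is to score every candidate's forecast on a held-out window with a scale-free metric and rank the results. The sketch below uses mean absolute scaled error (MASE) with a naive baseline. The model names and forecast values are placeholders, not real model output; in practice each list would come from one model's zero-shot run on your own data.

```python
def mase(actual, forecast, history, season=1):
    """Mean absolute scaled error.

    Forecast MAE divided by the in-sample MAE of a seasonal naive forecast
    on the history. Values below 1 beat that naive baseline.
    """
    if len(actual) != len(forecast):
        raise ValueError("actual and forecast must have the same length")
    forecast_mae = sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)
    naive_errors = [abs(history[i] - history[i - season]) for i in range(season, len(history))]
    naive_mae = sum(naive_errors) / len(naive_errors)
    if naive_mae == 0:
        raise ValueError("history is constant at this seasonality; MASE is undefined")
    return forecast_mae / naive_mae


def rank_models(actual, history, candidate_forecasts, season=1):
    """Return (model_name, MASE) pairs sorted from best to worst."""
    scores = {name: mase(actual, fc, history, season) for name, fc in candidate_forecasts.items()}
    return sorted(scores.items(), key=lambda item: item[1])


history = [10, 12, 11, 13, 12, 14, 13, 15]  # context given to each model
actual = [14, 16, 15]                       # held-out window

# Placeholder forecasts for illustration only.
candidate_forecasts = {
    "model_a": [14, 15, 15],
    "model_b": [12, 13, 18],
    "naive_last_value": [15, 15, 15],
}

ranking = rank_models(actual, history, candidate_forecasts)
for name, score in ranking:
    print(f"{name}: MASE = {score:.3f}")
```

Keeping a naive baseline in the ranking is a useful sanity check: a foundation model that cannot beat it on your data is unlikely to justify the extra infrastructure.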