Data engineers are increasingly focused on one core problem: using AI to improve ETL and build reliable, production-grade pipelines without introducing new complexity. They need AI that genuinely streamlines workflows without adding disconnected tools or losing context.

Databricks Lakeflow brings an integrated data engineering platform with embedded, secure AI that automates your data processing end to end, unlocks deeper insights, and supports a wide range of business problems. Whether through AI-generated pipeline code or streamlined AI workloads, data engineers using Lakeflow can avoid spending hours on manual glue work and instead focus on strategic, high-value projects that have real impact on their business.

In this blog, we'll explore how you can productionize and scale your AI models by embedding them in your data pipelines to unlock business insights automatically.

Effortlessly gain more insight from your data at scale

Data teams are drowning in unstructured input, whether it’s contracts, invoices, transcripts or reviews. Processing them often means dealing with brittle NLP models, rigid rules, or manual cleanup. The result: unreliable output, slow turnaround, and valuable insights locked inside documents while engineers waste time on repetitive analysis instead of making an impact.

With Databricks Lakeflow, you can solve this by incorporating AI-powered transformations into your existing workflows through Databricks Agent Bricks AI Functions. These functions let you integrate high-quality AI directly into your ETL process, automating the extraction, transformation, and classification of unstructured and structured data at scale.

Agent Bricks offers a variety of AI Functions to choose from. Some are task-specific and do not require a prompt, such as:

- ai_extract: Extract specific entities from input text based on the labels you provide, for example person, place, or organization.
- ai_classify: Classify input text according to the labels you provide, for example "urgent" versus "not urgent," or subject categories.
- ai_translate: Translate text into a specified target language.
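As a minimal sketch, the three task-specific functions can be combined in a single SELECT. The Python below only composes the SQL text, which you could then pass to `spark.sql(...)` in a Databricks notebook or job. The table `support_tickets`, the column `ticket_text`, and the label values are hypothetical. The function call shapes follow our reading of the Databricks SQL documentation.

```python
# Minimal sketch: compose a Databricks SQL query that applies the task-specific
# AI Functions to a text column. Table, column, and label names are hypothetical.

def sql_literal(value: str) -> str:
    """Render a Python string as a SQL string literal using backslash escapes."""
    escaped = value.replace("\\", "\\\\").replace("'", "\\'")
    return f"'{escaped}'"


def sql_array(values: list[str]) -> str:
    """Render a list of Python strings as a SQL ARRAY(...) of string literals."""
    return "ARRAY(" + ", ".join(sql_literal(v) for v in values) + ")"


def build_task_query(table: str, text_col: str, entity_labels: list[str],
                     class_labels: list[str], target_lang: str) -> str:
    """Extract entities from, classify, and translate every row of `table`."""
    return (
        "SELECT\n"
        f"  {text_col},\n"
        f"  ai_extract({text_col}, {sql_array(entity_labels)}) AS entities,\n"
        f"  ai_classify({text_col}, {sql_array(class_labels)}) AS category,\n"
        f"  ai_translate({text_col}, {sql_literal(target_lang)}) AS translated\n"
        f"FROM {table}"
    )


query = build_task_query(
    table="support_tickets",   # hypothetical table
    text_col="ticket_text",    # hypothetical STRING column
    entity_labels=["person", "location", "organization"],
    class_labels=["urgent", "not urgent"],
    target_lang="es",
)
print(query)  # in a Databricks notebook you could run spark.sql(query) instead
```

Escaping labels through a helper keeps user-supplied label text from breaking the generated SQL.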

We're particularly excited about our recently launched AI function, ai_parse_document, which converts unstructured data into the structured formats you need. Using multimodal foundation models, ai_parse_document can parse text, extract tables, reason over figures, and turn images into AI-generated descriptions. This opens up data that was previously almost impossible to analyze.
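A minimal sketch of how ai_parse_document might be applied to a folder of documents follows. The Python composes SQL that reads each file as binary with `read_files(..., format => 'binaryFile')` and parses it. The volume path and target table name are hypothetical placeholders.

```python
# Minimal sketch: SQL that runs ai_parse_document over every file in a folder.
# The volume path and target table name are hypothetical placeholders.

def build_parse_query(source_dir: str, target_table: str) -> str:
    """Read files as binary with read_files and parse each one with ai_parse_document."""
    # Assumes source_dir is a plain path without quote characters.
    return (
        f"CREATE OR REPLACE TABLE {target_table} AS\n"
        "SELECT\n"
        "  path,\n"
        "  ai_parse_document(content) AS parsed\n"
        f"FROM read_files('{source_dir}', format => 'binaryFile')"
    )


query = build_parse_query(
    source_dir="/Volumes/main/claims/raw_docs/",  # hypothetical Unity Catalog volume
    target_table="main.claims.parsed_docs",       # hypothetical output table
)
print(query)
```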

We also provide a more general function, ai_query(), powered by our Serverless Batch Inference platform. It lets you run AI-powered transformations across large datasets at once using any LLM of your choice.

To maximize performance across millions of rows, our serverless batch inference engine automatically provisions and scales resources and executes workloads in parallel. This eliminates per-request overhead and delivers significantly faster processing, reducing runtime from hours to minutes while improving cost efficiency for high-volume AI workloads.
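Because ai_query is a SQL function, a batch job can be written as an ordinary query over a table, and the serverless engine handles parallelism. The sketch below composes one such query in Python. The endpoint name `my-serving-endpoint`, the tables, and the columns are hypothetical placeholders for your own.

```python
# Minimal sketch: one batch ai_query transformation over a whole table.
# The endpoint name, tables, and columns are hypothetical placeholders.

def sql_literal(value: str) -> str:
    """Render a Python string as a SQL string literal using backslash escapes."""
    escaped = value.replace("\\", "\\\\").replace("'", "\\'")
    return f"'{escaped}'"


def build_batch_query(source_table: str, target_table: str, id_col: str,
                      text_col: str, endpoint: str, instruction: str) -> str:
    """Prefix each row's text with an instruction and send it to `endpoint`."""
    prompt = f"CONCAT({sql_literal(instruction)}, {text_col})"
    return (
        f"CREATE OR REPLACE TABLE {target_table} AS\n"
        f"SELECT {id_col}, ai_query({sql_literal(endpoint)}, {prompt}) AS response\n"
        f"FROM {source_table}"
    )


query = build_batch_query(
    source_table="reviews_raw",        # hypothetical input table
    target_table="reviews_enriched",   # hypothetical output table
    id_col="review_id",
    text_col="review_text",
    endpoint="my-serving-endpoint",    # hypothetical serving endpoint
    instruction="Summarize this review in one sentence: ",
)
print(query)
```

Writing the result with CREATE OR REPLACE TABLE keeps the job idempotent when it is rerun on a schedule.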

With Lakeflow, you can easily productionize your AI models and orchestrate them seamlessly in Lakeflow Jobs, part of your data engineering solution. With AI Functions, you can bring greater efficiency to your orchestration and unlock more use cases, such as:

- Generating new data. Use AI to speed up reporting or write summaries of customer insights to help forecast future revenue.

- Structuring and organizing data into specific, business-meaningful categories. Run sentiment analysis across millions of multilingual reviews or automate customer segmentation using natural-language signals at scale.

- Improving data quality. Use fuzzy matching and entity resolution to fix duplicates and inconsistencies at scale.
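The multilingual sentiment use case can be sketched as a single aggregate query. This is one possible pattern, not a prescribed one. It assumes a hypothetical `product_reviews` table with a free-text `review_text` column. Translating to English before scoring is an illustrative normalization step, not a requirement of ai_analyze_sentiment.

```python
# Minimal sketch: sentiment distribution across multilingual reviews.
# Table and column names are hypothetical; translating first is optional.

def build_sentiment_summary(table: str, text_col: str) -> str:
    """Score each review's sentiment, then count reviews per sentiment label."""
    return (
        "SELECT sentiment, COUNT(*) AS n_reviews\n"
        "FROM (\n"
        f"  SELECT ai_analyze_sentiment(ai_translate({text_col}, 'en')) AS sentiment\n"
        f"  FROM {table}\n"
        ") AS scored\n"
        "GROUP BY sentiment"
    )


print(build_sentiment_summary("product_reviews", "review_text"))
```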

The combination of Lakeflow and Agent Bricks enables you to run your AI models on a single, unified governed data platform, so that your AI – and the insights it produces – have the right business and enterprise context.

Practical use cases for AI Functions and Lakeflow


Example 1: Transforming raw call transcripts into business insights

Imagine your sales team needs a reliable way to transform long, unstructured call transcripts into clear, actionable summaries. With hundreds of calls per day – many of which last 45 to 60 minutes – manual review quickly becomes impossible.

With Databricks, you can use built-in AI functions to quickly analyze every transcript, extract key insights, and generate follow-up recommendations.

Instead of building a separate AI service or managing custom agents, you can simply write a query and run it as part of your orchestration with Lakeflow Jobs. Your AI model then runs directly inside a governed, integrated data engineering platform, with scalable batch processing that stays fully integrated with your existing sales pipeline workflows and retains the right business and enterprise context.

Let’s see how it works in practice. After adding call transcripts to your pipeline, you can apply AI functions to transform unstructured text into usable signals:

- ai_analyze_sentiment: to capture the overall sentiment of the call (positive, negative, neutral)
- ai_extract: to extract key information from the call, including customer name, company name, job title, phone number, etc.
- ai_classify: to classify the type of call (urgency, subject, etc.)

This provides you with a structured foundation for downstream analytics and automation.
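The three steps above can be combined into one query that writes a table of call signals. This is a minimal sketch. The `call_transcripts` table, its `call_id` and `transcript` columns, the output table `call_signals`, and the label lists are all hypothetical and should be adjusted to your schema.

```python
# Minimal sketch: turn raw transcripts into structured call signals in one pass.
# Table, column, and label names are hypothetical; adjust them to your schema.

EXTRACT_LABELS = ["customer_name", "company_name", "job_title", "phone_number"]
CALL_TYPES = ["new business", "renewal", "support issue", "complaint"]


def sql_array(values: list[str]) -> str:
    """Render fixed labels as a SQL ARRAY literal; rejects labels that need escaping."""
    for v in values:
        if "'" in v or "\\" in v:
            raise ValueError(f"label needs escaping: {v!r}")
    return "ARRAY(" + ", ".join(f"'{v}'" for v in values) + ")"


def build_call_signals_query(source_table: str, target_table: str) -> str:
    """Sentiment, extracted fields, and call type for every transcript."""
    return (
        f"CREATE OR REPLACE TABLE {target_table} AS\n"
        "SELECT\n"
        "  call_id,\n"
        "  ai_analyze_sentiment(transcript) AS sentiment,\n"
        f"  ai_extract(transcript, {sql_array(EXTRACT_LABELS)}) AS call_details,\n"
        f"  ai_classify(transcript, {sql_array(CALL_TYPES)}) AS call_type\n"
        f"FROM {source_table}"
    )


print(build_call_signals_query("call_transcripts", "call_signals"))
```

Using underscore-style labels keeps the fields of the extracted struct easy to reference downstream (for example `call_details.customer_name`).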

Next, use ai_query to summarize each call with the AI model of your choice (in our example, we're using the "databricks-meta-llama-3-3-70b-instruct" LLM):
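The original query is not reproduced here. A minimal sketch of what a per-call summary query could look like follows. It uses the endpoint named above by default, and you can pass any other serving endpoint. The prompt wording, the `call_transcripts` table, and the `call_summaries` output table are hypothetical.

```python
# Minimal sketch: per-call summaries with ai_query. The default endpoint is the
# one named in the text; table names and prompt wording are hypothetical.

SUMMARY_PROMPT = (
    "Summarize this sales call in three bullet points covering the customer "
    "needs, objections raised, and agreed next steps. Transcript: "
)  # contains no single quotes, so it can be inlined as a SQL literal


def build_summary_query(endpoint: str = "databricks-meta-llama-3-3-70b-instruct") -> str:
    """One summary per transcript, written to a new table."""
    return (
        "CREATE OR REPLACE TABLE call_summaries AS\n"
        "SELECT\n"
        "  call_id,\n"
        f"  ai_query('{endpoint}', CONCAT('{SUMMARY_PROMPT}', transcript)) AS call_summary\n"
        "FROM call_transcripts"
    )


print(build_summary_query())
```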

This query produces consistent, high-quality summaries that sales and account teams can review at a glance.

You can then generate personalized follow-ups in the same workflow:
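A follow-up step could join summaries with extracted contact details and ask the model for a draft note. This is a minimal sketch under stated assumptions. It assumes hypothetical tables `call_summaries` (one `call_summary` per `call_id`) and `call_signals` (with an ai_extract struct column `call_details`). `coalesce` is used because CONCAT returns NULL if any argument is NULL.

```python
# Minimal sketch: generate a follow-up note per call. Table names
# (call_summaries, call_signals) and struct fields are hypothetical.

def build_follow_up_query(endpoint: str = "databricks-meta-llama-3-3-70b-instruct") -> str:
    """Join summaries with extracted details and draft one follow-up per call."""
    return (
        "CREATE OR REPLACE TABLE call_follow_ups AS\n"
        "SELECT\n"
        "  s.call_id,\n"
        f"  ai_query('{endpoint}', CONCAT(\n"
        "    'Write a short, personalized follow-up email to ',\n"
        # coalesce keeps CONCAT from returning NULL when a field was not found.
        "    coalesce(c.call_details.customer_name, 'the customer'),\n"
        "    ' at ', coalesce(c.call_details.company_name, 'their company'),\n"
        "    '. Base it on this call summary: ', s.call_summary\n"
        "  )) AS follow_up\n"
        "FROM call_summaries AS s\n"
        "JOIN call_signals AS c ON s.call_id = c.call_id"
    )


print(build_follow_up_query())
```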

These notes can then be fed directly into your CRM or sales engagement tools, so your teams know what actions to take as soon as the call ends. You can also share the notes with your BI team to help identify gaps and improve the overall customer service experience.


Example 2: Streamlining Insurance Claim Processing

Imagine you're building a claims processing pipeline for an insurance provider that needs faster, more consistent approvals. Today, claims often arrive by email with unstructured attachments such as scanned documents, photos, and PDFs, making them difficult to ingest and process at scale.

With Agent Bricks and Lakeflow, data engineers can use ai_parse_document and ai_query to automatically extract, normalize, and consolidate data from incoming emails as part of their ETL pipelines. This enables reliable, end-to-end automation that reduces manual review, accelerates decisions, and integrates seamlessly into existing data workflows.

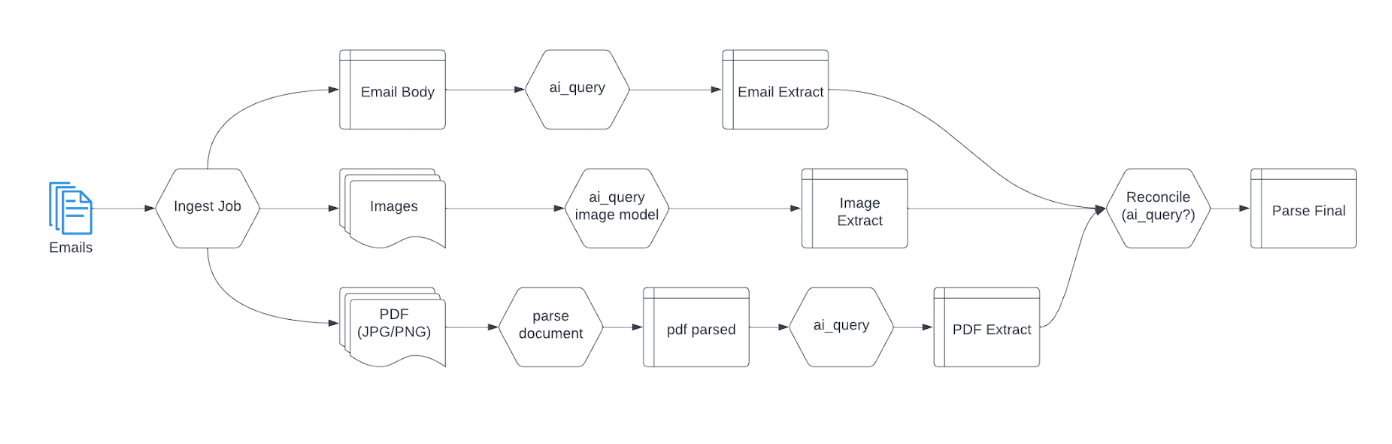

Here's how it works:

Using Lakeflow and Agent Bricks, you can ingest your email files into your lakehouse and extract the data you need with:

- ai_query: to read the body of each email and extract key information (for example: name, date of birth, address, social security number)
- ai_query, with a model that can read the type of image attached: to generate text describing each attached image and extract its metadata
- ai_parse_document: to read any PDF, JPG, or PNG attached to an email
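A minimal sketch of the extraction step follows as two composed SQL statements: one for email bodies and one for attachments. The names `raw_claim_emails`, `body`, `email_id`, the output tables, the attachments path, and the prompt are hypothetical. The image-description call is left out because the way image input is passed to ai_query depends on the model and is not shown in the original.

```python
# Minimal sketch of the extraction step for claim emails. Table names, the
# volume path, and the prompt are hypothetical. Image description with a
# vision-capable model is intentionally omitted.

EMAIL_PROMPT = (
    "Extract the claimant name, date of birth, address, and social security "
    "number from this email. Return only JSON with the keys name, "
    "date_of_birth, address, ssn. Email: "
)  # contains no single quotes, so it can be inlined as a SQL literal


def build_extraction_queries(endpoint: str, attachments_dir: str) -> dict[str, str]:
    """Return one CREATE TABLE statement per extraction output table."""
    return {
        # Structured fields pulled from each email body.
        "claim_email_fields": (
            "CREATE OR REPLACE TABLE claim_email_fields AS\n"
            f"SELECT email_id, ai_query('{endpoint}', CONCAT('{EMAIL_PROMPT}', body)) AS email_fields\n"
            "FROM raw_claim_emails"
        ),
        # Parsed content of PDF/JPG/PNG attachments stored in a volume.
        "claim_attachments_parsed": (
            "CREATE OR REPLACE TABLE claim_attachments_parsed AS\n"
            "SELECT path, ai_parse_document(content) AS parsed\n"
            f"FROM read_files('{attachments_dir}', format => 'binaryFile')"
        ),
    }


for name, sql in build_extraction_queries(
    "my-serving-endpoint", "/Volumes/main/claims/attachments/"
).items():
    print(f"-- {name}\n{sql}\n")
```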

Once the data is extracted, you can use ai_query again to consolidate all the information into one file that can either be reused in another workflow or shared directly with the downstream team (BI analysts, AI/ML team, etc.), depending on your use case.
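The consolidation step can be sketched as a join followed by one more ai_query call. This assumes two hypothetical tables: `claim_email_fields(email_id, email_fields)` and `claim_attachment_text(email_id, attachment_text)`. The latter is assumed to hold one STRING of attachment text per email, derived from the parsed documents. The LEFT JOIN keeps emails that arrived without attachments.

```python
# Minimal sketch of the consolidation step. Table names, column names, and the
# JSON keys in the prompt are hypothetical.

CONSOLIDATE_PROMPT = (
    "Merge the following claim details into a single JSON record with the keys "
    "name, date_of_birth, address, ssn, incident_summary. Details: "
)  # contains no single quotes, so it can be inlined as a SQL literal


def build_consolidation_query(endpoint: str) -> str:
    """One consolidated claim record per email."""
    return (
        "CREATE OR REPLACE TABLE claims_consolidated AS\n"
        "SELECT\n"
        "  e.email_id,\n"
        f"  ai_query('{endpoint}', CONCAT(\n"
        f"    '{CONSOLIDATE_PROMPT}', e.email_fields,\n"
        "    ' Attachment content: ', coalesce(a.attachment_text, 'none')\n"
        "  )) AS claim_record\n"
        "FROM claim_email_fields AS e\n"
        # Assumes at most one attachment_text row per email (aggregate first if not).
        "LEFT JOIN claim_attachment_text AS a ON e.email_id = a.email_id"
    )


print(build_consolidation_query("my-serving-endpoint"))
```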

Below is an example DAG showing what the workflow looks like in Lakeflow Jobs:
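In Lakeflow Jobs, the DAG itself is defined through the Jobs UI, API, or Databricks Asset Bundles. The sketch below uses none of those. It models the task dependencies in plain Python with the standard library's `graphlib` to show which steps can run in parallel once ingestion finishes. All task names are hypothetical.

```python
# Sketch of the claims workflow's dependency structure (not a Lakeflow Jobs
# definition). Task names are hypothetical; each maps to the tasks it depends on.
from graphlib import TopologicalSorter

CLAIMS_DAG = {
    "ingest_emails": set(),
    "extract_email_fields": {"ingest_emails"},
    "describe_images": {"ingest_emails"},
    "parse_pdf_attachments": {"ingest_emails"},
    "consolidate_claim": {"extract_email_fields", "describe_images", "parse_pdf_attachments"},
}


def run_order(dag: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into stages; tasks within a stage have no mutual dependencies."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every task whose dependencies are done
        stages.append(ready)
        sorter.done(*ready)
    return stages


stages = run_order(CLAIMS_DAG)
for i, stage in enumerate(stages, 1):
    print(f"stage {i}: {', '.join(stage)}")
```

The three extraction tasks land in the same stage, which mirrors how a job scheduler can fan them out in parallel before the consolidation task runs.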

There's much more you can do by combining Lakeflow and Agent Bricks. Watch this video to learn how you can transform messy sales data into AI-powered marketing campaigns.

Real-world applications of AI in Databricks

Many Databricks customers and data engineers have successfully addressed a variety of business issues – pricing, customer success and marketing – by using AI and Lakeflow to unlock insights and boost productivity.

Card, the New York-based fintech company, uses Agent Bricks AI Functions to power a scalable, accurate transaction classification system that replaces manual, inconsistent legacy methods. This modern approach enables Card to efficiently process billions of transactions, deliver personalized rewards, and provide rich insights that drive loyalty and business value.

The data engineering team at Banco Bradesco, one of the largest banks in Latin America, faced productivity barriers due to lengthy coding, debugging, and documentation processes. By adopting Databricks Assistant, they cut coding time by 50% and empowered both technical and non-technical users to generate code and troubleshoot using natural language, democratizing data access, reducing costs, and accelerating data-driven decisions.

Local, a global omnichannel advertising platform, used Lakeflow Jobs to orchestrate complex LLM training pipelines that its previous scheduler, Airflow, couldn't handle. By streamlining ETL, model training and experimentation, and compute selection, Lakeflow Jobs removed the operational burden of managing complex workflows. That allowed a single data scientist to build the GenAI assistant that became a key selling feature for the ad-tech company.

With Lakeflow, you can easily integrate AI capabilities into your data engineering platform and streamline AI workflows, making your data processes more efficient, insight-driven, and accessible. And there's more to come! Soon, you will be able to use Databricks Genie to author and debug pipelines using natural language.