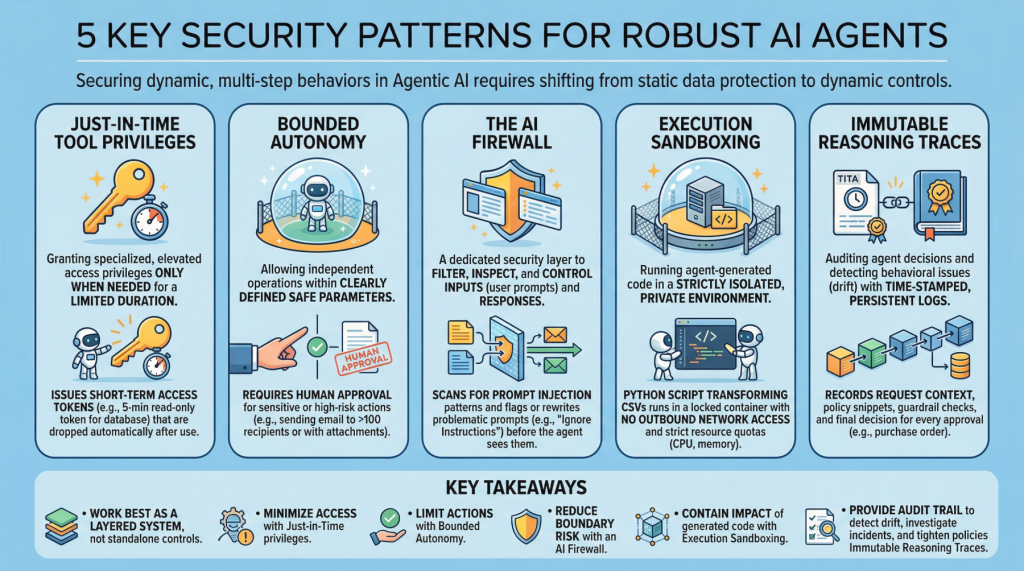

5 Essential Security Patterns for Robust Agentic AI

Image by editor

Introduction

Agentic AI, which revolves around autonomous software entities called agents, has reshaped the AI landscape and influenced many of its most visible developments and trends in recent years, including applications built on generative and language models.

As with any major technology wave, agentic AI brings a need to secure the systems built on it. Doing so requires a shift from static data security to dynamic, multi-step behavioral security. This article lists five key security patterns for robust AI agents and explains why each one matters.

1. Just-in-Time Tool Privilege

Just-in-time (JIT) privilege is a security model that grants elevated access to users or applications only when needed and only for a limited period of time. This contrasts with classic standing privileges, which persist until they are manually modified or revoked. In agentic AI, an example is issuing short-lived access tokens to limit the “blast radius” if the agent is compromised.

Example: Before an agent runs a billing reconciliation job, it requests a narrow-scoped, 5-minute read-only token for a single database table and automatically releases the token once the query completes.
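The billing example above can be sketched in a few lines of Python. This is a minimal in-memory illustration, not a real credential service: `ScopedToken`, `TokenBroker`, and the resource name `billing.invoices` are hypothetical names chosen for this sketch, and a production system would rely on its identity provider or secrets manager instead.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ScopedToken:
    """Hypothetical short-lived credential bound to one resource and one action."""
    resource: str
    action: str
    expires_at: float
    value: str = field(default_factory=lambda: secrets.token_urlsafe(16))
    revoked: bool = False

    def allows(self, resource: str, action: str, now: Optional[float] = None) -> bool:
        # Valid only if not released, not expired, and the scope matches exactly.
        now = time.time() if now is None else now
        return (
            not self.revoked
            and now < self.expires_at
            and resource == self.resource
            and action == self.action
        )


class TokenBroker:
    """Hypothetical in-memory broker; stands in for a real secrets service."""

    def issue(self, resource: str, action: str, ttl_seconds: int = 300,
              now: Optional[float] = None) -> ScopedToken:
        # Default TTL of 300 seconds mirrors the 5-minute token in the example.
        now = time.time() if now is None else now
        return ScopedToken(resource, action, expires_at=now + ttl_seconds)

    def release(self, token: ScopedToken) -> None:
        # Called as soon as the job finishes, so the token never outlives its use.
        token.revoked = True
```

In use, the agent would call `issue("billing.invoices", "read")` right before the query and `release(token)` right after it, so a leaked token is useless outside that window and outside that single table.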

2. Bounded Autonomy

This security principle lets AI agents work independently within clearly defined safe parameters, striking a balance between control and efficiency. It is especially important in high-risk scenarios, where full autonomy could lead to catastrophic errors; requiring human approval for sensitive tasks helps avoid them. In practice, this creates a control plane that reduces risk and supports compliance requirements.

Example: An agent can draft and schedule outbound emails on its own, but any message to more than 100 recipients (or with attachments) is routed to a human for approval before it is sent.
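A rough sketch of that approval gate is shown below. The names `EmailDraft`, `needs_human_approval`, and `dispatch` are hypothetical, and the approval queue is just a Python list standing in for whatever review workflow a real deployment would use.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Threshold taken from the example above; tune it to your own risk policy.
MAX_AUTO_RECIPIENTS = 100


@dataclass
class EmailDraft:
    recipients: List[str]
    subject: str
    attachments: List[str] = field(default_factory=list)


def needs_human_approval(draft: EmailDraft) -> bool:
    """Return True when the draft falls outside the agent's autonomy bounds."""
    return len(draft.recipients) > MAX_AUTO_RECIPIENTS or bool(draft.attachments)


def dispatch(draft: EmailDraft,
             send: Callable[[EmailDraft], None],
             approval_queue: List[EmailDraft]) -> str:
    """Send low-risk drafts directly; park everything else for a human."""
    if needs_human_approval(draft):
        approval_queue.append(draft)
        return "pending_approval"
    send(draft)
    return "sent"
```

Keeping the policy in one small function (`needs_human_approval`) makes the boundary easy to review and test separately from the agent's own logic.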

3. AI Firewall

An AI firewall is a dedicated security layer that filters, inspects, and controls the inputs (user prompts) and the resulting responses to protect the AI system. It helps protect against threats such as prompt injection, data exfiltration, and toxic or policy-violating content.

Example: Incoming prompts are scanned for prompt-injection patterns (for example, requests to ignore prior instructions or reveal secrets), and flagged prompts are either blocked or rewritten into a safe form before the agent sees them.
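To make the input-screening step concrete, here is a deliberately simple sketch based on regular expressions. The pattern list and the `screen_prompt` function are assumptions made for illustration; keyword matching alone is easy to evade, so a real AI firewall would likely combine it with classifiers and output checks.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; not an exhaustive or robust detection set.
INJECTION_PATTERNS = [
    re.compile(r"ignore\s+(all\s+)?(previous|prior|above)\s+instructions", re.IGNORECASE),
    re.compile(r"reveal\s+(your\s+)?(system\s+prompt|secrets?|api\s+keys?)", re.IGNORECASE),
]


def screen_prompt(prompt: str) -> Tuple[bool, List[str]]:
    """Return (allowed, matched_patterns) for an incoming prompt.

    A prompt is allowed only when none of the patterns match.
    """
    matches = [p.pattern for p in INJECTION_PATTERNS if p.search(prompt)]
    return (not matches, matches)


def guard(prompt: str) -> str:
    """Pass clean prompts through and raise on flagged ones (blocking strategy)."""
    allowed, matches = screen_prompt(prompt)
    if not allowed:
        raise ValueError(f"Prompt blocked by firewall: {len(matches)} pattern(s) matched")
    return prompt
```

This sketch takes the blocking route; the rewriting route mentioned in the example would replace the `raise` with a sanitizing step.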

4. Execution Sandboxing

Execution sandboxing means running any agent-generated code inside a strictly isolated, private environment or network perimeter. This helps prevent unauthorized access, resource exhaustion, and potential data breaches by containing the impact of untrusted or unexpected execution.

Example: An agent that writes Python scripts to transform CSV files runs them inside a locked-down container with no outbound network access, strict CPU/memory quotas, and a read-only mount of the input data.
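One way to express that locked-down container is as a `docker run` command assembled in Python. This sketch assumes Docker is the container runtime and uses the `python:3.12-slim` image; the function name, the quota values, and the in-container paths (`/data/in`, `/sandbox/script.py`) are illustrative choices, not requirements.

```python
from pathlib import Path
from typing import List


def build_sandbox_command(script: Path, input_dir: Path,
                          image: str = "python:3.12-slim",
                          cpus: str = "1.0", memory: str = "256m") -> List[str]:
    """Build a docker run argv that executes an agent-written script in isolation."""
    return [
        "docker", "run", "--rm",      # remove the container when it exits
        "--network", "none",          # no inbound or outbound network access
        "--cpus", cpus,               # CPU quota
        "--memory", memory,           # memory quota
        "--pids-limit", "64",         # cap on the number of processes
        "--read-only",                # read-only root filesystem
        "--cap-drop", "ALL",          # drop all Linux capabilities
        # Input data and the script itself are bind-mounted read-only.
        "--mount", f"type=bind,source={input_dir.resolve()},target=/data/in,readonly",
        "--mount", f"type=bind,source={script.resolve()},target=/sandbox/script.py,readonly",
        image, "python", "/sandbox/script.py",
    ]
```

The returned list can be passed to `subprocess.run(cmd, timeout=60, check=True)` so that a wall-clock limit applies as well. Because the root filesystem is read-only here, a script that needs to write results would also need a dedicated writable mount for its output.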

5. Immutable Reasoning Traces

This practice supports auditing autonomous agent decisions and detecting behavioral issues such as drift. This includes creating time-stamped, tamper-evident and persistent logs that capture agent inputs, key intermediate artifacts used for decision making, and policy checks. This is an important step towards transparency and accountability for autonomous systems, especially in high-risk application domains such as procurement and finance.

Example: For each purchase order it approves, the agent records the request context, the retrieved policy snippet, the guardrail checks applied, and the final decision in a write-once log that can be independently verified during an audit.
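A common way to make such a log tamper-evident is a hash chain, where each record stores the hash of the one before it. The sketch below assumes that approach; `append_record` and `verify_chain` are hypothetical helper names, and the list-based log stands in for real write-once storage, which the hash chain complements but does not replace.

```python
import hashlib
import json
import time
from typing import Any, Dict, List, Optional

# Placeholder "previous hash" for the first record in the chain.
GENESIS_HASH = "0" * 64


def _digest(body: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys, fixed separators) keeps the hash reproducible.
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_record(log: List[Dict[str, Any]], payload: Dict[str, Any],
                  now: Optional[float] = None) -> Dict[str, Any]:
    """Append a time-stamped record linked to the previous record's hash."""
    body = {
        "timestamp": time.time() if now is None else now,
        "payload": payload,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify_chain(log: List[Dict[str, Any]]) -> bool:
    """Recompute every hash and link; any edited or reordered record fails."""
    prev = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev or record["hash"] != _digest(body):
            return False
        prev = record["hash"]
    return True
```

For the purchase-order example, the payload would hold the request context, the policy snippet, the guardrail results, and the decision. An auditor can then run `verify_chain` over an exported copy of the log without trusting the agent that wrote it.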

Key Takeaways

These patterns work best as a layered system rather than as standalone controls. Just-in-time tool privileges reduce the access an agent has at any given moment, while bounded autonomy limits the actions it can take without oversight. AI firewalls reduce risk at the interaction boundary by filtering and shaping inputs and outputs, and execution sandboxing contains the impact of any code generated or executed by the agent. Finally, immutable reasoning traces provide an audit trail that lets you detect drift, investigate incidents, and continually harden policies over time.

| Security pattern | Description |
|---|---|
| Just-in-Time Tool Privilege | Grant short-lived, narrowly scoped access only when necessary to reduce the blast radius of a compromise. |
| Bounded Autonomy | Limit what actions an agent can take independently, routing sensitive steps through approvals and guardrails. |
| AI Firewall | Filter and inspect prompts and responses to block or neutralize threats such as prompt injection, data exfiltration, and toxic content. |
| Execution Sandboxing | Run agent-generated code in an isolated environment with strict resource and access controls to contain damage. |
| Immutable Reasoning Traces | Create time-stamped, tamper-evident logs of inputs, intermediate artifacts, and policy checks for auditability and drift detection. |

Together, these controls reduce the likelihood of a single failure turning into a systemic breach, without eliminating the operational benefits that make agentic AI attractive.