The era of the ‘Copilot’ is officially getting an upgrade. While the tech world has spent the last two years getting comfortable with AI that suggests code or drafts emails, the ByteDance team is moving the goalposts. It has released DeerFlow 2.0, a new open-source ‘superagent’ framework that doesn’t just suggest tasks; it carries them out. DeerFlow is designed to research, code, build websites, create slide decks, and generate video content autonomously.

Sandbox: An AI with its own computer

The most important differentiator for DeerFlow is its approach to execution. Most AI agents work within the constraints of a text-box interface, sending queries to an API and returning a string of text. If that text contains code you want to run, you, a human, must copy, paste, and debug it.

DeerFlow flips this script. It operates within a real, isolated Docker container.

For software developers, the implications are massive. This is not an AI ‘hallucinating’ that it ran a script; it is an agent with a full file system, a Bash terminal, and the ability to read and write actual files. When you give DeerFlow a task, it doesn’t just suggest a Python script to analyze a CSV file: it spins up the environment, installs the dependencies, executes the code, and hands you the resulting chart.
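To make the idea concrete, here is a minimal sketch of what ‘run it in a container’ can look like from Python. It is not DeerFlow’s actual implementation: the image name, the `/workspace` mount point, and the helper names `build_sandbox_command` and `run_in_sandbox` are all assumptions chosen for illustration. It simply shells out to the standard `docker run` CLI.

```python
import shlex
import subprocess
from pathlib import Path

# Hypothetical defaults for illustration; not DeerFlow's real configuration.
SANDBOX_IMAGE = "python:3.12-slim"
CONTAINER_WORKDIR = "/workspace"


def build_sandbox_command(host_dir: Path, script_name: str, packages: list[str]) -> list[str]:
    """Build a `docker run` command that installs packages, then runs a script."""
    steps = []
    if packages:
        quoted = " ".join(shlex.quote(p) for p in packages)
        steps.append(f"pip install --quiet {quoted}")
    steps.append(f"python {shlex.quote(script_name)}")
    return [
        "docker", "run", "--rm",                            # throwaway container
        "-v", f"{host_dir.resolve()}:{CONTAINER_WORKDIR}",  # share the project files
        "-w", CONTAINER_WORKDIR,                            # run from the mounted dir
        SANDBOX_IMAGE,
        "sh", "-c", " && ".join(steps),
    ]


def run_in_sandbox(host_dir: Path, script_name: str, packages: list[str]) -> str:
    """Execute the script in the container and return its stdout (requires Docker)."""
    result = subprocess.run(
        build_sandbox_command(host_dir, script_name, packages),
        capture_output=True, text=True, check=True,
    )
    return result.stdout
```

Calling `run_in_sandbox(Path("project"), "analyze.py", ["pandas", "matplotlib"])` would run the script against the files in `project/`, and any chart the script writes to disk would appear in that same directory on the host.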

By giving the AI its own ‘computer’, the ByteDance team has addressed one of the biggest friction points in agentic workflows: hand-offs. Because it has stateful memory and a persistent file system, DeerFlow can remember your specific writing styles, project structures, and preferences across different sessions.
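How DeerFlow stores this memory is not described here, but the underlying idea, state that outlives a single session because it lives on disk, can be sketched in a few lines. The `PreferenceStore` class and the JSON file layout below are hypothetical and exist only to illustrate the concept.

```python
import json
from pathlib import Path


class PreferenceStore:
    """Tiny JSON-backed key/value store that survives across sessions.

    Hypothetical illustration only; not DeerFlow's memory system.
    """

    def __init__(self, path: Path) -> None:
        self.path = path
        self.data: dict[str, str] = {}
        if path.exists():
            # Reload whatever an earlier session saved.
            self.data = json.loads(path.read_text(encoding="utf-8"))

    def remember(self, key: str, value: str) -> None:
        """Record a preference and write it to disk immediately."""
        self.data[key] = value
        self.path.write_text(json.dumps(self.data, indent=2), encoding="utf-8")

    def recall(self, key: str, default: str = "") -> str:
        """Return a stored preference, or the default if none was saved."""
        return self.data.get(key, default)
```

A first session might call `PreferenceStore(path).remember("writing_style", "concise")`; a later session that constructs a new `PreferenceStore` on the same path can `recall` the value without being told again.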

Multi-agent orchestration: divide, conquer, and unite

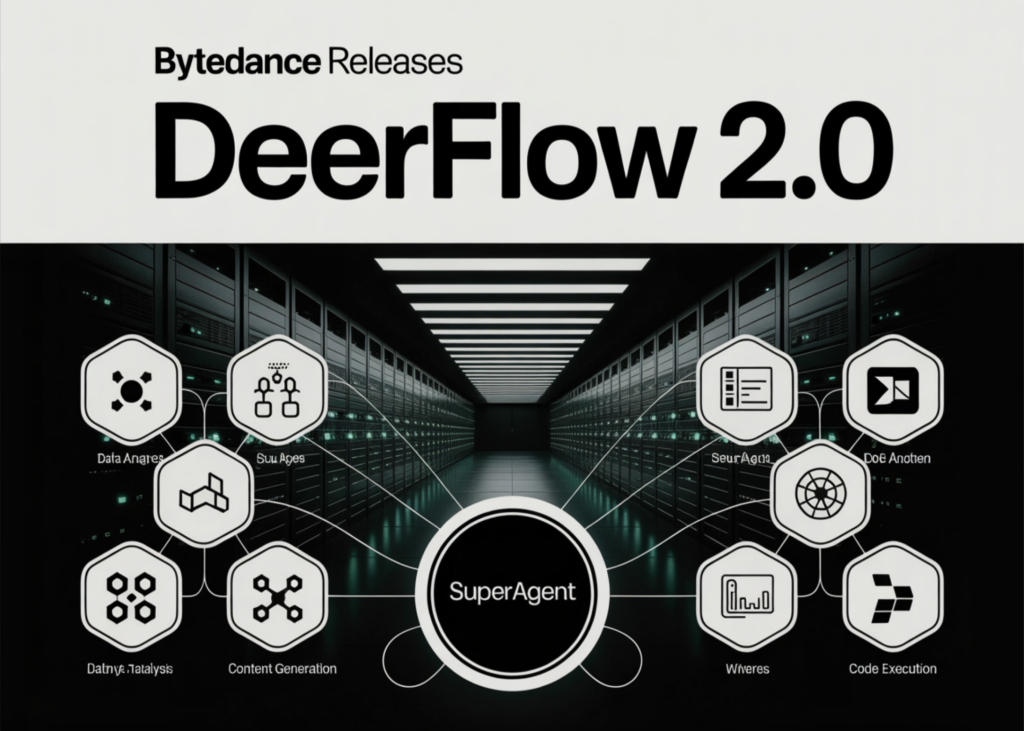

The ‘magic’ of DeerFlow lies in its orchestration layer. It uses a superagent harness: a lead agent that acts as a project manager.

When a complex prompt is received (for example, ‘Research the top 10 AI startups in 2026 and prepare a comprehensive presentation for me’), DeerFlow doesn’t try to do it all in one linear thought process. Instead, it employs task decomposition:

- Lead agent: Breaks the prompt into logical subtasks.

- Sub-agents: Spawned in parallel. One can handle web scraping for funding data, another can perform competitive analysis, and a third can generate relevant images.

- Convergence: Once the sub-agents complete their tasks in their respective sandboxes, the results are sent back to the lead agent.

- Final delivery: A final agent compiles the data into a polished deliverable, such as a slide deck or a full web application.

This parallel processing significantly reduces delivery times for ‘heavy’ tasks that would traditionally take a human researcher or developer hours to synthesize.
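The fan-out and fan-in pattern described above can be sketched with Python’s `asyncio`. This is an illustration of the pattern only, not DeerFlow’s code: `decompose`, `run_subagent`, and `lead_agent` are hypothetical names, the decomposition is hard-coded where a real lead agent would ask a model to plan, and each sub-agent is a stand-in that just sleeps briefly.

```python
import asyncio


def decompose(prompt: str) -> dict[str, str]:
    """Hard-coded plan; a real lead agent would ask an LLM to produce this."""
    return {
        "researcher": f"collect funding data for: {prompt}",
        "analyst": f"run competitive analysis for: {prompt}",
        "designer": f"generate images for: {prompt}",
    }


async def run_subagent(name: str, subtask: str) -> str:
    """Stand-in for a sub-agent; a real one would call a model and tools."""
    await asyncio.sleep(0.01)  # simulate I/O-bound work
    return f"[{name}] finished: {subtask}"


async def lead_agent(prompt: str) -> str:
    subtasks = decompose(prompt)
    # Fan out: all sub-agents run concurrently; gather keeps the input order.
    results = await asyncio.gather(
        *(run_subagent(name, task) for name, task in subtasks.items())
    )
    # Converge: merge the partial results into one deliverable.
    return "\n".join(results)


if __name__ == "__main__":
    print(asyncio.run(lead_agent("top 10 AI startups")))
```

Because the sub-agents wait concurrently rather than one after another, the total wall-clock time is close to that of the slowest sub-agent instead of the sum of all of them, which is where the time savings on ‘heavy’ tasks come from.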

From research tools to full-stack automation

Interestingly, DeerFlow was not originally intended to be so broad. It started life at ByteDance as a specialized deep research tool. However, as the community began using it, they pushed the limits of its capabilities.

Users began leveraging its Docker-based execution to build automated data pipelines, spin up real-time dashboards, and even build full-scale web applications from scratch. Recognizing that the community wanted an execution engine rather than just a search tool, ByteDance rewrote the framework from the ground up.

The result is DeerFlow 2.0, a versatile framework that can handle:

- Deep Web Research: Collecting cited sources from across the web.

- Content Creation: Generating reports with integrated charts, images, and videos.

- Code Execution: Running Python scripts and Bash commands in a secure environment.

- Asset Creation: Creating complete slide decks and UI components.

Key takeaways

- Execution-first sandbox: Unlike traditional AI agents, DeerFlow works in an isolated, Docker-based sandbox. This gives the agent a real file system, a Bash terminal, and the ability to execute code and run commands instead of just making suggestions.

- Hierarchical multi-agent orchestration: The framework uses a ‘superagent’ lead to break down complex tasks into sub-tasks. It spawns parallel sub-agents to handle the various components, such as scraping data, creating images, or writing code, before converging the results into a final deliverable.

- The ‘superagent’ pivot: DeerFlow 2.0, originally a deep research tool, was completely rewritten to become a task-agnostic harness. It can now build full-stack web applications, produce professional slide decks, and automate complex data pipelines.

- Complete model agnosticism: DeerFlow is designed to be LLM-neutral. It integrates with any OpenAI-compatible API, allowing engineers to swap between GPT-4, Claude 3.5, Gemini 1.5, or even local models via DeepSeek and Ollama without changing the underlying agent logic.

- Stateful memory and persistence: The agent features a persistent memory system that tracks user preferences, writing styles, and project context across multiple sessions. This allows it to act as a long-term ‘AI employee’ rather than a one-time session tool.
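The model-agnosticism point above is easiest to see in code. The sketch below shows why an OpenAI-compatible API makes swapping models cheap: only the base URL, model name, and key change, while the request shape stays the same. This is not DeerFlow’s configuration format. `ModelEndpoint`, `ENDPOINTS`, and `build_chat_request` are hypothetical names, and the model names and the local URL (Ollama’s default OpenAI-compatible address) are placeholders to adapt to your own setup.

```python
import json
import urllib.request
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEndpoint:
    """Everything that differs between OpenAI-compatible providers."""
    base_url: str
    model: str
    api_key: str = "not-needed"  # local servers typically ignore the key


# Placeholder entries for illustration; adjust URLs, models, and keys.
ENDPOINTS = {
    "hosted": ModelEndpoint("https://api.openai.com/v1", "gpt-4", "YOUR_API_KEY"),
    "local": ModelEndpoint("http://localhost:11434/v1", "llama3"),
}


def build_chat_request(endpoint: ModelEndpoint, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for any endpoint."""
    body = {
        "model": endpoint.model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{endpoint.base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {endpoint.api_key}",
        },
        method="POST",
    )
```

Switching from the hosted model to the local one is then a one-word change, `ENDPOINTS["hosted"]` to `ENDPOINTS["local"]`, and the agent logic that calls `build_chat_request` stays untouched.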
