Researchers at Meta’s FAIR Lab have released NeuralSet, a Python framework designed to eliminate one of the most persistent obstacles in neuro-AI research: the painful, fragmented process of getting brain data into a deep learning pipeline.

The problem: Neuroscience data is stuck in the pre-deep-learning era

Neuroscience already has excellent, battle-tested software. Tools such as MNE-Python, EEGLAB, FieldTrip, Brainstorm, Nilearn, and fMRIPrep are the gold standard for signal processing in electrophysiology and neuroimaging. The trouble is that these tools were designed for a pre-deep-learning world: they rely on eager loading, assuming that entire datasets fit in RAM, and they lack a native abstraction for temporally aligning neural time series with high-dimensional embeddings from modern AI frameworks like HuggingFace Transformers.

The result? Researchers put enormous effort into building ad-hoc pipelines, which require manual data wrangling, manual caching, and complex backend configuration – just to combine brain signals with GPT-2 text embeddings for a single experiment. As public datasets on platforms like OpenNeuro now reach terabyte scale, and experimental protocols increasingly incorporate continuous speech and video stimuli, this infrastructure gap is no longer an inconvenience – it is a scientific barrier.

What does NeuralSet actually do?

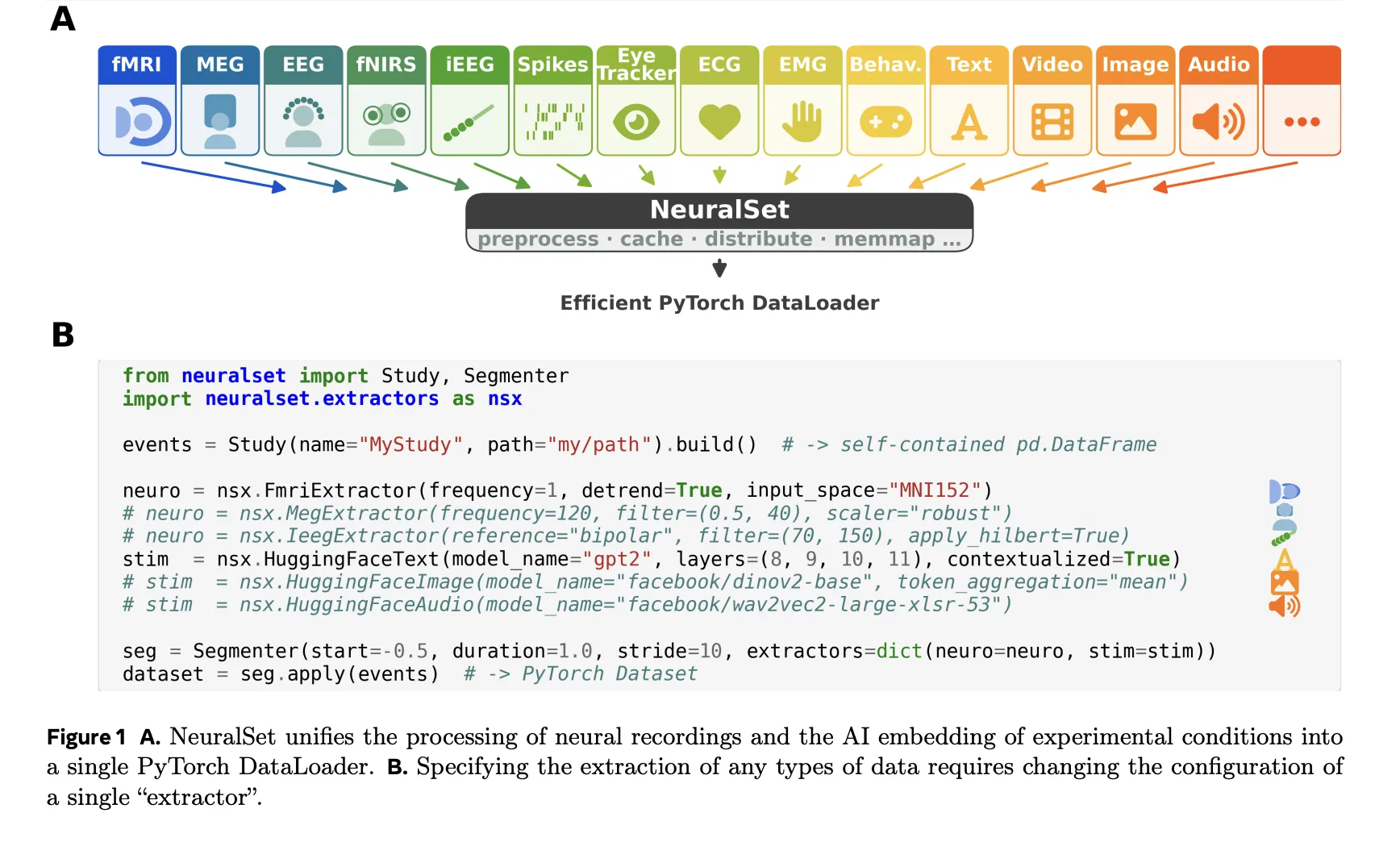

The main design principle of NeuralSet is structure-data decoupling. Instead of preloading raw signals, NeuralSet represents the logical structure of any experiment as lightweight, event-driven metadata – completely separated from the memory- and compute-intensive extraction of the actual signals. The framework is organized around five core abstractions: Events, Extractors, Segments, Batch Data, and a backend layer.

In practice, everything in an experiment – an fMRI run, a word spoken during a task, a video stimulus – is modeled as an event: a lightweight Python dictionary defined by a type, a start time, a duration, and a timeline (a unique identifier for a continuous recording session). A Study object aggregates all events in the entire dataset into a single Pandas DataFrame. Importantly, NeuralSet supports BIDS-compliant datasets, although it is not limited to them. Because the DataFrame contains only light metadata – not the raw signals themselves – engineers can filter, explore, and recombine large datasets using standard Pandas operations without loading a single byte of raw data into memory.
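To make the events-as-metadata idea concrete, here is a minimal sketch in plain Pandas. It does not use NeuralSet itself: the column names follow the description above (type, start, duration, timeline), while the type labels and timeline identifiers are made up for illustration.

```python
import pandas as pd

# Hypothetical event records. The fields mirror the article's description;
# this is not NeuralSet's actual schema.
events = pd.DataFrame(
    [
        {"type": "Fmri", "start": 0.0, "duration": 600.0, "timeline": "sub-01_run-01"},
        {"type": "Word", "start": 12.4, "duration": 0.3, "timeline": "sub-01_run-01"},
        {"type": "Word", "start": 12.9, "duration": 0.5, "timeline": "sub-01_run-01"},
        {"type": "Image", "start": 3.0, "duration": 1.0, "timeline": "sub-02_run-01"},
    ]
)

# Metadata-only filtering: no raw signal is read at any point.
words = events[(events["type"] == "Word") & (events["timeline"] == "sub-01_run-01")]

# Count events per recording session.
counts = events.groupby("timeline")["type"].count()
```

Everything here operates on a few short rows per event, which is why this kind of exploration stays cheap even when the underlying recordings are very large.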

Composable EventsTransform operations can then be chained to enrich or filter events – for example, annotating words with their sentence context, assigning cross-validation splits, or splitting long audio and video events into shorter segments. Multiple study and transform steps can also be composed into a Chain, which creates a single reproducible, cacheable pipeline object.
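The chaining pattern can be sketched with ordinary functions over a DataFrame. The helper names below (`keep_types`, `assign_split`, `chain`) are hypothetical stand-ins chosen for this example, not NeuralSet's transform classes.

```python
from functools import reduce

import pandas as pd


def keep_types(*types):
    """Return a transform that keeps only events of the given types."""
    def transform(events):
        return events[events["type"].isin(types)].reset_index(drop=True)
    return transform


def assign_split(n_folds):
    """Return a transform that assigns each event a fold index, round-robin."""
    def transform(events):
        out = events.copy()
        out["fold"] = [i % n_folds for i in range(len(out))]
        return out
    return transform


def chain(*transforms):
    """Compose transforms left to right into a single callable."""
    return lambda events: reduce(lambda df, t: t(df), transforms, events)


events = pd.DataFrame(
    {
        "type": ["Word", "Image", "Word", "Word"],
        "start": [0.0, 1.0, 2.0, 3.0],
        "duration": [0.3, 1.0, 0.4, 0.2],
    }
)

pipeline = chain(keep_types("Word"), assign_split(n_folds=2))
result = pipeline(events)
```

Because each step takes a DataFrame and returns a new one, the composed pipeline is itself just another transform, which is what makes a chain easy to reuse and cache as one unit.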

When it’s time to actually work with the data, NeuralSet uses extractors to bridge the gap between the metadata layer and the numerical arrays needed for machine learning models. For neural recordings, NeuralSet directly wraps the preprocessing stacks of domain-specific libraries: an FmriExtractor delegates to Nilearn for signal cleaning, spatial smoothing, and surface or atlas-based projection, while a MegExtractor or EegExtractor delegates to MNE-Python for filtering, re-referencing, and resampling. The same unified interface covers iEEG, fNIRS, EMG, and spike recordings – switching modalities only requires changing configuration parameters, not rewriting the pipeline.

For experimental stimuli, NeuralSet offers native integration with the HuggingFace ecosystem. For example, a HuggingFaceImage extractor can embed stimulus frames via DINOv2 or CLIP; analogous extractors exist for audio (Wav2Vec, Whisper), text (GPT-2, LLaMA), and video (VideoMAE). Critically, NeuralSet can extend a static embedding – say, one vector per image – into a time series at an arbitrary frequency, so that the stimulus representation is always temporally aligned with the neural recording.
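One simple way to turn a single vector into a time series is to hold it constant while the stimulus is present. The NumPy sketch below assumes that strategy, with zeros outside the stimulus; the article does not say how NeuralSet fills those samples, and the function name and arguments are illustrative only.

```python
import numpy as np


def embedding_to_timeseries(embedding, onset, duration, window_start, window_stop, freq):
    """Hold a static embedding constant while its stimulus is present.

    Returns the sample times and an array of shape (n_samples, dim) sampled
    at `freq` Hz over [window_start, window_stop). Samples outside the
    stimulus are zero (an assumption made for this sketch).
    """
    n_samples = int(round((window_stop - window_start) * freq))
    times = window_start + np.arange(n_samples) / freq
    active = (times >= onset) & (times < onset + duration)
    series = np.zeros((n_samples, embedding.shape[0]), dtype=embedding.dtype)
    series[active] = embedding
    return times, series


embedding = np.array([1.0, 2.0, 3.0])  # stand-in for a DINOv2 or CLIP vector
times, series = embedding_to_timeseries(
    embedding, onset=1.0, duration=0.5, window_start=0.0, window_stop=2.0, freq=10.0
)
```

Choosing `freq` to match the sampling rate of the neural recording gives both arrays the same number of time steps for a given window, so they can be stacked or compared sample by sample.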

Extractors follow a three-stage execution model: configure (parameter validation at creation time), prepare (pre-computing and caching heavy outputs for all events), and extract (lazy retrieval from the cache during model training). This means that expensive computations – such as running a large language model on every word in a corpus – are done once and reused across experiments. The output of the extractors for a single segment is Batch Data: a dictionary of tensors keyed by extractor name, along with the corresponding segments.
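The three-stage lifecycle can be illustrated with a toy class. This is a sketch of the pattern only: `ToyExtractor`, its in-memory cache, and the fake "embedding" are assumptions for the example, and the third stage is called `extract` here.

```python
class ToyExtractor:
    """Hypothetical extractor with configure, prepare, and extract stages."""

    def __init__(self, scale):
        # Configure: validate parameters at construction time.
        if scale <= 0:
            raise ValueError("scale must be positive")
        self.scale = scale
        self._cache = {}
        self.n_computed = 0

    def _compute(self, text):
        # Stand-in for an expensive call such as a language model forward pass.
        self.n_computed += 1
        return [self.scale * len(text)]

    def prepare(self, texts):
        # Prepare: compute once per unique input and store the result.
        for text in texts:
            if text not in self._cache:
                self._cache[text] = self._compute(text)

    def extract(self, text):
        # Extract: cheap lookup during training; nothing is recomputed.
        return self._cache[text]


extractor = ToyExtractor(scale=2.0)
extractor.prepare(["the", "cat", "the", "sat"])
vector = extractor.extract("cat")
```

The repeated word "the" is computed only once during `prepare`, and later calls to `extract` never trigger `_compute` at all.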

Segmenter, DataLoader, and Cluster-Ready Infrastructure

A Segmenter divides the events DataFrame into segments – contiguous temporal windows representing single training examples – either on a sliding-window grid or anchored to a specific trigger event such as an image or word onset. The resulting SegmentDataset is a standard PyTorch Dataset, directly compatible with DataLoader, PyTorch Lightning, or any PyTorch-based framework.
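Both segmentation strategies reduce to producing a list of (start, stop) windows, which a dataset object then uses to slice the signal on demand. The sketch below uses plain Python with hypothetical names; the class implements only `__len__` and `__getitem__`, the two methods a PyTorch map-style dataset is expected to provide.

```python
def sliding_windows(start, stop, duration, stride):
    """Return (start, stop) windows on a regular grid that fit in [start, stop]."""
    windows = []
    t = start
    while t + duration <= stop:
        windows.append((t, t + duration))
        t += stride
    return windows


def anchored_windows(onsets, offset, duration):
    """Return one window per trigger onset, beginning `offset` seconds from it."""
    return [(onset + offset, onset + offset + duration) for onset in onsets]


class ToySegmentDataset:
    """Map-style dataset that slices a signal lazily, one window at a time."""

    def __init__(self, signal, sfreq, windows):
        self.signal = signal
        self.sfreq = sfreq
        self.windows = windows

    def __len__(self):
        return len(self.windows)

    def __getitem__(self, index):
        start, stop = self.windows[index]
        first = int(round(start * self.sfreq))
        last = int(round(stop * self.sfreq))
        return self.signal[first:last]


signal = list(range(100))  # 10 seconds of a toy signal sampled at 10 Hz
grid = ToySegmentDataset(signal, sfreq=10, windows=sliding_windows(0, 10, 4, 2))
anchored = ToySegmentDataset(
    signal, sfreq=10, windows=anchored_windows([1.0, 5.0], offset=-0.5, duration=2.0)
)
```

Here `grid` yields overlapping 4-second windows every 2 seconds, while `anchored` yields one 2-second window per onset, starting half a second before it.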

NeuralSet is built on the exca package, which handles deterministic, hash-based caching, full computational provenance, and hardware-agnostic execution. Changing a single preprocessing parameter invalidates only the affected downstream cache, leaving independent branches untouched. Full provenance is preserved, meaning that any processed tensor can be traced back to the exact version of the raw data and the specific preprocessing chain used to generate it. Researchers can prototype on a single subject on their laptop, then dispatch 100 subjects to a SLURM-based HPC cluster by changing a single configuration flag – no infrastructure-specific code required.
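The selective invalidation described above follows naturally from deriving each cache key from a step's parameters plus the key of its upstream step. The sketch below shows that general idea with the standard library; it is not how exca is implemented, and the step names and parameters are invented for the example.

```python
import hashlib
import json


def cache_key(step_name, params, upstream_key=""):
    """Build a deterministic key from a step's name, parameters, and upstream key."""
    payload = json.dumps(
        {"step": step_name, "params": params, "upstream": upstream_key},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def pipeline_keys(filter_hz, model_name):
    """Return the cache keys of two branches that share one raw-data step."""
    raw = cache_key("load", {"dataset": "toy"})
    neural = cache_key("filter", {"l_freq": filter_hz}, upstream_key=raw)
    stimulus = cache_key("embed", {"model": model_name}, upstream_key=raw)
    return {"neural": neural, "stimulus": stimulus}


before = pipeline_keys(filter_hz=0.5, model_name="gpt2")
after = pipeline_keys(filter_hz=1.0, model_name="gpt2")
```

Changing the filter frequency produces a new key for the neural branch, so that branch is recomputed, while the stimulus branch keeps its key and its cached embeddings remain valid.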

NeuralSet uses pydantic to enforce strict schema validation at initialization time for every configurable object – events, studies, extractors, segmenters, and transforms are all pydantic BaseModel subclasses. This means that a misconfigured parameter (for example, a negative filter frequency or an invalid BIDS directory path) produces a clear error immediately, before any job is submitted, rather than failing hours into a processing run.
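As an illustration of fail-fast validation, the following sketch defines a small pydantic model with a positivity constraint. `BandpassConfig` and its fields are hypothetical and are not taken from NeuralSet; only the pydantic calls themselves (`BaseModel`, `Field`, `ValidationError`) are real.

```python
from pydantic import BaseModel, Field, ValidationError


class BandpassConfig(BaseModel):
    """Hypothetical filter configuration; not a NeuralSet class."""

    l_freq: float = Field(gt=0)  # low cutoff in Hz, must be positive
    h_freq: float = Field(gt=0)  # high cutoff in Hz, must be positive


# A valid configuration is accepted at construction time.
valid = BandpassConfig(l_freq=0.5, h_freq=40.0)

# A negative frequency is rejected immediately, before any processing starts.
try:
    BandpassConfig(l_freq=-1.0, h_freq=40.0)
    error_message = None
except ValidationError as err:
    error_message = str(err)
```

The error is raised when the object is created, so a bad parameter never reaches the point where a long-running job would be launched.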

How does it stack up against existing tools?

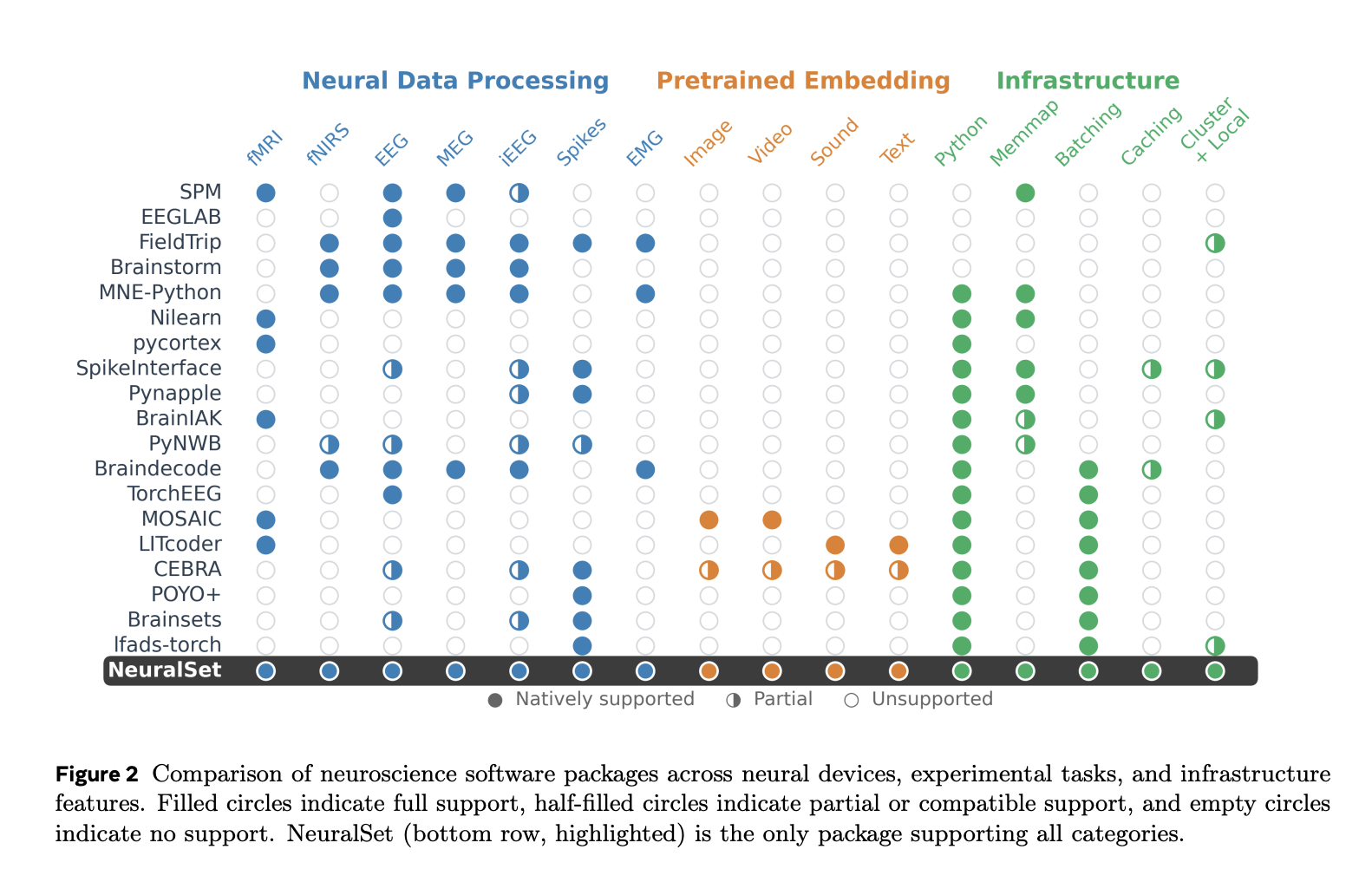

In the paper, the research team presents a detailed comparison of NeuralSet against 18 existing neuroscience software packages across neural recording modalities (fMRI, EEG, MEG, iEEG, spikes, and more), stimulus types (image, video, sound, text), and infrastructure (Python support, memmap, batching, caching, cluster execution). In that comparison, NeuralSet is the only package with full support across all categories.

Key takeaways

- NeuralSet integrates brain data and AI into one pipeline. Meta FAIR researchers created NeuralSet to bridge the gap between diverse neural recordings (fMRI, M/EEG, spikes) and modern deep learning frameworks, providing a single PyTorch-ready dataloader for both.

- Structure-data decoupling eliminates memory bottlenecks. NeuralSet separates lightweight event metadata from heavy signal extraction, so AI devs and researchers can filter and explore terabyte-scale datasets without loading a single byte of raw data into RAM.

- Switching recording modalities only requires changing configuration parameters. A unified extractor interface wraps MNE-Python, Nilearn, and HuggingFace models – covering fMRI, EEG, MEG, iEEG, fNIRS, EMG, spikes, text, audio, and video – without requiring any pipeline rewriting.

- Pydantic validation and deterministic caching prevent wasted computation. Configuration errors are caught early, before any jobs run, and a hash-based caching system ensures that expensive computations like LLM embeddings are done once and reused across experiments.

- The same code runs on a laptop or a SLURM cluster. NeuralSet’s hardware-agnostic backend, powered by the exca package, lets researchers and AI developers seamlessly scale from local prototyping to high-performance cluster execution by updating a single configuration flag.

Check out the paper and the GitHub page.