
# Introduction

Before we start anything, I want you to watch this video:

Isn’t it amazing? You can now run a full model locally, talk to it on your own machine, and it works out of the box. It feels like talking to a real person because the system can listen and speak at the same time, as in a natural conversation.

This is not the usual “you speak, it waits, then it answers” pattern. PersonaPlex is a real-time speech-to-speech conversational AI. It handles interruptions, overlaps, and natural conversational cues such as “uh-huh” or “right” while you’re talking.

PersonaPlex is designed as a full-duplex system, so it can listen and produce speech simultaneously without forcing the user to pause first. This makes conversations feel more fluid and human-like than with traditional voice assistants.

In this tutorial, we’ll learn how to set up a Linux environment, install PersonaPlex locally, and then start the PersonaPlex web server so you can interact with the AI in your browser in real time.

# Using PersonaPlex Locally: A Step-by-Step Guide

In this section, we’ll install PersonaPlex on Linux, launch the real-time WebUI, and start talking to a full-duplex speech-to-speech AI model running locally on our machine.

// Step 1: Accepting the Model Terms and Generating a Token

Before you can download and run PersonaPlex, you must accept the terms of use for the model on Hugging Face. NVIDIA’s speech-to-speech model PersonaPlex-7B-v1 is gated, which means you can’t access it unless you agree to the license terms on the model page.

Go to the PersonaPlex model page on Hugging Face and log in. You will see a notice saying that you need to agree to share your contact information and accept the license terms to access the files. Review the NVIDIA Open Model License and accept the terms to unlock the repository.

Once you have access, create a Hugging Face access token:

- Go to Settings → Access Tokens

- Create a new token with Read permission

- Copy the generated token

Then export this in your terminal:

export HF_TOKEN="YOUR_HF_TOKEN"

This token allows your local machine to authenticate and download the PersonaPlex model.
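Before moving on, it can help to confirm that the variable is actually visible to the shell that will launch the server. The snippet below is a minimal sketch; `check_hf_token` is a hypothetical helper name, not part of PersonaPlex or the Hugging Face tooling.

```shell
# Hypothetical helper: succeed only if HF_TOKEN is set and non-empty.
check_hf_token() {
  if [ -z "${HF_TOKEN:-}" ]; then
    echo "HF_TOKEN is not set; export it first" >&2
    return 1
  fi
  echo "HF_TOKEN is set"
}

# Run the check without aborting the current shell session.
check_hf_token || echo "Set the token, then re-run this check."
```

Note that `export` only affects the current terminal session, so a new terminal window needs the token exported again.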

// Step 2: Installing Linux Dependencies

Before installing PersonaPlex, you must install the Opus audio codec development library. PersonaPlex relies on Opus to handle real-time audio encoding and decoding, so this dependency must be available on your system.

On Ubuntu or Debian-based systems, run:

sudo apt update

sudo apt install -y libopus-dev

// Step 3: Building PersonaPlex from Source
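Before cloning anything, it can help to confirm that the Opus development package from Step 2 is actually installed. This is a small sketch for Debian or Ubuntu systems; `opus_status` is a hypothetical helper name, and it relies only on `dpkg -s`, which reports whether a package is installed.

```shell
# Hypothetical helper: report whether libopus-dev is installed (Debian/Ubuntu).
opus_status() {
  if dpkg -s libopus-dev >/dev/null 2>&1; then
    echo "installed"
  else
    echo "missing"
  fi
}

echo "libopus-dev: $(opus_status)"
```

If it reports `missing`, re-run the `apt install` command from Step 2 before building.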

Now we will clone the PersonaPlex repository and install the required Moshi packages from source.

Clone the official NVIDIA repository:

git clone https://github.com/NVIDIA/personaplex.git

cd personaplex

Once inside the project directory, install Moshi:

This will compile and install the PersonaPlex components with all required dependencies, including PyTorch, CUDA libraries, NCCL, and audio tooling.

You should see packages like torch, nvidia-cublas-cu12, nvidia-cudnn-cu12, sentencepiece, and moshi-personaplex installed successfully.

Tip: If you are on your own machine, do this in a virtual environment.
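One common way to follow this tip is Python's built-in `venv` module, run before the install step. The sketch below assumes `python3` is on your PATH; the directory name `.venv` is an arbitrary choice, and on Debian or Ubuntu the `python3-venv` package may need to be installed first.

```shell
# Create an isolated environment in ./.venv (the name is an arbitrary choice).
python3 -m venv .venv

# Activate it for the current shell session.
. .venv/bin/activate

# Show which interpreter is now active; it should live inside .venv.
command -v python
```

Anything installed with `pip` while the environment is active stays inside `.venv`, so the large PyTorch and CUDA packages do not touch the system Python.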

// Step 4: Starting the WebUI Server

Before launching the server, install the fast Hugging Face downloader:

Now start the PersonaPlex real-time server:

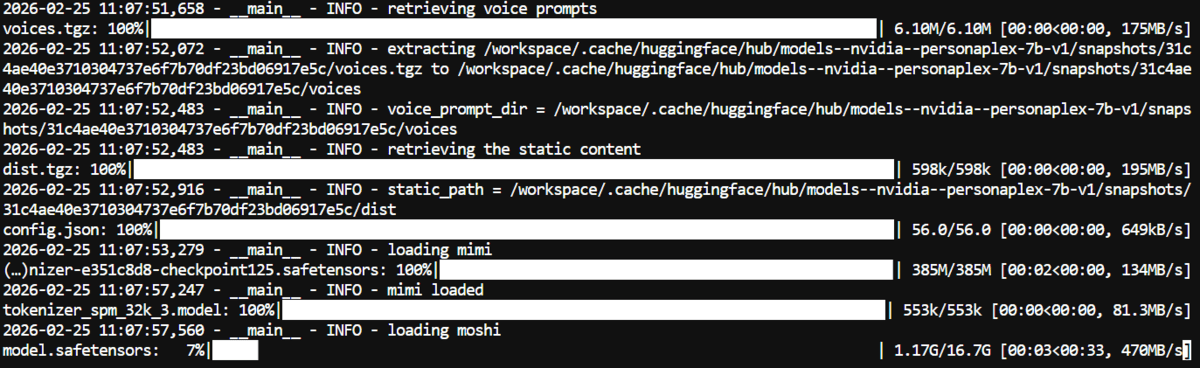

python -m moshi.server --host 0.0.0.0 --port 8998

The first run will download the full PersonaPlex model, which is approximately 16.7 GB. This may take some time depending on your internet speed.

After the download is complete, the model will be loaded into memory and the server will start.
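Because the first run pulls roughly 16.7 GB, a quick free-space check before launching can save a failed download. The sketch below is hedged: `has_free_space` is a hypothetical helper, the 20 GiB threshold is just a margin above the model size, and it assumes the weights land somewhere under your home directory (the usual default for the Hugging Face cache).

```shell
# Hypothetical helper: succeed when the filesystem holding DIR
# has at least NEED_GIB gibibytes available.
has_free_space() {
  dir=$1
  need_gib=$2
  # df -Pk reports 1024-byte blocks; column 4 of line 2 is the available space.
  avail_kb=$(df -Pk "$dir" | awk 'NR==2 {print $4}')
  [ "$avail_kb" -ge $((need_gib * 1024 * 1024)) ]
}

if has_free_space "$HOME" 20; then
  echo "Enough free space for the model download."
else
  echo "Less than 20 GiB free; clear some space first." >&2
fi
```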

// Step 5: Talking to PersonaPlex in the Browser

Now that the server is running, it’s time to actually talk to PersonaPlex.

If you’re running it on your local machine, copy this link and paste it into your browser: http://localhost:8998.

This will load the WebUI in your browser.
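If the page does not load, a quick check from the terminal can tell you whether the server is answering at all. This is a minimal sketch: `server_is_up` is a hypothetical helper built on `curl`, and the URL is the one used in this tutorial. The commented `ssh` line covers the case where the server runs on a remote machine; `user@remote-host` is a placeholder. Forwarding the port to localhost also matters because browsers generally expose the microphone only to secure contexts such as HTTPS pages or localhost.

```shell
# Hypothetical helper: succeed if something answers HTTP at the given URL.
server_is_up() {
  curl -s -o /dev/null --max-time 5 "$1"
}

if server_is_up "http://localhost:8998"; then
  echo "Server is reachable."
else
  echo "No response yet; the model may still be loading." >&2
fi

# For a server on a remote machine, forward the port first
# (user@remote-host is a placeholder), then open http://localhost:8998 locally:
#   ssh -N -L 8998:localhost:8998 user@remote-host
```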

As soon as the page opens:

- Choose a voice

- Click Add

- Grant microphone permissions

- Start speaking

The interface includes conversation templates. For this demo, we selected the Astronaut (fun) template to make the conversation more entertaining. You can also create your own template by editing the initial system prompt text. This allows you to completely customize the personality and behavior of the AI.

For the voice, we switched from the default and chose Natural F3 just to try something different.

And honestly, it feels surprisingly natural.

You can interrupt it while it is speaking.

You can ask follow-up questions.

You can change the subject mid-sentence.

It handles the flow of conversation smoothly and responds intelligently in real time. I also tested it by simulating a bank customer service call, and the experience felt realistic.

PersonaPlex includes multiple voice presets:

- Natural (Female): NATF0, NATF1, NATF2, NATF3

- Natural (Male): NATM0, NATM1, NATM2, NATM3

- Variety (Female): VARF0, VARF1, VARF2, VARF3, VARF4

- Variety (Male): VARM0, VARM1, VARM2, VARM3, VARM4

You can experiment with different voices to match the personality you want. Some feel more conversational, some more expressive.

# Concluding Remarks

After going through this entire setup and actually talking to PersonaPlex in real time, one thing becomes very clear.

This feels different.

We are accustomed to chat-based AI. You type. It responds. You wait for your turn. It feels transactional.

Speech-to-speech completely changes that dynamic.

With PersonaPlex running locally, you no longer have to wait your turn. You can interrupt it. You can change direction mid-sentence. You can naturally ask follow-up questions. The conversation keeps flowing. It feels really close to the way humans talk.

And that’s why I really believe that the future of AI is speech-to-speech.

But that’s only half the story.

The real change will happen when these real-time conversational systems are deeply connected to agents and devices. Imagine talking to your AI and saying, “Book me a ticket for Friday morning. Check the stock price and place the trade. Write and send that email. Schedule a meeting. Pull the report.”

No tab switching. No copying and pasting. No typing commands.

Just talk.

PersonaPlex already solves one of the toughest problems, which is natural, full-duplex conversation. The next layer is execution. Once speech-to-speech systems are connected to APIs, automation tools, browsers, trading platforms, and productivity apps, they stop being assistants and start becoming operators.

In short, it becomes something like OpenGL on steroids.

A system that doesn’t just talk like a human, but acts on your behalf in real time.

Abid Ali Awan (@1abidaliawan) is a certified data scientist professional who loves building machine learning models. Currently, he is focusing on content creation and writing technical blogs on machine learning and data science technologies. Abid holds a master’s degree in technology management and a bachelor’s degree in telecommunication engineering. His vision is to create AI products using graph neural networks for students struggling with mental illness.