Spatial data processing and analytics are business-critical for geospatial workloads on Databricks. Many teams rely on external libraries or Spark extensions such as Apache Sedona, GeoPandas, and the Databricks Labs project Mosaic to handle these workloads. While customers have been successful, these approaches add operational overhead and often require tuning to reach acceptable performance.

Earlier this year, Databricks released support for Spatial SQL, which now includes 90 spatial functions and support for storing data in GEOMETRY or GEOGRAPHY columns. Spatial SQL built into Databricks is the best way to store and process vector data compared to any alternative because it addresses all of the primary challenges of using add-on libraries: it is highly stable, it performs well, and with Databricks SQL Serverless there is no need to manage classic clusters, library compatibility, or runtime versions.

One of the most common spatial processing tasks is to check whether two geometries overlap, whether one geometry contains the other, or how close they are to each other. This analysis requires spatial joins, and spatial joins need strong out-of-the-box performance to shorten the time to spatial insight.

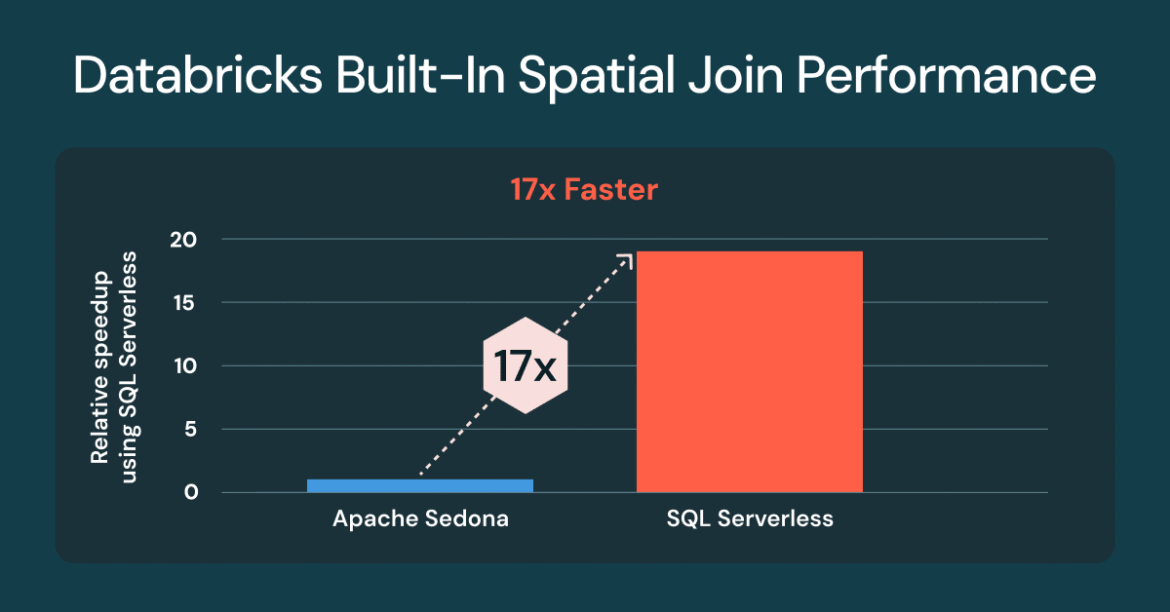

Spatial joins up to 17x faster with Databricks SQL Serverless

We are pleased to announce that every customer using built-in Spatial SQL for spatial joins will see up to 17x faster performance compared to classic clusters with Apache Sedona¹ installed. The performance improvements are available to all customers using Databricks SQL Serverless and classic clusters with Databricks Runtime (DBR) 17.3. If you are already using Databricks built-in spatial predicates, such as ST_Intersects or ST_Contains, no code changes are required.

¹ At the time of the benchmark, Apache Sedona 1.7 was not compatible with DBR 17.x, so DBR 16.4 was used instead.

Running spatial joins presents unique challenges, with performance influenced by many factors. Geospatial datasets are often highly skewed, with dense urban areas next to sparse rural ones, and they vary widely in geometric complexity: compare the intricate Norwegian coastline with Colorado’s simple borders. Even after efficient file pruning, the remaining join candidates still demand compute-intensive geometric operations. This is where Databricks shines.

The spatial join improvements come from R-tree indexing, optimized spatial joins in Photon, and intelligent range join optimization, all of which are applied automatically. You write standard SQL with spatial functions, and the engine handles the complexity.
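To make the idea of index-assisted joins concrete, here is a minimal pure-Python sketch of the general "filter, then refine" pattern. It is not how Photon or Databricks implements spatial joins: a uniform grid stands in for an R-tree, and the function names and toy data are hypothetical. The index cheaply discards most polygon and point pairs, so the expensive exact test runs only on the remaining candidates.

```python
from collections import defaultdict

def point_in_polygon(pt, ring):
    """Exact (expensive) test via ray casting: is pt inside the polygon ring?"""
    x, y = pt
    inside = False
    n = len(ring)
    for i in range(n):
        x1, y1 = ring[i]
        x2, y2 = ring[(i + 1) % n]
        # Toggle for every edge crossed by a horizontal ray going right from pt.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside

def build_grid_index(polygons, cell):
    """Map each grid cell to the ids of polygons whose bounding box touches it."""
    index = defaultdict(list)
    for pid, ring in polygons.items():
        xs = [p[0] for p in ring]
        ys = [p[1] for p in ring]
        for cx in range(int(min(xs) // cell), int(max(xs) // cell) + 1):
            for cy in range(int(min(ys) // cell), int(max(ys) // cell) + 1):
                index[(cx, cy)].append(pid)
    return index

def indexed_join(polygons, points, cell=10.0):
    """Filter candidates with the grid, then refine with the exact predicate."""
    index = build_grid_index(polygons, cell)
    matches, exact_tests = [], 0
    for name, pt in points.items():
        key = (int(pt[0] // cell), int(pt[1] // cell))
        for pid in index.get(key, []):
            exact_tests += 1
            if point_in_polygon(pt, polygons[pid]):
                matches.append((pid, name))
    return sorted(matches), exact_tests

# Hypothetical toy data: three polygons and four points.
polygons = {
    "square": [(0, 0), (4, 0), (4, 4), (0, 4)],
    "triangle": [(20, 20), (30, 20), (25, 30)],
    "far": [(100, 100), (105, 100), (105, 105), (100, 105)],
}
points = {"a": (1, 1), "b": (25, 22), "c": (50, 50), "d": (22, 29)}

matches, exact_tests = indexed_join(polygons, points)
print(matches, exact_tests, "exact tests instead of", len(polygons) * len(points))
```

In this toy case the grid reduces the number of exact tests from 12 (every point against every polygon) to 3. Point "d" shows why the refine step is still needed: it falls inside the triangle's bounding box but outside the triangle itself.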

Business importance of spatial joins

A spatial join is similar to a database join, but instead of matching IDs, it uses a spatial predicate to match data based on location. Spatial predicates evaluate relative physical relationships, such as overlap, containment, or proximity, to link two datasets. Spatial joins are a powerful tool for spatial aggregation, helping analysts uncover trends, patterns, and location-based insights across places of every scale, from shopping centers and farms to cities and the entire planet.
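As a rough illustration of how a predicate replaces the usual key-equality condition, the sketch below joins two tiny in-memory "tables" of axis-aligned boxes using overlap, containment, and proximity predicates. The data, names, and box representation are hypothetical simplifications; real geometries and the built-in ST_ functions are far more general.

```python
from math import hypot

# Hypothetical toy tables: id -> box as (min_x, min_y, max_x, max_y).
zones = {"zone_a": (-1, -1, 5, 5), "zone_b": (11, 11, 20, 20)}
stores = {"store_1": (0, 0, 2, 2), "store_2": (10, 10, 12, 12), "store_3": (7, 0, 8, 1)}

def intersects(a, b):
    """Overlap: the two boxes share at least one point."""
    return a[0] <= b[2] and b[0] <= a[2] and a[1] <= b[3] and b[1] <= a[3]

def contains(a, b):
    """Containment: box b lies entirely inside box a."""
    return a[0] <= b[0] and a[1] <= b[1] and a[2] >= b[2] and a[3] >= b[3]

def within_distance(a, b, d):
    """Proximity: the gap between the two boxes is at most d."""
    dx = max(b[0] - a[2], a[0] - b[2], 0)
    dy = max(b[1] - a[3], a[1] - b[3], 0)
    return hypot(dx, dy) <= d

def spatial_join(left, right, predicate):
    """Naive join: pair each left row with each right row satisfying the predicate."""
    return [(lk, rk) for lk, lg in left.items()
            for rk, rg in right.items() if predicate(lg, rg)]

overlapping = spatial_join(zones, stores, intersects)
containing = spatial_join(zones, stores, contains)
nearby = spatial_join(zones, stores, lambda a, b: within_distance(a, b, 3.0))

print(overlapping)
print(containing)
print(nearby)
```

The same pair of tables yields different results depending on the predicate: store_2 overlaps zone_b without being contained by it, and store_3 touches no zone but is within 3 units of zone_a. The nested loop compares every pair, which is exactly the cost that indexing and engine-level optimizations aim to avoid at scale.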

Spatial joins answer business-critical questions in every industry. For example:

- Coastal authorities monitor vessel traffic within a port or maritime boundary.

- Retailers analyze vehicle traffic and visitation patterns at store locations.

- Modern agricultural companies combine weather, field, and seed data to analyze and forecast crop yields.

- Public safety agencies and insurance companies identify which homes are at risk from flooding or fire.

- Energy and utility operations teams create service and infrastructure plans based on analysis of energy sources, residential and commercial land use, and existing assets.

Spatial join benchmark preparation

For data, we chose four worldwide, large-scale datasets from the Overture Maps Foundation: addresses, buildings, land use, and roads. You can test the queries yourself using the methods described below.

We used the Overture Maps datasets, which were initially downloaded as GeoParquet. An example of preparing addresses for Sedona benchmarking is shown below. All datasets followed the same pattern.

We also processed the data into Lakehouse tables, converting the Parquet WKB into native GEOMETRY data types for Databricks benchmarking.

Comparison queries

The chart above uses the same set of three queries, each tested against every compute configuration.

Query #1 – ST_Contains(buildings, addresses)

This query evaluates which of the 2.5B building polygons contain the 450M address points (a point-in-polygon join). The result is 200M+ matches. For Sedona, we reversed this to ST_Within(a.geom, b.geom) to support the default left build-side optimization. On Databricks, there is no material difference between using ST_Contains and ST_Within.

Query #2 – ST_Covers(land use, buildings)

This query evaluates which of the 1.3M worldwide ‘industrial’ land-use polygons cover the 2.5B building polygons. The result is 25M+ matches.

Query #3 – ST_Intersects(roads, land use)

This query evaluates which of the 300M roads intersect the 10M worldwide ‘residential’ land-use polygons. The result is 100M+ matches. For Sedona, we reversed this to ST_Intersects(l.geom, trans.geom) to support the default left build-side optimization.

What’s next for Spatial SQL and native types

Databricks continues to add new spatial expressions based on customer requests. The following spatial functions have been added since the public preview: ST_AsEWKB, ST_Dump, ST_ExteriorRing, ST_InteriorRingN, and ST_NumInteriorRings. Now available in the DBR 18.0 beta: ST_Azimuth, ST_Boundary, ST_ClosestPoint, support for EWKT (including two new expressions, ST_GeogFromEWKT and ST_GeomFromEWKT), and performance and robustness improvements for ST_IsValid, ST_MakeLine, and ST_MakePolygon.

Provide your feedback to the product team

If you would like to share your requests for additional ST expressions or geospatial features, please fill out this short survey.

UPDATE: Open sourcing geo types in Apache Spark™

The contribution of the GEOMETRY and GEOGRAPHY data types to Apache Spark™ has made great strides and is on track to be committed to Spark 4.2 in 2026.

Try Spatial SQL for free

Run your next spatial query on Databricks SQL today and see how much faster your spatial joins can be. To learn more about Spatial SQL functions, see the SQL and PySpark documentation. For more information on Databricks SQL, see the website, the product tour, and the Databricks Free Edition. If you want to migrate your existing warehouse to a high-performance, serverless data warehouse with a better user experience and lower total cost, Databricks SQL is the solution. Try it for free.