Image by author

# Net

You are going to train a model when you notice that 20% of your values are missing. Do you skip those lines? Fill them with average? Use something fancy? The answer matters more than you think.

If you Google it, you’ll find dozens of modeling methods, ranging from the extremely simple (just use the mean) to the sophisticated (iterative machine learning models). You may think fancy methods are better. KNN Considers similar lines. rats Builds predictive models. They have to do better than just slapping average, right?

We also thought so. we were wrong.

# Use

we caught Crop Recommendation Dataset From StratScratch projects – 2,200 soil samples across 22 crop types with characteristics such as nitrogen levels, temperature, humidity and rainfall. A random forest hits this thing with 99.6% accuracy. It’s almost suspiciously clean.

This analysis extends our agricultural data analysis Project, which explores similar datasets through EDA and statistical testing. Here, we ask: What happens when clean data meets a real-world problem – missing values?

Perfect for our experiment.

We introduced 20% missing values (completely random, simulating sensor failures), then tested five imputation methods:

Our testing was thorough; We used 10-fold cross-validation across five random seeds (total 50 runs per method). To ensure that no information from the testing set leaked into the training set, our imputation models were trained only on the training set. For our statistical tests, we applied the Bonferroni correction. We also normalized the input features for both KNN and MICE, such that if we had not normalized them, inputs with values between 0 and 300 (precipitation) would have a much greater impact than inputs with values in the range from 3 to 10 (pH) when calculating distances for these methods. Full code and reproducible results are available in our notebook.

Then we ran it and looked at the results.

# Wonder

This is what we expected: KNN or MICE will win, because they are smarter. They consider relationships between attributes. They use real machine learning.

Here’s what we found:

The median and mean are tied for first place. In the third and fourth stages came sophisticated methods.

We ran statistical tests. Mean vs. Median: P = 0.7. Not even close to important. They are effectively identical.

But here’s the kicker: both performed significantly better than KNN and MICE (p < 0.001 after Bonferroni correction). Simple methods just don't match fancy methods. They beat him.

# What are you waiting for?

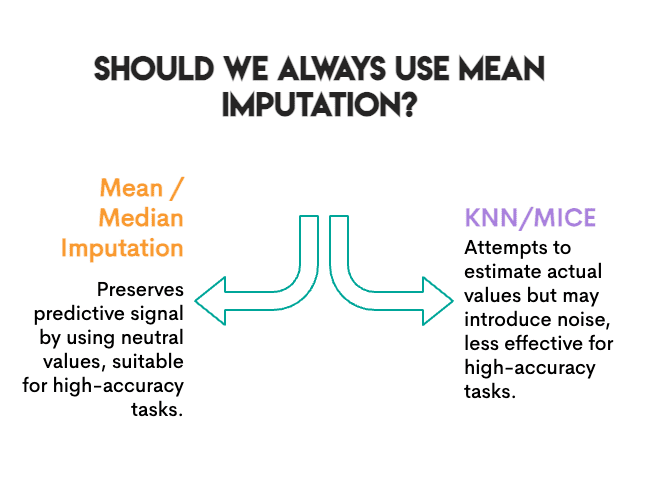

Before you delete your MICE installation, let’s take a look at why this happened.

The work was prophetic. We measured accuracy. Does the model classify crops correctly even after imputation? For that specific goal, what matters is preserving the predicted signal, not necessarily the exact value.

Mean imputation does something interesting: it replaces missing values with “neutral” values that do not push the model toward any particular class. It’s boring, but safe. Random forest may still find its decision boundaries.

KNN and MICE take more effort; They estimate what the real value might have been. But in doing so, they may generate noise. If the nearest neighbors are not the same, or if iterative modeling of MICE picks up spurious patterns, you can add error instead of removing it.

The baseline was already high. At 99.6% accuracy, this is a very easy classification problem. When the signal is strong, attribution errors matter less. The model can tolerate some noise.

random forest Is strong. Tree-based models handle incomplete data well. A linear model struggles more with variance distortion of the mean.

not so fast.

# plot twist

We measured something else: correlation preservation.

Here’s the thing about real data: features don’t exist in isolation. They walk together. In our dataset, when a soil is high in phosphorus, it is usually also high in potassium (correlation of 0.74). It is not random; Farmers typically combine these nutrients together, and some types of soil retain both equally well.

When you attribute missing values, you can accidentally break these relationships. Mean modeling fills in the “average potassium” regardless of what phosphorus looks like in that row. Do this enough times, and the relationship between P and K will begin to collapse. Your imputed data may look fine column-by-column, but relationships between columns are silently being broken.

Why does it matter? If your next step is clustering, PCAOr any analysis where features are correlation points, you are working with damaged data and you don’t even know it.

We investigated: after imputation, how much of that P↔K correlation remained?

Image by author

The ranking was completely reversed.

KNN preserved the correlation almost perfectly. The mean and median destroyed about a quarter of it. And random sampling (which samples values for each column independently) eliminated the relationship.

This is understandable. Mean imputation replaces missing values with the same number, no matter what the other characteristics look like. Pisces don’t care if a row is high in nitrogen; This still represents average potassium. KNN looks at similar rows, so if high-N rows have high-K, it will apply the high-K value.

# exchange

Here’s the real finding: There is no single best modeling method. Instead, select the most appropriate method based on your specific goals and context.

Accuracy ranking and correlation ranking are almost opposites:

Image by author

(At least the random sample is consistent – it’s bad at everything.)

This trade-off is not unique to our dataset. It’s all about how these methods work. Mean/median are univariate, and they look at one column at a time. KNN/MICE are multivariate, and they consider relationships. Univariate methods preserve the marginal distribution but destroy the correlation. Multivariate methods preserve structure and may introduce some types of prediction error/noise.

# So, what should you really do?

After running this experiment and digging into the literature, here’s our practical guide:

Use mean or median when:

- Your goal is prediction (classification, regression)

- You are using a robust model (Random Forest, XGBoost, Neural Net)

- Missing rate is less than 30%

- you need something quickly

Use KNN when:

- You need to preserve feature relationships

- The downstream task is clustering, PCA or visualization

- You want the correlations to survive for exploratory analysis

Use MICE when:

- You need valid standard errors (for statistical inference)

- You are reporting confidence intervals or p-values

- Missing data mechanism may be MAR (missing at random).

Avoid random sampling:

- This is attractive because it “preserves distribution”

- But this destroys all multivariate structure

- We couldn’t find any good use case

# fair warning

We used a dataset, a missing rate (20%), a mechanism (MCAR), and a downstream model (Random Forest). Your setup may vary. The literature shows that on other datasets, missforest And MICEs often perform better. Our finding that simpler methods compete is anecdotal, but it is not universal.

# bottom line

We went into this experiment hoping to confirm that sophisticated modeling methods are worth the complexity. Instead, we found that for prediction accuracy, the humble mean held its own, while completely failing to preserve the relationships between features.

The lesson is not to “always use mean accusations.” It’s “knowing what you’re optimizing for.”

Image by author

If you just need predictions, start simple. Test whether KNN or MICE really helps on your data. Don’t assume they will do this.

If you need the correlation structure for downstream analysis, Mean will silently destroy it while still giving you perfectly reasonable accuracy numbers. That is a trap.

And whatever you do, scale your features before using KNN. Trust us on this one.

Nate Rosidi Is a data scientist and is into product strategy. He is also an adjunct professor teaching analytics, and is the founder of StratScratch, a platform that helps data scientists prepare for their interviews with real interview questions from top companies. Nate writes on the latest trends in the career market, gives interview advice, shares data science projects, and covers everything SQL.