Data quality challenges at scale

As organizations create more data and AI products, maintaining data quality becomes harder. Data powers everything – from executive dashboards to company-wide Q&A bots. Outdated tables lead to outdated or even incorrect answers, which directly impacts business results.

Most data quality approaches don't match this reality. Data teams rely on manually defined rules applied to a small set of tables. As data estates grow, these rules leave blind spots and limit visibility into overall health.

Teams constantly add new tables, each with its own data patterns. Maintaining custom checks for each dataset is not sustainable. In practice, only a few important tables are monitored while the majority of assets go unmonitored.

The result is that organizations have more data than ever before, but less confidence in how to use it.

Introduction to Agentic Data Quality Monitoring

Today, Databricks announced the Public Preview of data quality monitoring on AWS, Azure Databricks, and GCP.

Data quality monitoring replaces fragmented, manual checks with an agentic approach built for scale. Instead of relying on static thresholds, AI agents learn common data patterns, adapt to change, and continuously monitor the data estate.

Deep integration with the Databricks platform enables more than just detection:

- Root cause is surfaced directly in upstream Lakeflow jobs and pipelines. From data quality monitoring, teams can jump into the affected job and use Lakeflow’s built-in observability capabilities to get deeper context on failures and resolve issues faster.

- Issues are prioritized using Unity Catalog lineage and certified tags, ensuring that high-impact datasets are addressed first.

With platform-native monitoring, teams detect issues earlier, focus on what’s most important, and resolve issues faster at enterprise scale.

“Our goal has always been to have our data tell us when there’s a problem. Databricks’ data quality monitoring ultimately does this through its AI-powered approach. It’s seamlessly integrated into the UI, monitoring all of our tables with a hands-off, no-configuration approach that was always a limiting factor with other products. Instead of users reporting issues, our data flags it first, improving quality, trust, and integrity across our platform.” – Jake Rousis, Lead Data Engineer at Alinta Energy

How data quality monitoring works

Data quality monitoring provides actionable insights through two complementary methods.

Anomaly detection

Enabled at the schema level, anomaly detection monitors all critical tables without manual configuration. AI agents learn historical patterns and seasonal behavior to identify unexpected changes.

- Learned behavior, not fixed rules: Agents adapt to normal variation and monitor key quality signals such as freshness and completeness. Support for additional checks, including percent null, uniqueness, and validity, is coming.

- Intelligent scanning for scale: All tables in the schema are scanned once, then rechecked based on each table's importance and update frequency. Unity Catalog lineage and certification determine which tables are most important. Frequently used tables are scanned more often, while static or obsolete tables are automatically skipped.

- System tables for visibility and reporting: Table health, learned limits, and observed patterns are recorded in system tables. Teams use this data for alerts, reporting, and in-depth analysis.
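To make "learned behavior, not fixed rules" concrete, here is a minimal sketch of a completeness check that learns a separate lower bound for each day of the week from past row counts. It only illustrates the general idea and is not how Databricks' agents work internally. The function names, the `k` multiplier, and the sample history are all hypothetical.

```python
from collections import defaultdict
from datetime import date, timedelta
from statistics import mean, stdev


def learn_lower_bounds(history, k=3.0):
    """Learn a per-weekday lower bound on daily row counts.

    history: list of (date, row_count) pairs.
    Returns {weekday: bound}, where bound = mean - k * stdev for that weekday.
    """
    by_weekday = defaultdict(list)
    for day, rows in history:
        by_weekday[day.weekday()].append(rows)
    return {
        wd: mean(counts) - k * stdev(counts)
        for wd, counts in by_weekday.items()
        if len(counts) >= 2  # stdev needs at least two observations
    }


def is_incomplete(day, rows, bounds):
    """Flag a day whose row count falls below the learned bound for its weekday."""
    bound = bounds.get(day.weekday())
    return bound is not None and rows < bound


# Hypothetical history: four weeks, ~1000 rows on weekdays, ~200 on weekends.
start = date(2024, 1, 1)  # a Monday
history = []
for i in range(28):
    day = start + timedelta(days=i)
    base = 200 if day.weekday() >= 5 else 1000
    history.append((day, base + (i % 4) * 10))  # small deterministic jitter

bounds = learn_lower_bounds(history)

# The same row count is normal on a Saturday but anomalous on a Monday.
weekend_flagged = is_incomplete(date(2024, 2, 3), 210, bounds)
weekday_flagged = is_incomplete(date(2024, 2, 5), 210, bounds)
```

A single static threshold would either raise false alarms every weekend or miss the Monday drop. Learning the bound per weekday avoids both.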

Data profiling

Enabled at the table level, data profiling captures summary statistics and tracks how they change over time. These metrics provide historical context and will be made available to anomaly detection so you can spot issues more easily.
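As a rough illustration of what "summary statistics tracked over time" can look like, the sketch below profiles a single column and compares two snapshots. The metric names and the `profile_column` and `profile_drift` helpers are assumptions made for this example; they are not the metrics or API of the product.

```python
from statistics import mean


def profile_column(values):
    """Summary statistics for one column; None represents a null."""
    non_null = [v for v in values if v is not None]
    return {
        "count": len(values),
        "null_fraction": (len(values) - len(non_null)) / len(values) if values else 0.0,
        "distinct": len(set(non_null)),
        "min": min(non_null) if non_null else None,
        "max": max(non_null) if non_null else None,
        "mean": mean(non_null) if non_null else None,
    }


def profile_drift(previous, current):
    """Difference between two profiles for the numeric metrics present in both."""
    return {
        key: current[key] - previous[key]
        for key in current
        if isinstance(current[key], (int, float))
        and isinstance(previous.get(key), (int, float))
    }


# Hypothetical snapshots of the same column on two consecutive days.
yesterday = profile_column([10, 12, 11, None, 13])
today = profile_column([10, None, None, None, 50])
drift = profile_drift(yesterday, today)
```

Here `drift` shows the null fraction rising and the maximum jumping between snapshots, which is the kind of historical context a profile adds on top of a pass/fail signal.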

“At OnePay, our mission is to help people achieve financial progress – empowering them to save, spend, borrow and grow their money. High-quality data across all of our datasets is critical to delivering that mission. With data quality monitoring, we can catch issues early and take prompt action. We are able to ensure accuracy in our analysis, reporting and the development of robust ML models, all of which contribute to better serving our customers.” – Namit Pai, Head of Platform and Data Engineering at OnePay

Ensure quality across an ever-growing data estate

With automated quality monitoring, data platform teams can keep track of the overall health of their data and ensure timely resolution of any issues.

Agentic, one-click monitoring: Monitor an entire schema without writing rules or configuring thresholds. Data quality monitoring learns historical patterns and seasonal behavior (e.g., volume dips on weekends or during tax season) to intelligently detect anomalies across all your tables.

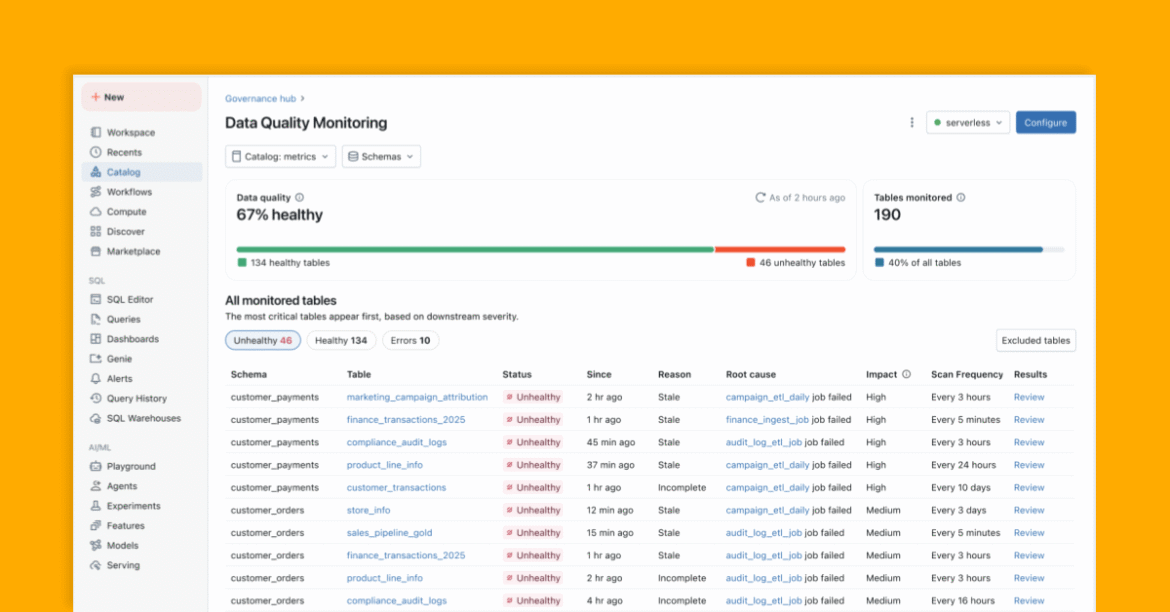

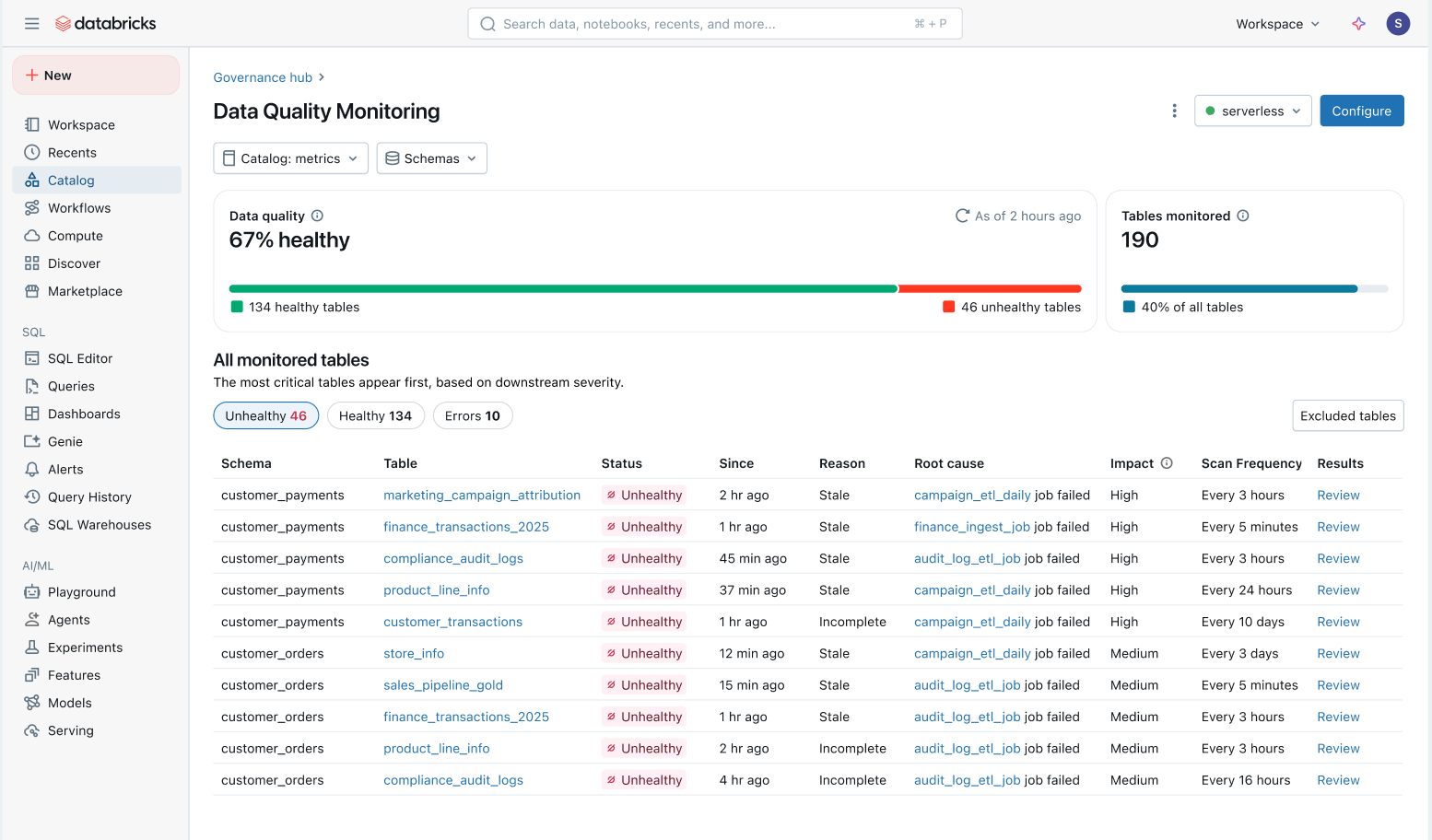

Holistic view of data health: Easily track the health of all tables in a consolidated view and ensure issues are fixed.

- Issues prioritized by downstream impact: All tables are prioritized based on their downstream lineage and query volume. Quality issues on your most important tables are flagged first.

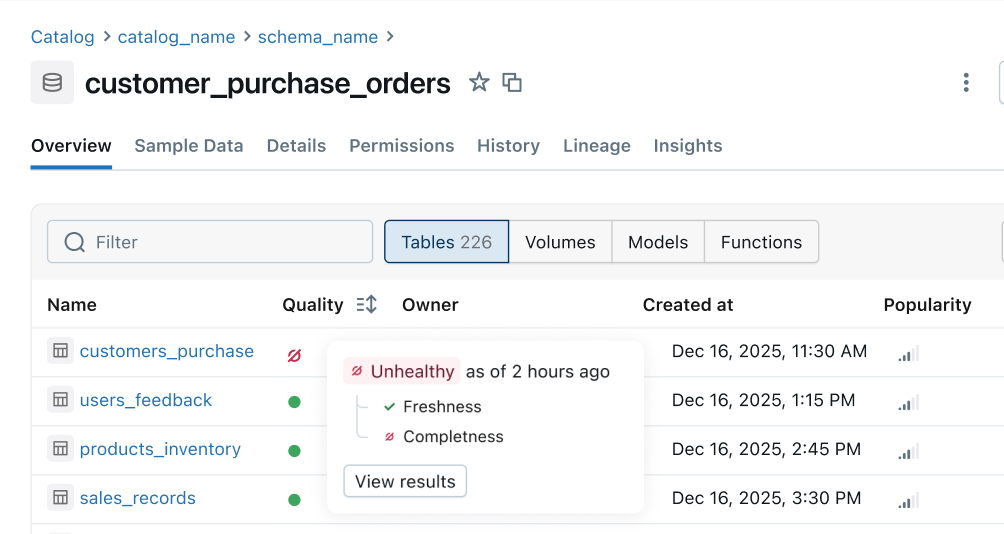

- Faster time to resolution: In Unity Catalog, data quality monitoring traces issues directly to upstream Lakeflow Jobs and Spark Declarative Pipelines. Teams can jump from the catalog into the affected job to investigate specific failures, code changes, and other root causes.
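The prioritization idea above can be sketched in a few lines: rank tables with open issues by how many tables sit downstream of them, then by query volume. This is a simplified illustration under stated assumptions. The lineage map, query counts, and tie-breaking order are hypothetical, and the actual ranking logic in the product is not described here.

```python
from collections import deque


def downstream_count(lineage, table):
    """Count distinct tables reachable downstream of `table` (breadth-first)."""
    seen, queue = set(), deque([table])
    while queue:
        for child in lineage.get(queue.popleft(), []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    seen.discard(table)  # ignore cycles back to the starting table
    return len(seen)


def prioritize(issues, lineage, query_counts):
    """Order tables with open issues by downstream reach, then by query volume."""
    return sorted(
        issues,
        key=lambda t: (downstream_count(lineage, t), query_counts.get(t, 0)),
        reverse=True,
    )


# Hypothetical lineage: table -> tables that read from it directly.
lineage = {
    "raw.orders": ["silver.orders"],
    "silver.orders": ["gold.revenue", "gold.churn"],
    "raw.clicks": ["silver.clicks"],
}
query_counts = {"raw.orders": 5, "silver.orders": 40, "raw.clicks": 300, "gold.churn": 120}

ranked = prioritize(["raw.clicks", "gold.churn", "raw.orders"], lineage, query_counts)
```

In this example `raw.orders` ranks first despite its low query count, because an issue there affects three downstream tables.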

Health Indicators: Continuous quality signals are fed from upstream pipelines to downstream business surfaces. Data engineering teams are informed about issues earlier and consumers can immediately tell if the data is safe to use.

What’s next

What’s on our roadmap in the coming months:

- More quality rules: Support for more checks such as percent null, uniqueness, and validity.

- Automated alerts and root cause analysis: Automatically receive alerts and resolve issues instantly with intelligent root cause indicators built directly into your jobs and pipelines.

- Platform-wide health indicators: View persistent health signals in Unity Catalog, Lakeflow Observability, Lineage, Notebooks, Genie, and more.

- Filter and quarantine bad data: Proactively identify bad data and prevent it from reaching consumers.

Getting Started: Public Preview

Experience intelligent monitoring at scale and build a reliable, self-service data platform. Try the public preview today: