
# Introduction

Exploratory data analysis (EDA) is an important step before building data-driven AI systems, whether they rely on in-depth data analysis or on machine learning models. Although fixing common, real-world data quality issues and anomalies is often deferred to later stages of the data pipeline, EDA is an excellent opportunity to detect these issues proactively, before they silently bias results, degrade model performance, or compromise downstream decision-making.

Below, we have compiled 7 Python tricks you can apply during your initial EDA to identify and fix a variety of data quality issues.

To illustrate these tricks, we will use a synthetically generated employee dataset into which we intentionally injected a variety of data quality issues, so that we can show how to detect and handle them. Before trying the tricks, copy and paste the following preamble code into your coding environment:

import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
import seaborn as sns

# PREAMBLE CODE THAT RANDOMLY CREATES A DATASET AND INTRODUCES QUALITY ISSUES IN IT
np.random.seed(42)
n = 1000

df = pd.DataFrame({
    "age": np.random.normal(40, 12, n).round(),
    "income": np.random.normal(60000, 15000, n),
    "experience_years": np.random.normal(10, 5, n),
    "department": np.random.choice(
        ["Sales", "Engineering", "HR", "sales", "Eng", "HR "], n
    ),
    "performance_score": np.random.normal(3, 0.7, n)
})

# Randomly injecting data issues into the dataset

# 1. Missing values
df.loc[np.random.choice(n, 80, replace=False), "income"] = np.nan
df.loc[np.random.choice(n, 50, replace=False), "department"] = np.nan

# 2. Outliers
df.loc[np.random.choice(n, 10), "income"] *= 5
df.loc[np.random.choice(n, 10), "age"] = -5

# 3. Invalid values
df.loc[np.random.choice(n, 15), "performance_score"] = 7

# 4. Skewness
df["bonus"] = np.random.exponential(2000, n)

# 5. Highly correlated features
df["income_copy"] = df["income"] * 1.02

# 6. Duplicated entries
df = pd.concat([df, df.iloc[:20]], ignore_index=True)

df.head()

# 1. Detecting missing values through heatmaps

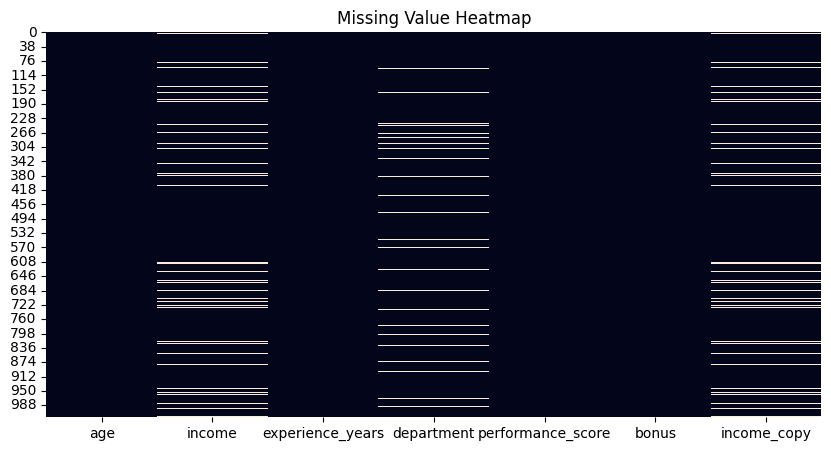

Libraries like pandas provide functions such as `isnull().sum()` that count the number of missing values for each attribute in your dataset. A more visual way to get a quick glimpse of all the missing values, and of which columns or attributes contain them, is to plot the output of `isnull()` as a heatmap. The plot shows light, barcode-like lines for each missing value in the entire dataset, with the attributes arranged along the horizontal axis.

plt.figure(figsize=(10, 5))
sns.heatmap(df.isnull(), cbar=False)
plt.title("Missing Value Heatmap")
plt.show()

# Count missing values per column, largest first
df.isnull().sum().sort_values(ascending=False)

Heatmap to detect missing values. Image by author
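As a numeric complement to the heatmap, the per-column counts can be turned into a small report that also shows the share of missing rows. This is a minimal sketch on a made-up toy frame; `missing_report` and the toy data are hypothetical names introduced only for illustration.

```python
import numpy as np
import pandas as pd

def missing_report(data: pd.DataFrame) -> pd.DataFrame:
    """Return missing counts and percentages for columns that have gaps."""
    report = pd.DataFrame({
        "missing_count": data.isnull().sum(),
        "missing_pct": (data.isnull().mean() * 100).round(1),
    })
    # Keep only columns with at least one missing value, largest first
    report = report[report["missing_count"] > 0]
    return report.sort_values("missing_count", ascending=False)

# Hypothetical toy data: two gaps in "income", one in "department"
toy = pd.DataFrame({
    "income": [50000.0, np.nan, 62000.0, np.nan],
    "department": ["Sales", "HR", None, "Eng"],
    "age": [31, 45, 28, 39],
})
report = missing_report(toy)
print(report)
```

Percentages are often easier to act on than raw counts, for example when deciding whether a column is worth imputing at all.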

# 2. Removing Duplicates

This trick is a classic, simple yet very effective: count the number of duplicated instances (rows) in your dataset, then apply `drop_duplicates()` to remove them. By default, this function keeps the first occurrence of each duplicated row and removes the rest. This behavior can be changed, for example with `keep="last"` to retain the last occurrence instead of the first, or `keep=False` to drop every row that has a duplicate. The behavior you choose will depend on your specific problem requirements.
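To see how the three `keep` options differ, here is a small sketch on a hypothetical three-row frame in which rows 0 and 2 are identical. The toy data is invented for illustration and is separate from the employee dataset.

```python
import pandas as pd

# Hypothetical toy frame: rows 0 and 2 are exact duplicates
toy = pd.DataFrame({
    "name": ["Ana", "Ben", "Ana"],
    "dept": ["HR", "Sales", "HR"],
})

kept_first = toy.drop_duplicates()             # default: keep="first"
kept_last = toy.drop_duplicates(keep="last")   # keep the last occurrence
kept_none = toy.drop_duplicates(keep=False)    # drop every duplicated row

print(kept_first.index.tolist())  # [0, 1]
print(kept_last.index.tolist())   # [1, 2]
print(kept_none.index.tolist())   # [1]
```

The surviving index labels show which physical rows were retained, which matters when row order encodes something such as recency.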

duplicate_count = df.duplicated().sum()
print(f"Number of duplicate rows: {duplicate_count}")

# Remove duplicates
df = df.drop_duplicates()

# 3. Identifying outliers using the inter-quartile range method

The inter-quartile range (IQR) method is a statistically grounded approach to identifying data points that can be interpreted as outliers or extreme values because they lie far from the rest of the points. This trick provides an implementation of the IQR method that can be reused for different numerical attributes, such as “income”:
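To make the rule concrete before applying it to the dataset, here is a worked sketch on a tiny made-up series. Values below Q1 - 1.5 × IQR or above Q3 + 1.5 × IQR are flagged; the numbers in the comments assume pandas' default linear interpolation for quantiles.

```python
import pandas as pd

# Hypothetical toy values with one obvious extreme point
values = pd.Series([10, 12, 13, 14, 15, 16, 18, 100], dtype=float)

q1 = values.quantile(0.25)   # 12.75
q3 = values.quantile(0.75)   # 16.5
iqr = q3 - q1                # 3.75
lower = q1 - 1.5 * iqr       # 7.125
upper = q3 + 1.5 * iqr       # 22.125

# Keep only the points that fall outside the two fences
outliers = values[(values < lower) | (values > upper)]
print(outliers.tolist())     # [100.0]
```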

def detect_outliers_iqr(data, column):
    # Rows whose value lies outside [Q1 - 1.5 * IQR, Q3 + 1.5 * IQR]
    Q1 = data[column].quantile(0.25)
    Q3 = data[column].quantile(0.75)
    IQR = Q3 - Q1
    lower = Q1 - 1.5 * IQR
    upper = Q3 + 1.5 * IQR
    return data[(data[column] < lower) | (data[column] > upper)]

outliers_income = detect_outliers_iqr(df, "income")
print(f"Income outliers: {len(outliers_income)}")

# Optional: cap them
Q1 = df["income"].quantile(0.25)
Q3 = df["income"].quantile(0.75)
IQR = Q3 - Q1
lower = Q1 - 1.5 * IQR
upper = Q3 + 1.5 * IQR
df["income"] = df["income"].clip(lower, upper)

# 4. Managing Inconsistent Categories

Unlike outliers, which are typically associated with numerical features, inconsistent categories in categorical variables can arise from a variety of causes, for example manual entry inconsistencies such as differences in capitalization or stray whitespace, or domain-specific variations such as abbreviations. The right approach to handling them may therefore partly rely on subject matter expertise to decide which set of categories should be considered valid. The example below handles inconsistent department names that refer to the same department.
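Once a set of valid categories has been agreed on, anything left over after normalization can be flagged for review rather than silently kept. The sketch below assumes a hypothetical set of canonical labels (`VALID`) and alias map (`ALIASES`), and uses a made-up series that includes a misspelling.

```python
import pandas as pd

# Assumed canonical labels and aliases; in practice these come from domain experts
VALID = {"sales", "engineering", "hr"}
ALIASES = {"eng": "engineering"}

# Hypothetical raw values, including a typo and a missing entry
dept = pd.Series(["Sales", "sales", "Eng", "HR ", "Enginering", None])

# Trim whitespace, lowercase, then map known aliases to canonical names
normalized = dept.str.strip().str.lower().replace(ALIASES)

# Non-missing values that still fall outside the valid set
unexpected = normalized[normalized.notna() & ~normalized.isin(VALID)]
print(unexpected.tolist())  # ['enginering']
```

Missing entries are deliberately excluded from the flagged set, since they are a separate issue from unrecognized labels.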

print("Before cleaning:")
print(df["department"].value_counts(dropna=False))

# Trim whitespace, lowercase, then map variants to one canonical name
df["department"] = (
    df["department"]
    .str.strip()
    .str.lower()
    .replace({
        "eng": "engineering",
        "sales": "sales",
        "hr": "hr"
    })
)

print("\nAfter cleaning:")
print(df["department"].value_counts(dropna=False))

# 5. Checking and validating the range

While outliers are statistically distant values, invalid values are defined by domain-specific constraints; for example, values of the “age” attribute cannot be negative. This example identifies negative values of the “age” attribute and replaces them with NaN. Note that these invalid values then become missing values, so a downstream strategy may also be needed to handle them.
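The same idea extends to several columns at once by declaring the allowed range per attribute. This is a minimal sketch: the bounds in `VALID_RANGES` (ages from 0 to 120, scores from 1 to 5) are assumptions made for illustration, and `null_out_of_range` is a hypothetical helper name.

```python
import numpy as np
import pandas as pd

# Assumed bounds for illustration; the real limits depend on your domain
VALID_RANGES = {"age": (0, 120), "performance_score": (1, 5)}

def null_out_of_range(data, ranges):
    """Replace out-of-range values with NaN and count them per column."""
    cleaned = data.copy()
    counts = {}
    for col, (low, high) in ranges.items():
        # between() is False for NaN, so existing gaps are excluded explicitly
        invalid = cleaned[col].notna() & ~cleaned[col].between(low, high)
        counts[col] = int(invalid.sum())
        cleaned.loc[invalid, col] = np.nan
    return cleaned, counts

# Hypothetical toy data with one invalid age and one invalid score
toy = pd.DataFrame({
    "age": [34.0, -5.0, 51.0, np.nan],
    "performance_score": [3.2, 7.0, 4.1, 2.8],
})
cleaned, counts = null_out_of_range(toy, VALID_RANGES)
print(counts)  # {'age': 1, 'performance_score': 1}
```

Returning the counts alongside the cleaned frame keeps a record of how many values were invalidated, separate from values that were already missing.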

invalid_age = df[df["age"] < 0]
print(f"Invalid ages: {len(invalid_age)}")

# Fix by setting to NaN
df.loc[df["age"] < 0, "age"] = np.nan

# 6. Applying Log-Transform to Skewed Data

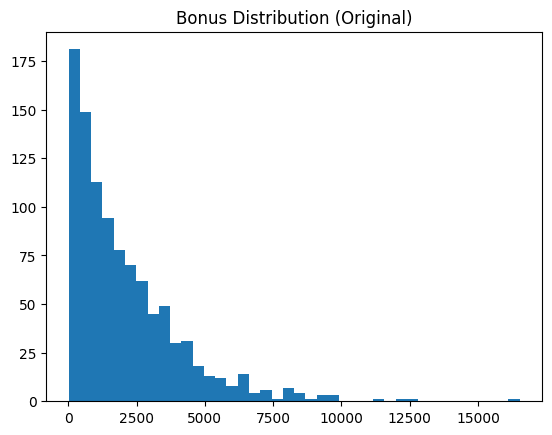

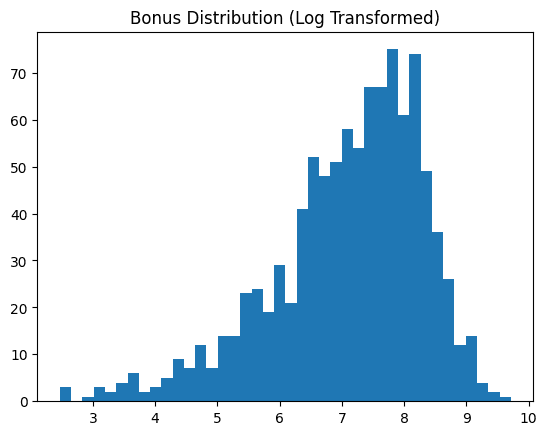

Skewed features like “bonus” in our example dataset often benefit from being transformed into something closer to a normal distribution, as this tends to help many downstream machine learning analyses. This trick applies a log transformation and plots the feature's distribution before and after.

skewness = df["bonus"].skew()
print(f"Bonus skewness: {skewness:.2f}")

plt.hist(df["bonus"], bins=40)
plt.title("Bonus Distribution (Original)")
plt.show()

# Log transform: log1p computes log(1 + x), so zero values are handled safely
df["bonus_log"] = np.log1p(df["bonus"])

plt.hist(df["bonus_log"], bins=40)
plt.title("Bonus Distribution (Log Transformed)")
plt.show()

Before log-transformation. Image by author

After log-transformation. Image by author
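Besides the histograms, the effect of the transform can be checked numerically by comparing skewness before and after, and the original scale can be recovered with the inverse function `np.expm1`. The sketch below uses its own synthetic exponential sample with a fixed seed, so the exact figures will differ from those of the employee dataset.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)  # fixed seed so the sketch is reproducible
bonus = pd.Series(rng.exponential(2000, 1000), name="bonus")

bonus_log = np.log1p(bonus)     # log(1 + x), defined at x = 0
restored = np.expm1(bonus_log)  # inverse transform, back to original units

skew_before = bonus.skew()
skew_after = bonus_log.skew()
print(f"Skewness before: {skew_before:.2f}, after: {skew_after:.2f}")
print(np.allclose(restored, bonus))  # the round trip recovers the values
```

Keeping the inverse in mind is useful when predictions or summaries made on the log scale need to be reported in the original units.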

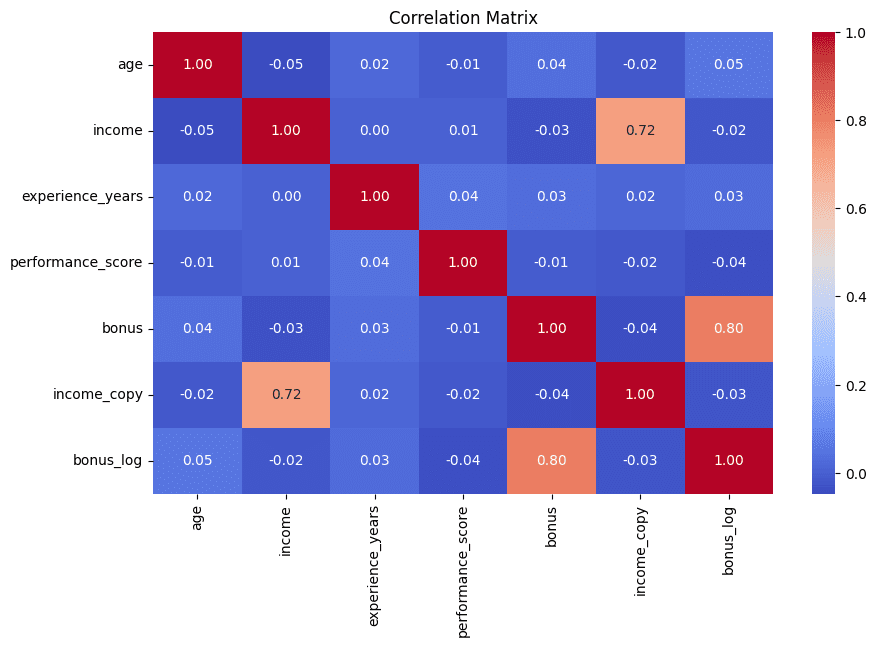

# 7. Detecting redundant features through correlation matrix

We end the list the same way we started: with a visual touch. Correlation matrices displayed as heatmaps help to quickly identify pairs of features that are highly correlated, a strong indication that they may contain redundant information that is often best minimized in subsequent analysis. This example also prints the five highest absolute correlations for further interpretation; because the unstacked matrix lists each pair in both orders, every pair appears twice in that output:

corr_matrix = df.corr(numeric_only=True)

plt.figure(figsize=(10, 6))
sns.heatmap(corr_matrix, annot=True, fmt=".2f", cmap="coolwarm")
plt.title("Correlation Matrix")
plt.show()

# Find high correlations
high_corr = (
    corr_matrix
    .abs()
    .unstack()
    .sort_values(ascending=False)
)

# Drop the self-correlations on the diagonal (exactly 1)
high_corr = high_corr[high_corr < 1]
print(high_corr.head(5))

Correlation matrix to detect redundant features. Image by author
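One possible refinement, sketched here under assumptions rather than taken from the code above: keeping only the cells above the diagonal lists each pair once, and avoids relying on a `< 1` filter, which could also discard two different columns whose correlation happens to compute as exactly 1. The helper name `top_correlated_pairs` and the toy data are hypothetical.

```python
import numpy as np
import pandas as pd

def top_correlated_pairs(data: pd.DataFrame, top: int = 5) -> pd.Series:
    """Return the largest absolute correlations, one entry per feature pair."""
    corr = data.corr(numeric_only=True).abs()
    # Boolean mask that is True strictly above the diagonal
    upper = np.triu(np.ones(corr.shape, dtype=bool), k=1)
    # Masked cells become NaN and are dropped explicitly after stacking
    pairs = corr.where(upper).stack().dropna()
    return pairs.sort_values(ascending=False).head(top)

# Hypothetical toy data: one exact linear copy plus an unrelated column
rng = np.random.default_rng(0)
x = rng.normal(size=200)
toy = pd.DataFrame({
    "income": x,
    "income_copy": x * 1.02,
    "noise": rng.normal(size=200),
})
pairs = top_correlated_pairs(toy)
print(pairs)
```

With three numeric columns there are exactly three unique pairs, and the copied column surfaces at the top with a correlation of essentially 1.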

# Wrapping up

With the above list, you have learned 7 useful tricks to get the most out of your exploratory data analysis, helping you uncover and deal with a variety of data quality issues and anomalies effectively and intuitively.

Ivan Palomares Carrascosa is a leader, author, speaker, and consultant in AI, machine learning, deep learning, and LLMs. He trains and guides others in using AI in the real world.