Image by author

# Introduction

Everyone focuses on solving the problem, but almost no one tests the solution. Sometimes, a perfectly working script can break with just a new row of data or a slight change in logic.

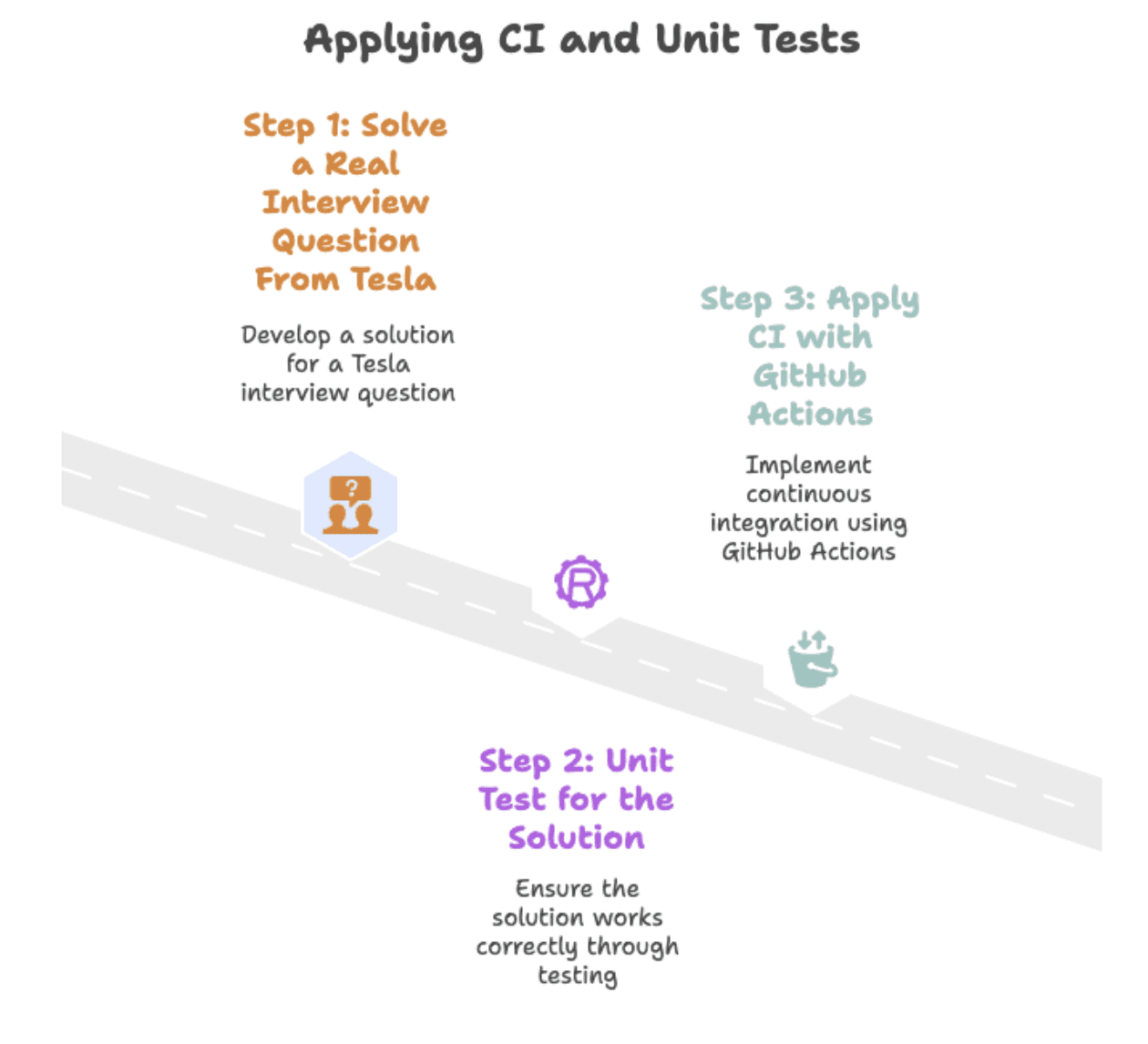

In this article, we will solve a Tesla interview question in Python and show how versioning and unit testing turn a fragile script into a reliable solution by following three steps. We’ll start with the interview question and finish with automated testing using GitHub Actions.

Image by author

We will go through these three steps to get the data solution ready for production.

First, we will solve a real interview question from Tesla. Next, we’ll add unit tests to ensure the solution remains reliable over time. Finally, we’ll use GitHub Actions to automate testing and version control.

# Solving a real interview question from Tesla

New Products

Calculate the net change in the number of products launched by companies in 2020 compared to 2019. Your output should include the company name and the net difference.

(Net difference = Number of products launched in 2020 – Number launched in 2019.)

In this interview question from Tesla, you are asked to measure product growth over two years.

The task is to return each company’s name along with the difference in its product count between 2020 and 2019.

// Understanding the Dataset

Let’s first look at the dataset we are working with. Here are the column names.

| Column Name | Data Type |
|---|---|
| year | int64 |
| company_name | object |
| product_name | object |

Let’s preview the dataset.

| year | company_name | product_name |
|---|---|---|
| 2019 | Toyota | Avalon |
| 2019 | Toyota | Camry |
| 2020 | Toyota | Corolla |
| 2019 | Honda | Accord |
| 2019 | Honda | Passport |

This dataset has three columns: year, company_name, and product_name. Each row represents a car model released by a company in a given year.
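Because a new row or a changed column can break the solution later, it can help to check the schema described above in code. The following is a minimal sketch, not part of the original solution: it assumes the data is loaded from CSV text (an inline copy of the five preview rows stands in for `data/car_launches.csv`), and the variable names are illustrative.

```python
import io

import pandas as pd

# Inline copy of the five preview rows (assumption: the real file has the same header).
csv_text = """year,company_name,product_name
2019,Toyota,Avalon
2019,Toyota,Camry
2020,Toyota,Corolla
2019,Honda,Accord
2019,Honda,Passport
"""
car_launches = pd.read_csv(io.StringIO(csv_text))

# Columns the solution relies on, taken from the schema table above.
expected_columns = ["year", "company_name", "product_name"]
missing = [col for col in expected_columns if col not in car_launches.columns]
year_is_integer = pd.api.types.is_integer_dtype(car_launches["year"])

print("missing columns:", missing)
print("year is integer:", year_is_integer)
```

A check like this fails early and with a clear message, instead of surfacing later as a confusing error inside the merge or group-by.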

// Writing the Python solution

We will use basic pandas operations to group, compare, and calculate net product changes per company. The code first splits the data into subsets for 2019 and 2020.

Next, it merges them by company name and counts the number of unique products launched each year.

    import pandas as pd
    import numpy as np
    from datetime import datetime

    # car_launches is the input DataFrame with year, company_name, product_name
    df_2020 = car_launches[car_launches['year'].astype(str) == '2020']
    df_2019 = car_launches[car_launches['year'].astype(str) == '2019']

    # Pair every 2020 launch with every 2019 launch of the same company
    df = pd.merge(df_2020, df_2019, how='outer', on='company_name',
                  suffixes=('_2020', '_2019')).fillna(0)

The final output subtracts the 2019 counts from the 2020 counts to get the net difference. Here is the complete code.

    import pandas as pd
    import numpy as np
    from datetime import datetime

    # Split the launches by year
    df_2020 = car_launches[car_launches['year'].astype(str) == '2020']
    df_2019 = car_launches[car_launches['year'].astype(str) == '2019']

    # Pair every 2020 launch with every 2019 launch of the same company
    df = pd.merge(df_2020, df_2019, how='outer', on='company_name',
                  suffixes=('_2020', '_2019')).fillna(0)
    df = df[df['product_name_2020'] != df['product_name_2019']]

    # Count unique products per company and year, then take the difference
    df = df.groupby('company_name').agg(
        {'product_name_2020': 'nunique', 'product_name_2019': 'nunique'}).reset_index()
    df['net_new_products'] = df['product_name_2020'] - df['product_name_2019']
    result = df[['company_name', 'net_new_products']]

// Viewing the expected output

Here is the expected output.

| company_name | net_new_products |
|---|---|
| Chevrolet | 2 |
| Ford | -1 |
| Honda | -3 |
| Jeep | 1 |
| Toyota | -1 |
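To see why the solution counts unique product names rather than rows, it helps to look at what the outer merge produces. The sketch below is illustrative only: it uses just the five preview rows (so the numbers differ from the full expected output above), and it leaves out `.fillna(0)` so that missing values stay visible. The variable names are assumptions.

```python
import pandas as pd

# The five preview rows only, not the full dataset.
preview = pd.DataFrame({
    "year": [2019, 2019, 2020, 2019, 2019],
    "company_name": ["Toyota", "Toyota", "Toyota", "Honda", "Honda"],
    "product_name": ["Avalon", "Camry", "Corolla", "Accord", "Passport"],
})

df_2020 = preview[preview["year"] == 2020]
df_2019 = preview[preview["year"] == 2019]

# Each 2020 product is paired with every 2019 product of the same company.
merged = pd.merge(df_2020, df_2019, how="outer", on="company_name",
                  suffixes=("_2020", "_2019"))
print(merged[["company_name", "product_name_2020", "product_name_2019"]])

# Rows per company after the merge versus unique products per year.
rows_per_company = merged.groupby("company_name").size()
unique_per_company = merged.groupby("company_name")[
    ["product_name_2020", "product_name_2019"]
].nunique()
print(rows_per_company)
print(unique_per_company)
```

Toyota has a single 2020 launch but appears in two merged rows, because that launch is paired with both 2019 products. Counting rows would overstate launches, which is why unique names are counted. Note also that `nunique` ignores missing values by default, whereas a filled-in `0` counts as a value, so companies with launches in only one year are worth covering with a test.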

# Making the solution reliable with unit tests

Solving a data problem once doesn’t mean the solution will keep working. A new row of data or a small logic change can silently break your script. For example, imagine you accidentally rename a column in your code, changing this line:

    df['net_new_products'] = df['product_name_2020'] - df['product_name_2019']

to:

    df['new_products'] = df['product_name_2020'] - df['product_name_2019']

The subtraction still runs, but the output (and any test) fails because the expected column name no longer matches. Unit tests catch this. They check whether the same input gives the same output every time. If something breaks, the test fails and shows exactly where. We’ll do this in three steps, from turning the interview solution into a function to writing a test that checks the output against what we expect.

Image by author

// Converting Script to Reusable Function

Before writing tests, we need to make our solution reusable and easy to test. Converting it to a function allows us to run it with different datasets and automatically verify the output without rewriting the same code every time. We changed the original code into a function that accepts a DataFrame and returns the result. Here is the code.

    import pandas as pd

    def calculate_net_new_products(car_launches):
        # Split the launches by year
        df_2020 = car_launches[car_launches['year'].astype(str) == '2020']
        df_2019 = car_launches[car_launches['year'].astype(str) == '2019']

        # Pair every 2020 launch with every 2019 launch of the same company
        df = pd.merge(df_2020, df_2019, how='outer', on='company_name',
                      suffixes=('_2020', '_2019')).fillna(0)
        df = df[df['product_name_2020'] != df['product_name_2019']]

        # Count unique products per company and year, then take the difference
        df = df.groupby('company_name').agg({
            'product_name_2020': 'nunique',
            'product_name_2019': 'nunique'
        }).reset_index()
        df['net_new_products'] = df['product_name_2020'] - df['product_name_2019']
        return df[['company_name', 'net_new_products']]

// Defining test data and expected output

Before running any tests, we need to know what “right” looks like. Defining the expected output gives us a clear benchmark to compare the results of our function. Therefore, we will create a small test input and clearly define what the correct output should be.

    import pandas as pd

    # Sample test data
    test_data = pd.DataFrame({
        'year': [2019, 2019, 2020, 2020],
        'company_name': ['Toyota', 'Toyota', 'Toyota', 'Toyota'],
        'product_name': ['Camry', 'Avalon', 'Corolla', 'Yaris']
    })

    # Expected output
    expected_output = pd.DataFrame({
        'company_name': ['Toyota'],
        'net_new_products': [0]  # 2 in 2020 - 2 in 2019
    })

// Writing and running unit tests

The following test code checks whether your function returns exactly what you expect.

If not, the test fails and tells you why, down to the last row or column.

The test below uses the function from the previous step (calculate_net_new_products()) and the expected output that we have defined.

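    import unittest

    import pandas as pd

    # Module names follow the project files listed later in this article
    from solution import calculate_net_new_products
    from expected_output import test_data, expected_output

    class TestProductDifference(unittest.TestCase):
        def test_net_new_products(self):
            result = calculate_net_new_products(test_data)
            # Sort and reset the index so row order does not affect the comparison
            result = result.sort_values('company_name').reset_index(drop=True)
            expected = expected_output.sort_values('company_name').reset_index(drop=True)
            pd.testing.assert_frame_equal(result, expected)

    if __name__ == '__main__':
        unittest.main()

# Automated Testing with Continuous Integration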

Writing tests is a good start, but only if they actually run. You can manually run tests after each change, but it doesn’t scale, it’s easy to forget, and team members may use different setups. Continuous Integration (CI) addresses this by automatically running tests whenever code changes are pushed to the repository.

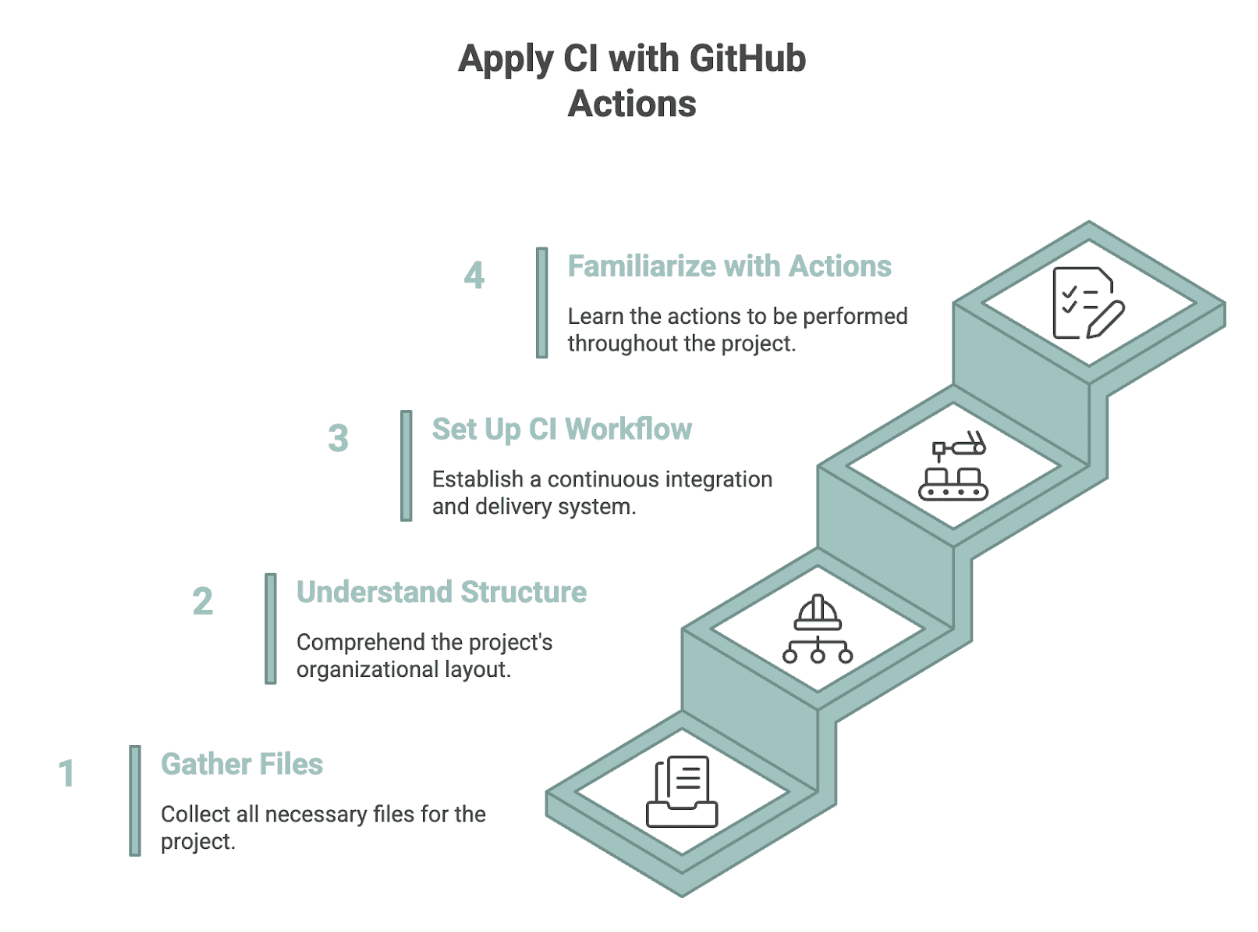

GitHub Actions is a free CI tool that does this on every push, keeping your solution reliable even when the code, data, or logic changes. Here’s how to implement CI with GitHub Actions.

Image by author

// Organizing your project files

To apply CI to an interview query, you first need to push your solution to a GitHub repository. (If you have not created a GitHub repository before, set one up first.)

Then, set up the following files:

- solution.py: the interview question solution from step 2.1
- expected_output.py: defines the test input and expected output from step 2.2
- test_solution.py: the unit tests written with unittest from step 2.3
- requirements.txt: dependencies (e.g., pandas)
- .github/workflows/test.yml: the GitHub Actions workflow file
- data/car_launches.csv: the input dataset used by the solution

// Understanding Repository Layout

The repository is organized so that GitHub Actions can find everything it needs without any additional setup. This keeps things simple, consistent, and easy to work with, for both you and others.

    my-query-solution/
    ├── data/
    │   └── car_launches.csv
    ├── solution.py
    ├── expected_output.py
    ├── test_solution.py
    ├── requirements.txt
    └── .github/
        └── workflows/
            └── test.yml

// Creating a GitHub Actions Workflow

Now that you have all the files, the last thing you need is test.yml. This file tells GitHub Actions how to automatically run your tests when the code changes.

First, we name the workflow and tell GitHub when to run it.

    name: Run Unit Tests

    on:
      push:
        branches: [ main ]
      pull_request:
        branches: [ main ]

This means that tests will run every time someone pushes code to the main branch or opens a pull request against it. Next, we create a job that defines what will happen inside the workflow.

    jobs:
      test:
        runs-on: ubuntu-latest

The job runs on GitHub’s Ubuntu environment, giving you a clean setup every time. Now we add steps inside that job. The first one checks out your repository so that GitHub Actions can access your code.

        - name: Checkout repository
          uses: actions/checkout@v4

Then we set up Python and choose the version we want to use.

        - name: Set up Python
          uses: actions/setup-python@v5
          with:
            python-version: "3.10"

After that, we install all the dependencies listed in requirements.txt.

        - name: Install dependencies
          run: |
            python -m pip install --upgrade pip
            pip install -r requirements.txt

Finally, we run all unit tests in the project.

        - name: Run unit tests
          run: python -m unittest discover

This last step runs your tests automatically and shows errors if something breaks. Here is the full file for reference:

name: Run Unit Tests

on:

push:

branches: ( main )

pull_request:

branches: ( main )

jobs:

test:

runs-on: ubuntu-latest

steps:

- name: Checkout repository

uses: actions/checkout@v4

- name: Set up Python

uses: actions/setup-python@v5

with:

python-version: "3.10"

- name: Install dependencies

run: |

python -m pip install --upgrade pip

pip install -r requirements.txt

- name: Run unit tests

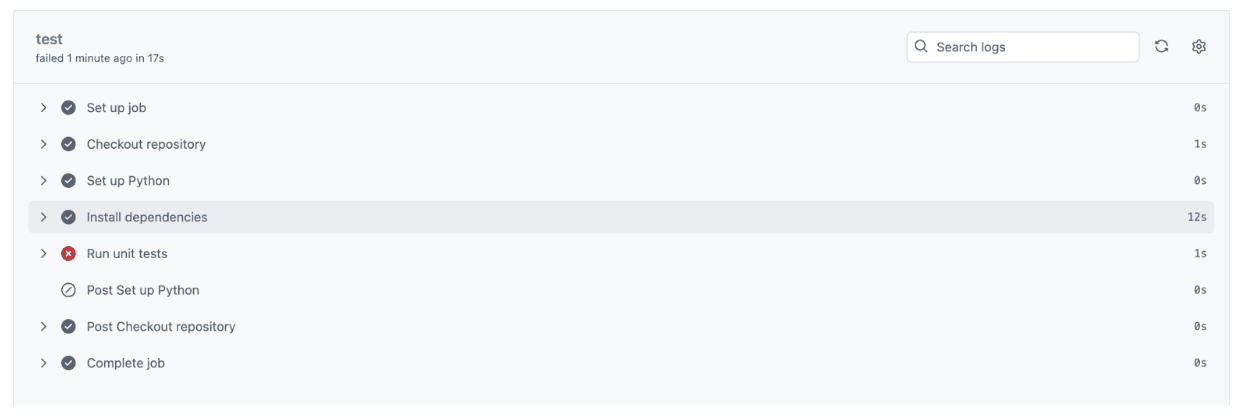

run: python -m unittest discover// Reviewing test results in GitHub Actions

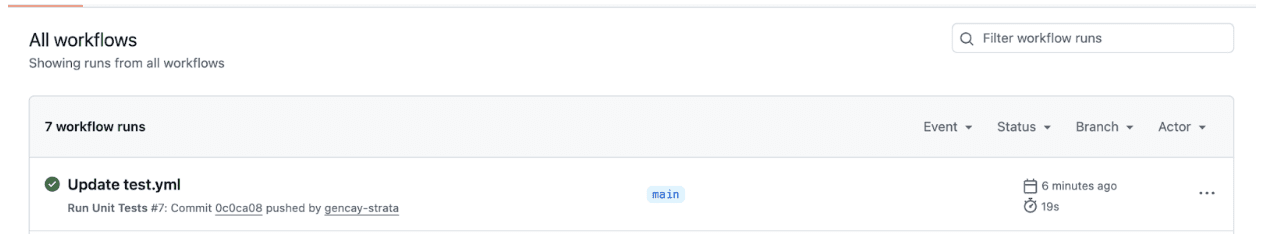

Once you have uploaded all the files to your GitHub repository, open the Actions tab, as shown in the screenshot.

In the Actions tab, you will see a green checkmark next to the workflow run if everything ran successfully.

Click the run (here, “Update test.yml”) to see what actually happened. You’ll get the complete log, from installing Python to running the tests. If all tests pass:

- There will be a check mark on each step.

- This confirms that everything worked as expected.

- This means that your code behaves as intended at every step based on the tests you define.

- The output matches the goals you set when creating those tests.

Let’s take a look:

As you can see, our unit test completed in just 1 second, and the entire CI process finished in 17 seconds, verifying everything from setup to test execution.

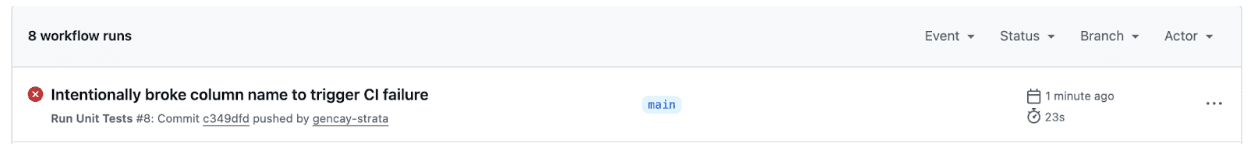
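Before pushing, you can reproduce locally what the “Run unit tests” step does. The sketch below uses the standard library’s test discovery from Python rather than the command line. It is a self-contained illustration: the temporary folder and the `test_discovery_demo.py` file are hypothetical stand-ins for your project root and `test_solution.py`.

```python
import tempfile
import textwrap
import unittest
from pathlib import Path

# Hypothetical test module, standing in for test_solution.py.
demo_test_source = textwrap.dedent(
    """
    import unittest

    class TestDemo(unittest.TestCase):
        def test_difference(self):
            self.assertEqual(2 - 2, 0)
    """
)

with tempfile.TemporaryDirectory() as project_dir:
    Path(project_dir, "test_discovery_demo.py").write_text(demo_test_source)

    # Discovery imports every file matching test*.py under start_dir
    # and collects the TestCase classes it finds.
    suite = unittest.defaultTestLoader.discover(start_dir=project_dir, pattern="test*.py")
    outcome = unittest.TextTestRunner(verbosity=2).run(suite)

print("tests run:", outcome.testsRun, "| all passed:", outcome.wasSuccessful())
```

This also explains the file naming in the project: the default discovery pattern is `test*.py`, so `test_solution.py` is picked up without any extra configuration.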

// When a small change breaks the test

Not every change will pass the tests. Let’s say you accidentally rename a column in solution.py and push the change to GitHub. For example:

    # Original (works fine)
    df['net_new_products'] = df['product_name_2020'] - df['product_name_2019']

    # Accidental change
    df['new_products'] = df['product_name_2020'] - df['product_name_2019']

Now let’s see the test results in the Actions tab.

The run has failed. Let’s click it to see the details.

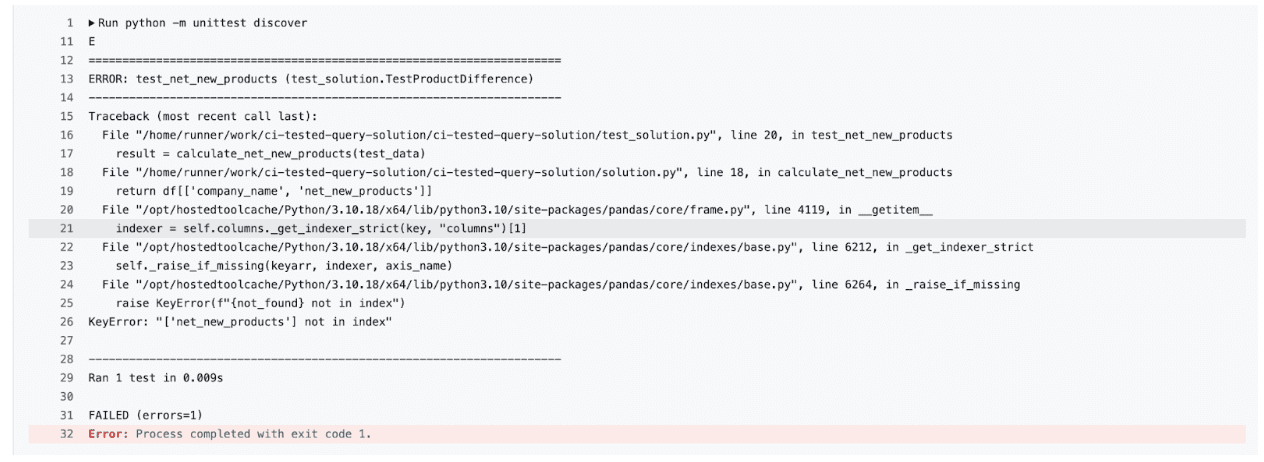

Unit tests did not pass, so click “Run Unit Tests” to see the full error message.

As you can see, our tests revealed the problem, KeyError: 'net_new_products', because the column name in the function no longer matches what the test expects.

This way you keep your code under constant check. The tests act as your safety net if you or someone on your team makes a mistake.
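The same failure can be reproduced locally in a few lines. This is a minimal sketch with made-up counts, not the full solution: it applies the accidental rename from the example above and then selects the column that the rest of the code still expects.

```python
import pandas as pd

# Made-up per-company counts, standing in for the grouped DataFrame.
df = pd.DataFrame({
    "company_name": ["Toyota"],
    "product_name_2020": [2],
    "product_name_2019": [2],
})

# The accidental rename: the result is stored under the wrong column name.
df["new_products"] = df["product_name_2020"] - df["product_name_2019"]

error = None
try:
    # The final selection still asks for the original column name.
    result = df[["company_name", "net_new_products"]]
except KeyError as exc:
    error = exc
    print("KeyError:", exc)
```

The exact wording of the message can vary between pandas versions, but it names the missing column, which is what makes the CI log easy to act on.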

# Using version control to track and test changes

Versioning helps you track every change you make, whether it’s to your logic, to your tests, or to your dataset. Let’s say you want to try a new way of grouping data. Instead of editing the main script directly, create a new branch:

    git checkout -b refactor-grouping

Here’s what’s next:

- Make your changes, commit them and run the test.

- If all tests pass, i.e. the code works as expected, merge it.

- If not, revert or discard the branch without affecting the main code.

This is the power of version control: every change is tracked, testable, and reversible.
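As an illustration of the kind of change you might try on such a branch, here is a sketch of a different grouping approach together with its tests. Everything in it is an assumption made for the example: the function name `calculate_net_new_products_refactored`, the `groupby` plus `unstack` technique (the article’s solution uses a merge instead), and the second test case, which covers a company that launched products in only one year.

```python
import unittest

import pandas as pd


def calculate_net_new_products_refactored(car_launches):
    """Hypothetical refactor: count unique products per company and year."""
    data = car_launches.assign(year=car_launches["year"].astype(int))
    counts = (
        data.groupby(["company_name", "year"])["product_name"]
        .nunique()
        .unstack(fill_value=0)  # companies missing a year get a count of 0
        .reindex(columns=[2019, 2020], fill_value=0)
    )
    net = (counts[2020] - counts[2019]).rename("net_new_products")
    return net.reset_index()


class TestRefactoredGrouping(unittest.TestCase):
    def check(self, data, expected):
        result = calculate_net_new_products_refactored(data)
        result = result.sort_values("company_name").reset_index(drop=True)
        pd.testing.assert_frame_equal(result, expected)

    def test_article_example(self):
        # Same input and expected output as the test data defined earlier.
        data = pd.DataFrame({
            "year": [2019, 2019, 2020, 2020],
            "company_name": ["Toyota"] * 4,
            "product_name": ["Camry", "Avalon", "Corolla", "Yaris"],
        })
        expected = pd.DataFrame({"company_name": ["Toyota"], "net_new_products": [0]})
        self.check(data, expected)

    def test_company_with_one_year_only(self):
        # Edge case (made-up data): Honda has launches in 2019 but none in 2020.
        data = pd.DataFrame({
            "year": [2019, 2019, 2019, 2020, 2020],
            "company_name": ["Honda", "Honda", "Toyota", "Toyota", "Toyota"],
            "product_name": ["Accord", "Passport", "Camry", "Corolla", "Yaris"],
        })
        expected = pd.DataFrame({
            "company_name": ["Honda", "Toyota"],
            "net_new_products": [-2, 1],
        })
        self.check(data, expected)


suite = unittest.defaultTestLoader.loadTestsFromTestCase(TestRefactoredGrouping)
outcome = unittest.TextTestRunner(verbosity=2).run(suite)
```

If both tests pass on the branch, the refactor is a candidate for merging; if one fails, the main branch is untouched and the failing case tells you what to fix.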

# Final thoughts

Most people stop after getting the right answer. But real-world data solutions demand much more than this. They reward those who can build queries that hold up over time, not just once.

With versioning, unit testing, and a simple CI setup, even a one-time interview question becomes a reliable, reusable part of your portfolio.

Nate Rosidi is a data scientist and works in product strategy. He is also an adjunct professor teaching analytics, and is the founder of StrataScratch, a platform that helps data scientists prepare for their interviews with real interview questions from top companies. Nate writes on the latest trends in the career market, gives interview advice, shares data science projects, and covers everything SQL.