In the fast-paced world of agentic workflows, even the most powerful AI model is only as good as the documentation it can draw on. Today, Andrew Ng and his team officially launched DeepLearning.AI Context Hub, an open-source tool designed to bridge the gap between agents’ static training data and the rapidly evolving reality of modern APIs.

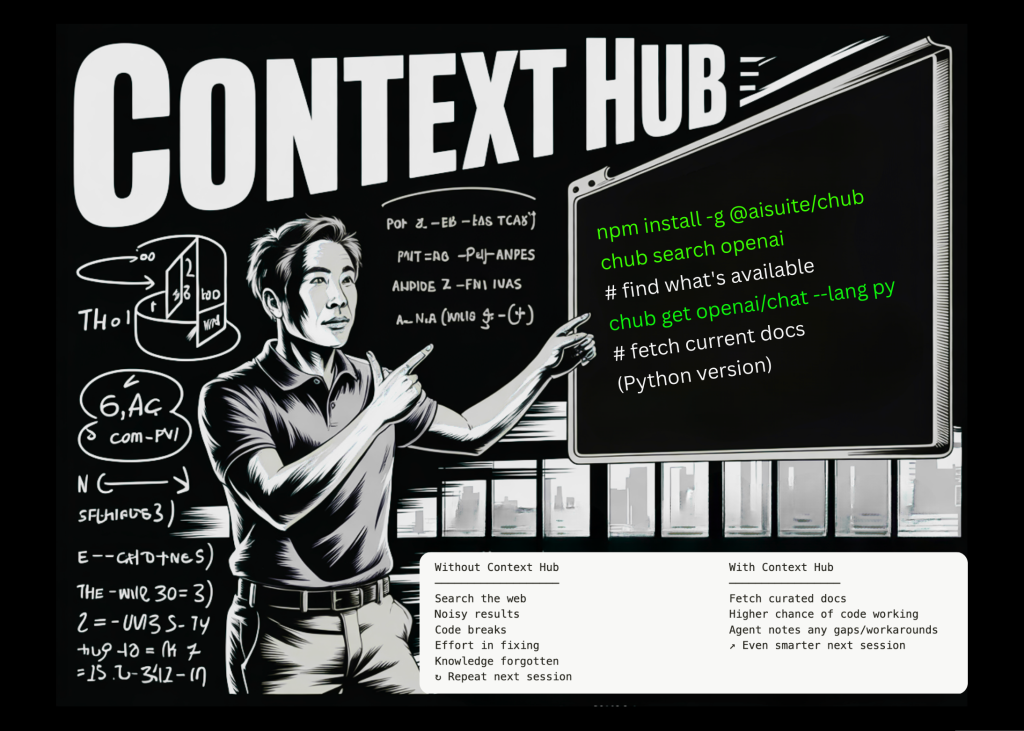

You ask an agent like Claude Code to build a feature, but it hallucinates a parameter that was removed six months ago or fails to use a newer, more efficient endpoint. Context Hub offers a simple CLI-based solution to make sure your coding agent always has the ‘ground truth’ it needs to execute correctly.

Problem: When LLMs live in the past

Large language models (LLMs) are frozen in time once they finish training. While Retrieval-Augmented Generation (RAG) has helped ground models in private data, the ‘public’ documentation they rely on is often a jumble of outdated blog posts, legacy SDK examples, and obsolete Stack Overflow threads.

The result is what developers are calling ‘agent drift’. Consider a hypothetical but highly plausible scenario: a developer asks an agent to call OpenAI’s GPT-5.2. Even if the newer Responses API has been the industry standard for a year, the agent, relying on its original training data, may stubbornly stick to the older Chat Completions API. This leads to broken code, wasted tokens, and hours of manual debugging.

Coding agents often call outdated APIs and hallucinate parameters. Context Hub is designed to intervene at the exact moment an agent starts guessing.

chub: a CLI for agent reference

At its core, Context Hub is built around a lightweight CLI tool called chub, which gives agents access to a curated registry of up-to-date, versioned documents served in a format optimized for LLM consumption.

Instead of scouring the web and getting lost in noisy HTML, the agent uses chub to fetch the exact Markdown document it needs. The workflow is straightforward: you install the tool, then prompt your agent to use it.

The standard chub toolset includes:

- chub search: lets the agent find the specific API or skill it needs.
- chub get: fetches curated documents, often with language-specific variants (for example, --lang py or --lang js) to reduce wasted tokens.
- chub annotate: this is where the tool starts to set itself apart from standard search engines.
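For agent harnesses that call tools programmatically rather than through a shell prompt, the commands above can be wrapped in a few lines of Python. This is a minimal sketch, not part of Context Hub itself: it assumes chub is installed and on your PATH and that it prints the requested document to standard output. The helper names build_chub_args and run_chub are hypothetical, and the query form passed to chub search is an assumption; only the subcommands and the --lang flag mentioned in this article are used.

```python
import shutil
import subprocess
from typing import List, Optional


def build_chub_args(subcommand: str, target: str, lang: Optional[str] = None) -> List[str]:
    """Build an argv list for a chub call, e.g. `chub get stripe/api --lang py`.

    Hypothetical helper: only the subcommands and the --lang flag described
    in the article are used here.
    """
    args = ["chub", subcommand, target]
    if lang is not None:
        # Ask for a language-specific variant to avoid wasting tokens.
        args += ["--lang", lang]
    return args


def run_chub(subcommand: str, target: str, lang: Optional[str] = None) -> str:
    """Run chub and return what it prints to stdout (assumed to be Markdown)."""
    if shutil.which("chub") is None:
        raise RuntimeError("chub was not found on PATH; install Context Hub first")
    result = subprocess.run(
        build_chub_args(subcommand, target, lang),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if chub exits with an error
    )
    return result.stdout


if __name__ == "__main__":
    # Only builds the command; does not require chub to be installed.
    print(build_chub_args("get", "stripe/api", lang="py"))
    # → ['chub', 'get', 'stripe/api', '--lang', 'py']
```

Passing an argument list instead of a single shell string sidesteps quoting problems when a search query or note contains spaces.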

Self-Improving Agent: Annotations and Workarounds

One of the most compelling features is the ability for agents to ‘remember’ technical constraints. Historically, if an agent discovered a specific solution to a bug in the beta library, that knowledge would disappear once the session ended.

With Context Hub, an agent can use the chub annotate command to save a note to the local document registry. For example, if an agent discovers that a specific webhook verification step requires the raw request body rather than a parsed JSON object, it can run:

chub annotate stripe/api "Needs raw body for webhook verification"

In the next session, when the agent (or any agent on that machine) runs chub get stripe/api, that note is automatically attached to the document. This effectively gives coding agents “long-term memory” for technical nuances, so they don’t have to reinvent the same wheel every morning.
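To make the annotate-then-get loop concrete, here is a toy model of the behavior described above. It is not chub’s implementation: the on-disk format (a JSON file mapping document IDs to lists of notes), the file location, the AnnotationStore class, and the way notes are appended to the document are all assumptions chosen for illustration.

```python
import json
import tempfile
from pathlib import Path
from typing import Dict, List


class AnnotationStore:
    """Toy local store that persists notes per document ID in a JSON file.

    Hypothetical: chub's real storage format and location may differ.
    """

    def __init__(self, path: Path) -> None:
        self.path = path

    def _load(self) -> Dict[str, List[str]]:
        if not self.path.exists():
            return {}
        return json.loads(self.path.read_text(encoding="utf-8"))

    def annotate(self, doc_id: str, note: str) -> None:
        """Save a note to disk so that later sessions can see it."""
        data = self._load()
        data.setdefault(doc_id, []).append(note)
        self.path.write_text(json.dumps(data, indent=2), encoding="utf-8")

    def get(self, doc_id: str, doc_body: str) -> str:
        """Return the document with any saved notes appended at the end."""
        notes = self._load().get(doc_id, [])
        if not notes:
            return doc_body
        lines = [doc_body.rstrip(), "", "## Local notes"]
        lines += [f"- {note}" for note in notes]
        return "\n".join(lines) + "\n"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        store_path = Path(tmp) / "annotations.json"
        # Session 1: the agent records a workaround.
        AnnotationStore(store_path).annotate(
            "stripe/api", "Needs raw body for webhook verification"
        )
        # Session 2: a fresh object (a new session) still sees the note.
        print(AnnotationStore(store_path).get("stripe/api", "# Stripe API\n..."))
```

Keeping notes in a separate file, rather than editing the fetched document in place, means a refreshed copy of the docs does not wipe out what the agent has learned.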

Crowdsourcing the ‘ground truth’

While annotations stay local to the developer’s machine, Context Hub also offers a feedback loop designed to benefit the entire community. With the chub feedback command, agents can upvote or downvote documentation and apply specific labels such as accurate, outdated, or wrong-examples.

This feedback flows back to the maintainers of the Context Hub registry. Over time, the most reliable documents rise to the top, while outdated entries are flagged and updated by the community. It is a decentralized approach to maintaining documentation that evolves as quickly as the code it describes.
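The ranking idea can be sketched in a few lines. The scoring rule below (net votes, with one extra penalty point for each outdated or wrong-examples label) is purely an assumption for illustration; the article does not describe how the Context Hub registry actually weighs feedback, and the Feedback type, the helper functions, and the document IDs in the example are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional

# Labels taken from the article; the penalty rule is made up for this sketch.
NEGATIVE_LABELS = {"outdated", "wrong-examples"}


@dataclass
class Feedback:
    doc_id: str
    vote: int  # +1 for an upvote, -1 for a downvote
    label: Optional[str] = None  # e.g. "accurate", "outdated", "wrong-examples"


def score_docs(feedback: Iterable[Feedback]) -> Dict[str, int]:
    """Net votes per document, minus one extra point per negative label."""
    scores: Dict[str, int] = defaultdict(int)
    for fb in feedback:
        scores[fb.doc_id] += fb.vote
        if fb.label in NEGATIVE_LABELS:
            scores[fb.doc_id] -= 1
    return dict(scores)


def rank_docs(feedback: Iterable[Feedback]) -> List[str]:
    """Document IDs from most to least trusted (ties broken alphabetically)."""
    scores = score_docs(feedback)
    return sorted(scores, key=lambda doc: (-scores[doc], doc))


def flag_for_review(feedback: Iterable[Feedback], threshold: int = 0) -> List[str]:
    """Documents scoring below the threshold, i.e. candidates for an update."""
    scores = score_docs(feedback)
    return sorted(doc for doc, s in scores.items() if s < threshold)


if __name__ == "__main__":
    events = [
        Feedback("openai/responses", +1, "accurate"),
        Feedback("openai/responses", +1),
        Feedback("openai/chat-legacy", -1, "outdated"),
    ]
    print(rank_docs(events))        # → ['openai/responses', 'openai/chat-legacy']
    print(flag_for_review(events))  # → ['openai/chat-legacy']
```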

Key takeaways

- Solution to ‘Agent Drift’: Context Hub addresses the critical issue where AI agents rely on their static training data, causing them to use outdated APIs or hallucinate parameters that no longer exist.

- CLI-powered ground truth: Through the chub CLI, agents can instantly fetch curated, LLM-optimized Markdown documentation for specific APIs, ensuring they build with the most current standards (for example, using OpenAI’s newer Responses API instead of the older Chat Completions API).

- Persistent Agent Memory: The chub annotate command lets agents save specific technical solutions or notes to a local registry, so they don’t have to ‘re-discover’ the same fix in future sessions.

- Collaborative Intelligence: Using chub feedback, agents can vote on the accuracy of documentation. This creates a crowdsourced ‘ground truth’ in which the most reliable, up-to-date resources surface for the entire developer community.

- Language-specific precision: The tool reduces ‘token waste’ by letting agents request documents tailored to their current stack (e.g., with the --lang py or --lang js flags), keeping the context dense and highly relevant.

Check out the GitHub repo.