Customers expect instant feedback on every interaction, whether it’s a recommendation delivered in milliseconds, a fraudulent charge blocked before it’s cleared, or a search result that feels immediate to the user. At scale, providing these experiences depends on model serving systems that remain fast, stable, and predictable, even under constant and uneven load.

As traffic grows to tens or hundreds of thousands of requests per second, many teams face similar challenges. Latency becomes inconsistent, infrastructure costs increase, and systems require constant tuning to handle spikes and drops in demand. Failures also become harder to diagnose as more components are linked together, causing teams to move away from improving models and instead focus on keeping production systems running.

This post explains how Databricks Model Serving supports high-QPS real-time workloads and outlines best practices that you can implement to achieve low latency, high throughput, and predictable performance in production.

Databricks Model Serving: Simple and scalable for high-QPS workloads

Databricks Model Serving provides a fully managed, scalable serving infrastructure directly within your Databricks Lakehouse. Simply take an existing model from your model registry, deploy it, and get a REST endpoint on managed infrastructure that is highly scalable and optimized for high-QPS traffic.

Databricks Model Serving is optimized for mission-critical high QPS workloads:

- Real-time adaptive engine – A self-optimizing model server that adapts to each model’s workload, driving greater throughput and resource utilization from the same hardware.

- Fully horizontally scalable architecture – Our inference server, authentication layer, proxy, and rate limiter are all designed to scale independently, allowing the system to sustain very high request volumes.

- Fast elastic scaling – Inference servers scale up and down quickly, adapting to sudden traffic spikes or drops without over-provisioning.

- Native Feature Store Integration: Databricks Feature Serving integrates seamlessly with Model Serving, allowing you to deploy features and models together as a complete application.

- Lakehouse Native: Customers can centralize their production ML systems (features, training, MLOps through MLflow, serving, and real-time monitoring) in a unified stack, reducing operational complexity and speeding up deployment.

Databricks Model Serving empowers our team to deploy machine learning models with the reliability and scale required for real-time applications. It is designed to handle high-QPS workloads while maximizing hardware utilization. Additionally, Databricks offers a SOTA Feature Store solution with the super fast lookups required for such workloads. With these capabilities, our ML engineers can focus on what matters: refining model performance and enhancing the user experience. – Bojan Babic, Research Engineer, you.com

Best practices for achieving high QPS performance on model serving

With this foundation, the next step is to optimize your endpoints, models, and client applications to consistently achieve high throughput and low latency, especially as traffic increases. The following best practices come from real customer deployments that run millions to billions of inferences every day.

For more information, please see our Best Practice Guide.

Best Practice 1: Lower latency by using route optimized endpoints

An important first step is to ensure that the network layer is optimized for high throughput (QPS) and low latency. Model Serving does this work for you with route-optimized endpoints. When you enable route optimization on an endpoint, Databricks optimizes the network and routing for model serving inference requests, resulting in faster, more direct communication between your client and the model. This significantly reduces the time it takes for a request to reach the model, and is particularly useful for low-latency applications such as recommendation systems, search, and fraud detection.
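As a rough illustration, route optimization is typically requested when the endpoint is created. The sketch below only builds the JSON body for such a request and does not call any API. The field names (`route_optimized`, `served_entities`, `workload_size`) are assumptions based on the serving-endpoints REST API and should be checked against the current documentation; the endpoint and model names are hypothetical.

```python
import json


def build_endpoint_request(name, entity_name, entity_version, workload_size="Small"):
    """Build a create-endpoint request body with route optimization enabled.

    Field names are assumptions; verify them against the Databricks docs.
    """
    return {
        "name": name,
        # Assumed flag that turns on route optimization at creation time.
        "route_optimized": True,
        "config": {
            "served_entities": [
                {
                    "entity_name": entity_name,
                    "entity_version": entity_version,
                    "workload_size": workload_size,
                }
            ]
        },
    }


# Hypothetical endpoint and registered-model names, for illustration only.
body = build_endpoint_request("fraud-scoring", "main.ml.fraud_model", "3")
print(json.dumps(body, indent=2))
```

Keeping the request body in a small helper like this makes it easy to review the setting in code before it is sent with whichever client you use.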

Best Practice 2: Optimize the model and the endpoint

In high-throughput scenarios, reducing model complexity, offloading processing from the serving endpoint, and choosing the right concurrency targets help your endpoint scale to larger request volumes with only the compute it needs. This way your endpoints stay cost efficient but can still scale to meet performance goals.

- Model size and complexity: Smaller, less complex models generally lead to faster inference times and higher QPS. If your model is large, consider techniques like model quantization or pruning.

- Pre-Processing and Post-Processing: Offload complex pre-processing and post-processing steps from the serving endpoint whenever possible. This ensures that your model serving endpoint only performs the critical step of inference.

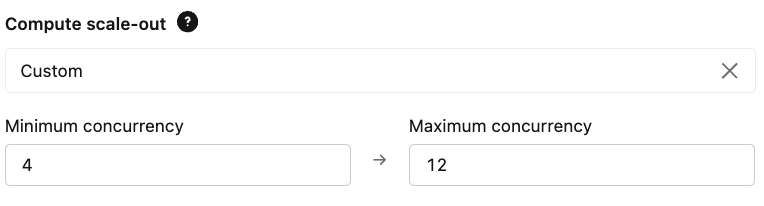

- Scaling: Configure your provisioned concurrency limits based on your expected QPS and latency requirements. This ensures that the endpoint is large enough to handle the baseline load and leaves room for peak demand.
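One way to turn expected QPS and latency into a concurrency target is Little's law: the average number of in-flight requests equals the arrival rate multiplied by the time each request spends in the system. The sketch below is a back-of-the-envelope estimate under that assumption. The function name and the `headroom` parameter are illustrative, and the result still has to be mapped onto whatever concurrency settings your endpoint exposes.

```python
import math


def required_concurrency(qps: float, latency_ms: float, headroom: float = 0.5) -> int:
    """Estimate concurrent requests needed, using Little's law plus headroom.

    qps        : expected requests per second
    latency_ms : expected end-to-end model latency per request, in milliseconds
    headroom   : extra fraction reserved for spikes (0.5 means 50% extra)
    """
    if qps < 0 or latency_ms < 0 or headroom < 0:
        raise ValueError("qps, latency_ms and headroom must be non-negative")
    in_flight = qps * latency_ms / 1000.0  # Little's law: L = lambda * W
    # round() guards against floating-point noise pushing ceil() up by one.
    return math.ceil(round(in_flight * (1.0 + headroom), 9))


baseline = required_concurrency(qps=10_000, latency_ms=20, headroom=0.0)
peak = required_concurrency(qps=10_000, latency_ms=20, headroom=0.5)
print(baseline, peak)  # 200 300
```

For example, 10,000 requests per second at 20 ms each keeps about 200 requests in flight on average, so a baseline below that would queue requests, and a 50% buffer raises the target to 300.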

With Databricks Model Serving, we can handle high-QPS workloads like personalization and recommendations in real-time. This gives our brands the scale and speed they need to deliver customized content experiences to our millions of readers. – Oscar Selma, SVP of Data Science and Product Analytics at Conde Nast

Best Practice 3: Optimize Client Side Code

Optimizing client-side code ensures that requests are processed quickly and your endpoint compute instances are fully utilized – leading to better QPS throughput, cost savings and lower latency.

- Connection Pooling: Use connection pooling on the client side to reduce the overhead of establishing new connections for each request. The Databricks SDK already follows connection best practices. If you need to use your own client, pay close attention to its connection management strategy.

- Payload Size: Keep request and response payloads as small as possible to reduce network transfer times.

- Client-Side Batching: If your application can send multiple records in a single call, enable batching on the client side. This can significantly reduce the overhead per prediction.

Batching sends multiple records together in a single call to a Databricks Model Serving endpoint.
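A minimal sketch of client-side batching follows. It groups records into fixed-size batches and sends one payload per batch. The `send` callable is a stand-in for your HTTP client, the `dataframe_records` payload key is assumed to be one of the input formats your endpoint accepts, and the scoring rule in `fake_send` is purely illustrative.

```python
from typing import Callable, Dict, Iterator, List

Record = Dict[str, object]


def chunk(records: List[Record], batch_size: int) -> Iterator[List[Record]]:
    """Yield consecutive slices of at most batch_size records."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(records), batch_size):
        yield records[start:start + batch_size]


def score_in_batches(
    records: List[Record],
    batch_size: int,
    send: Callable[[dict], list],
) -> list:
    """Send one request per batch and return predictions in input order."""
    predictions: list = []
    for batch in chunk(records, batch_size):
        payload = {"dataframe_records": batch}  # assumed payload format
        predictions.extend(send(payload))
    return predictions


calls: List[int] = []


def fake_send(payload: dict) -> list:
    """Stand-in for a real endpoint call; records the size of each batch."""
    rows = payload["dataframe_records"]
    calls.append(len(rows))
    return [row["amount"] > 100 for row in rows]


rows = [{"amount": a} for a in (5, 250, 80, 900, 120)]
result = score_in_batches(rows, batch_size=2, send=fake_send)
print(result)  # [False, True, False, True, True]
print(calls)   # [2, 2, 1]
```

Here five records are scored with three calls instead of five. In a real client, `send` would post the payload to the endpoint over a reused connection (for example a shared `requests.Session`), which combines batching with connection pooling.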

Get started today

- Try Databricks Model Serving! Start deploying ML models as REST APIs.

- Dive deeper: See the Databricks documentation on serving custom models.

- High QPS Guide: See the Best Practice Guide for high-QPS serving on Databricks Model Serving.