Author(s): Shreyash Shukla

Originally published on Towards AI.

The “Generalist” Ceiling

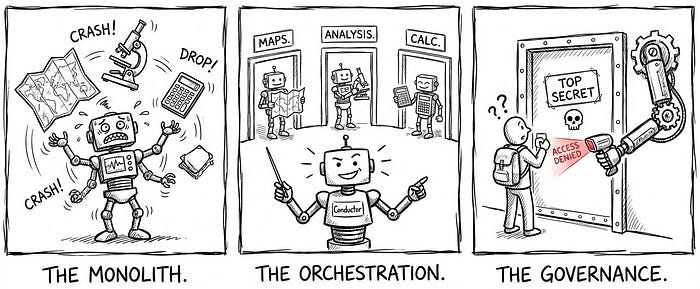

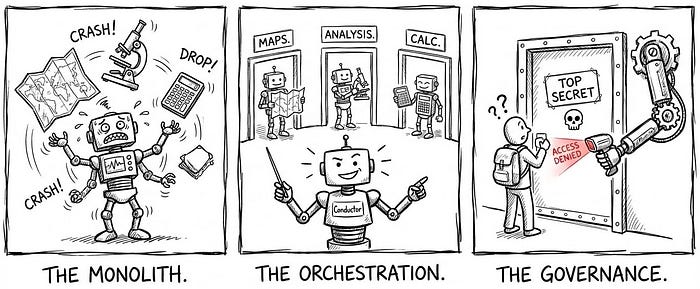

In the previous five articles, we built a robust single agent with memory, tools, and user context. However, as we scale this agent to handle enterprise-grade complexity, such as “deep-dive root cause analysis” or “metric design,” we hit a hard limit: the generalist trap.

The Bottleneck: A monolithic system in which a single agent operates from a single prompt is inherently limited. Microsoft notes that while single-agent architectures offer simplicity, they often falter in complex, dynamic environments that require specific, conflicting logic paths (Single-Agent vs. Multi-Agent Architecture – Microsoft Learn).

When we try to force one agent to be an expert at everything (SQL generation, data visualization, statistical hypothesis testing, and creative metric design), we degrade its performance at anything. The context window fills with competing instructions, causing “distraction,” where the model ignores specific constraints.
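To make the “competing instructions” point concrete, the sketch below compares how many instruction words a request carries in a monolithic prompt versus a single-specialty prompt. The prompt texts are hypothetical, and whitespace word counts are only a rough proxy for model tokens. Note that the “be creative” metric-design rules share the monolithic context with the “never guess” SQL rules, which is exactly the kind of conflict described above.

```python
# Hypothetical instruction sets for the four specialties named in the text.
# The wording is illustrative; repetition simulates realistically long prompts.
SPECIALTY_PROMPTS = {
    "sql_generation": "Write ANSI SQL. Qualify every column. Never guess table names. " * 20,
    "visualization": "Pick chart types by data shape. Label axes. Avoid dual y-axes. " * 20,
    "hypothesis_testing": ("State the null hypothesis. Check assumptions. "
                           "Report p-values and effect sizes. ") * 20,
    "metric_design": "Be creative. Propose novel KPIs. Explore unconventional framings. " * 20,
}


def word_count(text: str) -> int:
    """Crude size proxy: whitespace-separated words, not model tokens."""
    return len(text.split())


def monolithic_context() -> int:
    """One generalist agent: every specialty's instructions ride along on every request."""
    return sum(word_count(p) for p in SPECIALTY_PROMPTS.values())


def specialist_context(specialty: str) -> int:
    """One specialist agent: only the instructions relevant to this request."""
    return word_count(SPECIALTY_PROMPTS[specialty])


for name in SPECIALTY_PROMPTS:
    share = specialist_context(name) / monolithic_context()
    print(f"{name}: {specialist_context(name)} of {monolithic_context()} words ({share:.0%})")
```

Each specialist prompt is only a fraction of the monolithic one, and none of them contains another specialty’s conflicting rules.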

The Bloat (the “Giant Brain” Problem): From an engineering perspective, a monolithic agent is a liability. Aggregating hundreds of tools and thousands of lines of instructions into one `system_prompt` creates a “God object.”

- Debuggability: If the agent fails to write correct SQL, is it because of the SQL instructions, or did the new “Creative Writing” instructions confuse it?

- Hallucinations: Research on Magentic-One (Microsoft’s multi-agent system) shows that monolithic agents struggle with inflexible workflows, while distributing distinct skills across distinct agents significantly reduces error rates (Magentic-One: A Generalist Multi-Agent System – Microsoft Research).

The Solution: The Swiss Army Knife Pattern. To break this ceiling, we must transition from a “Worker” model to an “Orchestrator” model. We stop building one giant bot and start building a team.

- The Shift: We decompose the monolith into a fleet of specialist agents (sub-agents).

- The Orchestrator: The primary agent becomes a router. Its only job is to understand the user’s intent and assign the task to the right expert.

- Validation: Industry benchmarks using frameworks such as AutoGen show that multi-agent systems can improve accuracy on complex tasks by more than 20% by allowing agents to critique and refine each other’s work (How are Multi-Agent LLM different from traditional LLM – DeepCheck).

The Expert Fleet (Process-Based Agents)

To break the “generalist ceiling,” we adopt a multi-agent architecture. Rather than a single agent striving to be a “jack of all trades,” we create a fleet of specialized “experts.”

This approach reflects the “Agentic Design Patterns” advocated by AI thought leaders such as Andrew Ng. His work emphasizes that decomposing complex workflows into small, iterative steps handled by specialized agents (e.g., coders, critics) outperforms zero-shot prompting. By separating roles, we ensure that each agent works only within the context relevant to its specific domain, reducing the noise that leads to hallucinations (The Batch – Agentic Workflows).
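The coder/critic decomposition can be sketched as a draft-critique-revise loop. This is a minimal illustration under stated assumptions, not Andrew Ng’s code or any framework’s API: `draft_sql`, `critique_sql`, and `revise_sql` are hypothetical stand-ins for LLM-backed agents, stubbed with simple string rules so the control flow runs on its own.

```python
from typing import Callable, Optional


def draft_sql(task: str) -> str:
    """Hypothetical 'coder' agent: produces a first draft (stubbed, no LLM call)."""
    return "SELECT region, SUM(revenue) FROM sales"


def critique_sql(sql: str) -> Optional[str]:
    """Hypothetical 'critic' agent: returns feedback, or None if the draft passes."""
    if "SUM(" in sql and "GROUP BY" not in sql:
        return "Aggregate without GROUP BY on the non-aggregated column."
    return None


def revise_sql(sql: str, feedback: str) -> str:
    """Hypothetical revision step: the coder addresses the critic's feedback."""
    return sql + " GROUP BY region"


def reflect_loop(task: str,
                 draft: Callable[[str], str],
                 critique: Callable[[str], Optional[str]],
                 revise: Callable[[str, str], str],
                 max_rounds: int = 3) -> tuple[str, int]:
    """Draft once, then alternate critique/revise until the critic is satisfied.

    Returns the final output and the number of revisions made. If max_rounds is
    reached, the last revision is returned even though it was not re-checked.
    """
    output = draft(task)
    for rounds in range(max_rounds):
        feedback = critique(output)
        if feedback is None:
            return output, rounds
        output = revise(output, feedback)
    return output, max_rounds


sql, revisions = reflect_loop("Revenue by region", draft_sql, critique_sql, revise_sql)
print(revisions, sql)
```

Capping `max_rounds` keeps a critic that never approves from looping forever; a real system would typically surface any unresolved feedback to the user.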

The Roles: Defining the Experts. We define three primary expert agents to handle the most complex data workflows. These align with the “Mixture of Experts” patterns frequently discussed in advanced LLM system design:

- Deep Analysis Agent (Investigator): This agent is optimized for structured, multi-turn data slicing. It doesn’t just “query the data”; it guides the user through a hypothesis tree. It asks clarifying questions (“Do you want to segment by region or product?”) and suggests dimensions to test.

- Metric Innovation Agent (Architect): Designing a new KPI (Key Performance Indicator) requires a different cognitive mode than debugging code. This agent is instructed to act as a “product manager”, focusing on business logic, formula consistency, and edge-case definitions before writing any SQL.

- Exploration Agent (Scout): This agent is designed for open-ended search. It uses “exploratory data analysis” (EDA) techniques to uncover hidden patterns or anomalies in datasets without predefined user hypotheses.
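One way to give each expert its own isolated context is a small registry in which every sub-agent owns its system prompt and toolset, and the message list for a request is built from exactly one entry. The `SubAgent` dataclass, the prompt wording, and the tool names below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SubAgent:
    """A specialist with its own prompt and toolset (hypothetical structure)."""
    name: str
    system_prompt: str
    tools: tuple[str, ...] = ()


EXPERTS: dict[str, SubAgent] = {
    "deep_analysis_agent": SubAgent(
        name="deep_analysis_agent",
        system_prompt=("You are an investigator. Build a hypothesis tree, ask "
                       "clarifying questions, and propose dimensions to slice."),
        tools=("run_sql", "segment_by_dimension"),
    ),
    "metric_innovation_agent": SubAgent(
        name="metric_innovation_agent",
        system_prompt=("You are a product manager. Define business logic, formula "
                       "consistency, and edge cases before writing any SQL."),
        tools=("draft_metric_spec", "run_sql"),
    ),
    "exploration_agent": SubAgent(
        name="exploration_agent",
        system_prompt=("You are a scout. Run exploratory data analysis to surface "
                       "patterns and anomalies without a predefined hypothesis."),
        tools=("profile_table", "detect_anomalies"),
    ),
}


def build_context(agent_name: str, user_message: str) -> list[dict[str, str]]:
    """Assemble messages for ONE expert: its own prompt only, never the others'."""
    agent = EXPERTS[agent_name]
    return [
        {"role": "system", "content": agent.system_prompt},
        {"role": "user", "content": user_message},
    ]


print(build_context("exploration_agent", "What looks unusual in the orders table?"))
```

Because the context is assembled per expert, the scout never sees the product-manager instructions, and it is only offered the tools listed on its own entry.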

The Orchestrator: Routing Logic. The core of this system is the Orchestrator (formerly the Generalist). It no longer attempts to solve every problem. Instead, it acts as a router chain, a concept formalized by frameworks such as LangChain (LangChain Router Chain Documentation).

- Intent Detection: The orchestrator actively analyzes user intent. If a user asks, “Why did customer engagement decline last quarter?”, the orchestrator identifies this as a “root cause” problem.

- Handoff: It doesn’t try to answer. Instead, it triggers a handoff tool: `transfer_to_agent(agent_name="exploration_agent")`.

- Result: The user is seamlessly transferred to the expert agent, which loads its own specialized system prompt and toolset, ensuring that the context window is clean and focused.
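The routing step can be sketched as follows. In practice the intent classification would itself be an LLM call or a LangChain-style router chain; here a hypothetical keyword table stands in for it so the example runs without a model. `transfer_to_agent` is written as a plain Python function that records the handoff. It mirrors the tool name used in the text but does not reproduce any specific framework’s API.

```python
from typing import Optional

# Hypothetical intent -> expert rules; a production orchestrator would ask an LLM.
INTENT_RULES: list[tuple[tuple[str, ...], str]] = [
    (("segment", "slice", "drill down", "break down"), "deep_analysis_agent"),
    (("kpi", "metric"), "metric_innovation_agent"),
    (("explore", "anomal", "pattern"), "exploration_agent"),
]

HANDOFF_LOG: list[str] = []


def transfer_to_agent(agent_name: str) -> dict[str, str]:
    """Hypothetical handoff tool: records the transfer and returns a status."""
    HANDOFF_LOG.append(agent_name)
    return {"status": "transferred", "agent": agent_name}


def classify_intent(message: str) -> Optional[str]:
    """Return the first expert whose keywords match, or None if nothing matches."""
    text = message.lower()
    for keywords, agent_name in INTENT_RULES:
        if any(k in text for k in keywords):
            return agent_name
    return None


def orchestrate(message: str) -> dict[str, str]:
    """The orchestrator never answers domain questions itself; it only routes."""
    target = classify_intent(message)
    if target is None:
        return {"status": "clarify",
                "reply": "Do you want to explore the data, slice it, or define a metric?"}
    return transfer_to_agent(agent_name=target)


print(orchestrate("Explore the orders table for anomalies"))
```

When no rule matches, the orchestrator asks a clarifying question instead of guessing, which keeps it from drifting back into answering domain questions itself.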

The Gatekeeper (Sub-Agent Access Control)

In a monolithic system, access control is binary: you are either “in” or “out.” But in an expert ecosystem, granularity is essential. Not every user should have access to every expert.

- The Risk: A “Sales Program X Agent” may have access to sensitive compensation data.

- The Standard: We cannot simply trust the LLM to police itself (“Please don’t show this data”). According to the OWASP Top 10 for LLM Applications, risks such as “Model Denial of Service” and unauthorized access should be mitigated by a deterministic control layer, not by prompt engineering (OWASP Top 10 for LLM Applications).

The Mechanism: Middleware Interception. To solve this, we implement a middleware pattern (specifically, a `sub_agent_access` module) that acts as a firewall between the orchestrator and the sub-agents. This aligns with the NIST Zero Trust Architecture, which mandates that access to individual enterprise resources be granted on a per-session basis (NIST SP 800-207: Zero Trust Architecture).

The Enforcement Flow: We use a hook system (specifically `before_tool_callback`) to enforce these rules. The flow is strictly deterministic:

- Interception: When the orchestrator decides to call `transfer_to_agent('Exploration_Agent')`, the middleware halts execution before the tool runs.

- Verify (RBAC check): The system retrieves the user’s identity (`userid`) and cross-references it against the specific Access Control List (ACL) for the target agent (e.g., `Exploration_Agent_Allowed_Users`).

- Enforce:

  - If authorized: The callback returns `None`, allowing the tool to proceed and load the sub-agent.

  - If unauthorized: The callback raises a `PermissionError`. The tool call is rejected, and the orchestrator is forced to output a standard denial message: “Access Denied: You do not have permission to access the Analytics Expert.”
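The interception, verification, and enforcement steps can be sketched as a single callback plus a dispatcher that always runs it first. The signature below (tool name, arguments, and a session-state dict) is a simplified assumption, not the exact `before_tool_callback` signature of any particular agent framework; `ACLS`, `run_tool`, and the user IDs are hypothetical. The user is read from the session state on every call, in line with the per-session principle cited above, and unknown agents are denied by default.

```python
from typing import Any, Callable

# Hypothetical ACLs: one allow-list of user IDs per protected sub-agent.
ACLS: dict[str, set[str]] = {
    "Exploration_Agent": {"alice", "bob"},   # e.g. Exploration_Agent_Allowed_Users
    "Metric_Innovation_Agent": {"alice"},
}

HANDOFF_TOOL = "transfer_to_agent"


def sub_agent_access_callback(tool_name: str,
                              args: dict[str, Any],
                              session_state: dict[str, Any]) -> None:
    """Runs BEFORE a tool executes: returns None to allow, raises PermissionError to deny."""
    # 1. Interception: only handoff calls are gated; other tools pass through.
    if tool_name != HANDOFF_TOOL:
        return None

    target = args.get("agent_name")
    user_id = session_state.get("userid")   # re-read on every call, per session

    # 2. Verify: deterministic RBAC lookup; unknown agents get an empty set (deny).
    allowed = ACLS.get(target, set())

    # 3. Enforce.
    if user_id in allowed:
        return None   # authorized: the tool proceeds and loads the sub-agent
    raise PermissionError(
        f"Access Denied: You do not have permission to access {target}.")


def run_tool(tool_name: str, args: dict[str, Any], session_state: dict[str, Any],
             tools: dict[str, Callable[..., Any]]) -> Any:
    """Hypothetical dispatcher: the callback always runs before the tool itself."""
    sub_agent_access_callback(tool_name, args, session_state)   # may raise
    return tools[tool_name](**args)
```

Because the decision is a set lookup rather than a model judgment, the same user and target always produce the same outcome, regardless of how the prompt is phrased.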

The User Experience: This failure is handled gracefully. Instead of crashing, the orchestrator can be programmed to provide a “Request Access” link that guides the user to the appropriate identity provider (IdP) workflow to request membership in the group.
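The graceful-failure path can be sketched as a wrapper that catches the gatekeeper’s `PermissionError` and returns a denial message with a “Request Access” link instead of letting the exception end the conversation. The URL, the `{target}_Allowed_Users` group naming, and `demo_gate` are hypothetical placeholders for a real IdP workflow and ACL check.

```python
from typing import Callable

# Hypothetical placeholder; substitute your identity provider's access-request page.
REQUEST_ACCESS_URL = "https://idp.example.com/request-access?group={group}"


def demo_gate(target: str, user_id: str) -> None:
    """Hypothetical stand-in for the ACL check: raises PermissionError on denial."""
    if user_id not in {"alice"}:
        raise PermissionError(f"{user_id} -> {target}")


def handle_handoff(target: str, user_id: str,
                   gate: Callable[[str, str], None]) -> str:
    """Turn a gatekeeper denial into a helpful reply instead of a crash."""
    try:
        gate(target, user_id)
    except PermissionError:
        link = REQUEST_ACCESS_URL.format(group=f"{target}_Allowed_Users")
        return (f"Access Denied: You do not have permission to access {target}. "
                f"Request access here: {link}")
    return f"Transferring you to {target}."


print(handle_handoff("Exploration_Agent", "carol", demo_gate))
```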

Architecture of Scale (Modularity)

The transition to a multi-agent ecosystem is not just about adding features; it is a fundamental change in software architecture. We are moving from a monolithic application (one giant prompt) to a microservices architecture (many specialized prompts).

This shift addresses the three biggest barriers to scaling generative AI:

1. Modularity (the “Lego Block” Principle): In a monolithic agent, adding a new capability (for example, “predictive modeling”) requires editing the central system prompt. This creates a regression risk: a change to the forecasting instructions may accidentally break SQL generation.

- Solution: In our ecosystem, each sub-agent is a self-contained entity with its own memory, tools, and prompts. This separation of concerns ensures that updates to one agent do not ripple out and destabilize the others.

- Validation: Research on Microsoft’s Magentic-One system demonstrates that the “orchestrator-worker” pattern isolates failure modes. If a particular agent fails, the error is contained, preventing a system-wide crash (Magentic-One: A Generalist Multi-Agent System – Microsoft Research).
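The failure-containment idea can be sketched as an orchestrator that runs each sub-agent behind its own error boundary, so one expert raising an exception yields a structured error result rather than crashing the whole session. This is a generic illustration of the orchestrator-worker pattern, not Magentic-One’s code; the agent functions are hypothetical stubs.

```python
from typing import Callable

SubAgentFn = Callable[[str], str]


def forecasting_agent(task: str) -> str:
    """Hypothetical broken expert: fails on every call."""
    raise RuntimeError("model file not found")


def sql_agent(task: str) -> str:
    """Hypothetical healthy expert."""
    return f"SQL for: {task}"


def run_isolated(agents: dict[str, SubAgentFn], name: str, task: str) -> dict[str, str]:
    """Invoke one sub-agent; any exception is captured inside its own result."""
    try:
        return {"agent": name, "status": "ok", "output": agents[name](task)}
    except Exception as exc:   # error boundary: nothing propagates to the orchestrator
        return {"agent": name, "status": "error",
                "output": f"{type(exc).__name__}: {exc}"}


AGENTS: dict[str, SubAgentFn] = {"forecasting": forecasting_agent, "sql": sql_agent}

# The forecasting failure is reported, and the SQL agent still completes its work.
results = [run_isolated(AGENTS, n, "weekly revenue") for n in ("forecasting", "sql")]
for r in results:
    print(r)
```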

2. Maintainability (Reducing Cognitive Load): It is nearly impossible to debug a prompt with 5,000 tokens of instructions. It behaves like a “black box” in which the root cause of an error is hidden by competing instructions.

- Solution: By dividing the system into specialized agents, we reduce the complexity of any one instruction set. Developers can write targeted unit tests for the “analysis agent” in isolation, ensuring reliability at the component level before integration.

- Validation: Frameworks like LangChain emphasize that router chains can significantly reduce latency and cost by routing queries to smaller, more focused prompts rather than to a single, expensive generalist model (LangChain: Routing Logic).
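Testing a specialist in isolation, as the Maintainability solution describes, might look like the following `unittest` sketch. The component under test is hypothetical: a deterministic helper of the analysis agent that turns a requested breakdown into a `GROUP BY` query over an allow-listed set of dimensions. LLM-backed parts of an agent would typically have their model call mocked or be evaluated separately.

```python
import unittest

# Hypothetical deterministic component of the analysis agent.
ALLOWED_DIMENSIONS = {"region", "product", "channel"}


def build_breakdown_query(table: str, metric: str, dimension: str) -> str:
    """Build a GROUP BY query; reject dimensions the agent is not allowed to use."""
    if dimension not in ALLOWED_DIMENSIONS:
        raise ValueError(f"Unsupported dimension: {dimension}")
    return (f"SELECT {dimension}, SUM({metric}) AS total "
            f"FROM {table} GROUP BY {dimension}")


class AnalysisAgentTests(unittest.TestCase):
    """Covers only this agent; no orchestrator or other experts are involved."""

    def test_builds_group_by(self):
        sql = build_breakdown_query("engagement", "sessions", "region")
        self.assertEqual(
            sql,
            "SELECT region, SUM(sessions) AS total FROM engagement GROUP BY region")

    def test_rejects_unknown_dimension(self):
        with self.assertRaises(ValueError):
            build_breakdown_query("engagement", "sessions", "salary")
```

Because these tests import nothing from the orchestrator or the other experts, a change to another agent’s prompt cannot break them. They can be run with `python -m unittest` against the module that contains them.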

3. Extensibility (Future-Proofing): The real strength of this architecture is how it handles growth.

- Solution: New capabilities are added simply by “plugging in” a new sub-agent and updating the orchestrator’s routing definition. This allows the system to grow organically, from 3 agents to 30, without requiring a fundamental redesign of the original architecture.

- Validation: OpenAI’s research on multi-agent environments shows that modular systems scale more effectively because new “skills” can be added as discrete modules without retraining the core model (Multi-Agent Environments – OpenAI).
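The “plug in a sub-agent and update the routing definition” step can be sketched as a registry whose routing description is regenerated from the registered experts, so adding a capability is one `register` call that leaves existing agents untouched. The `Orchestrator` class, its methods, and the handlers are hypothetical illustrations, not a specific framework’s API.

```python
from dataclasses import dataclass, field
from typing import Callable

Handler = Callable[[str], str]


@dataclass
class Orchestrator:
    """Hypothetical routing table of sub-agents; adding one never edits the others."""
    routes: dict[str, Handler] = field(default_factory=dict)
    descriptions: dict[str, str] = field(default_factory=dict)

    def register(self, name: str, description: str, handler: Handler) -> None:
        if name in self.routes:
            raise ValueError(f"Sub-agent already registered: {name}")
        self.routes[name] = handler
        self.descriptions[name] = description

    def routing_definition(self) -> str:
        """Text the router would see: one line per available expert."""
        return "\n".join(f"- {n}: {d}" for n, d in sorted(self.descriptions.items()))

    def dispatch(self, name: str, task: str) -> str:
        return self.routes[name](task)


orch = Orchestrator()
orch.register("exploration_agent", "Open-ended EDA and anomaly search.",
              lambda t: f"[EDA] {t}")
# Plugging in a new capability later: one call, no changes to existing agents.
orch.register("forecasting_agent", "Predictive modeling and forecasts.",
              lambda t: f"[forecast] {t}")
print(orch.routing_definition())
```

Rejecting duplicate names is a deliberate choice here: a new expert should never silently replace an existing one.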

We have now completed the journey from a simple chatbot to a sophisticated enterprise mesh:

- We broke the generalist ceiling by specializing roles (Section 1).

- We built a specialist fleet to handle in-depth analysis and metric design (Section 2).

- We secured the perimeter with a middleware gatekeeper (Section 3).

- We ensured long-term growth through a modular architecture (Section 4).

The “agent” of the future is not a bot. It is a team lead, orchestrating a secure, scalable, and intelligent workforce that works 24/7 to empower your organization.

Build the Complete System

This article is part of the Cognitive Agent Architecture series, in which we walk through the engineering required to go from a basic chatbot to a secure, deterministic enterprise advisor.

To see the full roadmap, including the Semantic Graph (Brain), Gap Analysis (Discernment), and the Sub-Agent Ecosystem (Organization), see the master index below:

Cognitive Agent Architecture: From Chatbot to Enterprise Advisor
