In July 2025, the US FDA publicly released an initial batch of more than 200 complete response letters (CRLs), the decision letters that explain why drug and biologic applications were not initially approved, marking a major shift toward transparency. For the first time, sponsors, physicians, and data teams can analyze the industry through the agency’s own language about deficiencies in clinical, CMC, safety, labeling, and bioequivalence areas, using centralized, downloadable PDFs published on openFDA.

As the FDA continues to issue new CRLs, the ability to rapidly generate insights from this and other unstructured data, and to add those insights to internal intelligence, becomes a key competitive advantage. Organizations that can effectively use insights from unstructured data such as PDFs, documents, and images can de-risk their own submissions, identify common pitfalls, and ultimately accelerate their path to market. The challenge is that this data, like much other regulatory data, is locked in PDFs, which are extremely difficult to process at scale.

This is exactly the type of challenge Databricks was created to solve. This blog demonstrates how to use Databricks’ latest AI tooling to accelerate extraction of critical information trapped in PDFs – turning these important letters into a source of actionable intelligence.

What does it take to succeed with AI?

Given the technical depth required, engineers often lead development in silos, creating a huge gap between AI creation and business needs. By the time a subject matter expert (SME) sees the results, it is often not what they need. The feedback loop is too slow and the project loses momentum.

During the initial testing stages, it is important to establish a baseline. Alternative approaches often waste months without ground truth, relying on subjective observations and “vibes”. This lack of empirical evidence hinders progress. In contrast, Databricks tooling provides out-of-the-box evaluation features and lets customers emphasize quality immediately, using an iterative framework to gain measurable confidence in the extraction. AI success requires a new approach built on rapid, collaborative iteration.

Databricks provides an integrated platform where business SMEs and AI engineers can work together in real-time to build, test, and deploy production-quality agents. This framework is built on three key principles:

- Agile Business-Technology Alignment: SMEs and tech leads collaborate in a single UI for instant feedback instead of slow email loops.

- Ground truth evaluation: Business-defined “ground truth” labels are built directly into the workflow for formal scoring.

- A full platform approach: This is not a sandbox or point solution; it is fully integrated with automated pipelines, LLM-as-a-judge evaluation, production-reliable GPU throughput, and end-to-end Unity Catalog governance.

This integrated platform approach turns a prototype into a reliable, production-ready AI system. Let’s go through the four steps to build one.

From PDF to Production: A Four-Step Guide

Building a production-quality AI system on unstructured data requires more than just a good model; it requires a seamless, iterative, and collaborative workflow. Agent Bricks: Information Extraction, combined with Databricks’ built-in AI functions, makes it easy to parse documents, extract important information, and navigate the entire process. This approach empowers teams to move faster and deliver high-quality results. The four main stages are detailed below.

Step 1: Parsing unstructured PDFs into text with ai_parse_document()

The first hurdle is extracting clean text from the PDF. CRLs can have complex layouts with headers, footers, tables, charts, multiple pages, and multiple columns. A simple text extraction will often fail, producing incorrect and unusable output.

Unlike brittle point solutions that struggle with layout, ai_parse_document() leverages state-of-the-art multimodal AI to understand document structure: it accurately extracts text in reading order, preserves irregular table hierarchies, and generates captions for figures.

Additionally, Databricks scales reliably to enterprise-level volumes of complex PDFs at 3-5x lower cost than leading competitors. Teams don’t have to worry about file size limitations, and the OCR and vision language models (VLMs) under the hood ensure accurate parsing of historically “problem PDFs” containing dense, irregular data and other challenging structures.

What once required many data scientists to configure and maintain custom parsing stacks across multiple vendors can now be accomplished with a single, SQL-native function – allowing teams to process millions of documents in parallel without the failure modes that plague less scalable parsers.

To get started, first point a Unity Catalog (UC) volume to the cloud storage containing your PDFs. In our example, we’ll point the SQL function to the CRL PDFs managed by that volume:

This single command processes all your PDFs and creates a structured table with the parsed content and combined text, making it ready for the next step.
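A command of that kind could be sketched as follows. This is a minimal illustration, not the exact statement used for this post: the volume path and table name are hypothetical, the statement is built as a Python string so it can be parameterized, and it assumes the files are read with `READ_FILES` in binary format so that each row exposes `path` and `content` columns. Flattening the parsed result into a combined text column is left out because the output schema of ai_parse_document() is not shown here.

```python
def build_parse_statement(volume_path: str, target_table: str) -> str:
    """Return a SQL statement that parses every file under volume_path.

    Assumption: volume_path and target_table are trusted constants,
    not user input, so plain string formatting is acceptable here.
    """
    return f"""
CREATE OR REPLACE TABLE {target_table} AS
SELECT
  path,
  ai_parse_document(content) AS parsed
FROM READ_FILES('{volume_path}', format => 'binaryFile')
""".strip()


# Hypothetical names, for illustration only.
statement = build_parse_statement(
    volume_path="/Volumes/main/regulatory/crl_pdfs/",
    target_table="main.regulatory.crl_parsed",
)
print(statement)
```

In a Databricks notebook, the resulting string could then be passed to `spark.sql(statement)`.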

Note that we don’t need to configure any infrastructure, networking, or external LLM or GPU calls: Databricks hosts the GPU and model backend, enabling reliable, scalable throughput without additional configuration. Unlike platforms that charge licensing fees, Databricks uses a compute-based pricing model, meaning you only pay for the resources you use. This enables cost optimization through parallelization and function-level tuning in your production pipelines.

Step 2: Iterative Information Extraction with Agent Bricks

Once you have the text, the next goal is to extract specific, structured fields. For example: What was deficient? What was the NDA ID? What did the FDA cite in the rejection? This is where AI engineers and business SMEs need to collaborate closely. The SME knows what to look for, and can work with the engineer to quickly tell the model how to find it.

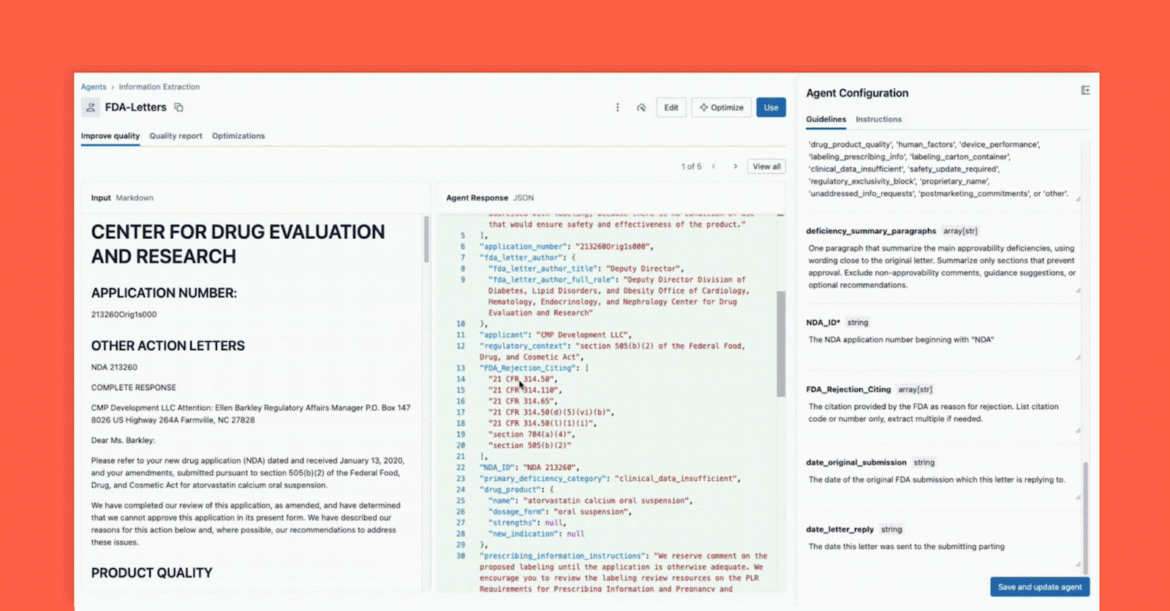

Agent Bricks: Information Extraction provides a real-time, collaborative UI for this exact workflow.

As shown below, the interface allows a technical lead and a business SME to work together:

- The business SME provides the specific fields that need to be extracted (for example, deficiency_summary_paragraphs, NDA_ID, FDA_Rejection_Citing).

- The information extraction agent turns these requirements into effective prompts, which appear as editable guidelines in the right-hand panel.

- Both tech leads and business SMEs can instantly see the JSON output in the center panel and verify whether the model is correctly extracting information from the document on the left. From here, either can rewrite a prompt to ensure accurate extraction.

This instant feedback loop is the key to success. If a field isn’t extracted correctly, the team can make changes to the prompt, add a new field, or refine the instructions and see results in seconds. This iterative process, where multiple experts collaborate across a single interface, is what separates successful AI projects from those that fail in silos.
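To make the review of the JSON output concrete, here is a small sketch of a structural check a team might run on each agent response. The three field names come from the example above; the expected types, the placeholder values, and the `check_extraction` helper are assumptions for illustration, not part of Agent Bricks.

```python
import json

# Field names are from the example above; the expected types are assumed.
EXPECTED_FIELDS = {
    "deficiency_summary_paragraphs": list,
    "NDA_ID": str,
    "FDA_Rejection_Citing": str,
}


def check_extraction(raw_json: str) -> list[str]:
    """Return the problems found in one agent response (empty if none)."""
    problems = []
    record = json.loads(raw_json)
    for field, expected_type in EXPECTED_FIELDS.items():
        value = record.get(field)
        if value in (None, "", []):
            problems.append(f"missing: {field}")
        elif not isinstance(value, expected_type):
            problems.append(f"wrong type: {field}")
    return problems


# Placeholder values only; not taken from a real letter.
sample = json.dumps({
    "deficiency_summary_paragraphs": ["Placeholder deficiency text."],
    "NDA_ID": "NDA 000000",
    "FDA_Rejection_Citing": "",
})
print(check_extraction(sample))  # ['missing: FDA_Rejection_Citing']
```

A check like this only catches structural gaps; whether the extracted values are actually right is the subject of the evaluation step.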

Step 3: Evaluate and verify the agent

In Step 2, we built an agent that looked correct during iterative development, based on a “vibe check.” But how do we ensure high accuracy and scalability when new data arrives? A change to the prompt that fixes one document may break ten others. This is where formal evaluation, an important and built-in part of the Agent Bricks workflow, comes in.

This step is your quality gate, and it provides two powerful methods for verification:

Method A: Evaluate with ground truth labels (gold standard)

AI, like any data science project, fails in a vacuum without proper domain knowledge. The SME’s investment in providing a “golden set” (a ground truth, labeled dataset) of manually extracted, human-validated information goes a long way toward ensuring that the solution generalizes to new files and formats. This is because labeled key:value pairs help the agent quickly tune into high-quality signals that lead to relevant and accurate conclusions for the business. Let’s take a look at how Agent Bricks uses these labels to formally score your agent.

Within the Agent Bricks UI, provide the ground truth test set; in the background, Agent Bricks runs on the test documents. The UI then shows a side-by-side comparison of your agent’s extracted output and the answer labeled “correct.”

The UI provides a clear accuracy score for each extraction field, which lets you detect regressions immediately when you change prompts. With Agent Bricks, you gain business-level trust that the agent is performing at or above human-level accuracy.
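The idea behind a per-field accuracy score and regression check can be sketched in a few lines. This is an illustration only: it assumes case-insensitive exact match as the scoring rule, which may differ from how Agent Bricks scores fields, and the document ids and values are placeholders.

```python
from collections import defaultdict


def _normalize(value):
    """Lowercase and collapse whitespace so trivial differences do not count."""
    return None if value is None else " ".join(str(value).split()).lower()


def field_accuracy(predictions, ground_truth):
    """Per-field accuracy; both args map doc id -> {field name -> value}.

    A document missing from predictions counts as wrong for every field.
    """
    correct, total = defaultdict(int), defaultdict(int)
    for doc_id, truth in ground_truth.items():
        predicted = predictions.get(doc_id, {})
        for field, expected in truth.items():
            total[field] += 1
            if _normalize(predicted.get(field)) == _normalize(expected):
                correct[field] += 1
    return {field: correct[field] / total[field] for field in total}


def regressions(before, after, tolerance=0.0):
    """Fields whose accuracy dropped by more than tolerance between two runs."""
    return sorted(f for f in before if after.get(f, 0.0) < before[f] - tolerance)


# Placeholder labels and outputs from two hypothetical prompt versions.
truth = {"doc1": {"NDA_ID": "NDA 000001"}, "doc2": {"NDA_ID": "NDA 000002"}}
run_a = {"doc1": {"NDA_ID": "nda 000001"}, "doc2": {"NDA_ID": "NDA 000002"}}
run_b = {"doc1": {"NDA_ID": "NDA 000001"}, "doc2": {"NDA_ID": "NDA 999999"}}

acc_a = field_accuracy(run_a, truth)
acc_b = field_accuracy(run_b, truth)
print(acc_a, acc_b, regressions(acc_a, acc_b))
```

Here the second prompt version breaks one of two documents, so `NDA_ID` is flagged as a regression.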

Method B: No labels? Use an LLM as a judge

But what if you’re starting from scratch and you don’t have any ground truth labels? This is a common “cold start” problem.

The Agent Bricks evaluation suite provides a powerful solution: LLM-as-a-judge. Databricks provides a suite of evaluation frameworks, and Agent Bricks leverages evaluation models to act as an unbiased evaluator. The “judge” model is presented with the original document text and a set of field prompts for each document. Its role is to generate an “expected” response and then compare it against the output produced by the agent.

LLM-as-a-judge gives you a scalable, high-quality evaluation score. It can also be used in production to ensure agents remain reliable and generalize to production variability and scale. More on this in a future blog.
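The judge flow described above (generate an expected answer per field, then compare it with the agent's output) can be sketched as follows. The judge is injected as a plain callable so the logic can be exercised without a model; `stub_judge`, the comparison rule, and the placeholder text are all assumptions for illustration and do not reflect how Agent Bricks implements its judge.

```python
import re
from typing import Callable, Dict

# A judge takes (document_text, field_prompt) and returns its expected answer.
Judge = Callable[[str, str], str]


def _same(a: str, b: str) -> bool:
    """Loose comparison: ignore case and surrounding whitespace."""
    return a.strip().lower() == b.strip().lower()


def judge_agent_output(document_text: str,
                       field_prompts: Dict[str, str],
                       agent_output: Dict[str, str],
                       judge: Judge) -> Dict[str, bool]:
    """For each field, ask the judge for an expected answer and compare."""
    verdicts = {}
    for field, prompt in field_prompts.items():
        expected = judge(document_text, prompt)
        verdicts[field] = _same(expected, str(agent_output.get(field, "")))
    return verdicts


def stub_judge(document_text: str, field_prompt: str) -> str:
    """Stand-in for a real model call; only looks for an NDA identifier."""
    match = re.search(r"NDA \d+", document_text)
    return match.group(0) if match else ""


verdicts = judge_agent_output(
    document_text="Placeholder letter text referring to NDA 000000.",
    field_prompts={"NDA_ID": "Return the NDA identifier."},
    agent_output={"NDA_ID": "nda 000000"},
    judge=stub_judge,
)
print(verdicts)  # {'NDA_ID': True}
```

In practice the stub would be replaced by a call to a hosted model, and the string comparison by a more tolerant, model-based equivalence check.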

Step 4: Integrating the Agent with ai_query() in your ETL pipeline

At this point, you have built your agent in Step 2 and verified its accuracy in Step 3, and you have the confidence to integrate extraction into your workflow. With one click, you can deploy your agent as a serverless model endpoint, instantly making your extraction logic available as a simple, scalable function.

To do this, use the ai_query() function in SQL to apply this logic when new documents arrive. The ai_query() function lets you call any model serving endpoint directly and seamlessly from your end-to-end ETL data pipeline.
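As a rough sketch of that call, the statement below applies an endpoint to a text column with ai_query(). The endpoint name, table names, and column names are hypothetical, and the source table is assumed to already contain a `path` column and a column with the parsed text. The statement is built as a Python string so the names can be parameterized.

```python
def build_extraction_statement(endpoint: str, source_table: str,
                               text_column: str, target_table: str) -> str:
    """Return a SQL statement that runs the agent endpoint over each row.

    Assumption: all arguments are trusted constants, not user input.
    """
    return f"""
CREATE OR REPLACE TABLE {target_table} AS
SELECT
  path,
  ai_query('{endpoint}', {text_column}) AS extracted
FROM {source_table}
""".strip()


# Hypothetical names, for illustration only.
extraction_sql = build_extraction_statement(
    endpoint="crl-extraction-agent",
    source_table="main.regulatory.crl_text",
    text_column="text",
    target_table="main.regulatory.crl_extracted",
)
print(extraction_sql)
```

This version rebuilds the whole table on each run; processing only newly arrived documents would need incremental logic that is not shown here.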

With this, Databricks Lakeflow Jobs gives you a fully automated, production-grade ETL pipeline. Your Databricks job takes the raw PDFs landing in your cloud storage, parses them, extracts structured insights using your high-quality agent, and writes them to a table ready for analysis, reporting, or retrieval by downstream agent applications.

Databricks is the next-generation AI platform, breaking down the walls between deep technical teams and the domain experts who have the context needed to create meaningful AI. Success with AI isn’t just models or infrastructure; it’s a tight, iterative collaboration between engineers and SMEs, where each refines the other’s thinking. Databricks gives teams a single environment to co-develop, experiment rapidly, govern responsibly, and put the science back into data science.

Agent Bricks epitomizes this approach. With ai_parse_document() to parse unstructured content, the collaborative design interface of Agent Bricks: Information Extraction to accelerate high-quality extraction, and ai_query() to apply the solution in production-grade pipelines, teams can move from millions of messy PDFs to validated insights faster than ever before.

In our next blog, we’ll show how to take these extracted insights and build a production-grade chat agent capable of answering natural-language questions like: “What are the most common manufacturing readiness issues for oncology drugs?”