Event data from IoT, clickstream, and application telemetry powers critical real-time analytics and AI when combined with the Databricks Data Intelligence Platform. Traditionally, ingesting this data required multiple data hops (a message bus, Spark jobs) between the data source and the lakehouse. These hops add operational overhead, duplicate data, require specialized expertise, and are generally inefficient when the lakehouse is the only destination for the data.

Once this data reaches the lakehouse, it is transformed and curated for downstream analytical use cases. However, teams also need to serve this analytical data to operational use cases, and building those custom applications can be laborious. Teams must provision and maintain essential infrastructure, such as a dedicated OLTP database instance (with networking, monitoring, backups, and more). They also have to manage a reverse ETL process that loads the analytical data into that database so a real-time application can serve it. In practice, customers often build additional pipelines to push data from the lakehouse into these external operational databases. Those pipelines are more infrastructure for developers to set up and maintain, pulling their focus away from the main goal: building applications for their business.

So how does Databricks simplify both ingesting event data into the lakehouse and serving gold data to operational workloads?

Enter Zerobus Ingest and Lakebase.

About Zerobus Ingest

Zerobus Ingest, part of Lakeflow Connect, is a set of APIs that provides a streamlined way to push event data directly into the lakehouse. By removing the single-sink message bus layer entirely, Zerobus Ingest reduces infrastructure, simplifies operations, and delivers near real-time ingestion at scale, making it easier than ever to unlock the value of your data.

The data-producing application must specify a target table to write data to, ensure that messages map correctly to the table’s schema, and then initiate a stream to send the data to Databricks. On the Databricks side, the API validates the message and the table’s schema, writes the data to the target table, and sends an acknowledgment to the client that the data has been persisted.
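To make the client’s side of this contract concrete, here is a minimal, SDK-free sketch of the “ensure that messages map correctly to the table’s schema” step. `TARGET_SCHEMA` and `validate_record` are hypothetical names, and the column set is an assumed driver-telemetry schema, not one defined by Zerobus.

```python
from typing import Any, Dict, List

# Hypothetical column -> Python type mapping standing in for the target table's schema.
TARGET_SCHEMA: Dict[str, type] = {
    "driver_id": str,
    "order_id": str,
    "latitude": float,
    "longitude": float,
    "event_ts": int,  # epoch milliseconds
}


def validate_record(record: Dict[str, Any], schema: Dict[str, type] = TARGET_SCHEMA) -> List[str]:
    """Return a list of problems; an empty list means the record maps cleanly onto the schema."""
    problems: List[str] = []
    for column, expected in schema.items():
        if column not in record:
            problems.append(f"missing column: {column}")
        elif not isinstance(record[column], expected):
            problems.append(
                f"{column}: expected {expected.__name__}, got {type(record[column]).__name__}"
            )
    # Columns the table does not have would be rejected server-side, so flag them early.
    for column in sorted(record.keys() - schema.keys()):
        problems.append(f"unexpected column: {column}")
    return problems
```

Checking records on the producer side is only a convenience: per the description above, the server still validates each message against the table’s schema before writing it.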

Key benefits of Zerobus Ingest:

- Streamlined architecture: Eliminates complex multi-hop workflows and data duplication.

- Performance at scale: Supports near real-time ingestion (within 5 seconds) and allows thousands of clients to write to the same table (up to 100 MB/sec throughput per client).

- Integration with the Data Intelligence Platform: Accelerates time to value by letting teams apply analytics and AI tools, such as MLflow for fraud detection, directly to their data.

| Capability | Specification |
|---|---|
| Ingestion latency | Near real-time (≤5 seconds) |
| Maximum throughput per client | Up to 100 MB/sec |
| Concurrent clients | Thousands per table |
| Continuous sync interval (Delta → Lakebase) | 10-15 seconds |
| Real-time mode ForeachWriter latency | 200-300 milliseconds |

About Lakebase

Lakebase is a fully managed, serverless, scalable Postgres database built into the Databricks platform, designed for low-latency operational and transactional workloads that run directly on the same data powering analytical and AI use cases.

Full separation of compute and storage enables fast provisioning and elastic autoscaling. Lakebase’s integration with the Databricks platform is a key differentiator from traditional databases: it makes lakehouse data directly available to both real-time applications and AI without complex custom data pipelines. It is designed to meet the database creation, query latency, and concurrency requirements of enterprise applications and agentic workloads. Finally, it lets developers version and branch databases just like code.

Key benefits of Lakebase:

- Automatic data synchronization: Easily sync data from the lakehouse (analytical layer) to Lakebase on a snapshot, scheduled, or continuous basis, without complex external pipelines.

- Integration with the Databricks platform: Lakebase integrates with Unity Catalog, Lakeflow Connect, Spark Declarative Pipelines, Databricks Apps, and more.

- Unified permissions and governance: Consistent role and permission management for operational and analytical data. Native Postgres permissions can still be maintained through the Postgres protocol.

Together, these tools let customers ingest data from multiple systems directly into Delta tables and implement reverse ETL use cases at scale. Next, we’ll explore how to use them to build a real-time application!

How to build near-real-time applications

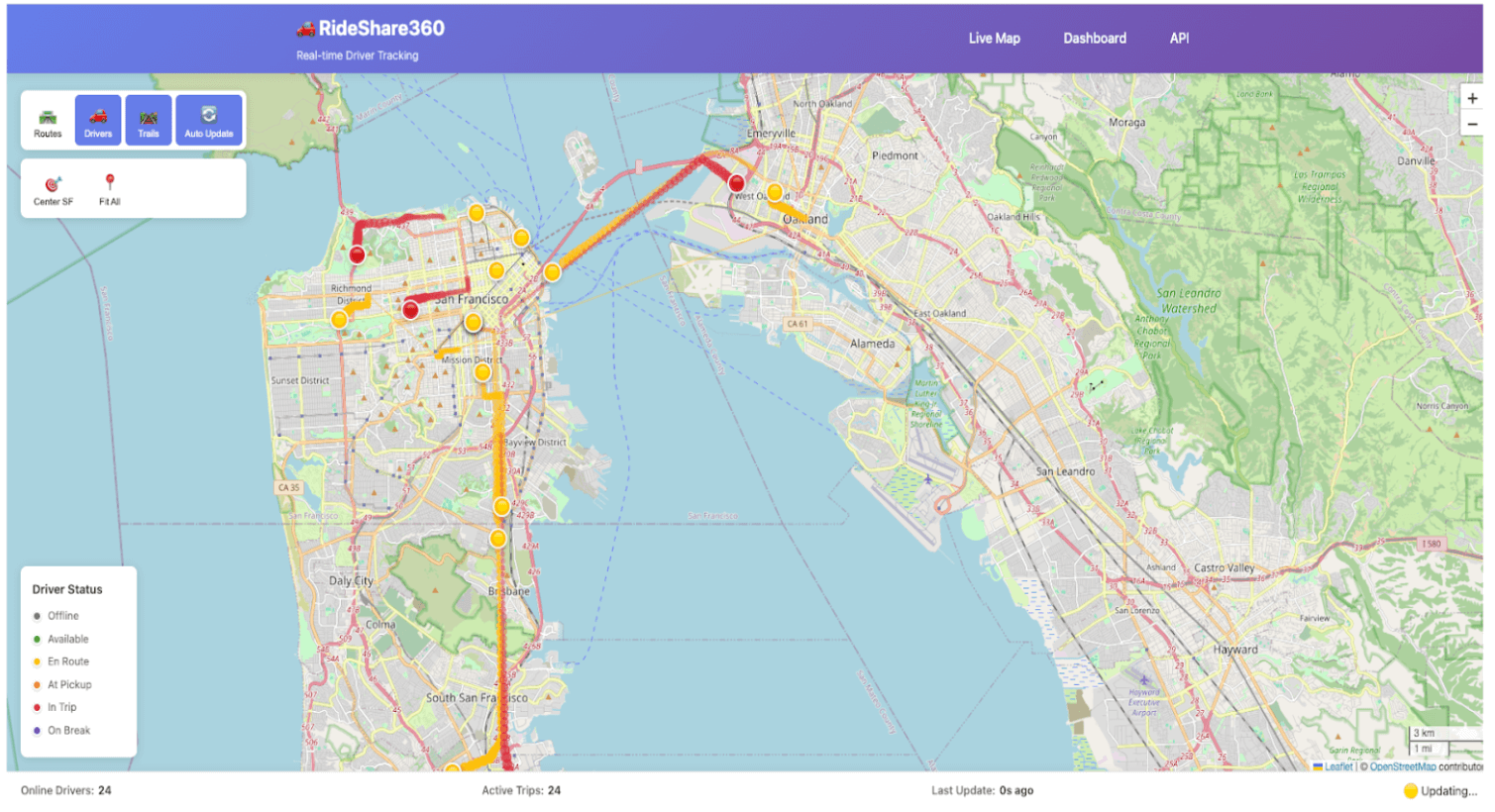

As a practical example, let’s help ‘Data Diners’, a food delivery company, give its management staff an application to monitor driver activity and order deliveries in real time. Today they lack this visibility, which limits their ability to mitigate problems that arise during delivery.

Why is a real-time application valuable?

- Operational awareness: Management can instantly see where each driver is and how their current delivery is progressing, which means fewer blind spots when an order is running late or a driver needs assistance.

- Issue mitigation: Live location and status data lets dispatchers reroute drivers, adjust priorities, or proactively contact customers when delays occur, reducing failed or late deliveries.

Let’s see how to build it with Zerobus Ingest, Lakebase, and Databricks Apps on the Data Intelligence Platform!

Overview of Application Architecture

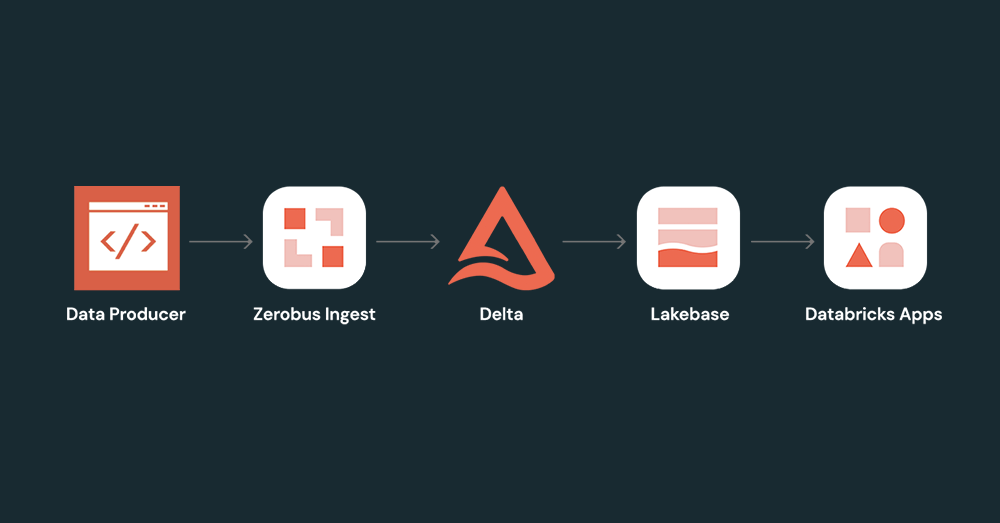

This end-to-end architecture follows four steps: (1) A data producer uses the Zerobus SDK to write events directly to a Delta table in Databricks Unity Catalog. (2) A continuous sync pipeline pushes updated records from the Delta table to the Lakebase Postgres instance. (3) A FastAPI backend reads from Lakebase and streams real-time updates to clients over WebSockets. (4) A front-end application built on Databricks Apps visualizes the live data for end users.

Starting with our data producer, the Data Diners app on the driver’s phone emits GPS telemetry about the driver’s location (latitude and longitude coordinates) while en route to deliver an order. This data is sent to an API gateway, which passes it on to the next service in the ingestion architecture.
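A sketch of what the gateway might do with each incoming payload before forwarding it: parse the phone’s JSON into a typed event and reject impossible coordinates. The field names (`driver_id`, `order_id`, `latitude`, `longitude`, `event_ts`) and the `DriverLocationEvent` class are hypothetical, chosen only for illustration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DriverLocationEvent:
    """Hypothetical GPS telemetry event emitted by the driver's phone."""
    driver_id: str
    order_id: str
    latitude: float
    longitude: float
    event_ts: int  # epoch milliseconds

    def __post_init__(self) -> None:
        # Reject coordinates outside the valid latitude/longitude ranges.
        if not -90.0 <= self.latitude <= 90.0:
            raise ValueError(f"latitude out of range: {self.latitude}")
        if not -180.0 <= self.longitude <= 180.0:
            raise ValueError(f"longitude out of range: {self.longitude}")

    @classmethod
    def from_json(cls, payload: str) -> "DriverLocationEvent":
        """Parse a raw JSON payload, coercing each field to its declared type."""
        data = json.loads(payload)
        return cls(
            driver_id=str(data["driver_id"]),
            order_id=str(data["order_id"]),
            latitude=float(data["latitude"]),
            longitude=float(data["longitude"]),
            event_ts=int(data["event_ts"]),
        )

    def to_record(self) -> dict:
        """Plain dict whose keys match the (assumed) target table columns."""
        return asdict(self)
```

Normalizing types at the gateway means the record handed to the ingestion client already matches the table’s column types.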

With the Zerobus SDK, we can quickly write a client to forward events from the API gateway to our target table. With the target table updating in real time, we can create a continuous sync pipeline to update our Lakebase tables. Finally, by leveraging Databricks Apps, we can deploy a FastAPI backend that uses WebSockets to stream real-time updates from Postgres, along with a front-end application to view the live data flow.

Before the Zerobus SDK was introduced, streaming architectures involved multiple hops before data landed in the target table. Our API gateway would have needed to offload the data to a staging area such as Kafka, and we would have needed Spark Structured Streaming to write the records to the target table. All of this adds unnecessary complexity, especially when the lakehouse is the only destination. Instead, the architecture above shows how the Databricks Data Intelligence Platform simplifies end-to-end enterprise application development, from data ingestion to real-time analytics and deployment of interactive applications.

Getting started

Prerequisites: What you need

Step 1: Create a target table in Databricks Unity Catalog

Event data produced by the client application will reside in a Delta table. Use the code below to create that target table in your desired catalog and schema.
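A minimal sketch of such a statement, assuming a hypothetical driver-telemetry schema (`driver_id`, `order_id`, `latitude`, `longitude`, `event_ts`); the catalog, schema, and table names are placeholders you would replace with your own.

```python
def create_target_table_sql(catalog: str, schema: str, table: str) -> str:
    """Build a CREATE TABLE statement for a hypothetical driver-telemetry Delta table."""
    # Conservative identifier check (letters, digits, underscores) to avoid SQL injection.
    for part in (catalog, schema, table):
        if not part.replace("_", "").isalnum():
            raise ValueError(f"unsafe identifier: {part!r}")
    full_name = f"{catalog}.{schema}.{table}"
    return (
        f"CREATE TABLE IF NOT EXISTS {full_name} (\n"
        "  driver_id STRING,\n"
        "  order_id STRING,\n"
        "  latitude DOUBLE,\n"
        "  longitude DOUBLE,\n"
        "  event_ts BIGINT\n"
        ") USING DELTA"
    )
```

In a Databricks notebook you would run it with `spark.sql(create_target_table_sql("main", "data_diners", "driver_telemetry"))`, where all three names are examples.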

Step 2: Authenticate using OAuth
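Databricks supports OAuth machine-to-machine (M2M) authentication for service principals through the client-credentials grant against the workspace’s `/oidc/v1/token` endpoint. The sketch below only builds that token request with the standard library; the workspace URL and credentials are placeholders, and whether your Zerobus client expects you to fetch the token yourself (rather than passing the client ID and secret to the SDK) is an assumption to check against the Zerobus documentation.

```python
import base64
import urllib.parse
import urllib.request


def build_m2m_token_request(workspace_url: str, client_id: str, client_secret: str) -> urllib.request.Request:
    """Build (but do not send) an OAuth client-credentials token request for a service principal."""
    token_url = workspace_url.rstrip("/") + "/oidc/v1/token"
    body = urllib.parse.urlencode({"grant_type": "client_credentials", "scope": "all-apis"}).encode()
    # The service principal's client ID and secret go in a standard HTTP Basic auth header.
    credentials = base64.b64encode(f"{client_id}:{client_secret}".encode()).decode()
    return urllib.request.Request(
        token_url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {credentials}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
    )
```

Sending it with `urllib.request.urlopen(req)` returns a JSON body whose `access_token` field is the bearer token; keep the secret in a secret store rather than in code.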

Step 3: Create a Zerobus client and insert data into the target table

The code below pushes telemetry event data to Databricks using the Zerobus API.
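The sketch below does not use the Zerobus SDK itself; instead it models the client-side pattern described earlier: send each record on a stream, then keep it buffered until the server acknowledges that it has been persisted, so anything unacknowledged can be resent after a failure. `IngestStream`, `send`, `acked_up_to`, and `Forwarder` are hypothetical stand-ins, and the cumulative-offset acknowledgment model is an assumption.

```python
from typing import Any, Dict, Iterable, List, Protocol

Record = Dict[str, Any]


class IngestStream(Protocol):
    """Hypothetical stand-in for an ingestion stream; NOT the real Zerobus SDK interface."""

    def send(self, record: Record) -> int: ...  # returns the offset assigned to the record

    def acked_up_to(self) -> int: ...  # highest offset the server has confirmed as persisted


class Forwarder:
    """Forwards gateway events and keeps each one buffered until it is acknowledged."""

    def __init__(self, stream: IngestStream) -> None:
        self.stream = stream
        self.unacked: Dict[int, Record] = {}  # insertion-ordered: offset -> record

    def forward(self, events: Iterable[Record]) -> None:
        for event in events:
            offset = self.stream.send(event)
            self.unacked[offset] = event

    def reconcile(self) -> List[Record]:
        """Drop acknowledged events; return those still pending (candidates for a resend)."""
        persisted = self.stream.acked_up_to()
        for offset in [o for o in self.unacked if o <= persisted]:
            del self.unacked[offset]
        return list(self.unacked.values())
```

Buffering until acknowledgment gives at-least-once delivery from the gateway’s point of view; a resend after a failure can therefore produce duplicates, which is one reason a primary key matters downstream.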

Change data feed (CDF)

At the time of writing, Zerobus Ingest does not support CDF. CDF allows Databricks to record change events for data written to a Delta table; these change events can be inserts, deletes, or updates, and they are what keeps synced tables in Lakebase up to date. To sync the data to Lakebase and continue our project, we will copy the data from the target table into a new table and enable CDF on that new table.
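One way to do this, sketched under assumptions: create an empty copy of the target table with `delta.enableChangeDataFeed = true` set at creation, then continuously append new rows into it with Structured Streaming. The table names and checkpoint path are placeholders, and `spark` is the session a Databricks notebook provides.

```python
from typing import Any


def create_cdf_copy_sql(source: str, target: str) -> str:
    """Empty copy of `source` (same columns) with the Delta change data feed enabled."""
    return (
        f"CREATE TABLE IF NOT EXISTS {target} "
        "TBLPROPERTIES (delta.enableChangeDataFeed = true) "
        f"AS SELECT * FROM {source} LIMIT 0"
    )


def start_cdf_copy(spark: Any, source: str, target: str, checkpoint: str) -> Any:
    """Stream every row from the Zerobus target table into the CDF-enabled copy."""
    spark.sql(create_cdf_copy_sql(source, target))
    return (
        spark.readStream.table(source)  # existing rows first, then new appends
        .writeStream.option("checkpointLocation", checkpoint)
        .outputMode("append")
        .toTable(target)  # starts the query and returns a StreamingQuery
    )
```

Creating the table before the stream starts ensures CDF is on from the very first commit, so the sync pipeline can see every insert.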

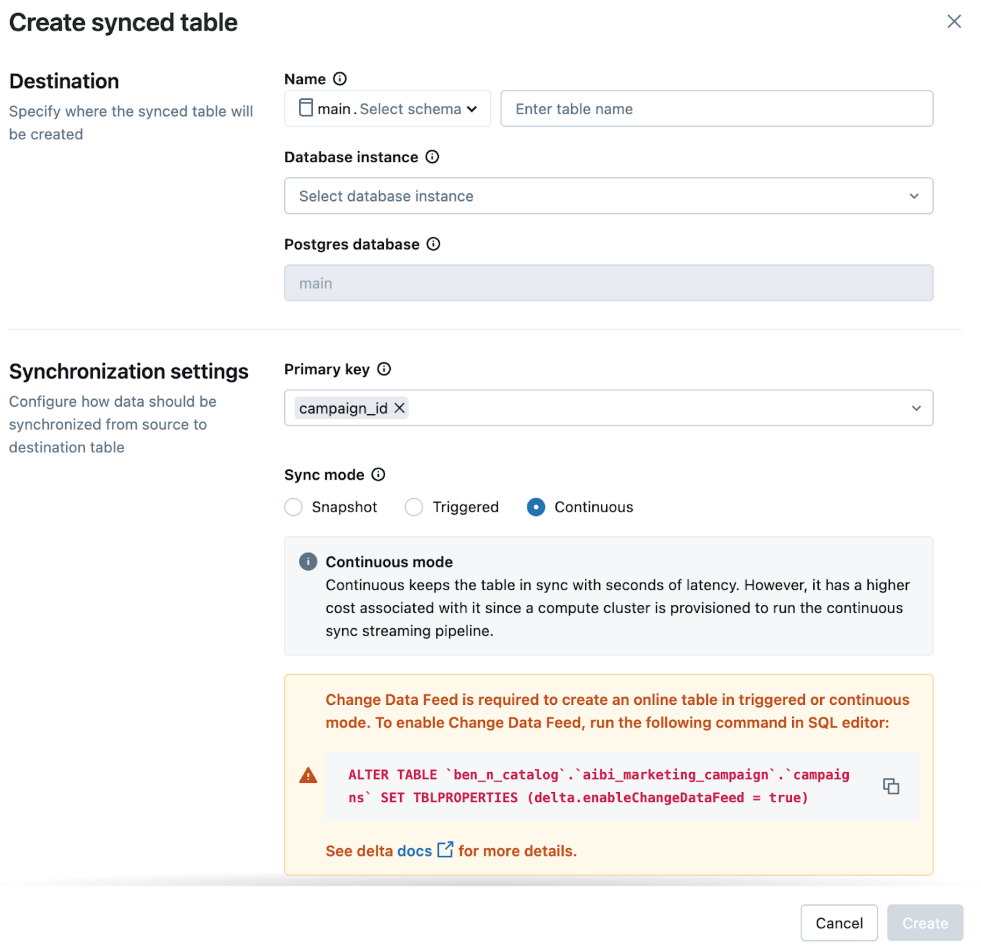

Step 4: Provision Lakebase and sync data to the database instance

To power the app, we’ll sync data from this new, CDF-enabled table to a Lakebase instance. We’ll sync the table continuously to support our real-time dashboard.

In the UI, we choose:

- Sync mode: Continuous, for low-latency updates

- Primary key: table_primary_key

This ensures that the app displays the latest data with minimal delay.
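The choices above boil down to a few rules that are easy to check before creating the pipeline. This local sketch encodes them; `SyncedTableSpec` and `check_spec` are hypothetical and are not Databricks SDK types, and treating the scheduled mode as CDF-dependent (like continuous) is an assumption.

```python
from dataclasses import dataclass
from typing import List, Tuple

# The three sync modes named earlier: snapshot, scheduled, and continuous.
SYNC_MODES = ("SNAPSHOT", "SCHEDULED", "CONTINUOUS")


@dataclass(frozen=True)
class SyncedTableSpec:
    """Hypothetical local description of a Delta -> Lakebase sync (not an SDK type)."""
    source_table: str
    target_table: str
    primary_key: Tuple[str, ...]
    mode: str = "CONTINUOUS"


def check_spec(spec: SyncedTableSpec, source_has_cdf: bool) -> List[str]:
    """Return human-readable problems with the spec; an empty list means it looks valid."""
    problems: List[str] = []
    if spec.mode not in SYNC_MODES:
        problems.append(f"unknown sync mode: {spec.mode}")
    if not spec.primary_key:
        problems.append("a primary key is required so rows can be upserted")
    # Incremental modes replay change events, so they need CDF on the source table.
    if spec.mode != "SNAPSHOT" and not source_has_cdf:
        problems.append("incremental sync needs change data feed on the source table")
    return problems
```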

Note: You can also create sync pipelines programmatically using the Databricks SDK.

Real-time mode via ForeachWriter

Continuous sync from Delta to Lakebase has a lag of 10-15 seconds, so if you need lower latency, consider using real-time mode with a ForeachWriter to write data directly from the DataFrame to the Lakebase table. This syncs the data within a few hundred milliseconds (200-300 ms).

Refer to the Lakebase ForeachWriter code on GitHub.
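As a hedged illustration of the ForeachWriter pattern (not the GitHub code itself), here is an object with the `open`/`process`/`close` methods that PySpark’s `DataStreamWriter.foreach` accepts, upserting each row with Postgres `INSERT ... ON CONFLICT`. The table, columns, and key are hypothetical, and the connection factory is injected so any DB-API driver works.

```python
from typing import Any, Callable, Optional, Sequence


class LakebaseUpsertWriter:
    """ForeachWriter-style sink that upserts each streaming row into a Postgres table."""

    def __init__(self, connect: Callable[[], Any], table: str, columns: Sequence[str],
                 key: str, placeholder: str = "%s") -> None:
        self.connect = connect          # factory returning a DB-API connection
        self.table = table
        self.columns = list(columns)
        self.key = key                  # needs a primary key or unique constraint
        self.placeholder = placeholder  # "%s" for psycopg, "?" for sqlite3
        self.conn: Optional[Any] = None

    def upsert_sql(self) -> str:
        cols = ", ".join(self.columns)
        params = ", ".join([self.placeholder] * len(self.columns))
        updates = ", ".join(f"{c} = EXCLUDED.{c}" for c in self.columns if c != self.key)
        action = f"DO UPDATE SET {updates}" if updates else "DO NOTHING"
        return (f"INSERT INTO {self.table} ({cols}) VALUES ({params}) "
                f"ON CONFLICT ({self.key}) {action}")

    def open(self, partition_id: int, epoch_id: int) -> bool:
        # One connection per partition; returning True tells Spark to call process().
        self.conn = self.connect()
        return True

    def process(self, row: Any) -> None:
        # Spark passes a Row; plain dicts are accepted too, which keeps local testing simple.
        record = row.asDict() if hasattr(row, "asDict") else dict(row)
        cur = self.conn.cursor()
        cur.execute(self.upsert_sql(), [record[c] for c in self.columns])
        cur.close()
        # Commit per row so each update is visible immediately (lowest latency, more round trips).
        self.conn.commit()

    def close(self, error: Optional[BaseException]) -> None:
        if self.conn is not None:
            self.conn.close()
            self.conn = None
```

It could be attached with `df.writeStream.foreach(LakebaseUpsertWriter(lambda: psycopg.connect(conninfo), "driver_locations", ["driver_id", "latitude", "longitude"], key="driver_id")).start()`, where `conninfo` is a placeholder; configuring the real-time mode trigger itself is not shown. Committing per row favors latency; batching commits would favor throughput.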

Step 5: Build the app with FastAPI or another framework of choice

With your data synced to Lakebase, you can now deploy your code to build your app. In this example, the app reads event data from Lakebase and uses it to update the application in real time, tracking driver activity during food deliveries. Read the Get started with Databricks Apps docs to learn more about building apps on Databricks.
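One simple way for the backend to feed a WebSocket is to poll Lakebase for rows newer than the last timestamp it has seen. The sketch below assumes that polling approach, a hypothetical `event_ts` column, and an injected async `fetch_since` function (for example, one running `SELECT ... WHERE event_ts > %s ORDER BY event_ts` through an async Postgres driver).

```python
import asyncio
from typing import Any, AsyncIterator, Awaitable, Callable, Dict, List, Optional

Row = Dict[str, Any]


async def poll_updates(
    fetch_since: Callable[[int], Awaitable[List[Row]]],
    interval_s: float = 0.5,
    start_ts: int = 0,
    max_polls: Optional[int] = None,
) -> AsyncIterator[Row]:
    """Yield rows newer than the last seen event_ts, polling every interval_s seconds."""
    watermark = start_ts
    polls = 0
    while max_polls is None or polls < max_polls:
        rows = await fetch_since(watermark)
        for row in rows:
            # Advance the watermark so the next query only returns newer rows.
            watermark = max(watermark, row["event_ts"])
            yield row
        polls += 1
        await asyncio.sleep(interval_s)
```

In a FastAPI app, a `@app.websocket("/ws/drivers")` handler could `await websocket.accept()` and then `async for row in poll_updates(fetch_since): await websocket.send_json(row)`. A strict `>` watermark can skip rows that share a timestamp but arrive later, so a real implementation may need a tie-breaker column.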

Additional resources

Check out more tutorials, demos, and solution accelerators to build your own apps for your specific needs.

- Build an end-to-end application: A real-time sailing simulator tracks a fleet of sailboats using the Python SDK and REST API with Databricks Apps and Databricks Asset Bundles. Read the blog.

- Create a Digital Twins Solution: Learn how to maximize operational efficiency, gain real-time insight, and accelerate predictive maintenance with Databricks Apps and Lakebase. Read the blog.

Learn more about Zerobus Ingest, Lakebase, and Databricks Apps in the technical documentation. You can also explore the Databricks Apps Cookbook and the Cookbook resource collection.

Conclusion

IoT, clickstream, telemetry, and similar applications generate billions of data points every day, powering critical real-time applications across many industries. Simplifying ingestion from these systems is therefore paramount. Zerobus Ingest provides a streamlined way to push event data from these systems directly into the lakehouse while maintaining high performance, and it pairs well with Lakebase to simplify end-to-end enterprise application development.