Picture this: Your enterprise has just deployed its first generative AI application. Initial results are promising, but as you plan to expand into different departments, important questions arise. How do you enforce consistent security, prevent model bias, and maintain control as AI applications grow?

You are not alone in asking these questions. A McKinsey survey of more than 750 leaders in 38 countries reveals both challenges and opportunities when crafting governance strategies. While organizations are devoting significant resources – most plan to invest more than $1 million in responsible AI – barriers to implementation remain. Lack of knowledge represents the primary barrier for more than 50% of respondents, while 40% cite regulatory uncertainty.

Yet companies with established responsible AI programs report substantial benefits: 42% have seen improvements in business efficiency, while 34% have experienced an increase in consumer trust. These results illustrate why strong risk management is fundamental to realizing the full potential of AI.

Responsible AI: A non-negotiable issue from day one

At the AWS Generative AI Innovation Center, we’ve seen that the organizations that achieve the strongest results embed governance into their DNA from the start. This is consistent with AWS’s commitment to responsible AI development, evidenced by the recent launch of our AWS Well-Architected Responsible AI Lens, a comprehensive framework for implementing responsible practices across the entire development lifecycle.

The Innovation Center has consistently applied these principles through a responsible-by-design philosophy, carefully studying use cases and following science-backed guidance. This approach led us to the AI Risk Intelligence (AIRI) solution, which turns these best practices into actionable, automated governance controls, making responsible AI implementation both attainable and scalable.

Four tips for responsible and safe generative AI deployment

Drawing from our experience in helping over a thousand organizations across industries and geographies, here are key strategies for integrating strong governance and security controls into the development, review, and deployment of AI applications through an automated and seamless process.

1 – Adopt a governance-by-design mindset

At the Innovation Center, we work daily with organizations at the forefront of generative and agentic AI adoption. We’ve seen a consistent pattern: While the promise of generative AI attracts business leaders, they often struggle to navigate a path toward responsible and safe implementation. Organizations achieving the most impactful results establish a governance-by-design mindset from the start – treating AI risk management and responsible AI considerations as foundational elements rather than compliance checkboxes. This approach transforms governance from a perceived barrier into an enabler of rapid innovation while maintaining appropriate controls. By incorporating governance into the development process, these organizations can scale their AI initiatives more confidently and safely.

2 – Align technology, business and governance

The primary mission of the Innovation Center is to help customers develop and deploy AI solutions that meet business needs while using the most suitable AWS services. However, technological exploration must go hand in hand with governance planning. Think of it like conducting an orchestra – you wouldn’t coordinate a symphony without understanding how each instrument works and how they harmonize together. Similarly, effective AI governance requires a deep understanding of the underlying technology before controls can be imposed. We help organizations establish clear connections between technology capabilities, business objectives and governance requirements from the start, ensuring that these three elements work together.

3 – Embed security as a governance gateway

After establishing a governance-by-design mindset and aligning business, technology and governance objectives, the next critical step is implementation. We have found that security serves as the most effective entry point for driving comprehensive AI governance. Security not only provides critical protections but also supports responsible innovation by building trust in the foundation of AI systems. The approach used by the Innovation Center emphasizes security-by-design throughout the implementation journey, from protecting infrastructure to sophisticated threat detection in complex workflows.

To support this approach, we help customers use capabilities like AWS Security Agent, which automates security verification throughout the development lifecycle. This frontier agent performs customized security reviews and penetration testing based on centrally defined standards, helping organizations scale their security expertise to match the pace of development.

This security-first approach establishes a comprehensive set of governance controls. The AWS Responsible AI Framework combines fairness, explainability, privacy and security, safety, controllability, veracity and robustness, governance and transparency into one cohesive approach. As AI systems become deeply integrated into business processes and autonomous decision making, automating these controls while maintaining rigorous oversight becomes critical to scaling successfully.

4 – Automate governance at enterprise scale

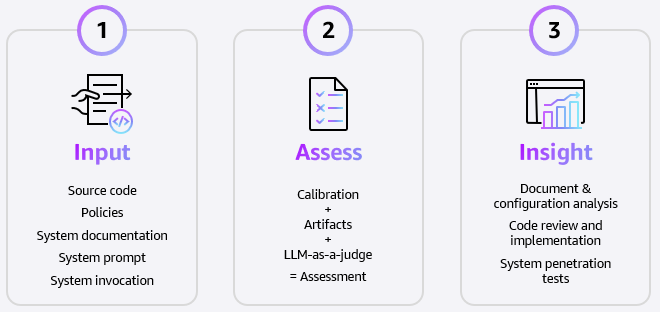

With the foundational elements in place (mindset, alignment, and security controls), organizations need a way to systematically scale their governance efforts. This is where the AIRI solution comes in. Rather than creating new processes, it operationalizes the principles and controls we have discussed through automation in a phased approach.

The solution’s architecture integrates seamlessly with existing workflows through a three-step process: user input, automated assessment, and actionable insights. It analyzes everything from source code to system documentation, using advanced technologies such as automated document processing and LLM-based assessments to conduct comprehensive risk assessments. Most importantly, it dynamically tests generative AI systems, checking for semantic consistency and potential vulnerabilities while adapting to each organization’s specific needs and industry standards.
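To make the three-step flow more concrete, here is a minimal Python sketch of what input, automated assessment, and actionable insights could look like for a single consistency check. This is not the AIRI implementation: every name in it (`Finding`, `consistency_check`, `report`) and the 0.5 threshold are hypothetical, and plain word overlap stands in as a crude proxy for semantic similarity, where a real assessment would more likely use embeddings or an LLM-based judge.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import Callable, List


@dataclass
class Finding:
    """One assessment result (hypothetical structure for this sketch)."""
    check: str
    score: float  # 0.0 (worst) to 1.0 (best)
    passed: bool


def jaccard(a: str, b: str) -> float:
    """Word-overlap similarity; a crude stand-in for semantic similarity."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def consistency_check(model: Callable[[str], str], prompts: List[str],
                      threshold: float = 0.5) -> Finding:
    """Step 2: send paraphrased prompts and compare the answers pairwise."""
    answers = [model(p) for p in prompts]
    pairs = combinations(answers, 2)
    # The least similar pair of answers determines the score.
    score = min((jaccard(a, b) for a, b in pairs), default=1.0)
    return Finding("semantic_consistency", score, score >= threshold)


def report(findings: List[Finding]) -> List[str]:
    """Step 3: summarize findings as short lines, failures first."""
    ordered = sorted(findings, key=lambda f: (f.passed, f.score))
    return [
        f"{'PASS' if f.passed else 'FAIL'} {f.check} score={f.score:.2f}"
        for f in ordered
    ]


if __name__ == "__main__":
    # Step 1: user input. The model below is a toy stand-in for the
    # generative AI system under test.
    def toy_model(prompt: str) -> str:
        return "The policy document is reviewed every quarter"

    prompts = [
        "How often is the policy reviewed?",
        "What is the review cadence for the policy?",
    ]
    for line in report([consistency_check(toy_model, prompts)]):
        print(line)  # PASS semantic_consistency score=1.00
```

Taking the minimum pairwise score is a deliberately conservative choice: one inconsistent answer is enough to flag the system for review, which suits a governance gate better than an average that could hide outliers.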

From theory to practice

The true measure of effective AI governance is how it evolves with an organization while maintaining rigorous standards at scale. When successfully implemented, automated governance enables teams to focus on innovation, confident that their AI systems operate within appropriate guardrails. A striking example comes from our collaboration with Ryanair, Europe’s largest airline group. As it grows toward 300 million passengers by 2034, Ryanair needed responsible AI governance for its cabin crew application, which provides critical operational information to frontline staff. Using Amazon Bedrock, the Innovation Center conducted an AI-powered assessment. The assessment established transparent, data-driven risk management where risks were previously difficult to measure – creating a model for responsible AI governance that Ryanair can now extend across its AI portfolio.

This implementation demonstrates the broader impact of systematic AI governance. Organizations using this framework consistently report accelerated paths to production, less manual work, and stronger risk management capabilities. Most importantly, they achieve strong cross-functional alignment from technology to legal to security teams – all working toward clear, measurable objectives.

A foundation for innovation

Responsible AI governance is not a barrier – it is a catalyst. By incorporating governance into the framework of AI development, organizations can innovate with confidence, knowing they have the controls in place to scale safely and responsibly. The above example demonstrates how automated governance transforms theoretical frameworks into practical solutions that drive business value while maintaining trust.

Learn more about the AWS Generative AI Innovation Center and how we are helping organizations of different sizes apply responsible AI to meet their business objectives.

About the authors

Segoline Desartine-Panhard is the global technical lead for Responsible AI and AI Governance initiatives at the AWS Generative AI Innovation Center. In this role, she supports AWS customers in enhancing their generative AI strategies by implementing strong governance processes and effective AI and cybersecurity risk management systems, leveraging AWS capabilities and cutting-edge scientific models. Before joining AWS in 2018, she was a tenured professor of finance at New York University’s Tandon School of Engineering. She also worked as an independent consultant in financial disputes and regulatory investigations for several years. She holds a Ph.D. from Paris Sorbonne University.

Sri Elaprolu serves as the Director of the AWS Generative AI Innovation Center, where he leverages nearly three decades of technology leadership experience to drive artificial intelligence and machine learning innovation. In this role, he leads a global team of machine learning scientists and engineers who develop and deploy advanced generative and agentic AI solutions for enterprise and government organizations facing complex business challenges. During his nearly 13-year tenure at AWS, Sri has held progressively senior positions, including leading ML science teams that have partnered with high-profile organizations such as the NFL, Cerner, and NASA. These collaborations enable AWS customers to use AI and ML technologies for transformational business and operational outcomes. Before joining AWS, he spent 14 years at Northrop Grumman, where he successfully managed product development and software engineering teams. Sri holds a Master’s degree in Engineering Science and an MBA with a concentration in General Management, providing him with both the technical depth and business acumen necessary for his current leadership role.

Randy Larson connects AI innovation with executive strategy for the AWS Generative AI Innovation Center, shaping how organizations understand and translate technological breakthroughs into business value. He hosts the Innovation Center’s podcast series and combines strategic storytelling with data-driven insights through global keynotes and executive interviews on AI transformation. Before Amazon, Randy refined his analytical precision as a Bloomberg journalist and advisor to economic institutions, think tanks, and family offices on financial technology initiatives. Randy holds an MBA from Duke University’s Fuqua School of Business and a BS in Journalism and Spanish from Boston University.
