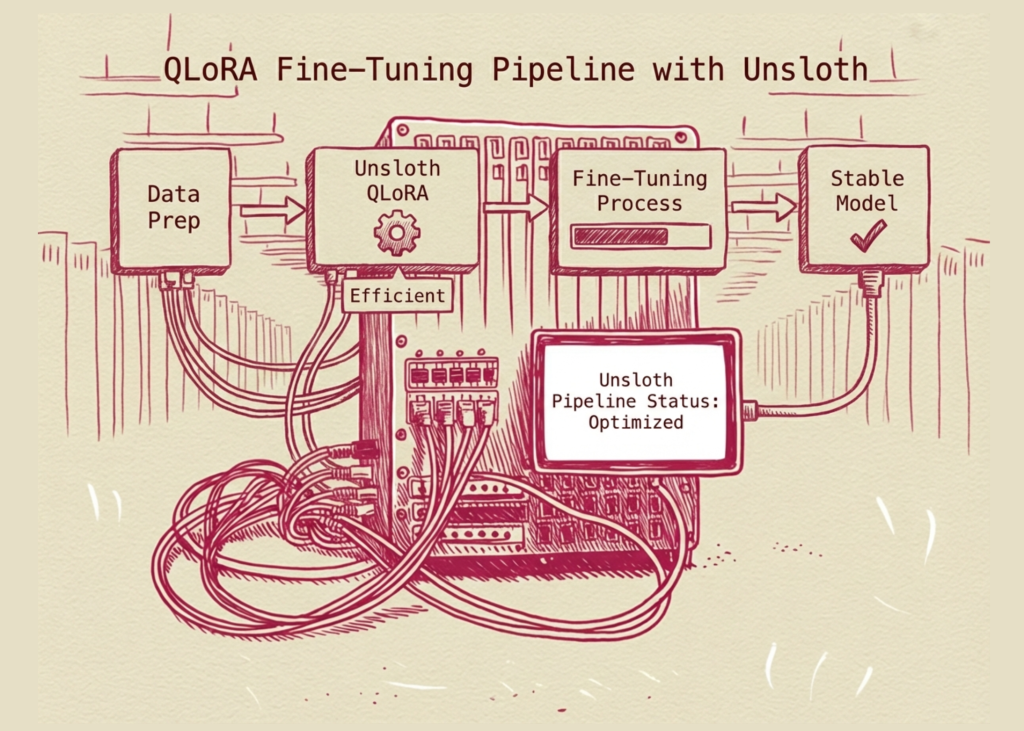

In this tutorial, we demonstrate how to efficiently fine-tune a large language model using Unsloth and QLoRA. We focus on building a stable, end-to-end supervised fine-tuning pipeline that handles common Colab issues such as GPU detection failures, runtime crashes, and library incompatibilities. By carefully controlling the environment, model configuration, and training loop, we show how to reliably train an instruction-tuned model with limited resources while maintaining strong performance and fast iteration speed.

import os, sys, subprocess, gc, locale

# Make Python report UTF-8 as the preferred encoding (works around a common Colab locale issue).
locale.getpreferredencoding = lambda: "UTF-8"

def run(cmd):
    """Run a shell command, stream its output, and raise on a non-zero exit code."""
    print("\n$ " + cmd, flush=True)
    p = subprocess.Popen(cmd, shell=True, stdout=subprocess.PIPE, stderr=subprocess.STDOUT, text=True)
    for line in p.stdout:
        print(line, end="", flush=True)
    rc = p.wait()
    if rc != 0:
        raise RuntimeError(f"Command failed ({rc}): {cmd}")

print("Installing packages (this may take 2–3 minutes)...", flush=True)
run("pip install -U pip")
run("pip uninstall -y torch torchvision torchaudio")

# Reinstall a PyTorch build that matches the CUDA 12.1 wheels.
run(
    "pip install --no-cache-dir "
    "torch==2.4.1 torchvision==0.19.1 torchaudio==2.4.1 "
    "--index-url https://download.pytorch.org/whl/cu121"
)

# Pin the training stack to versions known to work together.
run(
    "pip install -U "
    "transformers==4.45.2 "
    "accelerate==0.34.2 "
    "datasets==2.21.0 "
    "trl==0.11.4 "
    "sentencepiece safetensors evaluate"
)
run("pip install -U unsloth")

import torch

# If Unsloth cannot be imported in this session, the runtime must be restarted.
try:
    import unsloth
    restarted = False
except Exception:
    restarted = True

if restarted:
    print("\nRuntime needs restart. After restart, run this SAME cell again.", flush=True)
    os._exit(0)

We set up a controlled and compatible environment by reinstalling PyTorch and all required libraries. We ensure that Unsloth and its dependencies are correctly aligned with the CUDA runtime available in Google Colab. We also handle the runtime restart logic so that the environment is clean and stable before training begins.
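Since the rest of the pipeline depends on the pinned versions actually being installed, a quick check after the restart can surface silent drift (for example, if a later `pip install -U` changed one of the pins). The following is a minimal sketch and not part of the original notebook: `check_pins` is a hypothetical helper, and the pins are copied from the install commands above. `torch` is left out because its installed version string carries a CUDA suffix.

```python
from importlib.metadata import PackageNotFoundError, version

# Pins copied from the install cell above.
PINS = {
    "transformers": "4.45.2",
    "accelerate": "0.34.2",
    "datasets": "2.21.0",
    "trl": "0.11.4",
}

def check_pins(pins):
    """Return {package: (expected, installed)} for every pin that is not met.

    `installed` is None when the package is missing. Hypothetical helper.
    """
    mismatches = {}
    for name, expected in pins.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            installed = None
        if installed != expected:
            mismatches[name] = (expected, installed)
    return mismatches

if __name__ == "__main__":
    for name, (expected, installed) in check_pins(PINS).items():
        print(f"{name}: expected {expected}, found {installed}")
```

An empty result means every pin matches; any printed line indicates a package worth reinstalling before training.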

import torch, gc

assert torch.cuda.is_available()
print("Torch:", torch.__version__)
print("GPU:", torch.cuda.get_device_name(0))
print("VRAM(GB):", round(torch.cuda.get_device_properties(0).total_memory / 1e9, 2))

# Allow TF32 for matmuls and cuDNN convolutions where the GPU supports it.
torch.backends.cuda.matmul.allow_tf32 = True
torch.backends.cudnn.allow_tf32 = True

def clean():
    """Run garbage collection and release cached CUDA memory."""
    gc.collect()
    torch.cuda.empty_cache()

# Import Unsloth before the other training libraries so its optimizations are applied.
import unsloth
from unsloth import FastLanguageModel
from datasets import load_dataset
from transformers import TextStreamer
from trl import SFTTrainer, SFTConfig

We verify GPU availability and configure PyTorch for efficient computation. To ensure that all performance optimizations are correctly applied, we import Unsloth before all other training libraries. We also define a small utility function, `clean`, to free GPU memory during training.
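A bare `assert` stops the notebook without saying why the GPU is missing. As a hedged alternative, the sketch below returns a small report instead of raising. `gpu_summary` is a hypothetical helper that does not appear in the original code, and the `bf16` field is an addition that may help when choosing between `fp16` and `bf16` training flags.

```python
def gpu_summary():
    """Describe the first CUDA device, or explain why none is usable.

    Hypothetical helper; it imports torch lazily so it also works
    in an environment where the install step has not run yet.
    """
    try:
        import torch
    except Exception as exc:
        return {"available": False, "reason": f"torch import failed: {exc}"}

    if not torch.cuda.is_available():
        return {
            "available": False,
            "reason": "no CUDA device visible; check the Colab runtime type",
        }

    props = torch.cuda.get_device_properties(0)
    return {
        "available": True,
        "name": props.name,
        "vram_gb": round(props.total_memory / 1e9, 2),
        "bf16": torch.cuda.is_bf16_supported(),
    }

if __name__ == "__main__":
    print(gpu_summary())
```

Checking `gpu_summary()["available"]` before loading the model gives a readable message rather than an `AssertionError` with no context.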

max_seq_length = 768
model_name = "unsloth/Qwen2.5-1.5B-Instruct-bnb-4bit"

# Load the pre-quantized 4-bit checkpoint; dtype=None lets Unsloth pick the dtype.
model, tokenizer = FastLanguageModel.from_pretrained(
    model_name=model_name,
    max_seq_length=max_seq_length,
    dtype=None,
    load_in_4bit=True,
)

# Attach LoRA adapters to the listed attention projections.
model = FastLanguageModel.get_peft_model(
    model,
    r=8,
    target_modules=["q_proj", "k_proj"],
    lora_alpha=16,
    lora_dropout=0.0,
    bias="none",
    use_gradient_checkpointing="unsloth",
    random_state=42,
    max_seq_length=max_seq_length,
)

We load a 4-bit quantized, instruction-tuned model using Unsloth’s fast-loading utilities. We then add LoRA adapters to the model to enable parameter-efficient fine-tuning. We configure the LoRA rank, scaling factor, and target modules to balance memory efficiency and learning capability.
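To make the memory side of that balance concrete, the arithmetic below estimates how many trainable parameters the adapters add. It is a rough sketch under stated assumptions: the layer shapes (`HIDDEN`, `KV_DIM`, `LAYERS`) are assumed values for Qwen2.5-1.5B and should be checked against the checkpoint's `config.json`, and `lora_params` is a hypothetical helper. Only the `q_proj` and `k_proj` targets named in the configuration are counted.

```python
def lora_params(d_in, d_out, r):
    """Trainable parameters LoRA adds to one d_in x d_out linear layer.

    LoRA learns A (r x d_in) and B (d_out x r), so the count is r * (d_in + d_out).
    """
    return r * (d_in + d_out)

# Assumed Qwen2.5-1.5B shapes; verify against the checkpoint's config.json.
HIDDEN = 1536   # hidden_size (assumption)
KV_DIM = 256    # num_key_value_heads * head_dim (assumption)
LAYERS = 28     # num_hidden_layers (assumption)
R = 8           # rank passed to get_peft_model
ALPHA = 16      # lora_alpha passed to get_peft_model

# q_proj maps HIDDEN -> HIDDEN, k_proj maps HIDDEN -> KV_DIM.
per_layer = lora_params(HIDDEN, HIDDEN, R) + lora_params(HIDDEN, KV_DIM, R)
total = per_layer * LAYERS
scaling = ALPHA / R  # factor applied to the low-rank update

print(f"per layer: {per_layer:,}  total: {total:,}  scaling: {scaling}")
```

Under these assumptions the adapters amount to roughly one million trainable parameters, a small fraction of the 1.5B frozen base weights.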

# Sample 1,200 shuffled conversations to keep the run short.
ds = load_dataset("trl-lib/Capybara", split="train").shuffle(seed=42).select(range(1200))

def to_text(example):
    """Render a multi-turn conversation into one training string via the chat template."""
    example["text"] = tokenizer.apply_chat_template(
        example["messages"],
        tokenize=False,
        add_generation_prompt=False,
    )
    return example

# Keep only the rendered "text" column.
ds = ds.map(to_text, remove_columns=[c for c in ds.column_names if c != "messages"])
ds = ds.remove_columns(["messages"])

split = ds.train_test_split(test_size=0.02, seed=42)
train_ds, eval_ds = split["train"], split["test"]

cfg = SFTConfig(
    output_dir="unsloth_sft_out",
    dataset_text_field="text",
    max_seq_length=max_seq_length,
    packing=False,
    per_device_train_batch_size=1,
    gradient_accumulation_steps=8,
    max_steps=150,
    learning_rate=2e-4,
    warmup_ratio=0.03,
    lr_scheduler_type="cosine",
    logging_steps=10,
    eval_strategy="no",
    save_steps=0,
    fp16=True,
    optim="adamw_8bit",
    report_to="none",
    seed=42,
)

trainer = SFTTrainer(
    model=model,
    tokenizer=tokenizer,
    train_dataset=train_ds,
    eval_dataset=eval_ds,
    args=cfg,
)

We prepare the training dataset by converting multi-turn conversations into a single text field suitable for supervised fine-tuning. We hold out 2% of the examples as a test split, keeping them separate from the training data; because `eval_strategy` is set to "no", this split is not evaluated during training. We also define the training configuration, which controls the batch size, learning rate, and training duration.
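The batch size and step count in the configuration together determine how much data the model actually sees. The short calculation below works that out from the values in `SFTConfig`. It is a back-of-the-envelope sketch: `train_examples` assumes the 2% split removes 24 of the 1,200 sampled rows, the run is assumed to use a single GPU, and the warmup figure assumes the ratio is rounded up to a whole step.

```python
import math

# Values copied from the SFTConfig above.
per_device_batch = 1
grad_accum = 8
max_steps = 150
warmup_ratio = 0.03

# Assumption: 1,200 sampled rows minus a 24-row (2%) test split.
train_examples = 1176

# Sequences contributing to each optimizer step on a single GPU.
effective_batch = per_device_batch * grad_accum
# Total sequences processed over the whole run.
sequences_seen = effective_batch * max_steps
# Approximate number of passes over the training split.
epochs = sequences_seen / train_examples
# Assumption: warmup length is the ratio times max_steps, rounded up.
warmup_steps = math.ceil(max_steps * warmup_ratio)

print(effective_batch, sequences_seen, round(epochs, 2), warmup_steps)
```

With these settings the run covers about one pass over the training split, which helps explain why it finishes quickly on a Colab GPU.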

clean()
trainer.train()

# Switch Unsloth to its inference mode before generating.
FastLanguageModel.for_inference(model)

def chat(prompt, max_new_tokens=160):
    """Stream a sampled reply to a single user prompt."""
    messages = [{"role": "user", "content": prompt}]
    text = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
    inputs = tokenizer([text], return_tensors="pt").to("cuda")
    streamer = TextStreamer(tokenizer, skip_prompt=True, skip_special_tokens=True)
    with torch.inference_mode():
        model.generate(
            **inputs,
            max_new_tokens=max_new_tokens,
            temperature=0.7,
            top_p=0.9,
            do_sample=True,
            streamer=streamer,
        )

chat("Give a concise checklist for validating a machine learning model before deployment.")

# Save the LoRA adapters and tokenizer for later reuse.
save_dir = "unsloth_lora_adapters"
model.save_pretrained(save_dir)
tokenizer.save_pretrained(save_dir)

We run the training loop and monitor the fine-tuning process on the GPU. We then switch the model into inference mode and validate its behavior with a sample prompt. Finally, we save the trained LoRA adapters so that we can reuse or deploy the fine-tuned model later.
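The training text and the inference prompt differ in one detail: `chat` passes `add_generation_prompt=True`, which leaves the prompt open for the assistant's turn. The sketch below illustrates that difference with a simplified, ChatML-style renderer. It is an assumption-laden illustration: `render_chatml` is a hypothetical stand-in, the marker strings are assumed from the ChatML convention, and the tokenizer's own template remains authoritative (it may, for instance, also insert a default system message).

```python
def render_chatml(messages, add_generation_prompt=False):
    """Simplified ChatML-style rendering (hypothetical stand-in for the real template)."""
    parts = [
        f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n"
        for m in messages
    ]
    if add_generation_prompt:
        # Open an assistant turn so the model continues from here.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)

messages = [{"role": "user", "content": "Hello"}]

# Training-style text: every turn is closed.
print(repr(render_chatml(messages)))
# Inference-style prompt: ends with an open assistant turn.
print(repr(render_chatml(messages, add_generation_prompt=True)))
```

Printing the output of `tokenizer.apply_chat_template` with both settings is a quick way to confirm how the real template behaves for this model.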

In conclusion, we fine-tuned an instruction-following language model using Unsloth’s optimized training stack and a lightweight QLoRA setup. We demonstrated that by limiting sequence length, dataset size, and training steps, we can achieve stable training on Colab GPUs without runtime interruptions. The resulting LoRA adapters provide a practical, reusable artifact that we can deploy or extend, making this workflow a strong foundation for future experimentation and advanced alignment techniques.
