Image by editor

Introduction

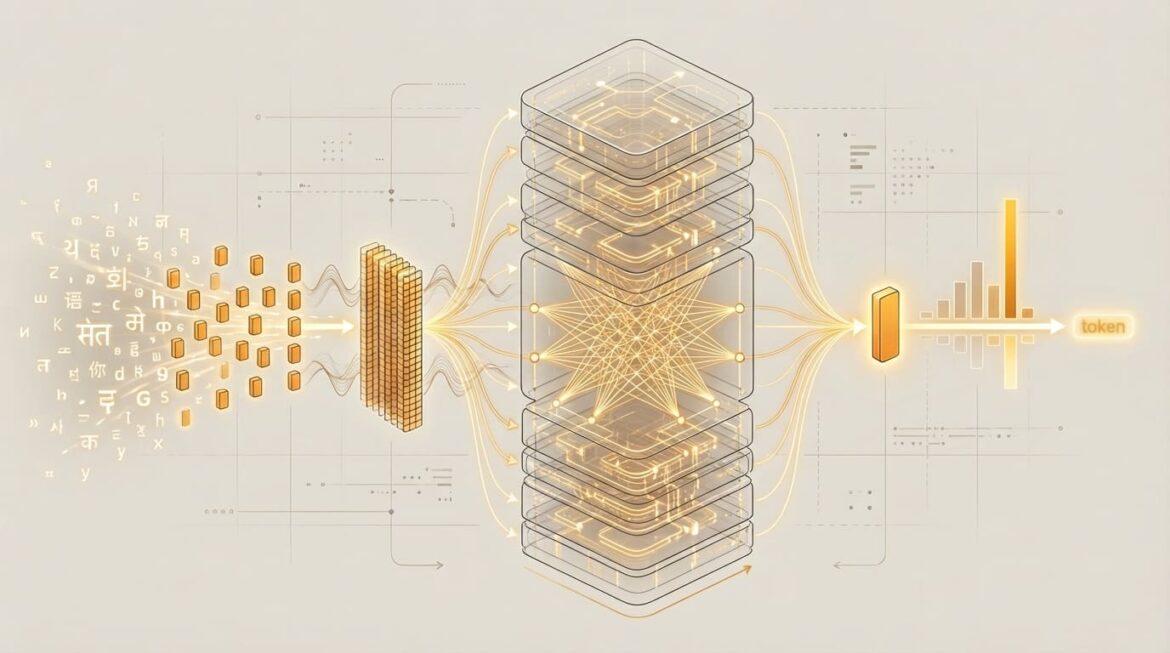

Thanks to large language models (LLMs), we now have impressive, incredibly useful applications such as Gemini, ChatGPT, and Claude, to name a few. However, few people realize that the underlying architecture behind LLMs is called the Transformer. This architecture is carefully designed to "think" (that is, to process data that describes human language) in a very particular and somewhat idiosyncratic way. Are you interested in gaining a broad understanding of what happens inside these so-called Transformers?

This article describes, in a gentle, understandable, and rather non-technical tone, how the Transformer models behind LLMs analyze input information such as user prompts, and how they generate coherent, meaningful, and relevant output text word by word (or, a little more technically, token by token).

Initial phase: making language understandable by machines

The first key concept to understand is that AI models don't really understand human language. They only understand and work with numbers, and the Transformers behind LLMs are no exception. Therefore, human language (that is, text) needs to be converted into a form the Transformer can work with before it can process it in depth.

Put another way, the first few steps, which occur before entering the core and innermost layers of the Transformer, focus primarily on turning this raw text into a numerical representation that preserves the key properties and characteristics of the original text. Let's examine these three steps.

Making language understandable by machines

Tokenization

The tokenizer is the first actor to arrive on the scene. Working in conjunction with the Transformer model, it is responsible for dividing the raw text into smaller pieces called tokens. Depending on the tokenizer used, these tokens may in many cases correspond to whole words, but they may also be parts of words or punctuation marks. Furthermore, each token in the tokenizer's vocabulary has a unique numerical identifier. This is the point at which text stops being text and becomes numbers, all at the token level, as in this example, in which a simple tokenizer converts a five-word text into five token identifiers, one per word:

Tokenization of text into token identifiers
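To make the idea concrete, here is a minimal sketch of a word-level tokenizer. It is only an illustration: the function names `build_vocab` and `tokenize`, the example sentence, and the `unk_id` convention are all assumptions made for this example, and real LLM tokenizers typically split text into subword pieces rather than whole words.

```python
# Toy word-level tokenizer (illustrative only; real tokenizers usually work on subwords).

def build_vocab(texts):
    """Assign an integer ID to every distinct lowercase word, in order of first appearance."""
    vocab = {}
    for text in texts:
        for word in text.lower().split():
            if word not in vocab:
                vocab[word] = len(vocab)
    return vocab


def tokenize(text, vocab, unk_id=-1):
    """Split on whitespace and map each word to its ID (unk_id for unseen words)."""
    return [vocab.get(word, unk_id) for word in text.lower().split()]


# Hypothetical five-word input, one token ID per word.
sentence = "the cat sat down quietly"
vocab = build_vocab([sentence])
token_ids = tokenize(sentence, vocab)
print(token_ids)  # [0, 1, 2, 3, 4]
```

From this point on, the model never sees the words themselves, only the list of identifiers.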

Token embedding

Next, each token ID is transformed into a d-dimensional vector, which is a list of d numbers. This representation of a token as an embedding is like a description of the overall meaning of the token, whether it is a word, part of a word, or a punctuation mark. The magic lies in the fact that tokens associated with similar meanings, e.g. queen and empress, will have embedding vectors that are similar.
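The following sketch shows what "similar embedding vectors" can mean in practice. The three-dimensional vectors are hand-picked for illustration (a trained model learns its embeddings, and d is usually in the hundreds or thousands), and cosine similarity is just one common way to compare vectors, assumed here for the example.

```python
import math

# Hypothetical embedding table: token ID -> d-dimensional vector (d = 3 here).
# These numbers are made up; a real model learns them during training.
embedding_table = {
    0: [0.90, 0.80, 0.10],  # "queen"
    1: [0.85, 0.75, 0.20],  # "empress"
    2: [0.10, 0.00, 0.90],  # "banana"
}


def embed(token_ids, table):
    """Look up the embedding vector for each token ID."""
    return [table[token_id] for token_id in token_ids]


def cosine_similarity(u, v):
    """Cosine of the angle between u and v: close to 1 means similar direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


queen, empress, banana = embed([0, 1, 2], embedding_table)
print(cosine_similarity(queen, empress))  # close to 1: related meanings
print(cosine_similarity(queen, banana))   # much lower: unrelated meanings
```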

Positional encoding

So far, a token embedding contains information in the form of a collection of numbers, yet that information still relates to a single token in isolation. However, in a piece of language such as a text sequence, it is important to know not only which words or tokens are present, but also their position in the text they belong to. Positional encoding is a process that, using mathematical functions, injects into each token embedding some additional information about its position in the original text sequence.
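One well-known choice of "mathematical functions" is the sinusoidal scheme from the original Transformer design, sketched below. This is an assumption for illustration: the article does not say which scheme is meant, and many models use other approaches, such as learned position vectors. The function names are hypothetical.

```python
import math


def positional_encoding(position, d):
    """Sinusoidal encoding: sine on even dimensions, cosine on odd ones,
    with wavelengths that grow as the dimension index increases."""
    encoding = []
    for i in range(d):
        angle = position / (10000 ** ((2 * (i // 2)) / d))
        encoding.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return encoding


def add_positions(embeddings):
    """Add the position vector to each token embedding, element by element."""
    d = len(embeddings[0])
    return [
        [x + p for x, p in zip(vector, positional_encoding(pos, d))]
        for pos, vector in enumerate(embeddings)
    ]


print(positional_encoding(0, 4))  # [0.0, 1.0, 0.0, 1.0]

# The same token embedding at two different positions ends up with different values.
same_token_twice = [[0.5, 0.5, 0.5, 0.5], [0.5, 0.5, 0.5, 0.5]]
first, second = add_positions(same_token_twice)
print(first != second)  # True
```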

Transformation through the core of the Transformer model

Now that the numerical representation of each token includes information about its position in the text sequence, it is time to enter the first layer of the main body of the Transformer model. Transformers are a very deep architecture, with many stacked components repeated throughout the system. There are two types of transformer layers – encoder layers and decoder layers – but for the sake of simplicity, we will not make any subtle distinctions between them in this article. For now just be aware that there are two types of layers in a transformer, even though they both have a lot in common.

Transformation through the core of the transformer model

Multi-headed attention

This is the first major subprocess inside a Transformer layer, and perhaps the most impactful and distinctive feature of Transformer models compared to other types of AI systems. Multi-headed attention is a mechanism that lets a token observe, or "pay attention to", the other tokens in the sequence. It collects useful contextual information and incorporates it into the token's own representation, namely linguistic aspects such as grammatical relationships, long-range dependencies between words that are not necessarily next to each other in the text, or semantic similarities. In short, thanks to this mechanism, diverse aspects of relevance and relationships between parts of the original text are captured. After a token representation passes through this component, it becomes a richer, more context-aware representation of the token and the text it belongs to.

Some Transformer architectures built for specific tasks, such as translating text from one language to another, also analyze potential dependencies between tokens through this mechanism, looking at both the input text and the output (translated) text generated so far, as shown below:

Multi-headed attention in translation Transformers
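The core computation behind attention can be sketched in a few lines. The version below is a deliberately simplified, single-head variant: it uses the token vectors directly as queries, keys, and values, whereas a real Transformer first applies learned projection matrices and runs several such heads in parallel. The function names and the toy input are assumptions for illustration.

```python
import math


def softmax(scores):
    """Turn arbitrary scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Single-head scaled dot-product attention without learned projections.

    Each token scores every token in the sequence (including itself), and its
    new representation is the weighted average of all token vectors.
    """
    d = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in vectors
        ]
        weights = softmax(scores)
        mixed = [
            sum(w * value[j] for w, value in zip(weights, vectors))
            for j in range(d)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy token vectors (made-up numbers).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])  # each row sums to 1
```

Each row of weights says how much one token draws on every other token, which is how context ends up blended into its representation.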

Feed-forward neural network sublayer

In simple terms, after passing through attention, the second common step inside each replicated layer of the Transformer is a set of feed-forward neural network layers that further process our enriched token representations and help learn additional patterns from them. This process amounts to sharpening these representations, identifying and reinforcing relevant features and patterns. Ultimately, these layers are the mechanism used to gradually build a general, increasingly abstract understanding of the entire text being processed.

The process of going through the multi-headed attention and feed-forward sublayers, in that order, is repeated multiple times: once for each replicated Transformer layer in the stack.
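As a rough sketch, a feed-forward sublayer can be pictured as two linear transformations with a nonlinearity in between, applied to each token vector independently. The weights below are made-up numbers chosen so the arithmetic is easy to follow, ReLU is assumed as the nonlinearity, and the function names are hypothetical. A real layer learns its weights and includes further details omitted here.

```python
def relu(x):
    """Keep positive values, zero out negative ones."""
    return max(0.0, x)


def linear(vector, weights, bias):
    """Affine map: `weights` holds one row per output unit."""
    return [
        sum(w * x for w, x in zip(row, vector)) + b
        for row, b in zip(weights, bias)
    ]


def feed_forward(vector, w1, b1, w2, b2):
    """Expand to a hidden size, apply ReLU, then project back to size d."""
    hidden = [relu(h) for h in linear(vector, w1, b1)]
    return linear(hidden, w2, b2)


# Toy weights: d = 2, hidden size = 3 (illustrative values only).
w1 = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]
b1 = [0.0, 0.0, 0.0]
w2 = [[1.0, 0.0, 1.0], [0.5, 0.5, 0.0]]
b2 = [0.0, 0.0]

token_vectors = [[2.0, 3.0], [3.0, 1.0]]
# The same network is applied to every token position separately.
processed = [feed_forward(v, w1, b1, w2, b2) for v in token_vectors]
print(processed[0])  # [2.0, 2.5]
```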

Final destination: predicting the next word

After repeating the previous two steps many times in an alternating manner, the token representations derived from the initial text should allow the model to gain a much deeper understanding of it, enabling it to recognize complex and subtle relationships. At this point, we reach the final component of the Transformer stack: a special layer that converts the final representation into a probability for every possible token in the vocabulary. That is, we calculate, based on all the information learned along the way, the probability of each token in the vocabulary being the next one output by the Transformer model (or the LLM). In the simplest case, the model then chooses the token with the highest probability and produces it as part of the output for the end user. The entire process is repeated to generate each token of the model's response.
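This last step can be sketched as follows: the final vector is scored against every token in the vocabulary, the scores are turned into probabilities with a softmax, and the most likely token is picked. The three-token vocabulary, the weights, and the function names are all made up for illustration. Always picking the top token is the simplest strategy, and practical systems often sample from the probabilities instead.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(logits)
    exps = [math.exp(x - highest) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_next_token(final_vector, output_weights, id_to_token):
    """Score every vocabulary token, then pick the most probable one."""
    logits = [
        sum(w * x for w, x in zip(row, final_vector))
        for row in output_weights
    ]
    probabilities = softmax(logits)
    best_id = max(range(len(probabilities)), key=probabilities.__getitem__)
    return id_to_token[best_id], probabilities


# Hypothetical three-token vocabulary and output layer (one weight row per token).
id_to_token = ["cat", "dog", "fish"]
output_weights = [[2.0, 0.0], [0.5, 1.0], [-1.0, 3.0]]
final_vector = [1.0, 0.0]

token, probabilities = predict_next_token(final_vector, output_weights, id_to_token)
print(token)  # cat
print([round(p, 3) for p in probabilities])
```

To generate a full response, the chosen token would be appended to the input and the whole pipeline run again for the next one.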

Wrapping up

This article provides a gentle, conceptual tour of the journey that text-based information takes as it flows through the signature model architecture behind LLMs: the Transformer. After reading it, you will hopefully have a better understanding of what goes on inside models like ChatGPT.

Iván Palomares Carrascosa is a leader, author, speaker, and consultant in AI, machine learning, deep learning, and LLMs. He trains and guides others in using AI in the real world.