Author(s): Awadhesh Singh Chauhan

Originally published on Towards AI.

A unified, minimal, production-ready Python SDK for OpenAI, Anthropic, Google Gemini, xAI, and local LLMs, with built-in agents, resilience, and observability.

Have you ever found yourself juggling multiple SDKs to work with different LLM providers? Writing separate code for OpenAI’s client, Anthropic’s SDK, and Google’s API, and then trying to make them work together? If you’ve built production AI applications, you know the pain: different response formats, inconsistent error handling, and endless boilerplate.

Today, I’m excited to announce the public release of aiclient-llm, a Python library that solves this problem beautifully. One client. All providers. Production-ready out of the box.

pip install aiclient-llm

The problem we are solving

Modern AI development requires flexibility. You might want:

- GPT-4o for general reasoning

- Claude for nuanced conversation

- Gemini for its huge context window

- Grok for real-time information

- Ollama for local development and privacy

But each provider has its own:

- SDK with unique method signatures

- Response formats and data structures

- Error types and handling patterns

- Authentication mechanism

- Streaming implementation

The result? Your codebase becomes a tangled mess of provider-specific code, adapter patterns, and conditional logic. Testing becomes a nightmare. Switching providers means rewriting code.

aiclient-llm changes this.

What is aiclient-llm?

aiclient-llm is a minimal, unified Python client that provides:

- A consistent API across OpenAI, Anthropic, Google Gemini, xAI (Grok), and Ollama

- A built-in agent framework with tool use and Model Context Protocol (MCP) support

- Production resilience with circuit breakers, rate limiters, and automatic retries

- Full observability, including cost tracking, logging, and OpenTelemetry integration

- First-class testing support with mock providers for deterministic unit tests

All in a clean, Pythonic interface that takes minutes to learn.

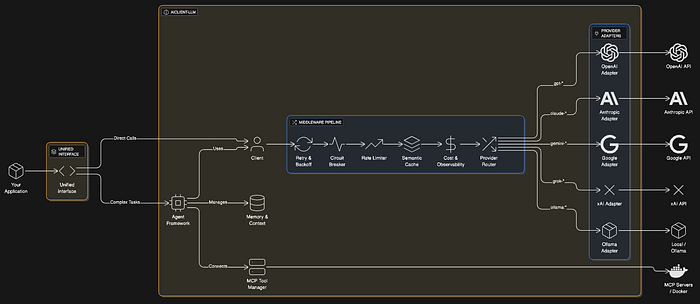

Architecture at a glance

Quick Start: It’s Really That Easy

Here is the complete setup for using multiple LLM providers:

from aiclient import Client

# Initialize once with all your API keys
client = Client(
    openai_api_key="sk-...",
    anthropic_api_key="sk-ant-...",
    google_api_key="...",
    xai_api_key="...",
)

# Call OpenAI
response = client.chat("gpt-4o").generate("Explain quantum computing")
print(response.text)

# Call Claude - same interface
response = client.chat("claude-3-5-sonnet-latest").generate("Write a haiku about Python")
print(response.text)

# Call Gemini - still the same
response = client.chat("gemini-2.0-flash").generate("Summarize this article...")
print(response.text)

# Call local Ollama - no code changes
response = client.chat("ollama:llama3").generate("Hello, local LLM!")
print(response.text)

That’s it. No adapter classes. No response translation. No provider-specific code.

The library routes requests based on model names (gpt- → OpenAI, claude- → Anthropic, gemini- → Google) or explicit prefixes like ollama:mistral.
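To make the routing idea concrete, here is a minimal sketch of prefix-based dispatch. It is not aiclient-llm's actual routing code: the `PREFIX_TO_PROVIDER` table and the `resolve_provider` helper are hypothetical names, and the table only mirrors the prefixes mentioned above.

```python
# Illustrative only: mirrors the prefixes named in the text,
# not the library's real routing table.
PREFIX_TO_PROVIDER = {
    "gpt-": "openai",
    "claude-": "anthropic",
    "gemini-": "google",
}


def resolve_provider(model: str) -> tuple[str, str]:
    """Return (provider, model_name) for a model string."""
    # An explicit "provider:model" prefix takes priority over name matching.
    if ":" in model:
        provider, _, name = model.partition(":")
        return provider, name
    for prefix, provider in PREFIX_TO_PROVIDER.items():
        if model.startswith(prefix):
            return provider, model
    raise ValueError(f"Cannot infer a provider for model {model!r}")


print(resolve_provider("gpt-4o"))
print(resolve_provider("ollama:mistral"))
```

Checking the explicit prefix first means a string like ollama:mistral never has to match a naming pattern, which is why local models with arbitrary names can still be routed.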

Streaming That Just Works

Real-time streaming is first class:

for chunk in client.chat("gpt-4o").stream("Write a poem about coding"):
    print(chunk.text, end="", flush=True)

Works equally well on all providers. The chunk format is standardized, so your UI code doesn’t care where the tokens come from.

Multimodal Made Easy

Using a vision model? Send images from files, URLs, or Base64, and the library handles the encoding:

from aiclient import UserMessage, Text, Image

message = UserMessage(content=[
    Text(text="What's in this image?"),
    Image(path="./photo.png"),  # Auto-encoded to base64
])

response = client.chat("gpt-4o").generate([message])
print(response.text)

This works with OpenAI’s vision models, Claude’s vision capabilities, and Gemini’s multimodal features.

Structured Output: Get JSON You Can Trust

Need guaranteed JSON responses? Use Pydantic models:

from pydantic import BaseModel
from aiclient import Client

class Character(BaseModel):
    name: str
    class_type: str
    level: int
    items: list[str]

client = Client()

# OpenAI's native strict mode
character = client.chat("gpt-4o").generate(
    "Generate a level 5 wizard named Merlin with a staff and hat.",
    response_model=Character,
    strict=True,  # Uses OpenAI's native JSON mode
)

print(character.name)   # "Merlin"
print(character.items)  # ["staff", "hat"]

For providers without a native JSON mode, the library intelligently falls back to prompt-based extraction.
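How the library implements that fallback isn't described here, but the general technique looks roughly like the sketch below: ask for JSON in the prompt, then pull the JSON object out of the free-form reply and load it into a typed object. The `extract_json` helper and the sample reply are made up for illustration, and a plain dataclass stands in for a Pydantic model so the example has no dependencies.

```python
import json
import re
from dataclasses import dataclass


@dataclass
class Character:
    name: str
    level: int


def extract_json(text: str) -> dict:
    """Parse the outermost {...} span found in a free-form model reply."""
    match = re.search(r"\{.*\}", text, re.DOTALL)
    if match is None:
        raise ValueError("No JSON object found in the reply")
    return json.loads(match.group(0))


# A made-up reply of the kind a model without JSON mode might produce.
reply = 'Sure! Here is your character: {"name": "Merlin", "level": 5} Enjoy!'
character = Character(**extract_json(reply))
print(character)
```

A dataclass does not validate field types, so a real implementation would hand the parsed dict to the Pydantic model instead and retry or raise on a validation error.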

Create an agent in minutes

The built-in Agent class provides a complete ReAct loop for tool-using agents:

from aiclient import Client, Agent

def get_weather(location: str) -> str:
    """Get the current weather for a location."""
    return f"Sunny, 22°C in {location}"

def search_web(query: str) -> str:
    """Search the web for information."""
    return f"Top result for '{query}': ..."

client = Client()

agent = Agent(
    model=client.chat("gpt-4o"),
    tools=[get_weather, search_web],
    max_steps=10,
)

result = agent.run("What's the weather in Paris and find me some good restaurants there?")
print(result)

The agent automatically:

- Converts your functions into tool schemas

- Runs the ReAct loop (reason → act → observe)

- Handles tool calls and responses

- Maintains conversation memory
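The first step in that list, turning a plain function into a tool schema, can be sketched with the standard library alone. This is an assumption about the general approach, not the library's code: `function_to_tool_schema` and `JSON_TYPES` are hypothetical names, and the output shape is a simplified JSON-Schema-style dict.

```python
import inspect
from typing import get_type_hints

# Hypothetical mapping from Python annotations to JSON Schema type names.
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def function_to_tool_schema(fn) -> dict:
    """Build a simplified tool description from a function's signature and docstring."""
    hints = get_type_hints(fn)
    hints.pop("return", None)
    params = inspect.signature(fn).parameters
    # Unannotated or unknown types fall back to "string" in this sketch.
    properties = {
        name: {"type": JSON_TYPES.get(hints.get(name), "string")}
        for name in params
    }
    # Parameters without a default value are treated as required.
    required = [
        name for name, p in params.items()
        if p.default is inspect.Parameter.empty
    ]
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "parameters": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


def get_weather(location: str) -> str:
    """Get the current weather for a location."""
    return f"Sunny, 22°C in {location}"


schema = function_to_tool_schema(get_weather)
print(schema)
```

This is why type hints and docstrings matter on tool functions: they are the only description of the tool that the model ever sees.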

Model Context Protocol (MCP): 16,000+ External Tools

Tap into the fast-growing ecosystem of MCP servers to give your agents superpowers:

import asyncio

from aiclient import Client, Agent

client = Client()

agent = Agent(
    model=client.chat("gpt-4o"),
    mcp_servers={
        "filesystem": {
            "command": "npx",
            "args": ["-y", "@modelcontextprotocol/server-filesystem", "./workspace"],
        },
        "github": {
            "command": "npx",
            "args": ["-y", "@modelcontextprotocol/server-github"],
        },
    },
)

async def main():
    # The context manager starts the MCP servers and shuts them down on exit
    async with agent:
        result = await agent.run_async(
            "List all Python files in the project and create a GitHub issue for any TODOs"
        )
        print(result)

asyncio.run(main())

Your agent can now read files, interact with GitHub, perform database queries and much more using the rapidly growing MCP ecosystem.

Built-in Production Resilience

Real production systems need resilience. aiclient-llm provides it out of the box:

Automatic retry with exponential backoff

client = Client(
    max_retries=3,
    retry_delay=1.0,  # Seconds, with exponential backoff
)
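For intuition about what those two settings mean, here is a small standalone sketch of retry with exponential backoff. It assumes the delay doubles on every attempt; whether aiclient-llm uses exactly this factor, or adds jitter, isn't stated, and `backoff_delays` and `call_with_retry` are hypothetical helpers.

```python
import time


def backoff_delays(max_retries: int, base_delay: float, factor: float = 2.0) -> list[float]:
    """Delay before each retry: base, base*factor, base*factor^2, ..."""
    return [base_delay * factor**attempt for attempt in range(max_retries)]


def call_with_retry(fn, max_retries: int = 3, base_delay: float = 1.0, sleep=time.sleep):
    """Call fn(), retrying on any exception with exponentially growing waits."""
    delays = backoff_delays(max_retries, base_delay)
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except Exception:
            if attempt == max_retries:
                raise  # Out of retries: surface the last error.
            sleep(delays[attempt])


print(backoff_delays(max_retries=3, base_delay=1.0))
```

With max_retries=3 and a 1.0 second base delay, the waits under this doubling assumption are 1, 2, and 4 seconds, so a request is attempted at most four times in total.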

Circuit breakers

Prevent cascading failures when a provider is down:

from aiclient import CircuitBreaker

cb = CircuitBreaker(
    failure_threshold=5,    # Open after 5 failures
    recovery_timeout=60.0,  # Try again after 60 seconds
)

client.add_middleware(cb)
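If the circuit breaker pattern is new to you, the state machine behind those two parameters fits in a few lines. The class below is a simplified illustration, not the library's `CircuitBreaker`: `SimpleCircuitBreaker` and its methods are hypothetical, and the injectable clock exists only to make the demo deterministic.

```python
import time


class SimpleCircuitBreaker:
    """Closed -> open after N consecutive failures -> trial request after a timeout."""

    def __init__(self, failure_threshold=5, recovery_timeout=60.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.recovery_timeout = recovery_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed.

    def allow_request(self) -> bool:
        if self.opened_at is None:
            return True
        # Once the timeout has elapsed, let a trial request through (half-open).
        return self.clock() - self.opened_at >= self.recovery_timeout

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()


# Demo with a fake clock so no real waiting is needed.
now = [0.0]
breaker = SimpleCircuitBreaker(failure_threshold=2, recovery_timeout=60.0, clock=lambda: now[0])
breaker.record_failure()
breaker.record_failure()
blocked = not breaker.allow_request()    # Circuit is open: calls are rejected.
now[0] = 60.0
trial_allowed = breaker.allow_request()  # Timeout elapsed: one trial call may go through.
print(blocked, trial_allowed)
```

The point of failing fast while the circuit is open is that your application stops piling requests onto a provider that is already struggling.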

Rate limiter

Respect API rate limits automatically:

from aiclient import RateLimiter

rl = RateLimiter(requests_per_minute=60)
client.add_middleware(rl)
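One common way to enforce a requests-per-minute limit is a sliding window over recent request timestamps. The sketch below shows that technique in isolation; it is an assumption that the library works along these lines (a token bucket would be an equally plausible design), and `SlidingWindowLimiter` is a hypothetical name.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most `requests_per_minute` requests in any 60-second window."""

    def __init__(self, requests_per_minute: int, clock=time.monotonic):
        self.limit = requests_per_minute
        self.clock = clock
        self.timestamps = deque()

    def wait_time(self) -> float:
        """Seconds to wait before the next request is allowed (0.0 if allowed now)."""
        now = self.clock()
        # Drop timestamps that have left the 60-second window.
        while self.timestamps and now - self.timestamps[0] >= 60.0:
            self.timestamps.popleft()
        if len(self.timestamps) < self.limit:
            return 0.0
        return 60.0 - (now - self.timestamps[0])

    def record(self) -> None:
        """Register that a request was just sent."""
        self.timestamps.append(self.clock())


# Demo with a fake clock: two requests at t=0 exhaust a limit of 2 per minute.
now = [0.0]
limiter = SlidingWindowLimiter(requests_per_minute=2, clock=lambda: now[0])
limiter.record()
limiter.record()
now[0] = 30.0
print(limiter.wait_time())
```

A middleware built on this would sleep for `wait_time()` seconds before each call and then call `record()`.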

Fallback chain

Automatically fall back to alternative providers:

from aiclient import FallbackChain

fallback = FallbackChain(client, [
    "gpt-4o",          # Try OpenAI first
    "claude-3-opus",   # Then Anthropic
    "gemini-1.5-pro",  # Then Google
])

response = fallback.generate("Important query that must succeed")
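Conceptually, a fallback chain is just a loop that tries each model in order and returns the first success. Here is a dependency-free sketch of that loop; `generate_with_fallback` and `fake_call` are hypothetical stand-ins, with `fake_call` simulating an outage on the first model.

```python
def generate_with_fallback(models, call):
    """Try each model in order; return (model, result) for the first that succeeds."""
    errors = []
    for model in models:
        try:
            return model, call(model)
        except Exception as exc:
            # Remember why this model failed, then move on to the next one.
            errors.append((model, exc))
    raise RuntimeError(f"All models failed: {errors}")


def fake_call(model: str) -> str:
    """Stand-in for a real provider call; pretends the first model is down."""
    if model == "gpt-4o":
        raise ConnectionError("provider unavailable")
    return f"reply from {model}"


used_model, reply = generate_with_fallback(
    ["gpt-4o", "claude-3-opus", "gemini-1.5-pro"], fake_call
)
print(used_model, reply)
```

Collecting the per-model errors rather than discarding them makes the final failure message much easier to debug when every provider is down.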

Load balancing

Distribute requests across multiple models:

from aiclient import LoadBalancer

lb = LoadBalancer(client, ["gpt-4o", "gpt-4o-mini", "claude-3-5-sonnet"])
response = lb.generate("Hello!")  # Round-robin across models
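Round-robin selection itself is simple enough to show in full. This is a sketch of the selection step only, under the assumption that each request just takes the next model in a repeating cycle; `RoundRobin` is a hypothetical class, not the library's `LoadBalancer`.

```python
from itertools import cycle


class RoundRobin:
    """Hand out models in a fixed repeating order."""

    def __init__(self, models):
        if not models:
            raise ValueError("At least one model is required")
        self._cycle = cycle(list(models))

    def next_model(self) -> str:
        return next(self._cycle)


picker = RoundRobin(["gpt-4o", "gpt-4o-mini", "claude-3-5-sonnet"])
picks = [picker.next_model() for _ in range(4)]
print(picks)
```

After the third pick the cycle wraps around, so the fourth request goes back to the first model.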

Observability: Know what’s happening

Cost tracking

Track your LLM spending in real time:

from aiclient import CostTrackingMiddleware

cost_tracker = CostTrackingMiddleware()
client.add_middleware(cost_tracker)

# After making requests...
print(f"Total cost: ${cost_tracker.total_cost_usd:.4f}")
print(f"Input tokens: {cost_tracker.total_input_tokens}")
print(f"Output tokens: {cost_tracker.total_output_tokens}")

Pricing data for all major models is built in.

Logging with key redaction

from aiclient import LoggingMiddleware

logger = LoggingMiddleware(
    log_prompts=True,
    log_responses=True,
    redact_keys=True,  # Auto-redacts API keys from logs
)

client.add_middleware(logger)

OpenTelemetry integration

For production monitoring:

from aiclient import OpenTelemetryMiddleware

otel = OpenTelemetryMiddleware(service_name="my-ai-app")
client.add_middleware(otel)

Spans are created automatically with model, token, and error attributes.

Memory management

Maintain conversation context with built-in memory:

from aiclient import ConversationMemory, SlidingWindowMemory

# Simple memory - stores all messages
memory = ConversationMemory()

# Or sliding window - keeps last N messages (preserves system prompts)
memory = SlidingWindowMemory(max_messages=20)

agent = Agent(
    model=client.chat("gpt-4o"),
    memory=memory,
)

Memory is serializable for persistence:

# Save
state = memory.save()

# Load
memory.load(state)
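The sliding-window behavior (keep the last N messages, never drop system prompts) can be illustrated on plain message dicts. This is a sketch under two assumptions that the text doesn't confirm: that system prompts count toward the N-message budget, and that messages are simple role/content dicts. `trim_to_window` is a hypothetical helper.

```python
import json


def trim_to_window(messages: list[dict], max_messages: int) -> list[dict]:
    """Keep every system message plus the most recent non-system messages."""
    system = [m for m in messages if m["role"] == "system"]
    others = [m for m in messages if m["role"] != "system"]
    # Assumption: system prompts count toward the max_messages budget.
    budget = max(max_messages - len(system), 0)
    recent = others[-budget:] if budget else []
    return system + recent


history = [{"role": "system", "content": "You are a helpful assistant."}]
history += [{"role": "user", "content": f"message {i}"} for i in range(5)]

window = trim_to_window(history, max_messages=3)
print([m["content"] for m in window])

# Plain dicts round-trip through JSON, which is what makes save/load persistence easy.
restored = json.loads(json.dumps(window))
```

Keeping the system prompt pinned matters because it usually carries the agent's instructions; trimming it away would silently change the agent's behavior mid-conversation.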

Semantic caching

Save money and reduce latency with embedding-based response caching:

from aiclient import SemanticCacheMiddleware

class MyEmbedder:
    def embed(self, text: str) -> list[float]:
        # Use any embedding model
        return client.embed(text, "text-embedding-3-small")

cache = SemanticCacheMiddleware(
    embedder=MyEmbedder(),
    threshold=0.9,  # Cosine similarity threshold
)

client.add_middleware(cache)

Similar queries hit the cache instead of making API calls.
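To see what the cosine similarity threshold actually controls, here is a tiny in-memory version of the idea. It is a sketch, not the library's middleware: `TinySemanticCache` is hypothetical, the two-dimensional vectors are toy stand-ins for real embeddings, and lookup is a linear scan rather than a vector index.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TinySemanticCache:
    """Return a stored response when a new query's embedding is close enough."""

    def __init__(self, threshold: float = 0.9):
        self.threshold = threshold
        self.entries: list[tuple[list[float], str]] = []

    def store(self, embedding: list[float], response: str) -> None:
        self.entries.append((embedding, response))

    def lookup(self, embedding: list[float]):
        best_score, best_response = 0.0, None
        for cached_embedding, response in self.entries:
            score = cosine_similarity(cached_embedding, embedding)
            if score > best_score:
                best_score, best_response = score, response
        # Only count it as a hit if the closest entry clears the threshold.
        return best_response if best_score >= self.threshold else None


cache = TinySemanticCache(threshold=0.9)
cache.store([1.0, 0.0], "Python is a programming language.")

near_hit = cache.lookup([0.99, 0.1])  # Almost the same direction: cache hit.
miss = cache.lookup([0.0, 1.0])       # Orthogonal vector: cache miss.
print(near_hit, miss)
```

Raising the threshold toward 1.0 makes the cache stricter (fewer wrong answers, fewer hits); lowering it trades accuracy for savings.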

Embeddings: First-Class Support

Generate embeddings with a unified interface:

# Run inside an async function

# Single text
vector = await client.embed(
    "Hello world",
    model="text-embedding-3-small",
)

# Batch
vectors = await client.embed_batch(
    ["Hello", "World", "!"],
    model="text-embedding-3-small",
)

Works with OpenAI, Google (text-embedding-004), and xAI embeddings.

Testing: Mock Providers for Reliable Tests

Write deterministic unit tests without hitting the API:

from aiclient import MockProvider, MockTransport

def test_my_ai_feature():
    # Create mock provider
    provider = MockProvider()
    provider.add_response("Expected AI response")
    provider.add_response("Second response")

    # Use in tests
    response = provider.parse_response({})
    assert response.text == "Expected AI response"

    # Verify requests
    assert len(provider.requests) == 1

Test your business logic, not API connectivity.

Batch processing

Process thousands of requests efficiently:

questions = [
    "What is Python?",
    "What is JavaScript?",
    "What is Rust?",
    # ... hundreds more
]

async def process_question(q):
    return await client.chat("gpt-4o-mini").generate_async(q)

# Process with controlled concurrency (run inside an async function)
results = await client.batch(
    questions,
    process_question,
    concurrency=10,  # Max 10 parallel requests
)
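"Controlled concurrency" usually means a semaphore around each task. The sketch below shows that technique with plain asyncio; it is not the implementation of `client.batch`, and `run_batch` and `fake_worker` are hypothetical names, with the worker simulating an API call using a short sleep.

```python
import asyncio


async def run_batch(items, worker, concurrency: int = 10):
    """Run worker(item) for every item, with at most `concurrency` in flight."""
    semaphore = asyncio.Semaphore(concurrency)

    async def guarded(item):
        async with semaphore:
            return await worker(item)

    # gather() returns results in the same order as the inputs.
    return await asyncio.gather(*(guarded(item) for item in items))


# Track how many workers run at once, to show the limit is respected.
stats = {"active": 0, "peak": 0}


async def fake_worker(question: str) -> str:
    stats["active"] += 1
    stats["peak"] = max(stats["peak"], stats["active"])
    await asyncio.sleep(0.01)  # Stand-in for a network call.
    stats["active"] -= 1
    return f"answer to: {question}"


questions = [f"question {i}" for i in range(20)]
results = asyncio.run(run_batch(questions, fake_worker, concurrency=3))
print(len(results), stats["peak"])
```

Capping concurrency keeps a large batch from tripping provider rate limits while still finishing far faster than a sequential loop.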

Type-safe error handling

Catch specific errors and handle each one appropriately:

from aiclient import (
    AIClientError,
    AuthenticationError,
    RateLimitError,
    NetworkError,
    ProviderError,
)

try:
    response = client.chat("gpt-4o").generate("Hello")
except AuthenticationError:
    print("Check your API key")
except RateLimitError:
    print("Too many requests - backing off")
except NetworkError:
    print("Connection failed")
except ProviderError:
    print("Provider returned an error")
except AIClientError:
    print("Something went wrong")

Why choose aiclient-llm?

vs provider SDKs (openai, anthropic, google-generativeai)

vs LangChain

vs LiteLLM

Both solve the unified-interface problem. aiclient-llm sets itself apart with:

- A built-in agent framework

- MCP support

- A comprehensive middleware system

- Semantic caching

- First-class testing utilities

Get started today

# Basic installation
pip install aiclient-llm

# With MCP support
pip install "aiclient-llm[mcp]"

Set your API keys via environment variables:

export OPENAI_API_KEY="sk-..."
export ANTHROPIC_API_KEY="sk-ant-..."
export GEMINI_API_KEY="..."
export XAI_API_KEY="..."

Then start building:

from aiclient import Client

client = Client()
response = client.chat("gpt-4o").generate("Hello, world!")
print(response.text)

What’s next?

This is just the beginning. On the roadmap:

- Extended provider support (AWS Bedrock, Azure OpenAI)

- Advanced caching backends (Redis, PostgreSQL)

- Prompt templating with Jinja2

- An evaluation framework for prompt quality testing

- Multi-agent orchestration patterns

Join the community

aiclient-llm is open source under the Apache 2.0 license.

Star the repo, give it a try and let us know what you think. Contributions welcome!

Summary

Building AI applications shouldn’t mean wrestling with multiple SDKs. aiclient-llm gives you:

- Unified API – One interface to OpenAI, Anthropic, Google, xAI, and local LLMs

- Agents – Built-in ReAct loop with MCP support for 16K+ tools

- Resilience – Circuit breakers, rate limiters, retries, and fallbacks

- Observability – Cost tracking, logging, and OpenTelemetry

- Testing – Mock providers for deterministic unit tests

- Simplicity – Learn once, use everywhere

pip install aiclient-llm

Build AI applications the way they should be built: simple, resilient, and provider-agnostic.

Have questions or feedback? Open an issue on GitHub or reach out on LinkedIn. Happy building!
