Since we announced the public preview of Lakebase over the summer, thousands of Databricks customers have been building data intelligent applications on top of Lakebase, using it to power application data serving, feature stores, and agent memory, while closely connecting that data with analytics and machine learning workflows.

As we approach the end of the year, we’re thrilled to release an exciting new set of improvements:

- autoscaling which dynamically adjusts calculations based on load

- scale to zeroAllows calculations to be stopped when idle and automatically resumed in hundreds of milliseconds

- quick provision To create new database instances in seconds

- accelerated database branchingEnabling Git-like workflows with isolated, copy-on-write environments for development, testing, and staging

- Automatic backup and point-in-time recovery For fast restoration and safe operation

- postgres 17With continuing Postgres 16 support

- Storage capacity increased to 8TB for large production workloads

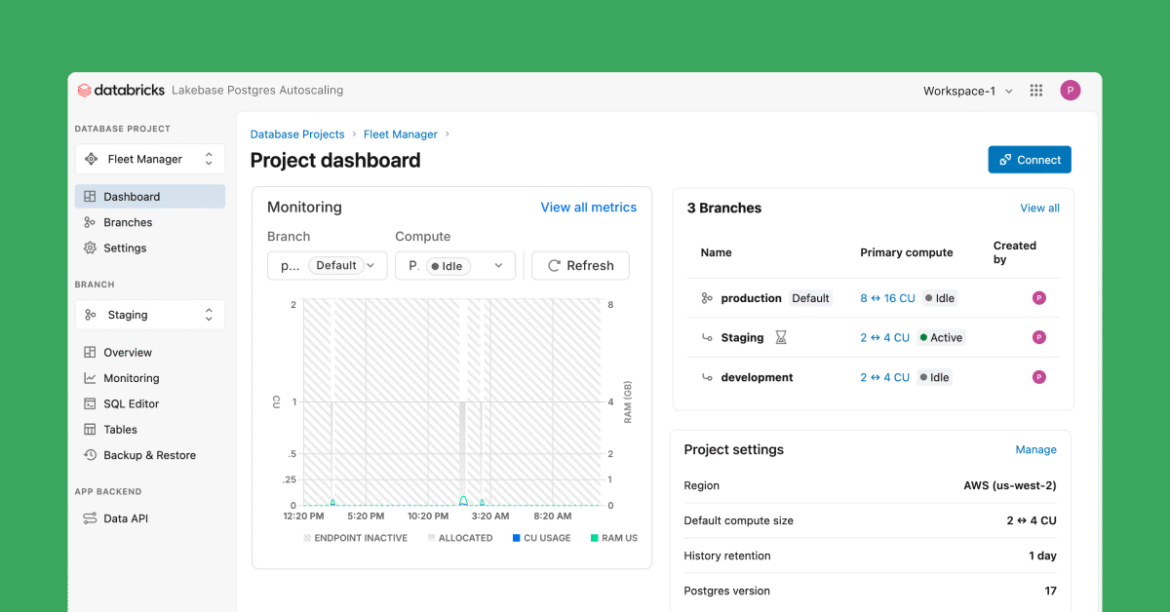

- A new Lakebase UI which simplifies common workflows

These features represent an important milestone in defining the Lakebase family, a serverless database architecture that separates OLTP storage from compute. They are made possible by combining the serverless Postgres and storage technology from our Neon acquisition with Databricks’ enterprise-grade, multi-cloud infrastructure.

Autoscaling for dynamic application workloads

Modern application workloads rarely follow predictable traffic patterns. User activity fluctuates throughout the day, background jobs generate writes, and agent-based systems can create sudden spikes in concurrency. Traditional operational databases require teams to manually plan and adjust capacity for peak usage, often resulting in overprovisioning and unnecessary complexity.

Because Lakebase is built on an architecture that separates the storage layer from the compute layer and allows each to scale independently, we are now releasing compute autoscaling, which dynamically adjusts compute based on active workload demand. When traffic increases, compute scales up to maintain performance. When activity slows down, compute scales down. Idle databases suspend after a short period of inactivity and resume within hundreds of milliseconds when new queries arrive. This behavior applies in both production and development environments.

The result is that less time is spent managing capacity and more time is focused on application behavior.
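To make the scale-up, scale-down, and suspend behavior concrete, here is a minimal sketch of a sizing rule of this general kind. It is an illustration only, not Lakebase's actual algorithm: the class, the function, and every threshold (`min_units`, `max_units`, `target_load_per_unit`, `idle_timeout_s`) are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class AutoscalePolicy:
    # All values below are hypothetical and exist only for illustration.
    min_units: float = 0.5               # smallest compute size while running
    max_units: float = 8.0               # largest compute size allowed
    target_load_per_unit: float = 100.0  # queries/sec one unit handles comfortably
    idle_timeout_s: float = 300.0        # idle period before suspending


def desired_units(policy: AutoscalePolicy,
                  queries_per_sec: float,
                  idle_seconds: float) -> float:
    """Return the compute size to run, or 0.0 to suspend (scale to zero)."""
    # Suspend only when there is no traffic and the idle timeout has elapsed.
    if queries_per_sec == 0 and idle_seconds >= policy.idle_timeout_s:
        return 0.0
    # Otherwise size compute to the load, clamped to the configured range.
    needed = queries_per_sec / policy.target_load_per_unit
    return max(policy.min_units, min(policy.max_units, needed))


policy = AutoscalePolicy()
for qps, idle in [(250, 0), (10_000, 0), (0, 10), (0, 600)]:
    print(f"qps={qps:>6} idle={idle:>4}s -> {desired_units(policy, qps, idle)} units")
```

The clamp keeps a running database between a floor and a ceiling, while the idle check is what allows compute to drop to zero when nothing is querying it.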

Fast startup and quick provisioning

Creating a new database or restarting an inactive database should not slow down development. With this update, new Lakebase databases are provisioned in seconds, and suspended instances resume immediately when traffic returns. This makes it easier to build environments on demand, iterate during development, and support workflows where databases are frequently created and dropped.

For teams building and testing applications, fast startup reduces friction and keeps iteration cycles tight, especially when combined with branching and autoscaling.

Branching for fast, safe iteration

Building and developing production applications means constant change. Teams validate schema updates, debug complex issues, and run CI pipelines that rely on consistent views of the data. Traditional database cloning struggles to keep up because full copies are slow, storage-heavy, and operationally risky.

Lakebase Storage Service implements copy-on-write branching, and we now expose this functionality to our customers as Database Branching. Branches are instant, copy-on-write environments that remain isolated while sharing the underlying storage. This makes it easy to spin up development, test, and staging environments in seconds and iterate on application logic without touching production systems.

In practice, branching removes friction from the development lifecycle and helps teams move forward faster with confidence. (But testing in production is still not recommended!)
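The idea behind copy-on-write branching can be sketched with a toy in-memory model. This is a conceptual illustration under simplifying assumptions, not the Lakebase Storage Service implementation: the `Branch` and `_Layer` classes and the page-dictionary representation are hypothetical. Creating a branch freezes the parent's current writes into a shared layer, so no data is copied and later writes on either side stay isolated.

```python
class _Layer:
    """An immutable set of page versions shared by every branch built on it."""

    def __init__(self, pages, parent=None):
        self.pages = pages
        self.parent = parent


class Branch:
    def __init__(self, base=None):
        self._base = base   # shared, frozen history (never modified)
        self._delta = {}    # pages written on this branch only

    def write(self, page_id, data):
        self._delta[page_id] = data

    def read(self, page_id):
        # Look in this branch's own writes first, then walk the shared history.
        if page_id in self._delta:
            return self._delta[page_id]
        layer = self._base
        while layer is not None:
            if page_id in layer.pages:
                return layer.pages[page_id]
            layer = layer.parent
        raise KeyError(page_id)

    def branch(self):
        # Freeze current writes into a shared layer; no page data is copied.
        frozen = _Layer(self._delta, self._base)
        self._base = frozen
        self._delta = {}
        return Branch(base=frozen)


main = Branch()
main.write("users", "schema v1")
dev = main.branch()               # instant: shares storage with main
dev.write("users", "schema v2")   # visible only on dev
print(main.read("users"), "|", dev.read("users"))
```

Because the child only records pages it changes, creating a branch costs the same regardless of how much data the parent holds, which is what makes per-developer or per-CI-run environments practical.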

Automatic backup and point-in-time recovery

Not every data issue is an outage. Sometimes the problem is more subtle: a bug that silently writes incorrect data over time, a schema change that behaves differently than expected, or a backfill script that touches more rows than intended. These issues often go unnoticed until teams need to rely on historical data for analysis, reporting, or downstream application behavior.

In a traditional environment, such scenarios can be painful to recover from. Teams are forced to rebuild history by hand, replay logs, or stand up temporary systems to recover a known good version of their data. This process is time-consuming, error-prone and often requires deep database expertise.

Lakebase now makes it much easier to handle these situations. With automated backups and point-in-time recovery, teams can restore a database to an exact point in time within seconds. This enables application teams to quickly recover from data issues caused by application bugs or operational errors, without the need for manual replay or complex recovery workflows.
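For readers unfamiliar with the concept, point-in-time recovery generally works by starting from a base backup and replaying a log of changes up to the chosen moment. The sketch below illustrates that general idea with a toy key-value state; it does not describe how Lakebase implements recovery, and the function name and data layout are hypothetical.

```python
def restore_to(base_snapshot, change_log, target_time):
    """Rebuild state as of target_time.

    base_snapshot: dict of key -> value, taken before any logged change.
    change_log: list of (timestamp, key, value) sorted by timestamp;
                a value of None represents a delete.
    """
    state = dict(base_snapshot)  # never mutate the backup itself
    for timestamp, key, value in change_log:
        if timestamp > target_time:
            break  # stop replaying at the requested point in time
        if value is None:
            state.pop(key, None)
        else:
            state[key] = value
    return state


base = {"balance:alice": 100}
log = [
    (10, "balance:alice", 120),
    (20, "balance:bob", 50),
    (30, "balance:alice", None),  # a buggy job deletes a row at t=30
]
# Restore to just before the bad write.
print(restore_to(base, log, target_time=29))
```

Choosing a target time just before the faulty change recovers the last known good state without rebuilding history by hand.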

Supporting large production workloads

In addition to recovery, production systems also need space to grow as the volume of data increases. With this update, Lakebase increases its supported storage capacity to 8TB, four times the previous limit, making it suitable for larger and more demanding application workloads.

Extended Postgres version support

Lakebase now supports Postgres 17 along with continued support for Postgres 16. This gives teams access to the latest Postgres improvements while maintaining compatibility with existing applications.

Together, these updates make Lakebase a stronger foundation for running production-grade operational workloads on Databricks.

Simpler workflows with the new Lakebase UI

Lakebase now includes a new user interface designed to simplify everyday workflows. Creating databases, managing branches, and understanding capacity behavior is simpler, with better defaults and faster provisioning. The new UI is available from the app launcher for the new Lakebase Autoscaling offering. The previous Lakebase Provisioned offering will appear in the UI in the coming weeks.

Adoption

As noted earlier, thousands of Databricks customers are building applications on top of Lakebase. Because Lakebase is fully integrated into the Databricks Data Intelligence Platform, operational data lives in the same foundation that supports analytics, AI, applications, and agentic workflows. Unity Catalog provides consistent governance, access control, auditing, and lineage. Databricks Apps and agent frameworks can use Lakebase to integrate real-time state with historical context, eliminating the need for ETL or replication.

For practitioners, this creates a unified environment where operational and analytical data remain aligned, without stitching together multiple systems to keep applications connected to intelligence.

To cite two early adopters:

“Lakebase lets an agent team instantly self-serve the data they need for their models, whether it’s historical claims or real-time transactions, and that’s really powerful.” – Dragon Sky, Chief Architect, Ensemble Health

“Lakebase gives us a durable, low-latency store for application state, so our data apps load quickly, refresh seamlessly, and even support shared page links between users.” – Bobby Muldoon, Vice President of Engineering, YipitData

What’s next for Lakebase?

These new features are available today in AWS us-east-1, us-west-2, and eu-west-1, and will be gradually rolled out to more regions in the coming weeks. Check out the product documentation to learn more and try out the latest capabilities.

This update represents a meaningful step forward for Lakebase. But we are not standing still. Expect lots of exciting updates after the holidays next year!

Happy Holidays from the Lakebase Team!