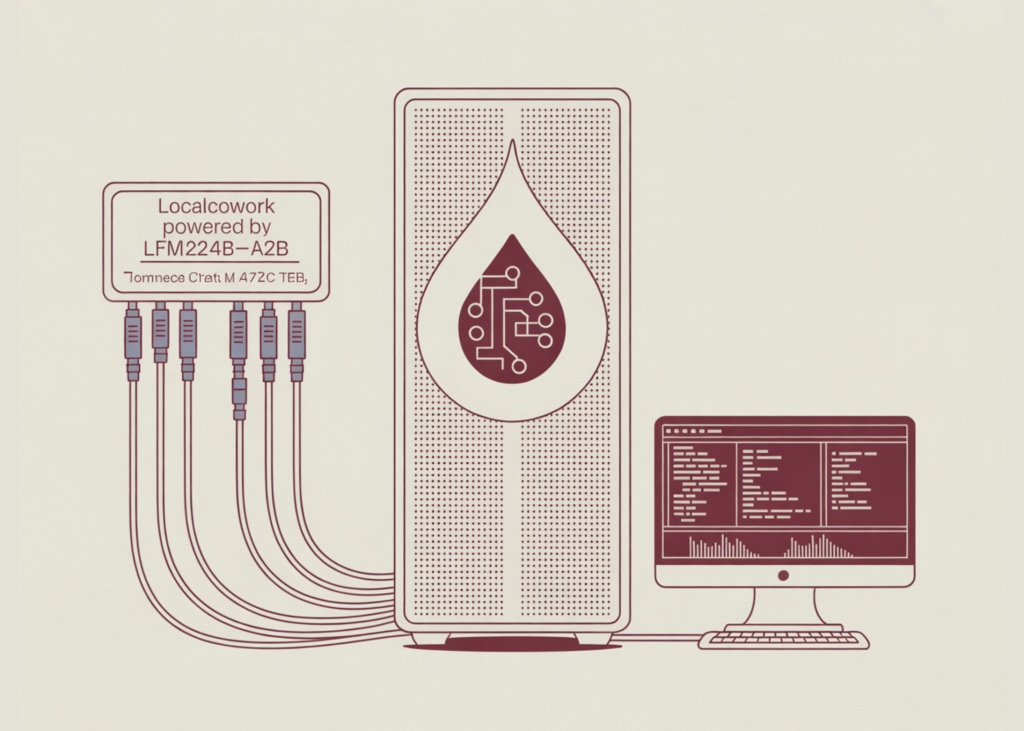

Liquid AI has released LFM2-24B-A2B, a model optimized for local, low-latency tool dispatch, alongside LocalCowork, an open-source desktop agent application available in the Liquid4All GitHub Cookbook. The release provides a deployable architecture for running enterprise workflows entirely on-device, eliminating API calls and data egress for privacy-sensitive environments.

Architecture and serving configuration

To achieve low-latency performance on consumer hardware, the LFM2-24B-A2B uses a sparse mixture-of-experts (MoE) architecture. While the model has 24 billion parameters in total, it only activates about 2 billion parameters per token during inference.
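The idea of activating only a few experts per token can be illustrated with a toy top-k router. This is a minimal sketch of sparse MoE routing in general, not Liquid AI's implementation: the expert count, the gate scores, and the scalar "experts" are all hypothetical values chosen for readability.

```python
import math

def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and normalise their weights."""
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the selected experts only, so the k weights sum to 1.
    peak = max(gate_logits[i] for i in top)
    exps = [math.exp(gate_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(x, experts, gate_logits, k):
    """Run only the routed experts and mix their outputs."""
    routed = top_k_route(gate_logits, k)
    output = sum(weight * experts[i](x) for i, weight in routed)
    return output, [i for i, _ in routed]

# Toy values (hypothetical): 8 scalar "experts", 2 active per token.
experts = [lambda x, scale=s: scale * x for s in range(1, 9)]
gate_logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]

output, active = moe_forward(1.0, experts, gate_logits, k=2)
print("active experts:", active)  # indices of the experts that actually ran
print("skipped experts:", len(experts) - len(active))

# Figures from the article: ~2B of 24B parameters active per token.
active_fraction = 2e9 / 24e9
print(f"active share of parameters: {active_fraction:.1%}")
```

The experts that are not selected are never evaluated, which is where the per-token compute saving comes from; all of them still have to be held in memory.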

This structural design allows the model to maintain a broad knowledge base while significantly reducing the computational overhead required for each generation step. Liquid AI stress-tested the model using the following hardware and software stack:

- Hardware: Apple M4 Max, 36 GB unified memory, 32 GPU cores.

- Serving Engine: llama-server with flash attention enabled.

- Quantization: Q4_K_M GGUF format.

- Memory Footprint: ~14.5 GB RAM.

- Hyperparameters: Temperature set to 0.1, top_p to 0.1, and max_tokens to 512 (tuned for near-deterministic, strict outputs).
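As a rough illustration of how these sampling settings could be sent to a running server, the sketch below builds a request for the OpenAI-compatible chat completions endpoint that llama-server exposes. It assumes the server is already running locally on its default port (8080); the prompt text and the helper names `build_payload` and `dispatch` are made up for this example.

```python
import json
import urllib.request

# Assumption: llama-server is listening locally on its default port, 8080.
SERVER_URL = "http://127.0.0.1:8080/v1/chat/completions"

def build_payload(user_prompt):
    """Request body using the sampling settings listed in the article."""
    return {
        "messages": [{"role": "user", "content": user_prompt}],
        "temperature": 0.1,
        "top_p": 0.1,
        "max_tokens": 512,
    }

def dispatch(user_prompt, url=SERVER_URL, timeout=30):
    """POST the payload and return the parsed JSON response."""
    body = json.dumps(build_payload(user_prompt)).encode("utf-8")
    request = urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))

if __name__ == "__main__":
    # Only prints the payload; call dispatch(...) once a server is running.
    print(json.dumps(build_payload("List the PDF files in my Documents folder"), indent=2))
```

A low temperature combined with a low top_p narrows sampling to the most likely tokens, which is generally what you want when the output must be a strictly formatted tool call rather than free-form text.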

LocalCowork Tool Integration

LocalCowork is a fully offline desktop AI agent that uses the Model Context Protocol (MCP) to execute pre-built tools, logging every action to a local audit trail without relying on cloud APIs or compromising data privacy. The full system comprises 75 tools across 14 MCP servers, covering tasks such as file system operations, OCR, and security scanning. The provided demo, however, focuses on a curated, high-reliability subset of 20 tools across 6 servers, each tested to exceed 80% single-step accuracy and verified to participate in multi-step chains.

LocalCowork serves as a practical implementation of this model. It operates completely offline and comes pre-configured with a suite of enterprise-grade tools:

- File Operations: Listing, reading, and searching across the host file system.

- Security Scanning: Identifying leaked API keys and personally identifiable information (PII) within local directories.

- Document Processing: Performing Optical Character Recognition (OCR), parsing text, diffing contracts, and generating PDFs.

- Audit Logging: Locally recording each tool call for compliance tracking.
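To make the tool-call and audit-trail flow concrete, here is a minimal sketch. The request shape follows MCP's JSON-RPC 2.0 `tools/call` method; everything else is an assumption for illustration, including the tool name `scan_for_secrets`, the JSON Lines log format, and the helper names. LocalCowork's actual log schema is not described in the article.

```python
import json
import tempfile
import time
from pathlib import Path

def make_tool_call(request_id, tool_name, arguments):
    """Build an MCP `tools/call` request (JSON-RPC 2.0)."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }

def append_audit_record(log_path, request, status):
    """Append one JSON line per tool call to a local audit file (hypothetical format)."""
    record = {
        "timestamp": time.time(),
        "tool": request["params"]["name"],
        "arguments": request["params"]["arguments"],
        "status": status,
    }
    with Path(log_path).open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record) + "\n")
    return record

def demo():
    """Log one hypothetical call to a throwaway file and read it back."""
    request = make_tool_call(1, "scan_for_secrets", {"path": "./project"})
    with tempfile.TemporaryDirectory() as tmp:
        log_path = Path(tmp) / "audit.jsonl"
        append_audit_record(log_path, request, status="ok")
        lines = log_path.read_text(encoding="utf-8").splitlines()
    return request, [json.loads(line) for line in lines]

if __name__ == "__main__":
    request, records = demo()
    print(json.dumps(request))
    print(records)
```

An append-only file with one record per line is one simple way to keep such a trail easy to review after the fact.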

Performance benchmarks

The Liquid AI team evaluated the model on a workload of 100 single-step tool-selection prompts and 50 multi-step chains (each requiring 3 to 6 discrete tool executions, such as searching a folder, running OCR, parsing data, deduplicating, and exporting).
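The two metrics in this kind of evaluation can be computed along the following lines. This is a sketch under an assumed scoring rule: a single-step prompt counts as correct when the selected tool matches the expected one, and a chain counts as completed only if every step matches. The article does not spell out Liquid AI's exact scoring, and the tool names and records below are invented toy data.

```python
def single_step_accuracy(results):
    """results: list of (expected_tool, selected_tool) pairs."""
    correct = sum(1 for expected, selected in results if expected == selected)
    return correct / len(results)

def chain_completion_rate(chains):
    """chains: list of chains, each a list of (expected_tool, selected_tool) pairs.

    Assumed rule: a chain completes only if every step selects the expected tool.
    """
    completed = sum(
        1 for chain in chains
        if all(expected == selected for expected, selected in chain)
    )
    return completed / len(chains)

# Toy records with hypothetical tool names.
single_results = [
    ("run_ocr", "run_ocr"),
    ("list_files", "list_files"),
    ("parse_text", "search_files"),  # wrong tool selected
    ("export_pdf", "export_pdf"),
]
chains = [
    [("search_files", "search_files"), ("run_ocr", "run_ocr"), ("export_pdf", "export_pdf")],
    [("search_files", "search_files"), ("run_ocr", "parse_text"), ("export_pdf", "export_pdf")],
]

print(single_step_accuracy(single_results))
print(chain_completion_rate(chains))
```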

Latency

The model averaged ~385 ms per tool-selection response. This sub-second dispatch time suits interactive, human-in-the-loop applications where immediate feedback is essential.

Accuracy

- Single-Step Execution: 80% accuracy.

- Multi-Step Chain: 26% end-to-end completion rate.
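A back-of-the-envelope calculation shows how quickly per-step errors compound across a chain. The sketch assumes every step succeeds independently with the reported 80% single-step accuracy, which is a simplification introduced here rather than an analysis from Liquid AI; real chains have correlated errors and varying lengths.

```python
def chain_success_probability(per_step_accuracy, steps):
    """Probability that all steps succeed, assuming independent steps."""
    return per_step_accuracy ** steps

# Chain lengths from the article's workload: 3 to 6 tool executions.
for steps in range(3, 7):
    probability = chain_success_probability(0.80, steps)
    print(f"{steps} steps: {probability:.1%}")
# Prints:
# 3 steps: 51.2%
# 4 steps: 41.0%
# 5 steps: 32.8%
# 6 steps: 26.2%
```

Under this simplified model, end-to-end success falls from about half for three-step chains to roughly a quarter for six-step chains, so a large gap between single-step and multi-step results is what you would expect even without any extra failure modes.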

Key takeaways

- Privacy-First Local Execution: LocalCowork runs entirely on-device with no cloud API dependencies or data egress, making it well suited to regulated enterprise environments that require strict data privacy.

- Efficient MoE Architecture: The LFM2-24B-A2B uses a sparse mixture-of-experts (MoE) design, activating only ~2 billion of its 24 billion parameters per token, which allows it to fit within a ~14.5 GB RAM footprint using Q4_K_M GGUF quantization.

- Sub-Second Latency on Consumer Hardware: When benchmarked on an Apple M4 Max laptop, the model achieves an average latency of ~385 ms for tool-selection dispatch, enabling interactive, real-time workflows.

- Standardized MCP Tool Integration: The agent leverages the Model Context Protocol (MCP) to seamlessly connect to local tools – including file system operations, OCR, and security scanning – while automatically logging all actions to a local audit trail.

- Strong Single-Step Accuracy with Multi-Step Limits: The model achieves 80% accuracy on single-step tool execution but drops to a 26% success rate on multi-step chains due to the ‘similarity fallacy’ (selecting a similar but wrong tool), indicating that it currently works best in a guided, human-in-the-loop setup rather than as a fully autonomous agent.
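One way to picture the guided, human-in-the-loop mode is a dispatch loop that pauses for approval before each tool call. The sketch below is a hypothetical illustration, not LocalCowork's code: the function name, the tool names, and the approval rule are all invented, and `confirm` stands in for whatever prompt the user would actually see.

```python
def run_with_confirmation(proposed_calls, confirm, execute):
    """Execute each proposed tool call only after `confirm` approves it."""
    results = []
    for call in proposed_calls:
        if not confirm(call):
            results.append((call["name"], "rejected"))
            break  # stop here so a wrong pick does not propagate down the chain
        results.append((call["name"], execute(call)))
    return results

# Hypothetical plan proposed by the model.
plan = [
    {"name": "search_files", "arguments": {"query": "invoice"}},
    {"name": "delete_file", "arguments": {"path": "report.pdf"}},
    {"name": "export_pdf", "arguments": {}},
]

def approve_unless_destructive(call):
    """Stand-in for a user prompt: reject the one destructive tool."""
    return call["name"] != "delete_file"

def fake_execute(call):
    """Stand-in for a real tool invocation."""
    return "ok"

outcome = run_with_confirmation(plan, approve_unless_destructive, fake_execute)
print(outcome)
```

Stopping at the first rejected step keeps a single wrong selection from feeding bad input into the rest of the chain.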
