This post was co-written with Abdullahi Olaoye, Curtis Lockhart, and Nirmal Kumar Juluru of NVIDIA.

We are excited to announce that NVIDIA’s Nemotron 3 Nano is now available in Amazon Bedrock as a fully managed, serverless model. This follows our earlier announcement at AWS re:Invent of support for the NVIDIA Nemotron 2 Nano 9B and NVIDIA Nemotron 2 Nano VL 12B models.

With NVIDIA Nemotron open models on Amazon Bedrock, you can accelerate innovation and deliver tangible business value without having to manage infrastructure complexities. You can power your generative AI applications with Nemotron models through Amazon Bedrock’s inference capabilities and take advantage of its extensive features and tooling.

This post explores the technical features of the NVIDIA Nemotron 3 Nano model and discusses potential application use cases. It also provides technical guidance to help you get started using this model for your generative AI applications within the Amazon Bedrock environment.

About Nemotron 3 Nano

NVIDIA Nemotron 3 Nano is a small language model (SLM) with a hybrid mixture-of-experts (MoE) architecture that provides high compute efficiency and accuracy, which developers can use to build specialized agentic AI systems. The model is fully open, with open weights, datasets, and recipes that give developers and enterprises transparency and confidence. Compared to other similarly sized models, Nemotron 3 Nano excels at coding and reasoning tasks, taking the lead on benchmarks such as SWE Bench Verified, AIME 2025, Arena Hard v2, and IFBench.

Model overview:

- Architecture:

  - Mixture-of-experts (MoE) with a hybrid Transformer-Mamba architecture

  - Supports a token budget to provide accuracy while avoiding overthinking

- Accuracy:

  - Leading accuracy on coding, scientific reasoning, math, tool calling, instruction following, and chat

  - Nemotron 3 Nano leads on benchmarks such as SWE Bench, AIME 2025, Humanity’s Last Exam, IFBench, RULER, and Arena Hard (compared to other open MoE language models with 30 billion parameters or fewer)

- Model size: 30B with 3B active parameters

- Context length: 256K

- Model input: text

- Model output: text

Nemotron 3 Nano combines Mamba, Transformer, and mixture-of-experts layers into a single backbone to help balance efficiency, reasoning accuracy, and scale. Mamba enables long-range sequence modeling with low memory overhead, while Transformer layers help add precision for structured reasoning tasks like code, math, and planning. MoE routing increases scalability by activating only a subset of experts per token, helping to improve latency and throughput. This makes Nemotron 3 Nano particularly suitable for agent clusters running many concurrent, lightweight workflows.
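To make "activating only a subset of experts per token" concrete, here is a minimal, illustrative sketch of top-k MoE routing in plain Python. It is not Nemotron's actual router: the choice of `top_k=2`, the four toy experts, and the router scores are all assumptions made up for the example.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, top_k=2):
    """Pick the top_k experts for one token and renormalize their weights."""
    probs = softmax(router_scores)
    # Indices of the top_k most probable experts, best first.
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    kept = sum(probs[i] for i in chosen)
    return {i: probs[i] / kept for i in chosen}

def moe_layer(token, experts, router_scores, top_k=2):
    """Weighted sum of the selected experts' outputs; other experts never run."""
    weights = route_token(router_scores, top_k)
    return sum(w * experts[i](token) for i, w in weights.items())

# Four toy experts, each a simple scalar function (hypothetical stand-ins).
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x * x]
scores = [0.1, 2.0, -1.0, 2.0]  # made-up router scores for one token

selected = route_token(scores, top_k=2)
output = moe_layer(3.0, experts, scores, top_k=2)
```

Only two of the four experts are evaluated for this token, which is the source of the compute savings: total parameters can grow while the per-token cost tracks only the active experts.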

To learn more about Nemotron 3 Nano’s architecture and how it is trained, see Inside NVIDIA Nemotron 3: The technologies, tools, and data that make it efficient and accurate.

Model benchmarks

The following image shows Nemotron 3 Nano in the most attractive quadrant of the Artificial Analysis Openness Index vs. Intelligence Index. Openness matters because it builds trust through transparency. Developers and enterprises can confidently build on Nemotron with clear visibility into models, data pipelines, and data attributes, enabling straightforward auditing and governance.

Figure: Chart showing Nemotron 3 Nano in the most attractive quadrant of the Artificial Analysis Openness Index vs. Intelligence Index (Source: Artificial Analysis)

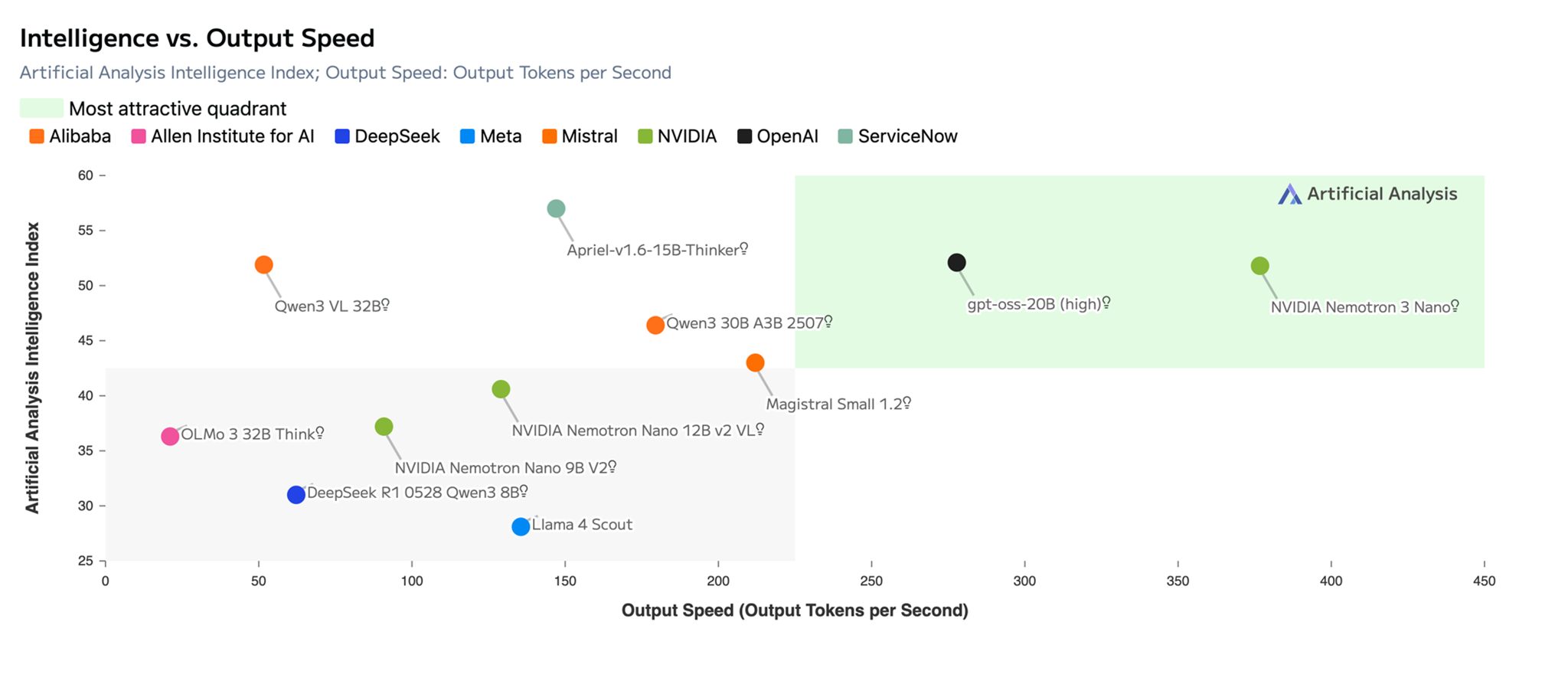

As shown in the following image, Nemotron 3 Nano delivers leading accuracy with the highest efficiency among open models and scores 52 points, a significant leap over the previous Nemotron 2 Nano model. Demand for tokens is increasing because of agentic AI, so the ability to ‘think fast’ (arriving at the right answer quickly while using fewer tokens) is important. Nemotron 3 Nano delivers high throughput with its efficient hybrid Transformer-Mamba and MoE architecture.

Figure: NVIDIA Nemotron 3 Nano delivers the highest efficiency with leading accuracy among open models, with a score of 52 points on the Artificial Analysis Intelligence vs. Output Speed Index (Source: Artificial Analysis)

NVIDIA Nemotron 3 Nano Use Cases

Nemotron 3 Nano helps power a variety of use cases across industries. Some use cases include:

- Finance – Accelerate loan processing by extracting data, analyzing income patterns, and detecting fraudulent operations, reducing cycle time and risk.

- Cybersecurity – Automatically test vulnerabilities, perform in-depth malware analysis, and proactively hunt for security threats.

- Software development – Assist with tasks such as code summarization.

- Retail – Optimize inventory management and help enhance in-store service with real-time, personalized product recommendations and support.

Get started with NVIDIA Nemotron 3 Nano in Amazon Bedrock

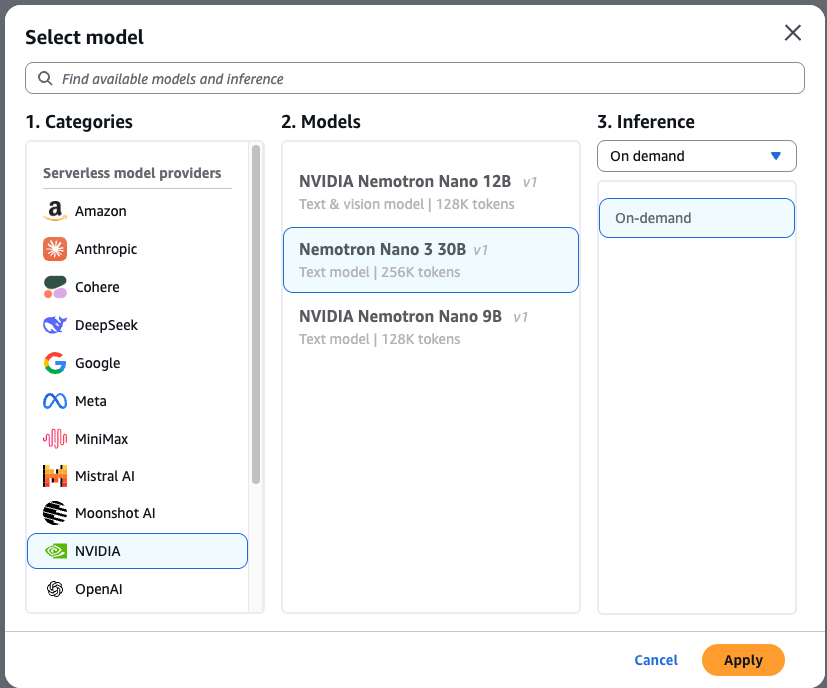

To test NVIDIA Nemotron 3 Nano in Amazon Bedrock, complete the following steps:

- Navigate to the Amazon Bedrock console and choose Chat/Text playground from the left menu (under the Test section).

- Choose Select model in the upper-left corner of the playground.

- Choose NVIDIA from the category list, then select NVIDIA Nemotron 3 Nano.

- Choose Apply to load the model.

After selecting the model, you can test it immediately. Let’s use the following prompt to generate unit tests in Python using the pytest framework:

Write a pytest unit test suite for a Python function called calculate_mortgage(principal, rate, years). Include test cases for: 1) A standard 30-year fixed loan 2) An edge case with 0% interest 3) Error handling for negative input values.

Complex tasks like this prompt can benefit from a chain-of-thought approach, which helps produce accurate results by drawing on the reasoning capabilities built natively into the model.
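If you want something to run the generated tests against, the following is one hypothetical implementation of the function named in the prompt. The prompt does not define the semantics, so this sketch assumes `rate` is an annual percentage, payments are monthly, and invalid inputs raise `ValueError`; the model's generated tests may assume something different.

```python
def calculate_mortgage(principal, rate, years):
    """Return the fixed monthly payment for a fully amortizing loan.

    Assumed semantics (not specified in the prompt): `rate` is the annual
    interest rate in percent, and payments are made monthly.
    """
    if principal < 0 or rate < 0 or years <= 0:
        raise ValueError("principal and rate must be non-negative, years positive")
    months = years * 12
    if rate == 0:
        # No interest: the principal is split evenly across all payments.
        return principal / months
    monthly_rate = rate / 100 / 12
    growth = (1 + monthly_rate) ** months
    # Standard amortization formula: P * r * (1 + r)^n / ((1 + r)^n - 1)
    return principal * monthly_rate * growth / (growth - 1)
```

A generated suite would typically cover the three cases from the prompt, for example with `pytest.approx` for the 30-year payment and `pytest.raises(ValueError)` for negative inputs.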

Using the AWS CLI and SDKs

You can access the model programmatically using the model ID nvidia.nemotron-nano-3-30b. The model supports both the InvokeModel and Converse APIs through the AWS Command Line Interface (AWS CLI) and AWS SDKs. It also supports the Amazon Bedrock OpenAI SDK-compatible APIs.

Run the following command to invoke the model directly from your terminal using the AWS CLI and the InvokeModel API:
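The same InvokeModel call can also be sketched from Python with boto3, as shown next. The request body here is an assumption: it uses a chat-style `messages` schema with `max_tokens` and `temperature`, which this post does not confirm for Nemotron 3 Nano, so check the model's documented native format before relying on it. The helper names and the default Region are illustrative.

```python
import json

MODEL_ID = "nvidia.nemotron-nano-3-30b"  # model ID given in this post

def build_invoke_body(prompt, max_tokens=512, temperature=0.5):
    """Serialize the request body.

    ASSUMPTION: the model accepts a chat-style `messages` payload.
    """
    return json.dumps({
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": temperature,
    })

def invoke_nemotron(prompt, region="us-east-1"):
    """Call InvokeModel and return the parsed JSON response."""
    import boto3  # imported lazily so build_invoke_body works without AWS setup

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=build_invoke_body(prompt),
        contentType="application/json",
        accept="application/json",
    )
    # The response body is a streaming object; read and decode it as JSON.
    return json.loads(response["body"].read())
```

With AWS credentials configured, `invoke_nemotron("Summarize what an escrow account is.")` would return the raw response dictionary, whose exact shape depends on the model's native schema.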

To invoke the model through the AWS SDK for Python (Boto3), use the following script to send a prompt to the model, in this case using the Converse API:
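A minimal sketch of such a script follows. It uses the standard boto3 `converse` call on the `bedrock-runtime` client; the helper names, inference settings, and default Region are assumptions chosen for illustration, not values from the original post.

```python
MODEL_ID = "nvidia.nemotron-nano-3-30b"  # model ID given in this post

def build_converse_request(prompt, max_tokens=1024, temperature=0.2):
    """Build keyword arguments for the Converse API (settings are illustrative)."""
    return {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }

def extract_text(response):
    """Join the text blocks of a Converse response, skipping non-text blocks
    such as reasoning content."""
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)

def converse_with_nemotron(prompt, region="us-east-1"):
    """Send one user prompt through the Converse API and return the reply text."""
    import boto3  # imported lazily so the helpers above work without AWS setup

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(prompt))
    return extract_text(response)
```

Because Converse uses the same message format across models, switching to another Amazon Bedrock model would only require changing `MODEL_ID`.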

To invoke the model through the Amazon Bedrock OpenAI-compatible ChatCompletions endpoint, you can use the OpenAI SDK:
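The following sketch shows roughly what that looks like. Two things are assumptions rather than details from this post: the endpoint URL pattern (`https://bedrock-runtime.<region>.amazonaws.com/openai/v1`) and the use of an Amazon Bedrock API key read from the `AWS_BEARER_TOKEN_BEDROCK` environment variable. Confirm both against the Amazon Bedrock documentation for your Region.

```python
import os

MODEL_ID = "nvidia.nemotron-nano-3-30b"  # model ID given in this post

def bedrock_openai_base_url(region):
    """ASSUMPTION: the OpenAI-compatible endpoint follows this URL pattern."""
    return f"https://bedrock-runtime.{region}.amazonaws.com/openai/v1"

def chat_with_nemotron(prompt, region="us-east-1"):
    """Send one user prompt through the ChatCompletions endpoint."""
    from openai import OpenAI  # lazy import; requires `pip install openai`

    client = OpenAI(
        base_url=bedrock_openai_base_url(region),
        # ASSUMPTION: an Amazon Bedrock API key is stored in this variable.
        api_key=os.environ["AWS_BEARER_TOKEN_BEDROCK"],
    )
    completion = client.chat.completions.create(
        model=MODEL_ID,
        messages=[{"role": "user", "content": prompt}],
    )
    return completion.choices[0].message.content
```

This route is mainly useful when an application is already written against the OpenAI SDK, because only the base URL, key, and model name change.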

Use NVIDIA Nemotron 3 Nano with Amazon Bedrock features

You can enhance your generative AI applications by pairing Nemotron 3 Nano with Amazon Bedrock managed tools. Use Amazon Bedrock Guardrails to implement safeguards and Amazon Bedrock Knowledge Bases to create robust Retrieval Augmented Generation (RAG) workflows.

Amazon Bedrock Guardrails

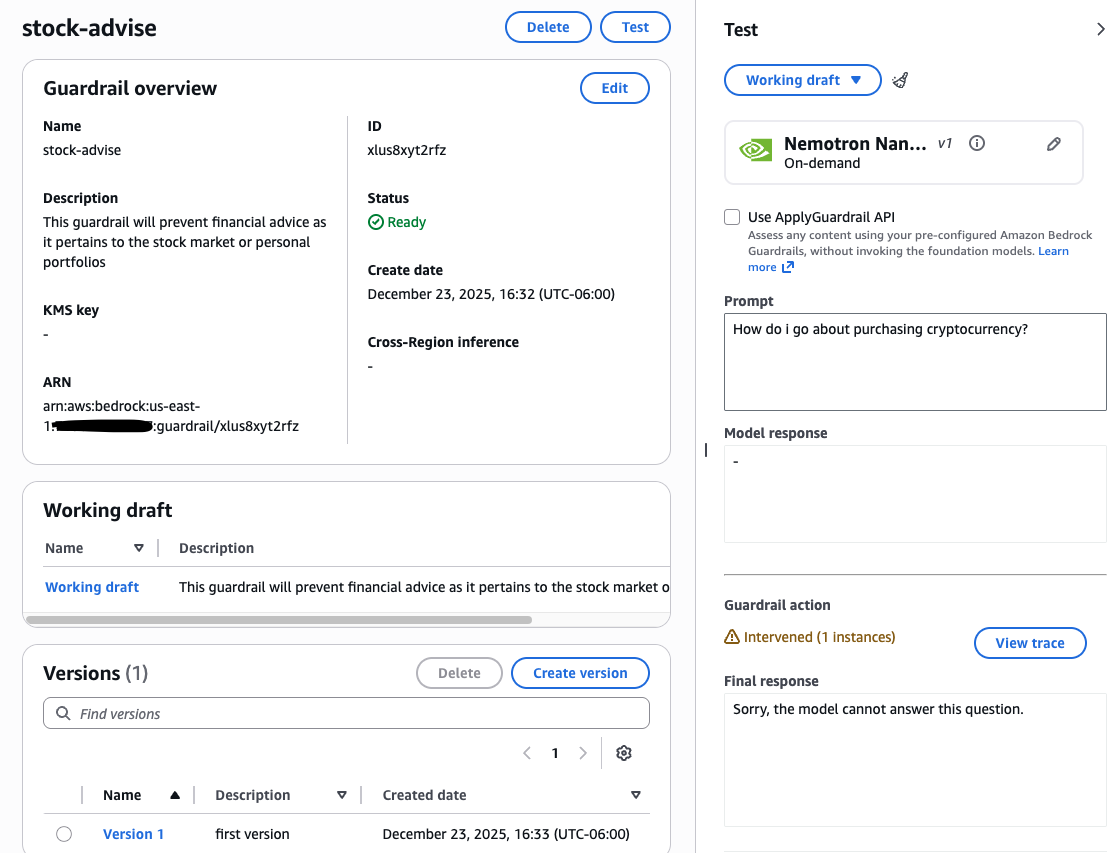

Guardrails is a managed safety layer that helps enforce responsible AI by filtering harmful content, redacting sensitive information (PII), and blocking specific topics in prompts and responses. It works across multiple models to help detect prompt injection attacks and hallucinations.

Example use case: If you are building a mortgage assistant, Guardrails can help prevent it from giving general investment advice. By configuring a filter for the word “stock”, user prompts containing that word can be blocked immediately and answered with a custom message.

To set up a guardrail, complete the following steps:

- In the Amazon Bedrock console, navigate to the Build section on the left and choose Guardrails.

- Create a new guardrail and configure the necessary filters for your use case.

After configuring the guardrail, test it with different prompts to verify its performance. You can then fine-tune settings such as denied topics, word filters, and PII redaction to match your specific safety needs. For a more in-depth look, see Create your guardrail.
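The console steps above can also be approximated in code. The following sketch uses the boto3 `create_guardrail` call on the `bedrock` control-plane client to build the "stock" word filter from the example use case. The guardrail name, description, blocked message, and helper names are hypothetical.

```python
def build_guardrail_request(name, blocked_words, message):
    """Build keyword arguments for create_guardrail with a simple word filter."""
    return {
        "name": name,
        "description": "Blocks general investment questions (illustrative)",
        "wordPolicyConfig": {
            "wordsConfig": [{"text": word} for word in blocked_words],
        },
        # Custom messages returned when a prompt or a response is blocked.
        "blockedInputMessaging": message,
        "blockedOutputsMessaging": message,
    }

def create_guardrail(request, region="us-east-1"):
    """Create the guardrail and return its ID and version."""
    import boto3  # imported lazily so the builder above works without AWS setup

    client = boto3.client("bedrock", region_name=region)
    response = client.create_guardrail(**request)
    return response["guardrailId"], response["version"]

request = build_guardrail_request(
    name="mortgage-assistant-guardrail",  # hypothetical name
    blocked_words=["stock"],
    message="Sorry, I can only help with mortgage questions.",
)
```

Calling `create_guardrail(request)` with suitable permissions would return the new guardrail's ID and its working draft version, which you can then reference from inference or knowledge base calls.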

Amazon Bedrock Knowledge Bases

Amazon Bedrock Knowledge Bases automates the complete RAG workflow. It handles ingesting content from your data sources, chunking it into searchable segments, converting the chunks into vector embeddings, and storing them in a vector database. Then, when a user submits a query, the system matches the input against the stored vectors to find semantically similar content, which is used to augment the prompt sent to the foundation model.
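To illustrate the matching step, here is a toy sketch of similarity-based retrieval in plain Python. It is not how Knowledge Bases is implemented: the three-dimensional vectors stand in for real embeddings, the chunk texts are made up, and cosine similarity is assumed as the distance measure.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve(query_vector, store, top_k=2):
    """Return the texts of the top_k chunks most similar to the query."""
    ranked = sorted(
        store,
        key=lambda item: cosine_similarity(query_vector, item["vector"]),
        reverse=True,
    )
    return [item["text"] for item in ranked[:top_k]]

# Made-up chunks with made-up embeddings standing in for a vector database.
store = [
    {"text": "Fixed-rate mortgages keep the same interest rate for the whole term.",
     "vector": [0.9, 0.1, 0.0]},
    {"text": "A home inspection checks the property's condition before closing.",
     "vector": [0.1, 0.9, 0.1]},
    {"text": "Adjustable-rate mortgages can change their rate after an initial period.",
     "vector": [0.8, 0.2, 0.1]},
]

query_vector = [1.0, 0.0, 0.0]  # pretend embedding of "How do mortgage rates work?"
context = retrieve(query_vector, store)
prompt = "Answer using only this context:\n" + "\n".join(context)
```

The two mortgage-rate chunks rank above the home inspection chunk, so only they are added to the prompt that would be sent to the foundation model.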

For this example, we uploaded PDFs (for example, Buying a New House, Home Loan Toolkit, and Shopping for a Mortgage) to Amazon Simple Storage Service (Amazon S3) and chose Amazon OpenSearch Serverless as the vector store. The following code demonstrates how to query this knowledge base using the RetrieveAndGenerate API, while automatically enforcing safety compliance through a specific guardrail ID.
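A sketch of such a query is shown below, using the boto3 `retrieve_and_generate` call on the `bedrock-agent-runtime` client. The knowledge base ID, guardrail ID and version, prompt template wording, and helper names are placeholders, and the model ARN is assembled from the model ID on the assumption that it follows the usual foundation-model ARN pattern.

```python
MODEL_ID = "nvidia.nemotron-nano-3-30b"  # model ID given in this post

# Illustrative template; $search_results$ is replaced with retrieved chunks.
PROMPT_TEMPLATE = (
    "You are a mortgage assistant. Answer clearly using only the search "
    "results below.\n\n$search_results$"
)

def build_rag_request(query, kb_id, guardrail_id, guardrail_version,
                      region="us-east-1"):
    """Build keyword arguments for RetrieveAndGenerate with a guardrail attached."""
    # ASSUMPTION: the model is addressed with the standard foundation-model ARN.
    model_arn = f"arn:aws:bedrock:{region}::foundation-model/{MODEL_ID}"
    return {
        "input": {"text": query},
        "retrieveAndGenerateConfiguration": {
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": kb_id,
                "modelArn": model_arn,
                "generationConfiguration": {
                    "promptTemplate": {"textPromptTemplate": PROMPT_TEMPLATE},
                    "guardrailConfiguration": {
                        "guardrailId": guardrail_id,
                        "guardrailVersion": guardrail_version,
                    },
                },
            },
        },
    }

def ask_knowledge_base(request, region="us-east-1"):
    """Run the query and return the generated answer text."""
    import boto3  # imported lazily so the builder above works without AWS setup

    client = boto3.client("bedrock-agent-runtime", region_name=region)
    response = client.retrieve_and_generate(**request)
    return response["output"]["text"]
```

For example, `build_rag_request("What documents do I need for a home loan?", "YOUR_KB_ID", "YOUR_GUARDRAIL_ID", "1")` produces a request that `ask_knowledge_base` can send once real IDs are substituted.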

This instructs the NVIDIA Nemotron 3 Nano model to synthesize the retrieved documents into a clear, grounded answer using your custom prompt template. To set up your own pipeline, review the complete walkthrough in the Amazon Bedrock User Guide.

Conclusion

In this post, we showed you how to get started with NVIDIA Nemotron 3 Nano on Amazon Bedrock for fully managed, serverless inference. We also showed you how to use the model with Amazon Bedrock Knowledge Bases and Amazon Bedrock Guardrails. The model is now available in the US East (N. Virginia), US East (Ohio), US West (Oregon), Asia Pacific (Tokyo), Asia Pacific (Mumbai), South America (São Paulo), Europe (London), and Europe (Milan) AWS Regions. Check the complete Region list for future updates. To learn more, see NVIDIA Nemotron, and try NVIDIA Nemotron 3 Nano in the Amazon Bedrock console today.

About the authors