How to Create Supplier Analytics with Salesforce SAP Integration on Databricks

Supplier data touches almost every part of an organization, from purchasing and supply chain management to finance and analytics. Yet it is often spread across systems that do not communicate with each other. For example, Salesforce maintains vendor profiles, contacts, and account details, while SAP S/4HANA manages invoicing, payments, and general ledger entries. Because these systems operate independently, teams lack a comprehensive view of supplier relationships. The result is slow reconciliation, duplicate records, and missed opportunities to optimize spend.

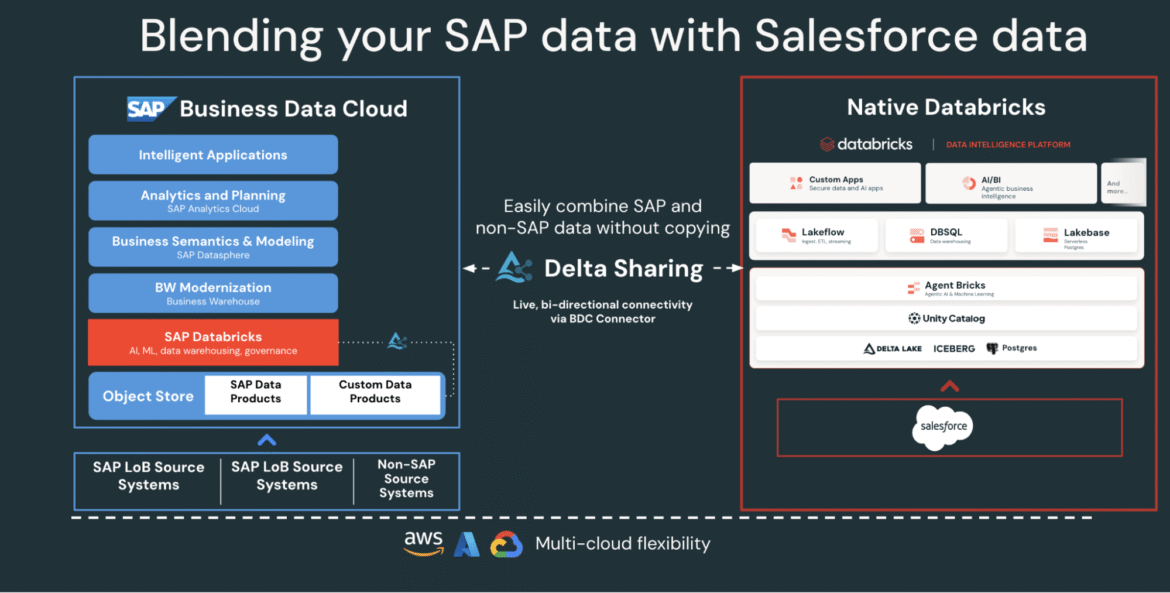

Databricks solves this by bringing both systems onto a single governed data and AI platform. Using Lakeflow Connect for Salesforce for data ingestion and SAP Business Data Cloud (BDC) Connect, teams can integrate CRM and ERP data without duplication. The result is a single, trusted view of vendors, payments, and performance metrics that supports procurement and finance use cases as well as analytics.

In this how-to, you’ll learn how to connect both data sources, build a blended pipeline, and create a gold layer that powers analytics and conversational insights through AI/BI dashboards and Genie.

Why Zero-Copy SAP Salesforce Data Integration Works

Most enterprises attempt to connect SAP and Salesforce through traditional ETL or third-party tools. These methods create multiple data copies, introduce latency and make governance difficult. Databricks takes a different approach.

- Zero-Copy SAP Access: The SAP BDC Connector for Databricks gives you governed, real-time access to SAP S/4HANA data products through Delta Sharing. No exports or duplication.

Image: Native Databricks to SAP BDC Connector (bi-directional)

- Fast Incremental Salesforce Ingestion: Lakeflow Connect continuously ingests Salesforce data, keeping your datasets fresh and consistent.

- Integrated Governance: Unity Catalog applies permissions, lineage, and auditing across both SAP and Salesforce sources.

- Declarative Pipelines: Lakeflow Spark Declarative Pipelines simplify ETL design and orchestration, with automatic optimization for better performance.

Together, these capabilities enable data engineers to blend SAP and Salesforce data on one platform, reducing complexity while maintaining enterprise-grade governance.

SAP Salesforce Data Integration Architecture on Databricks

Before creating a pipeline, it is useful to understand how these components fit together in Databricks.

At a high level, SAP S/4HANA publishes business data as curated, business-ready SAP-managed data products in the SAP Business Data Cloud (BDC). SAP BDC Connect for Databricks enables secure, zero-copy access to those data products using Delta Sharing. Meanwhile, Lakeflow Connect handles Salesforce ingestion – capturing accounts, contacts, and opportunity data through incremental pipelines.

All incoming data, whether from SAP or Salesforce, is governed in Unity Catalog for lineage, permissions, and auditing. Data engineers then use Lakeflow Spark Declarative Pipelines to combine these datasets and transform them through a medallion architecture (bronze, silver, and gold layers). Finally, the gold layer serves as the foundation for analysis and exploration in AI/BI dashboards and Genie.

This architecture ensures that data from both systems remains synchronized, governed, and ready for analytics and AI, without the overhead of replication or external ETL tools.

How to Create Integrated Supplier Analytics

The following steps outline how to connect, blend, and analyze SAP and Salesforce data on Databricks.

Step 1: Ingesting Salesforce Data with Lakeflow Connect

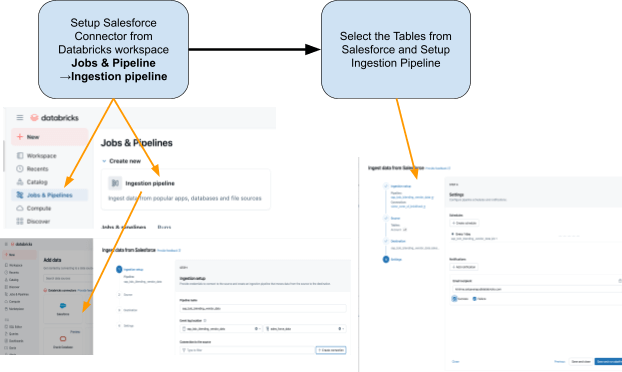

Use Lakeflow Connect to bring Salesforce data into Databricks. You can configure pipelines through the UI or the API. These pipelines automatically manage incremental updates, ensuring that data remains current without manual refreshes.

The connector is fully integrated with Unity Catalog governance, Lakeflow Spark Declarative Pipelines for ETL, and Lakeflow Jobs for orchestration.

These are the tables we will ingest from Salesforce:

- Account: Vendor/Supplier Details (fields include: Account ID, Name, Industry, Type, Billing Address)

- Contact: Vendor Contacts (fields include: Contact ID, Account ID, First Name, Last Name, Email)
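To make "incremental updates" concrete, here is a conceptual sketch in plain Python of watermark-based upserts: only rows modified since the last sync are applied, keyed by record ID. It is not Lakeflow Connect's implementation or API. The `modified` field, the `apply_incremental` helper, and the sample Account rows are assumptions for illustration.

```python
from datetime import datetime


def apply_incremental(target, source_rows, watermark):
    """Upsert rows changed after `watermark` into `target` (a dict keyed by Id).

    Returns the new watermark: the latest modification time that was applied.
    """
    new_watermark = watermark
    for row in source_rows:
        if row["modified"] > watermark:
            target[row["Id"]] = row  # insert a new row or overwrite the old one
            new_watermark = max(new_watermark, row["modified"])
    return new_watermark


# Hypothetical Account rows; a real sync would read these from Salesforce.
accounts = {}
batch_1 = [
    {"Id": "001A", "Name": "Acme Supply", "modified": datetime(2024, 1, 1)},
    {"Id": "001B", "Name": "Globex", "modified": datetime(2024, 1, 2)},
]
wm = apply_incremental(accounts, batch_1, datetime.min)

# Second sync: 001A is unchanged and skipped, 001B was renamed and is updated.
batch_2 = batch_1[:1] + [
    {"Id": "001B", "Name": "Globex GmbH", "modified": datetime(2024, 1, 5)},
]
wm = apply_incremental(accounts, batch_2, wm)
```

The point of the sketch is the cost model: each sync touches only the changed rows, which is why incremental pipelines stay current without full reloads.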

Step 2: Access SAP S/4HANA data with SAP BDC Connector

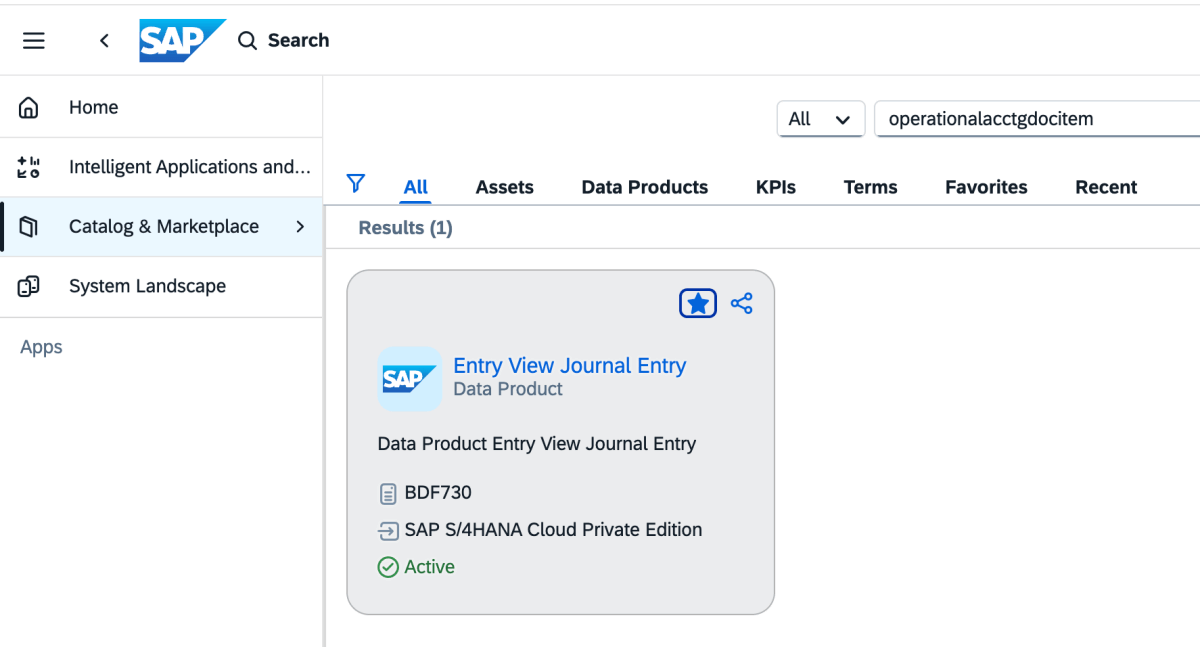

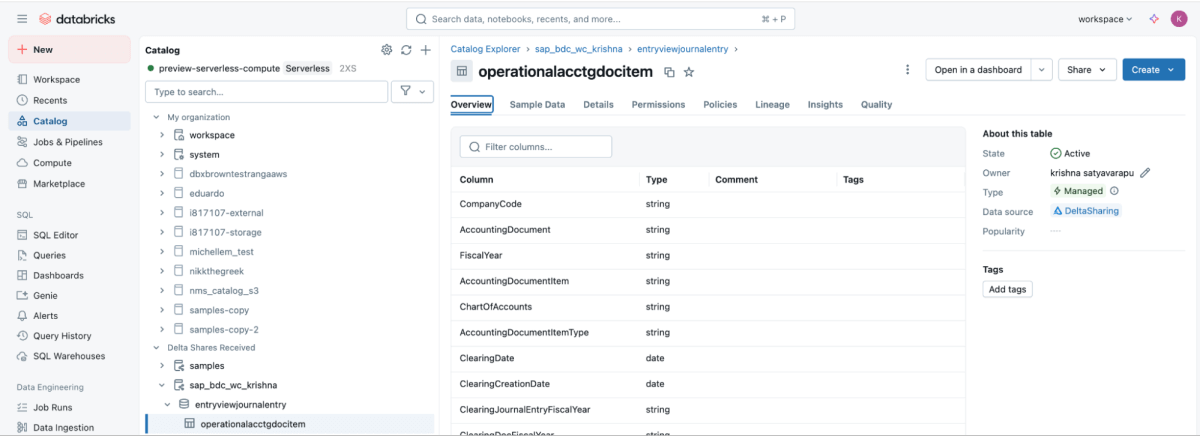

SAP BDC Connect provides live, governed access to SAP S/4HANA vendor payment data directly in Databricks, eliminating traditional ETL by using the SAP BDC data product sap_bdc_working_capital.entryviewjournalentry.operationalacctgdocitem, the Universal Journal line-item view.

This BDC data product maps directly to the SAP S/4HANA CDS view I_JournalEntryItem (Operational Accounting Document Item) on ACDOCA.

For ECC context, the closest physical structures were BSEG (FI line items) with headers in BKPF, CO postings in COEP, and the open/cleared indexes BSIK/BSAK (vendors) and BSID/BSAD (customers). In SAP S/4HANA, these BS** objects are part of a simplified data model in which vendor and G/L line items are centralized in the Universal Journal (ACDOCA), replacing the ECC approach that often required joining multiple separate finance tables.

Perform the following steps in the SAP BDC cockpit.

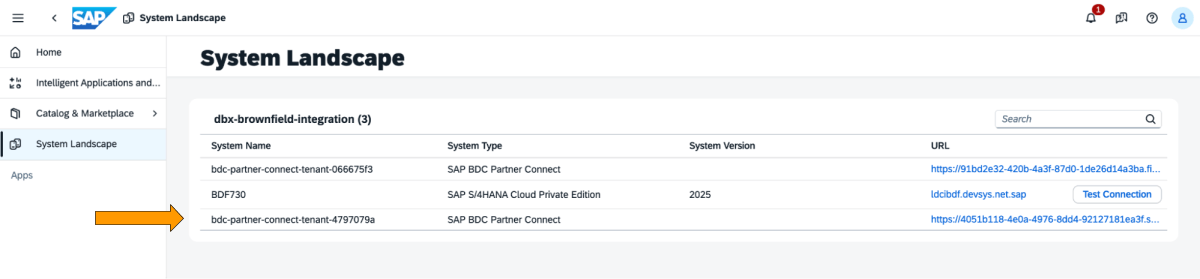

1: Log in to the SAP BDC cockpit and check the SAP BDC formation in the System Landscape. Connect to native Databricks through the SAP BDC Delta Sharing connector. Native Databricks must already be connected to SAP BDC so that it is part of the formation.

2: Go to Catalog and find the data product Entry View Journal Entry, as shown below.

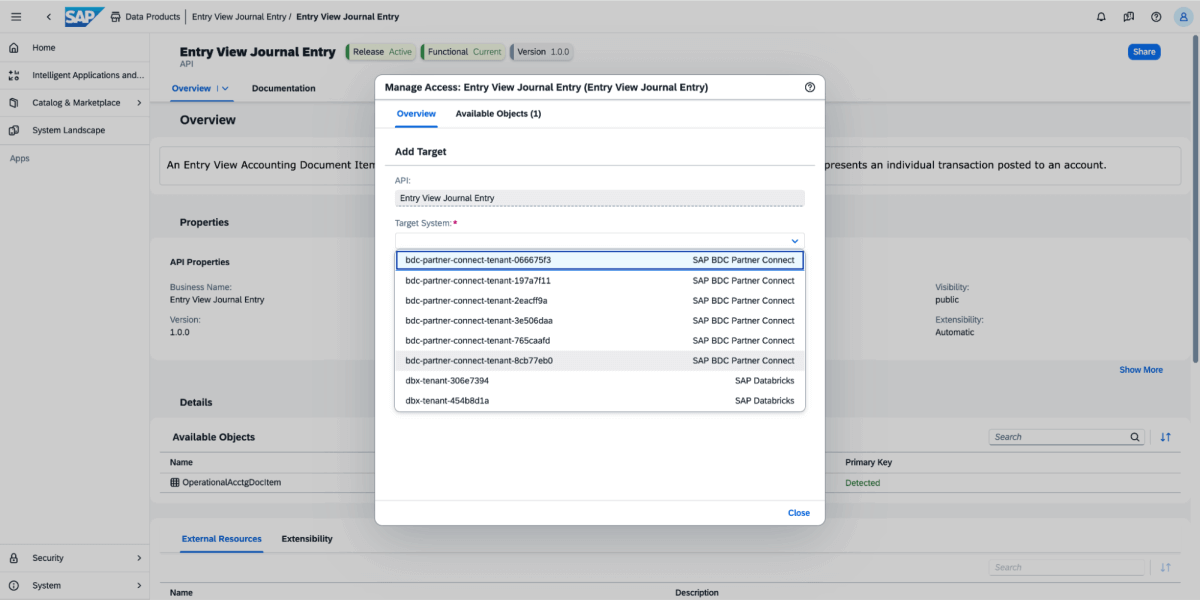

3: On the data product, select Share, and then select the target system, as shown in the image below.

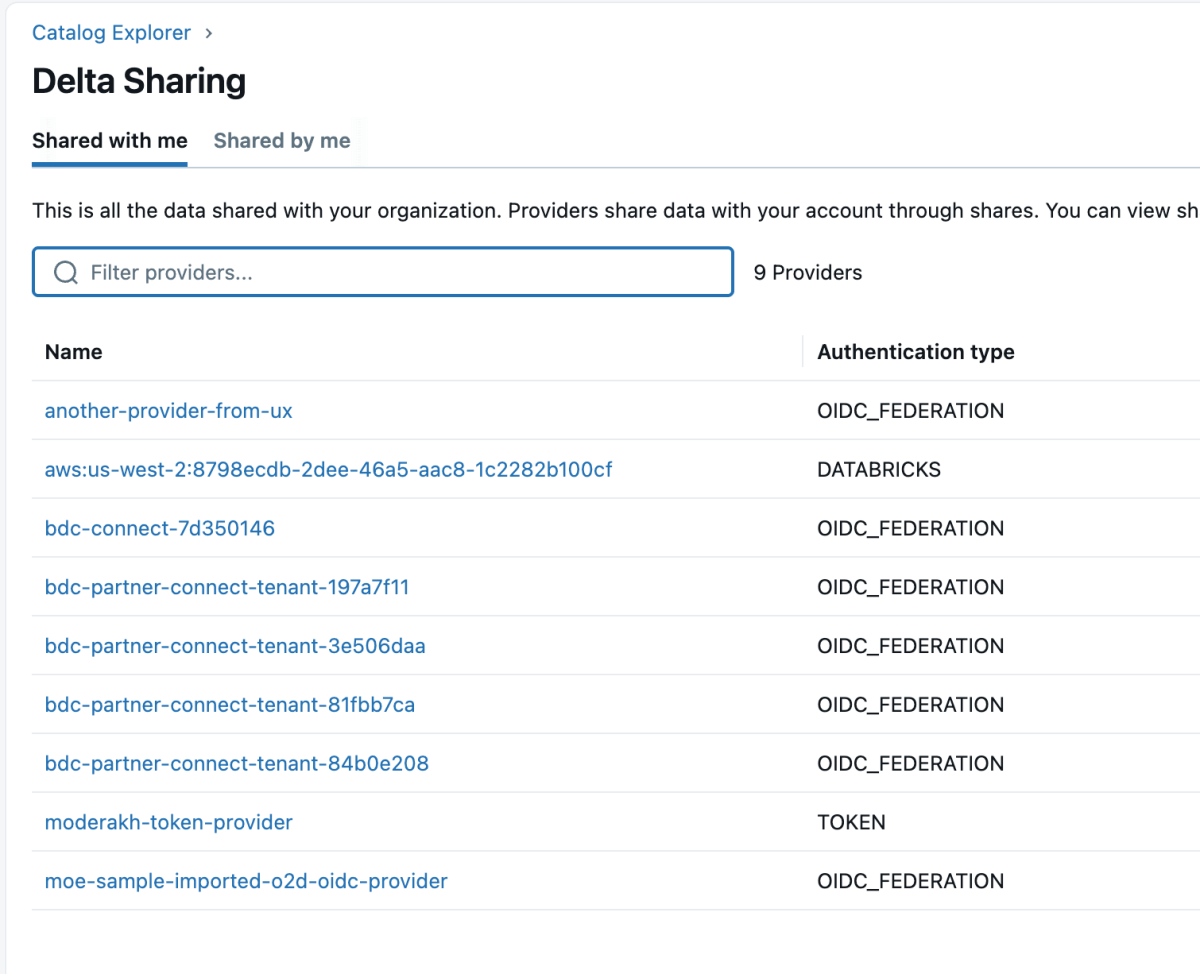

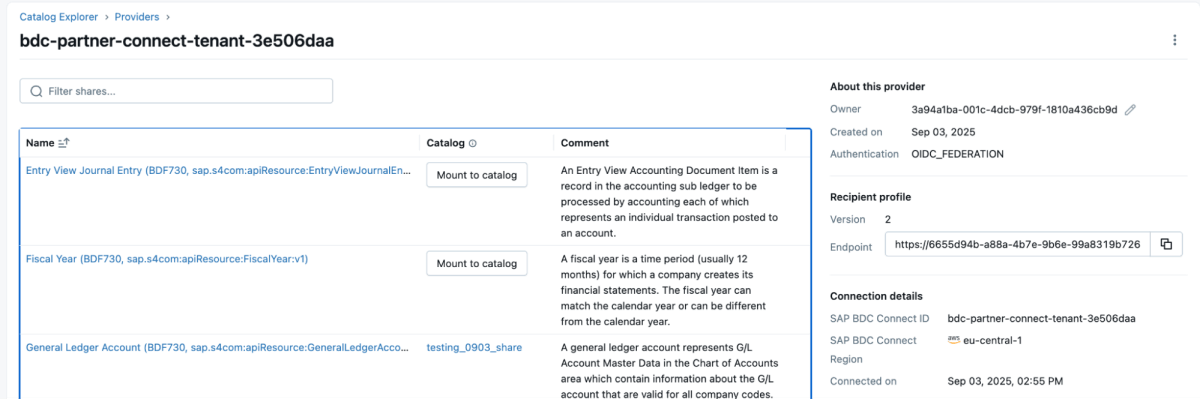

4: Once the data product is shared, it appears as a Delta Share in the Databricks workspace, as shown below. Make sure you have “Use Provider” access to view these providers.

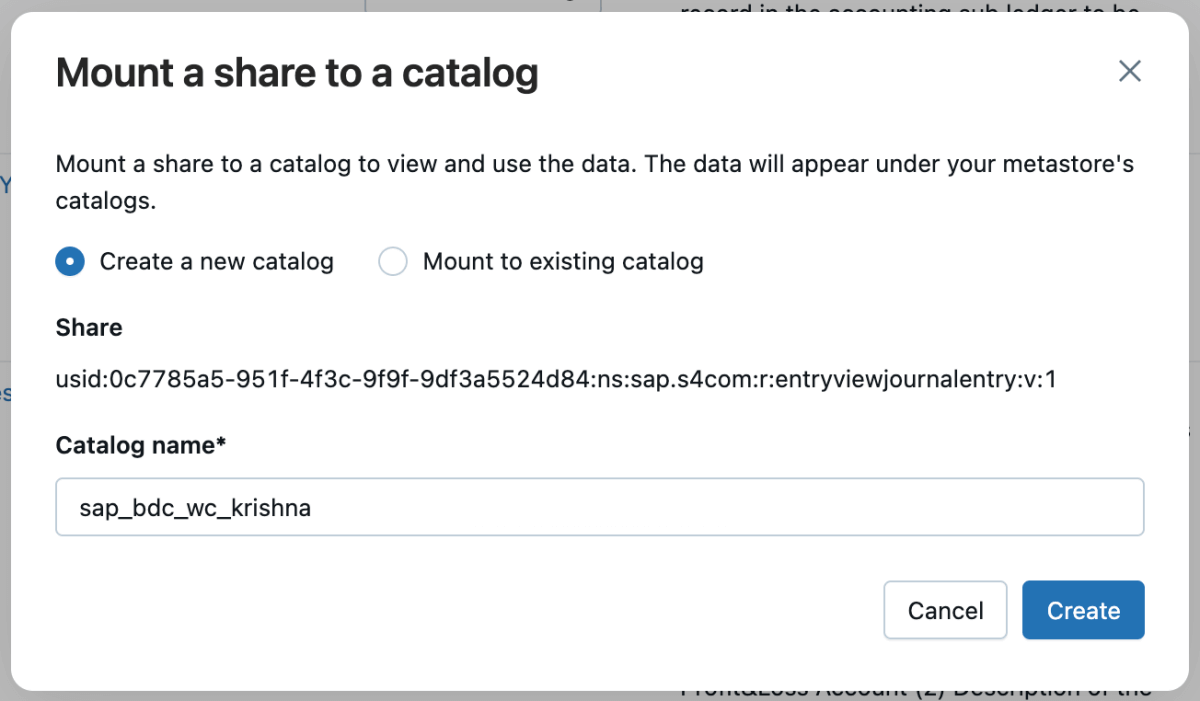

5: You can then mount the share to a catalog, either by creating a new catalog or by mounting it to an existing one.

6: Once the share is mounted, it will appear in the catalog.

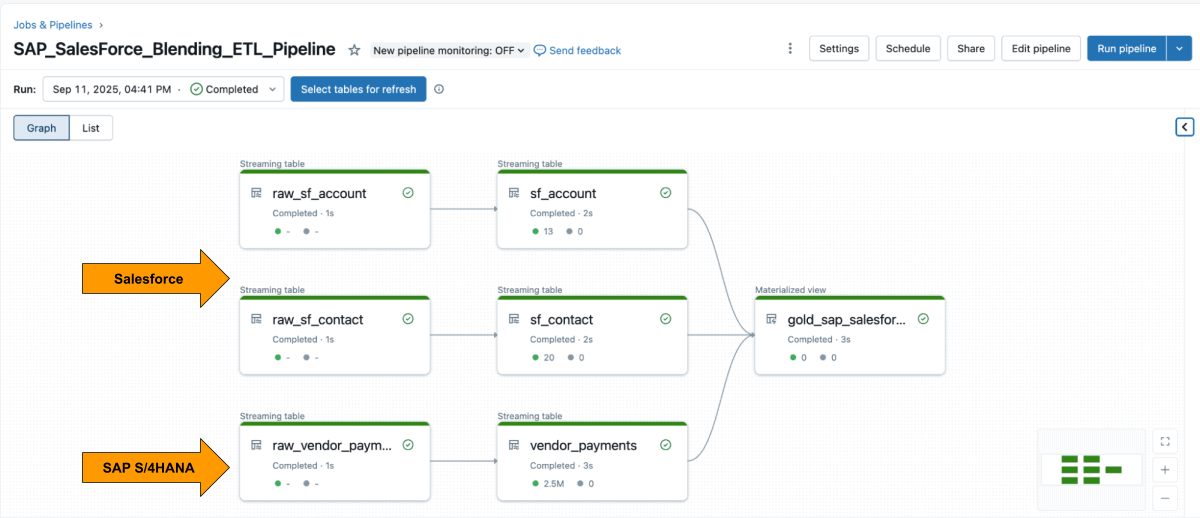
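The mounting described in steps 5 and 6 can also be done in SQL instead of the UI. The sketch below covers only the "create a new catalog" path and simply builds the `CREATE CATALOG ... USING SHARE` statement as a string. The helper name, the provider name `sap_bdc_provider`, and the share name `working_capital_share` are hypothetical; the catalog name is taken from the data product path above.

```python
def create_catalog_from_share_sql(catalog: str, provider: str, share: str) -> str:
    """Build the SQL that mounts a Delta Sharing share as a new catalog."""
    # Keep the sketch safe: accept only simple identifiers (letters, digits, "_").
    for name in (catalog, provider, share):
        if not name.replace("_", "").isalnum():
            raise ValueError(f"unexpected identifier: {name!r}")
    return f"CREATE CATALOG IF NOT EXISTS {catalog} USING SHARE {provider}.{share}"


# Hypothetical provider and share names; use the ones listed in your workspace.
stmt = create_catalog_from_share_sql(
    "sap_bdc_working_capital", "sap_bdc_provider", "working_capital_share"
)
print(stmt)
```

In a Databricks notebook, a statement like this would typically be run with `spark.sql(stmt)` by a user who holds the required privileges.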

Step 3: Blending Data in ETL Pipelines on Databricks Using Lakeflow Declarative Pipelines

When both sources are available, use Lakeflow Spark Declarative Pipelines to create ETL pipelines with Salesforce and SAP data.

The Salesforce Account table usually includes the field SAP_ExternalVendorId__c, which matches the vendor ID in SAP. This becomes the primary join key for your silver layer.

Lakeflow Spark Declarative Pipelines let you define transformation logic in SQL while Databricks automatically handles optimizations and orchestrates the pipelines.

Example: Create curated business-level tables

The query for this step creates a curated, business-level materialized view that integrates vendor payment records from SAP with vendor details from Salesforce, ready for analysis and reporting.
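A minimal sketch of such a query is shown here, using Python's built-in `sqlite3` so that the join logic runs anywhere. All table names, the SAP column names (`Supplier`, `AmountInCompanyCodeCurrency`, `ClearingDate`, `AccountingDocument`), the sample rows, and the rule that a missing clearing date means an open item are assumptions for illustration. SQLite has no materialized views, so a plain view stands in for one.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE sf_account (
    Id TEXT PRIMARY KEY, Name TEXT, BillingCountry TEXT,
    SAP_ExternalVendorId__c TEXT
);
CREATE TABLE sap_journal_item (
    AccountingDocument TEXT, Supplier TEXT,
    AmountInCompanyCodeCurrency REAL, ClearingDate TEXT
);
INSERT INTO sf_account VALUES
    ('001A', 'Acme Supply', 'US', 'V100'),
    ('001B', 'Globex GmbH', 'DE', 'V200');
INSERT INTO sap_journal_item VALUES
    ('5100001', 'V100', 1200.0, '2024-01-15'),
    ('5100002', 'V100',  300.0, '2024-02-01'),
    ('5100003', 'V200',  950.0, NULL);

-- Gold-layer view: one row per vendor with payment totals.
CREATE VIEW gold_supplier_payments AS
SELECT a.SAP_ExternalVendorId__c AS vendor_id,
       a.Name                    AS vendor_name,
       a.BillingCountry          AS billing_country,
       COUNT(j.AccountingDocument)        AS line_items,
       SUM(j.AmountInCompanyCodeCurrency) AS total_amount,
       SUM(CASE WHEN j.ClearingDate IS NULL
                THEN j.AmountInCompanyCodeCurrency ELSE 0 END) AS open_amount
FROM sap_journal_item AS j
JOIN sf_account AS a
  ON a.SAP_ExternalVendorId__c = j.Supplier
GROUP BY a.SAP_ExternalVendorId__c, a.Name, a.BillingCountry;
""")

rows = conn.execute(
    "SELECT vendor_id, vendor_name, total_amount, open_amount "
    "FROM gold_supplier_payments ORDER BY total_amount DESC"
).fetchall()
for row in rows:
    print(row)
```

In a Lakeflow pipeline, the same SELECT would typically be declared with `CREATE OR REFRESH MATERIALIZED VIEW` and would read from the ingested Salesforce tables and the shared SAP data product rather than from sample tables.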

Step 4: Analyze with AI/BI Dashboard and Genie

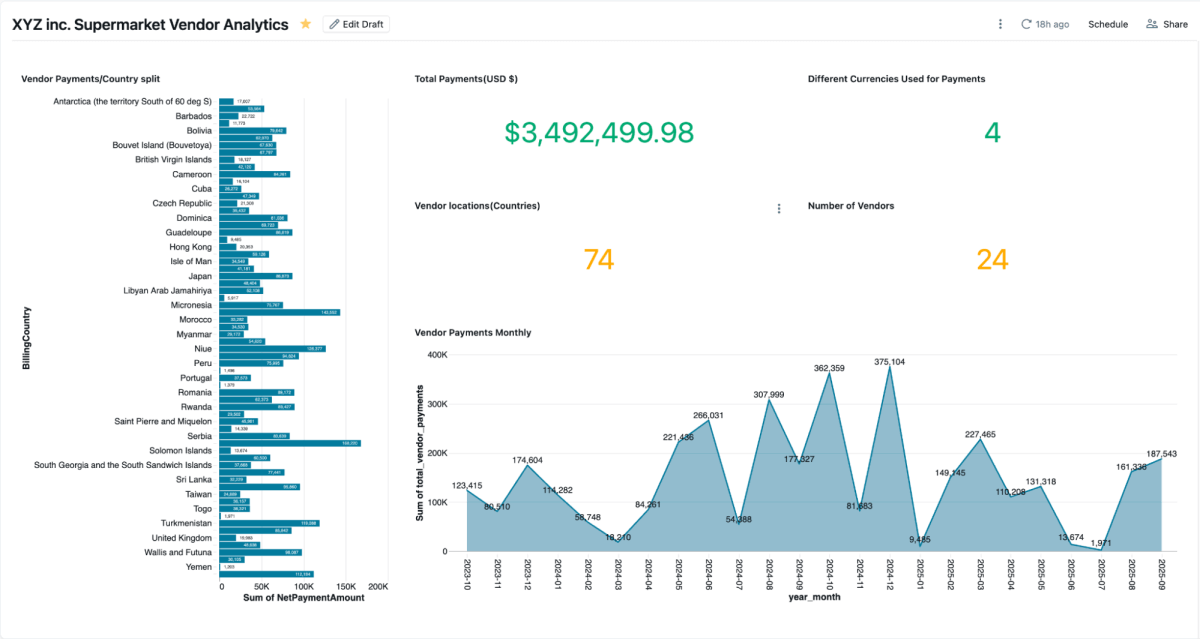

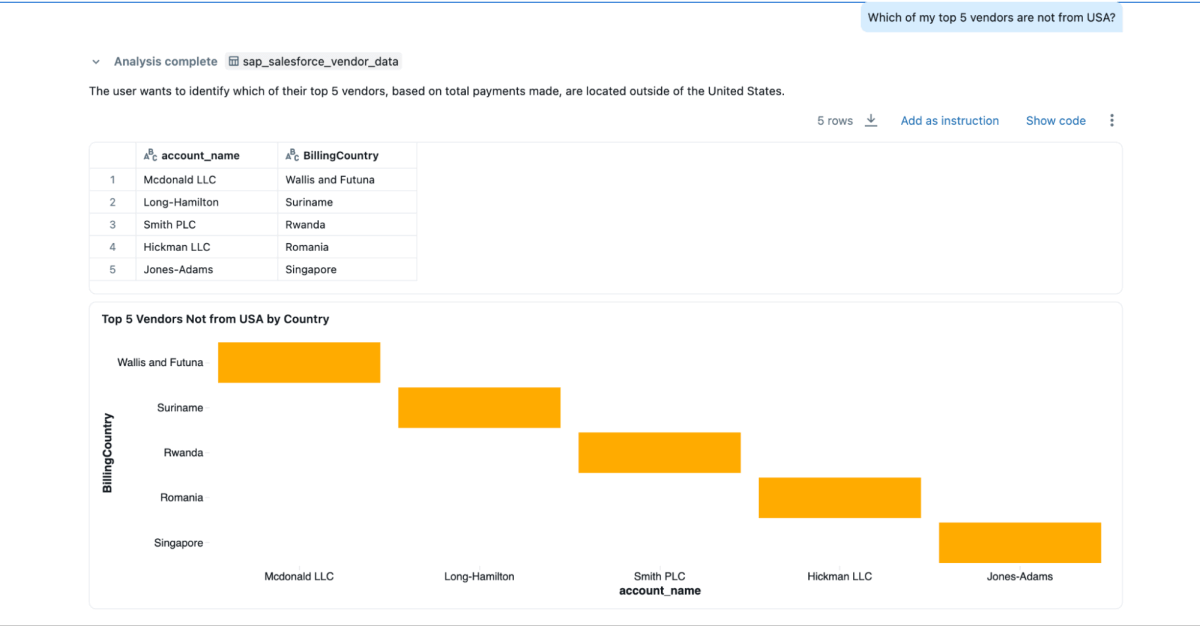

Once the materialized view is created, you can explore it directly in AI/BI Dashboards, which let teams visualize vendor payments, outstanding balances, and spend by region. Dashboards support dynamic filtering, search, and collaboration, all governed by Unity Catalog. Genie enables natural-language exploration of the same data.

You can create Genie spaces on this blended data and ask questions that could not be answered if the data remained locked in Salesforce and SAP:

- “Who are my top 3 vendors that I pay the most, and I also want their contact information?”

- “What are the billing addresses of the top 3 vendors?”

- “Which of my top 5 vendors are not from the United States?”
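Questions like these only work because payments (from SAP) and contacts (from Salesforce) sit in the same place. As an illustration of the logic behind the first question, and not of what Genie generates, here is a small Python sketch. The function name, field names, and sample records are hypothetical.

```python
from collections import defaultdict


def top_vendors_with_contacts(payments, contacts, n=3):
    """Return the n vendors with the highest total paid, with contact emails."""
    totals = defaultdict(float)
    for p in payments:
        totals[p["vendor_id"]] += p["amount"]

    emails = defaultdict(list)
    for c in contacts:
        emails[c["vendor_id"]].append(c["email"])

    ranked = sorted(totals.items(), key=lambda kv: kv[1], reverse=True)[:n]
    return [(vendor, total, emails[vendor]) for vendor, total in ranked]


# Hypothetical rows: payments come from SAP, contacts from Salesforce.
payments = [
    {"vendor_id": "V100", "amount": 1200.0},
    {"vendor_id": "V100", "amount": 300.0},
    {"vendor_id": "V200", "amount": 950.0},
    {"vendor_id": "V300", "amount": 50.0},
    {"vendor_id": "V400", "amount": 2000.0},
]
contacts = [
    {"vendor_id": "V100", "email": "ap@acme.example"},
    {"vendor_id": "V400", "email": "billing@initech.example"},
]

top3 = top_vendors_with_contacts(payments, contacts)
```

A vendor without a Salesforce contact (V200 here) still appears in the ranking with an empty contact list, which is itself a useful data-quality signal.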

Business Results

By combining SAP and Salesforce data on Databricks, organizations gain a complete and reliable view of supplier performance, payments, and relationships. This integrated approach provides both operational and strategic benefits:

- Faster Dispute Resolution: Teams can view payment details and supplier contact information together, making it easier to investigate issues and resolve them quickly.

- Early-Payment Savings: With payment terms, clearing dates, and net amounts in one place, finance teams can easily identify early payment discount opportunities.

- Cleaner Vendor Master: Joining on the SAP_ExternalVendorId__c field helps identify and resolve duplicate or mismatched supplier records, keeping vendor data accurate and consistent across systems.

- Audit-Ready Governance: Unity Catalog ensures all data is governed with consistent lineage, permissions, and auditing, so analytics, AI models, and reports rely on a single trusted source.
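The vendor-master cleanup mentioned above comes down to comparing the two systems on the shared key. The following Python sketch shows one way to surface duplicates and mismatches. The `reconcile_vendors` helper and the sample records are hypothetical, and a production check would run against the governed tables rather than in-memory lists.

```python
from collections import Counter

KEY = "SAP_ExternalVendorId__c"


def reconcile_vendors(sf_accounts, sap_vendor_ids):
    """Compare Salesforce accounts with SAP vendor IDs on the shared key."""
    sf_ids = [a[KEY] for a in sf_accounts if a.get(KEY)]
    counts = Counter(sf_ids)
    return {
        # The same SAP vendor ID is linked to more than one Salesforce account.
        "duplicates": sorted(v for v, n in counts.items() if n > 1),
        # Salesforce points at a vendor ID that SAP does not know.
        "missing_in_sap": sorted(set(sf_ids) - set(sap_vendor_ids)),
        # SAP vendor with no linked Salesforce account.
        "missing_in_salesforce": sorted(set(sap_vendor_ids) - set(sf_ids)),
        # Salesforce accounts with no SAP vendor ID at all.
        "unlinked_accounts": sorted(a["Name"] for a in sf_accounts if not a.get(KEY)),
    }


# Hypothetical sample data
sf_accounts = [
    {"Name": "Acme Supply", KEY: "V100"},
    {"Name": "ACME Supply Inc.", KEY: "V100"},
    {"Name": "Globex GmbH", KEY: "V200"},
    {"Name": "Initech", KEY: None},
    {"Name": "Umbrella", KEY: "V999"},
]
sap_vendor_ids = ["V100", "V200", "V300"]

report = reconcile_vendors(sf_accounts, sap_vendor_ids)
```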

Together, these results help organizations streamline vendor management and improve financial efficiency – while maintaining the governance and security essential to enterprise systems.

Conclusion

Integrating supplier data across SAP and Salesforce doesn’t have to mean rebuilding pipelines or managing duplicate systems.

With Databricks, teams can work from a single, governed foundation that seamlessly integrates ERP and CRM data in real time. The combination of zero-copy SAP BDC access, incremental Salesforce ingestion, integrated governance, and declarative pipelines replaces integration overhead with insight.

The result goes far beyond faster reporting. It provides a connected view of supplier performance that improves purchasing decisions, strengthens vendor relationships, and unlocks measurable savings. And because it is built on the Databricks Data Intelligence Platform, the same SAP data that feeds payments and invoicing can also drive dashboards, AI models, and conversational analytics, all from a single trusted source.

SAP data is often the backbone of enterprise operations. By integrating SAP Business Data Cloud, Delta Sharing, and Unity Catalog, organizations can extend this architecture beyond supplier analytics to working-capital optimization, inventory management, and demand forecasting.

This approach transforms SAP data from a system of record into a system of intelligence, where each dataset is live, governed, and ready for use across the business.