Author(s): Apurva Venkata

Originally published on Towards AI.

If you’ve worked on sales forecasting as a product manager, data analyst, or data scientist, this situation will sound familiar.

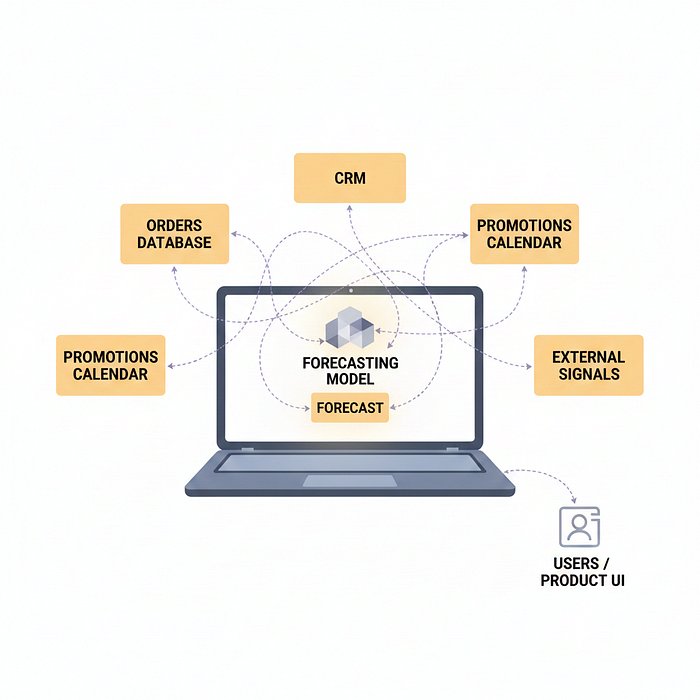

Most sales forecasts work well in notebooks but fail as soon as they are connected to the real system. Pipelines break when the schema changes. Adding new inputs requires custom ETL. No one can fully explain why the forecast changed. Over time, people stop trusting the numbers and return to manual decisions.

So the forecast exists. But it never actually ships.

In retrospect, the same issues arise again and again. The forecast lives in the notebook instead of the product. Changing the schema breaks the integration. Adding new context such as promotions or weather requires custom ETL work. When the forecast changes, no one can easily tell why.

As a result, forecasts exist, but they do not ship.

This article is for product managers, data scientists, and ML engineers who have built predictive models that work well in isolation but fail to become reliable product features. By the end of this article, you will understand how the Model Context Protocol enables production-ready forecasting by turning models into system components with stable inputs, clear ownership, and human feedback loops. This is a conceptual and architectural deep dive.

Why do forecasts fail to reach production?

Most forecasting failures are not caused by weak models.

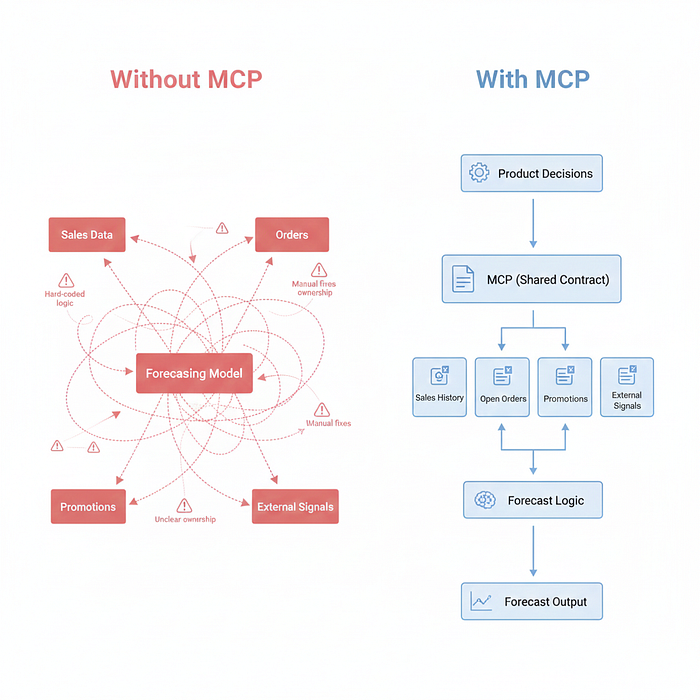

They fail because forecasting systems rely on brittle, point-to-point integrations that cannot evolve as the business changes. Each new data source adds more custom logic. Every schema change risks breaking the pipeline. Over time, forecasts become fragile, opaque, and difficult to trust.

When forecasting depends on fragile data plumbing, teams stop iterating. Forecasting ends up as an experiment rather than infrastructure.

How MCP helps in the forecasting workflow

Model Context Protocol (MCP) addresses exactly this gap.

Instead of ad-hoc, human-driven data assembly, MCP gives an agent a structured way to gather the same context automatically, consistently, and in one place.

As a result, teams spend far less time coordinating systems and far more time using forecasts that stay current and usable.

What is MCP?

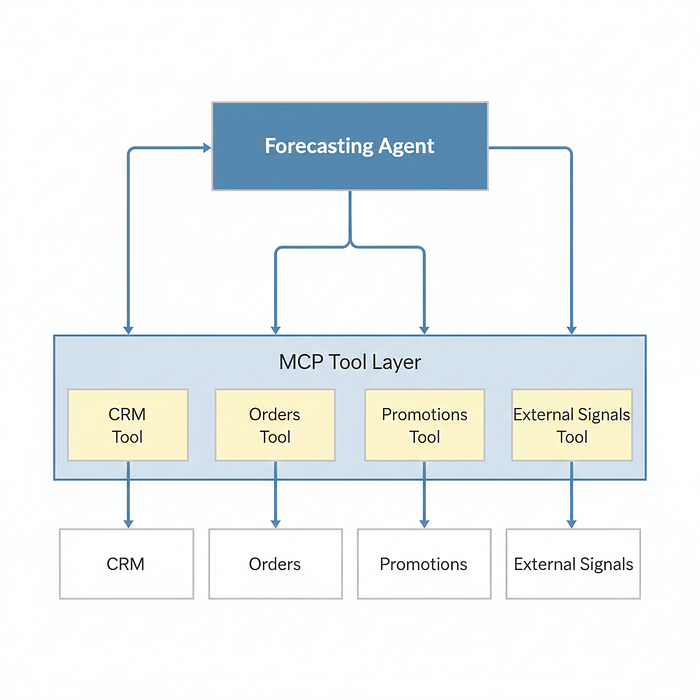

Model Context Protocol (MCP) is a standard way for AI agents to find and call internal tools and data sources with structured context.

Rather than building one-off integrations between the model and each database or API, MCP lets teams expose their systems as tools with explicit contracts. Agents can then gather the context they need without custom glue code for each workflow.

For forecasting, this matters because the hardest part is usually not the model but the constant work of collecting inputs.
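To make "find and call" concrete: MCP messages are JSON-RPC 2.0, and the specification names the discovery and invocation methods tools/list and tools/call. The sketch below only builds the request payloads an agent would send. The tool name get_open_orders and its product_line argument are hypothetical, and transport (stdio or HTTP) and session initialization are deliberately left out.

```python
import json
from itertools import count
from typing import Optional

# Monotonic request ids; JSON-RPC matches each response to its request by id.
_request_ids = count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# 1. Discovery: ask the server which tools it exposes.
list_request = jsonrpc_request("tools/list")

# 2. Invocation: call one tool by name with structured arguments.
#    "get_open_orders" and "product_line" are hypothetical.
call_request = jsonrpc_request(
    "tools/call",
    {"name": "get_open_orders", "arguments": {"product_line": "sparkling-water"}},
)

print(json.dumps(list_request))
print(json.dumps(call_request))
```

The point is that the agent never needs to know which database or API sits behind get_open_orders; it only needs the tool's name and argument contract.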

An End-to-End Forecasting Agent Architecture

Consider an intentionally narrow scope.

A short-term sales forecast for a product line, updated continuously and visible to a planning team.

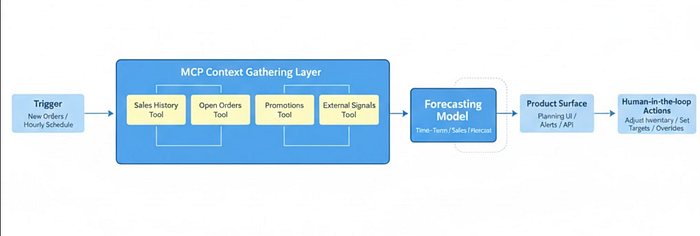

Triggers and Cadence (Product Ownership)

Every hour, or when new orders come in, a forecasting agent runs. This cadence reflects how fresh the forecast needs to be to support decisions.
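A minimal sketch of that trigger rule, assuming a one-hour interval and a count of newly arrived orders supplied by the caller (both hypothetical). A real deployment would typically hang this off a scheduler or an event queue rather than poll it by hand.

```python
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical cadence: refresh at least hourly.
RUN_INTERVAL = timedelta(hours=1)


def should_run(now: datetime, last_run: Optional[datetime], new_orders: int) -> bool:
    """Decide whether the forecasting agent should run right now."""
    if last_run is None:
        return True  # first run ever
    if new_orders > 0:
        return True  # event trigger: fresh orders arrived
    return now - last_run >= RUN_INTERVAL  # time trigger


last = datetime(2024, 5, 1, 9, 0)
print(should_run(datetime(2024, 5, 1, 9, 30), last, new_orders=0))  # False
print(should_run(datetime(2024, 5, 1, 9, 30), last, new_orders=3))  # True
print(should_run(datetime(2024, 5, 1, 10, 0), last, new_orders=0))  # True
```

Keeping the interval as a single named constant makes the freshness requirement an explicit product decision rather than something buried in pipeline code.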

Context assembly via MCP

When the agent runs, it discovers and calls MCP-exposed tools such as:

• get_sales_last_90_days

• get_open_orders

• get_upcoming_promotions

• get_weather_signals

In this context, a tool is a named, callable capability with an explicit schema and contract, not raw data access.
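As an illustration, here is what such contracts might look like, written in the shape an MCP server returns from tools/list: a name, a description, and a JSON Schema inputSchema. The tool names and fields are hypothetical, and check_arguments is a deliberately minimal stand-in for a full JSON Schema validator: it only checks required keys, unknown keys, and primitive types.

```python
# Hypothetical forecasting tools, in the shape returned by "tools/list".
TOOLS = [
    {
        "name": "get_sales_last_90_days",
        "description": "Daily unit sales for a product line over the last 90 days.",
        "inputSchema": {
            "type": "object",
            "properties": {"product_line": {"type": "string"}},
            "required": ["product_line"],
        },
    },
    {
        "name": "get_upcoming_promotions",
        "description": "Promotions scheduled within the next horizon_days days.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "product_line": {"type": "string"},
                "horizon_days": {"type": "integer"},
            },
            "required": ["product_line", "horizon_days"],
        },
    },
]
tool_by_name = {tool["name"]: tool for tool in TOOLS}

# Map JSON Schema primitive type names to Python types.
_PY_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def check_arguments(tool: dict, arguments: dict) -> list:
    """Return a list of contract violations (empty means the call is valid)."""
    schema = tool["inputSchema"]
    errors = [f"missing '{key}'" for key in schema.get("required", []) if key not in arguments]
    for key, value in arguments.items():
        spec = schema["properties"].get(key)
        if spec is None:
            errors.append(f"unexpected '{key}'")
            continue
        expected = spec["type"]
        # bool is a subclass of int in Python, so reject it explicitly for numbers.
        wrong_bool = isinstance(value, bool) and expected in ("integer", "number")
        if wrong_bool or not isinstance(value, _PY_TYPES[expected]):
            errors.append(f"'{key}' should be {expected}")
    return errors


promos = tool_by_name["get_upcoming_promotions"]
print(check_arguments(promos, {"product_line": "sparkling-water", "horizon_days": 14}))  # []
print(check_arguments(promos, {"product_line": "sparkling-water", "horizon_days": "14"}))
```

Because the contract lives next to the tool, a malformed call fails loudly at the boundary instead of silently feeding bad inputs to the model.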

The agent assembles the tool outputs into a structured context object, similar to a feature snapshot in offline ML.
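A sketch of the assembly step, assuming each tool has already been wrapped as a Python callable that takes a product line. The stub tools and the ContextSnapshot class are hypothetical. The fingerprint hashes only what the model will see, so two runs with identical inputs get the same identifier regardless of when they ran.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Callable, Dict


@dataclass
class ContextSnapshot:
    """Everything the model sees for one run, plus when it was assembled."""
    product_line: str
    signals: Dict[str, Any]
    assembled_at: str

    def fingerprint(self) -> str:
        # Hash only the model inputs, not the timestamp, so identical inputs
        # always map to the same identifier.
        payload = json.dumps(
            {"product_line": self.product_line, "signals": self.signals},
            sort_keys=True,
            default=str,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


def assemble_context(product_line: str, tools: Dict[str, Callable[[str], Any]]) -> ContextSnapshot:
    """Call every tool once and collect the results under the tool's name."""
    signals = {name: tool(product_line) for name, tool in sorted(tools.items())}
    return ContextSnapshot(product_line, signals, datetime.now(timezone.utc).isoformat())


# Hypothetical stub tools standing in for MCP tool calls.
stub_tools = {
    "get_sales_last_90_days": lambda pl: [120, 135, 128],
    "get_open_orders": lambda pl: {"units": 340},
    "get_upcoming_promotions": lambda pl: [{"start": "2024-06-03", "discount": 0.1}],
    "get_weather_signals": lambda pl: {"avg_temp_c": 24.5},
}

snapshot = assemble_context("sparkling-water", stub_tools)
print(snapshot.fingerprint(), sorted(snapshot.signals))
```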

Nothing magical yet. The agent is doing what a human analyst would do, but automatically and continuously.

Forecast generation

The agent passes the structured context object to a deployed prediction model. This could be a classical time-series model or a machine-learning-based approach.

The output is a forecast artifact. This can be a point forecast, a distribution with uncertainty ranges, or multiple scenario estimates. Importantly, the artifact is versioned together with the context that produced it, enabling traceability and reproducibility.
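One way to version a forecast with its context, sketched with a deliberately simple hypothetical baseline (a 7-day moving average with a normal-approximation interval) standing in for whatever model is actually deployed. The context fingerprint is a truncated SHA-256 hash of the sorted JSON context; the field names and the model_version string are assumptions.

```python
import hashlib
import json
import statistics
from dataclasses import dataclass


@dataclass
class ForecastArtifact:
    model_version: str
    context_fingerprint: str  # links the forecast to the exact inputs that produced it
    point: float
    lower: float
    upper: float


def fingerprint(context: dict) -> str:
    payload = json.dumps(context, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


def forecast_next_day(context: dict, window: int = 7, z: float = 1.96) -> ForecastArtifact:
    """Hypothetical baseline: moving average with a normal-approximation interval."""
    recent = context["daily_sales"][-window:]
    point = float(statistics.mean(recent))
    spread = z * statistics.stdev(recent)  # needs at least two observations
    return ForecastArtifact(
        model_version="moving-average-v1",
        context_fingerprint=fingerprint(context),
        point=point,
        lower=max(0.0, point - spread),  # sales cannot go negative
        upper=point + spread,
    )


context = {"product_line": "sparkling-water", "daily_sales": [100, 110, 90, 100, 105, 95, 100]}
artifact = forecast_next_day(context)
print(artifact)
```

Storing model_version and context_fingerprint on every artifact is what lets someone later answer "which inputs and which model produced this number?"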

MCP itself does not change the model. It changes how consistently and transparently the model receives its inputs.

Surfacing the forecast in the product

The agent writes forecast artifacts into tools people already use, such as a planning UI, an alerting system, or an internal API. In my case, it was Google Sheets.

Forecasts often fail because they live in dashboards that no one checks. Writing forecasts into existing workflows dramatically increases adoption and confidence.

Closing the loop on human actions

The agent highlights meaningful changes.

“The forecast for next week is down 8 percent.”

It suggests possible drivers.

“Promotion delayed. Consider adjusting inventory targets.”
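The change check can be as simple as a relative-difference threshold. In this sketch the 5% threshold and the driver strings are hypothetical; in practice the drivers would come from comparing the current context snapshot with the previous one.

```python
from typing import List, Optional

# Hypothetical threshold: only surface moves of 5% or more.
CHANGE_THRESHOLD = 0.05


def describe_change(previous: float, current: float, drivers: List[str]) -> Optional[str]:
    """Return a human-readable alert, or None if the change is not meaningful."""
    if previous == 0:
        return None  # relative change is undefined
    change = (current - previous) / previous
    if abs(change) < CHANGE_THRESHOLD:
        return None
    direction = "up" if change > 0 else "down"
    message = f"The forecast for next week is {direction} {abs(change):.0%}."
    if drivers:
        message += " Possible drivers: " + "; ".join(drivers) + "."
    return message


print(describe_change(1250, 1150, ["Promotion delayed"]))
# The forecast for next week is down 8%. Possible drivers: Promotion delayed.
```

Suppressing small moves matters as much as flagging large ones: an agent that alerts on every wiggle quickly gets ignored.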

When humans override or adjust forecasts, those actions are logged. Over time, these override logs become evaluation data. They point out where the model consistently disagrees with operators and where additional context or features are needed.
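A minimal sketch of an override log and one evaluation it enables: the mean relative adjustment operators make per segment. The Override fields and segment labels are hypothetical. A consistently positive value for a segment suggests the model is missing context that operators have, such as a local event.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Override:
    week: str
    segment: str
    forecast: float
    override: float
    reason: str


def override_bias(log: List[Override]) -> Dict[str, float]:
    """Mean relative adjustment per segment (positive = operators raise forecasts)."""
    adjustments: Dict[str, List[float]] = defaultdict(list)
    for entry in log:
        if entry.forecast:  # skip zero forecasts; relative change is undefined
            adjustments[entry.segment].append((entry.override - entry.forecast) / entry.forecast)
    return {segment: sum(vals) / len(vals) for segment, vals in adjustments.items()}


log = [
    Override("2024-W22", "north", 100, 110, "local festival"),
    Override("2024-W23", "north", 200, 220, "local festival"),
    Override("2024-W23", "south", 100, 95, "stock shortage"),
]
print(override_bias(log))
```

Keeping the free-text reason alongside the numbers is what turns the log into a to-do list of missing signals, not just an accuracy metric.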

At this point, forecasting becomes a living system rather than a static output.

A brief practical example

Consider a beverage company forecasting weekly demand for a new product line.

Before MCP

- Sales data lived in a warehouse

- Promotions lived in another system

- Weather data came from an API that someone had wired up manually

- Whenever the schema changed, the model broke

- Planners stopped relying on dashboards and fell back on their own judgment

After MCP

- Each signal became a named MCP tool with a clear contract

- The agent rebuilt the full context snapshot every day

- Forecasts were written directly into the planning sheet

- Overrides were logged and reviewed weekly

The result wasn’t just better accuracy; it was stability, transparency, and trust.

Defining ownership boundaries with MCP

Forecasting systems fail when ownership is unclear. Product, data, and ML teams make changes in isolation, which erodes trust and alignment. MCP helps prevent this by providing a shared contract layer that separates responsibilities while keeping the systems interoperable.

Product ownership covers:

• When the agent runs

• Which forecast horizon matters

• Where results appear

• How users act on them

Data and ML ownership covers:

• What signals feed the model

• Feature engineering

• Model selection and evaluation

MCP sits between these layers, enforcing structure without collapsing all responsibilities into a single team.
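One concrete way a contract layer protects both sides is a compatibility check that runs before a tool's output shape changes. This hypothetical sketch compares two JSON-Schema-style descriptions of a tool's output and applies a common rule of thumb: removing a field or changing its type breaks consumers, while adding a field does not.

```python
from typing import List


def breaking_changes(old: dict, new: dict) -> List[str]:
    """List changes in a tool's output schema that would break consumers.

    Hypothetical rule of thumb: removing a field or changing its type is
    breaking; adding a new field is not.
    """
    problems = []
    old_props = old.get("properties", {})
    new_props = new.get("properties", {})
    for name, spec in old_props.items():
        if name not in new_props:
            problems.append(f"removed field '{name}'")
        elif new_props[name].get("type") != spec.get("type"):
            problems.append(
                f"'{name}' changed type: {spec.get('type')} -> {new_props[name].get('type')}"
            )
    return problems


v1 = {"properties": {"date": {"type": "string"}, "units": {"type": "integer"}}}
v2 = {"properties": {**v1["properties"], "channel": {"type": "string"}}}  # adds a field
v3 = {"properties": {"date": {"type": "string"}, "units": {"type": "number"}}}  # retypes a field

print(breaking_changes(v1, v2))  # []
print(breaking_changes(v1, v3))  # ["'units' changed type: integer -> number"]
```

A data team could run a check like this in CI: additive changes ship freely, while breaking ones force a conversation with whoever owns the forecast.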

Takeaway: MCP turns forecasts into product infrastructure

When teams adopt MCP, forecasting is no longer a fragile exercise.

Inputs become stable and discoverable. Context can evolve without breaking pipelines. Forecasts appear where decisions are made. Human overrides become learning signals.

Most importantly, forecasting moves from notebook-based analysis to reliable product infrastructure.

If you already have predictive models but are struggling to operationalize them, treating MCP as a first-class architectural layer may be the missing step between insight and impact.

AI assistance disclosure

Parts of this article, including some diagrams, were created with the help of AI tools with user input, which were reviewed, edited, and verified by the author.

Published via Towards AI