Last updated on December 4, 2025 by Editorial Team

Author(s): hey rabbit

Originally published on Towards AI.

agent era

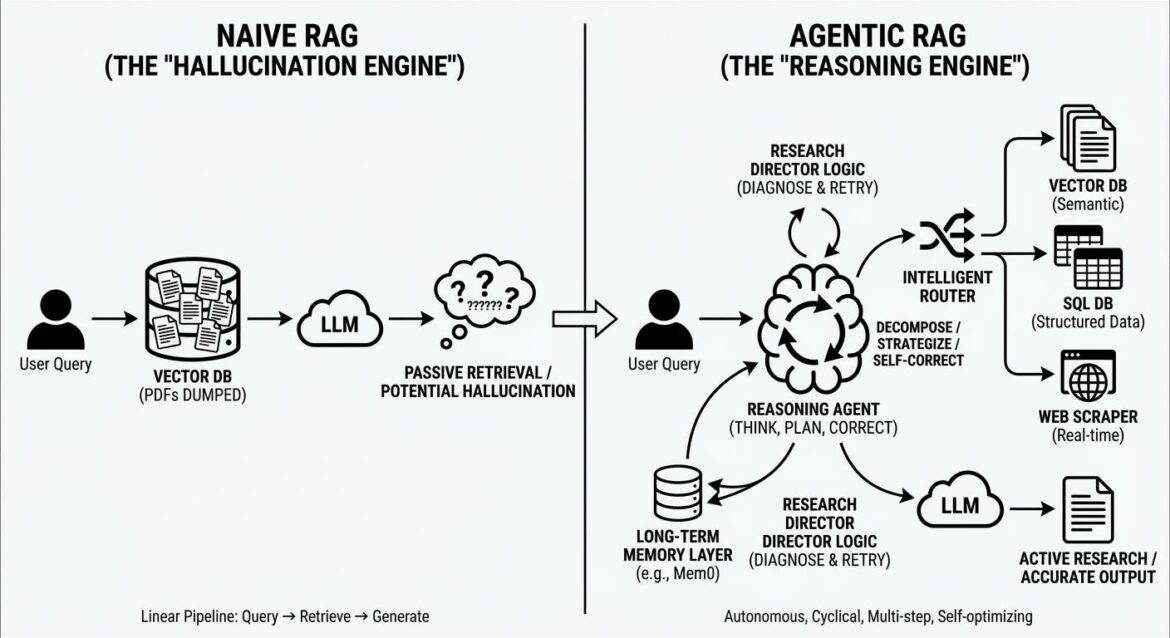

If your architecture still looks like “user query → vector DB → LLM”, you are not building an AI application; you are building a hallucination engine. The “naive” RAG era, where we threw PDFs into Pinecone and prayed for the best, is officially over.

The article discusses the development of Retrieval-Augmented Generation (RAG) pipelines, with an emphasis on the transition from older linear architectures to more sophisticated, agentic systems that are causal and self-correcting. It highlights the importance of new approaches, including long-term memory, hybrid retrieval architectures, and advanced production engineering strategies, for improving performance and reliability. The article concludes that RAG is emerging as an engineering discipline in its own right, one that draws on a range of advanced technologies and strategies to meet rising quality standards.
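To make the contrast between a linear pipeline and a self-correcting one concrete, here is a minimal sketch. It is not taken from the article: the toy corpus, the keyword-overlap retriever, and the helper names (`keyword_retrieve`, `rewrite_query`, `linear_rag`, `self_correcting_rag`) are all hypothetical stand-ins for a real index, a real LLM-based query rewriter, and a real relevance grader.

```python
from typing import List

# Hypothetical toy corpus; a real system would query a vector or hybrid index.
CORPUS = [
    "RAG pipelines retrieve documents before generating an answer.",
    "Hybrid retrieval combines keyword search with vector similarity.",
    "Long-term memory lets an agent reuse facts across sessions.",
]


def keyword_retrieve(query: str, corpus: List[str], k: int = 2) -> List[str]:
    """Toy retriever: rank documents by how many query words they share."""
    words = set(query.lower().split())
    scored = [
        (len(words & set(doc.lower().rstrip(".").split())), doc)
        for doc in corpus
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    # Keep only documents that overlap with the query at all.
    return [doc for score, doc in scored[:k] if score > 0]


def rewrite_query(query: str) -> str:
    """Toy rewriter (assumed stand-in for an LLM rewrite step)."""
    expansions = {"bm25": "keyword search", "embeddings": "vector similarity"}
    return " ".join(expansions.get(w, w) for w in query.lower().split())


def linear_rag(query: str) -> List[str]:
    """Linear pipeline: retrieve once and pass along whatever comes back."""
    return keyword_retrieve(query, CORPUS)


def self_correcting_rag(query: str, max_rounds: int = 3) -> List[str]:
    """Loop: retrieve, grade the result, rewrite the query, and retry."""
    for _ in range(max_rounds):
        docs = keyword_retrieve(query, CORPUS)
        if docs:  # toy grader: any overlapping document counts as acceptable
            return docs
        query = rewrite_query(query)
    return []


if __name__ == "__main__":
    print(linear_rag("bm25 embeddings"))
    print(self_correcting_rag("bm25 embeddings"))
```

In this sketch the linear path returns nothing for a query whose wording does not match the corpus, while the loop gets a second attempt after rewriting the query. A production system would replace the toy grader with an actual relevance or groundedness check.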




Note: The content represents the views of the contributing authors and not those of Towards AI.