Image by author

# Introduction

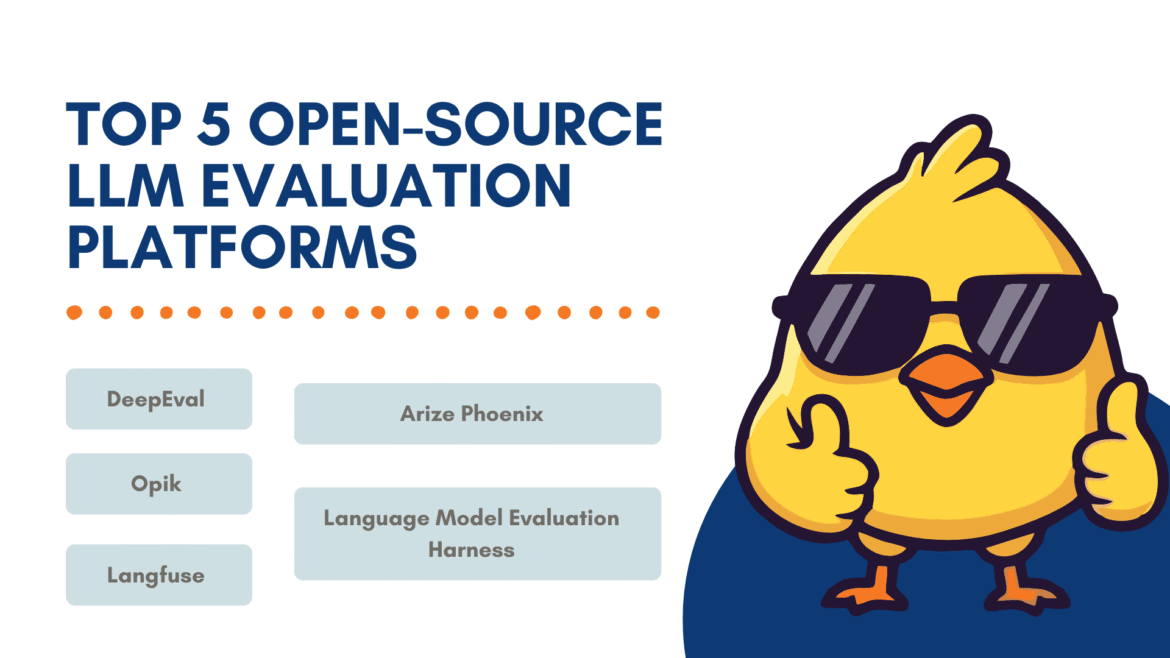

Whenever you have a new idea for a Large Language Model (LLM) application, you should evaluate it properly to understand its performance. Without evaluation, it is difficult to determine how well the application works. However, the abundance of benchmarks, metrics and tools – often each with its own script – can make the process extremely difficult to manage. Fortunately, open-source developers and companies continue to release new frameworks to address this challenge.

Although there are many options, this article shares my personal favorite LLM evaluation platforms. Additionally, a “gold repository” full of resources for LLM evaluation is linked at the end.

# 1. DeepEval

DeepEval is an open-source framework built specifically for testing LLM outputs. It's easy to use and works much like pytest: you write test cases for your prompts and expected outputs, and DeepEval calculates a variety of metrics. It includes over 30 built-in metrics (correctness, consistency, relevance, hallucination detection, etc.) that work on single-turn and multi-turn LLM tasks. You can also create custom metrics using LLMs or Natural Language Processing (NLP) models running locally.

It also lets you generate synthetic datasets. It works with any LLM application (chatbots, Retrieval-Augmented Generation (RAG) pipelines, agents, etc.) to help you benchmark and validate model behavior. Another useful feature is the ability to run safety scans of your LLM applications to find security vulnerabilities. This is effective for quickly detecting issues such as drift or model errors.
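To make the pytest-style workflow described in this section concrete, here is a minimal sketch using only the Python standard library. It is not DeepEval's API: `EvalCase`, `keyword_coverage`, and `assert_case` are hypothetical names I made up for illustration, and the keyword metric is a toy stand-in for a real built-in or LLM-based metric.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    """One prompt/response pair to check (hypothetical type, not DeepEval's)."""
    prompt: str
    actual_output: str
    expected_keywords: list[str]


def keyword_coverage(case: EvalCase) -> float:
    """Toy metric: fraction of expected keywords found in the output."""
    if not case.expected_keywords:
        return 1.0
    text = case.actual_output.lower()
    hits = sum(1 for kw in case.expected_keywords if kw.lower() in text)
    return hits / len(case.expected_keywords)


def assert_case(case: EvalCase, threshold: float = 0.5) -> None:
    """Fail like a normal pytest assertion when the score is too low."""
    score = keyword_coverage(case)
    assert score >= threshold, f"score {score:.2f} < threshold {threshold}"


def test_refund_answer():
    # In a real suite, actual_output would come from your LLM application.
    case = EvalCase(
        prompt="What is your refund policy?",
        actual_output="You can request a full refund within 30 days.",
        expected_keywords=["refund", "30 days"],
    )
    assert_case(case, threshold=1.0)
```

Saved in a file such as `test_llm.py`, this runs under plain `pytest`. The point is the shape of the workflow: a test case, a metric that returns a score, and a threshold that turns the score into a pass or fail.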

# 2. Arize (AX and Phoenix)

Arize offers both a freemium platform (Arize AX) and an open-source counterpart, Arize Phoenix, for LLM observability and evaluation. Phoenix is fully open-source and self-hosted. You can log every model call, run built-in or custom evaluators, version-control prompts, and cluster outputs to quickly spot failures. It's production-ready with async workers, scalable storage, and OpenTelemetry (OTel)-first integration. This makes it easy to plug evaluation results into your analytics pipelines. It is ideal for teams that want complete control or need to work in a regulated environment.
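As a rough illustration of what "log every model call, then run an evaluator over the logs" means, here is a small standard-library sketch. It does not use Phoenix or OpenTelemetry: `TRACES`, `traced`, `fake_llm`, and `not_empty` are hypothetical names, and the in-memory list stands in for a real trace store.

```python
import functools
import time

TRACES: list[dict] = []  # in-memory stand-in for a trace store


def traced(fn):
    """Record the inputs, output, and latency of each call to fn."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        output = fn(*args, **kwargs)
        TRACES.append({
            "name": fn.__name__,
            "inputs": {"args": args, "kwargs": kwargs},
            "output": output,
            "latency_s": time.perf_counter() - start,
        })
        return output
    return wrapper


@traced
def fake_llm(prompt: str) -> str:
    # Placeholder for a real model call.
    return prompt.upper()


def not_empty(trace: dict) -> bool:
    """Custom evaluator: passes only when the output has visible text."""
    return bool(trace["output"].strip())


fake_llm("hello")
fake_llm("  ")
# Run the evaluator over every logged call and keep the failing traces.
failures = [t for t in TRACES if not not_empty(t)]
```

A real observability tool adds persistence, nested spans, and a UI on top, but the core loop is the same: capture each call as structured data, then score the captured data after the fact.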

Arize AX offers a community edition of its product with many of the same features, with paid upgrades available for teams running LLMs at scale. It uses the same trace system as Phoenix but adds enterprise features like SOC 2 compliance, role-based access, bring your own key (BYOK) encryption, and air-gapped deployment. AX also includes Alyx, an AI assistant that analyzes traces, clusters failures, and drafts follow-up evaluations so your team can act faster; it is part of the free product. You get dashboards, monitors, and alerts all in one place. Both tools make it easy to see where agents fail, let you create datasets and experiments, and help you make improvements without juggling multiple tools.

# 3. Opik

Opik (by Comet) is an open-source LLM evaluation platform built for end-to-end testing of AI applications. It lets you log detailed traces of each LLM call, annotate them, and view the results in a dashboard. You can run automated LLM-judged metrics (for factuality, toxicity, etc.), experiment with prompts, and put guardrails in place for safety (like redacting personally identifiable information (PII) or blocking unwanted topics). It also integrates with continuous integration and continuous delivery (CI/CD) pipelines so you can add tests that catch issues every time you deploy. It is a comprehensive toolkit for continuously improving and securing your LLM pipelines.
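The guardrail idea mentioned above (redacting PII or blocking unwanted topics) can be sketched in a few lines of standard-library Python. This is only an illustration of the concept, not the platform's guardrail API: the regular expressions are deliberately simple, and `BLOCKED_TOPICS`, `REFUSAL`, `redact_pii`, and `guard` are hypothetical names.

```python
import re

# Deliberately simple patterns; real PII detection needs far more care.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

BLOCKED_TOPICS = {"medical advice"}  # hypothetical policy list
REFUSAL = "Sorry, I can't help with that topic."


def redact_pii(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def guard(text: str) -> str:
    """Block unwanted topics first, then redact PII from what remains."""
    lowered = text.lower()
    if any(topic in lowered for topic in BLOCKED_TOPICS):
        return REFUSAL
    return redact_pii(text)
```

You would typically apply a function like `guard` to model inputs, outputs, or both, and add a CI test for it so a regression in the guardrail fails the build.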

# 4. Langfuse

Langfuse is another open-source LLM engineering platform that focuses on observability and evaluation. It automatically captures everything that happens during an LLM call (input, output, API calls, etc.) to provide full traceability. It also offers features like centralized prompt versioning and a prompt playground where you can quickly iterate on inputs and parameters.

On the evaluation side, Langfuse supports flexible workflows: you can use LLM-as-a-judge metrics, collect human annotations, run benchmarks with custom test sets, and track results across different app versions. It even has dashboards for production monitoring and lets you run A/B experiments. This works well for teams that want both a good developer user experience (UX), with a playground and prompt editor, and full visibility into deployed LLM applications.
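Tracking results across app versions comes down to grouping scores by version and comparing the aggregates. Here is a small sketch of that step in plain Python. It does not call Langfuse: the record layout, the version labels, and `summarize_by_version` are assumptions for illustration, and the scores could come from an LLM judge or from human annotation.

```python
from collections import defaultdict
from statistics import mean


def summarize_by_version(records: list[dict]) -> dict[str, float]:
    """Average score per app version.

    Each record is assumed to look like {"version": str, "score": float}.
    """
    grouped = defaultdict(list)
    for record in records:
        grouped[record["version"]].append(record["score"])
    return {version: mean(scores) for version, scores in grouped.items()}


# Hypothetical scores for two prompt versions on the same test set.
records = [
    {"version": "prompt-v1", "score": 0.5},
    {"version": "prompt-v1", "score": 1.0},
    {"version": "prompt-v2", "score": 1.0},
    {"version": "prompt-v2", "score": 1.0},
]

summary = summarize_by_version(records)
best = max(summary, key=summary.get)  # version with the highest average
```

With only a handful of examples per version the averages are noisy, so for a real A/B experiment you would want a larger test set before declaring a winner.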

# 5. Language Model Evaluation Harness

Language Model Evaluation Harness (by EleutherAI) is a classic open-source benchmark framework. It bundles dozens of standard LLM benchmarks (over 60 tasks like BIG-Bench, Massive Multitask Language Understanding (MMLU), HellaSwag, etc.) into one library. It supports models loaded through Hugging Face Transformers, GPT-NeoX, Megatron-DeepSpeed, and the vLLM inference engine, and even models served through APIs like OpenAI or TextSynth.

It is the backend for the Hugging Face Open LLM Leaderboard, so it is widely used in the research community and cited by hundreds of papers. It is not specifically intended for “app-centric” evaluation (such as tracing an agent); rather, it provides reproducible metrics for many tasks so you can measure how well a model performs against published baselines.
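To show what a reproducible benchmark metric looks like at its simplest, here is a sketch of multiple-choice accuracy, where the predicted answer is the option the model scores highest. As far as I know the harness scores many multiple-choice tasks in a similar way using log-likelihoods, but this is not its code: `ScoreFn`, `predict`, `accuracy`, and `toy_score` are hypothetical, and `toy_score` is a stand-in that simply prefers shorter answers rather than a real language model.

```python
from typing import Callable

# (context, continuation) -> log-likelihood; a real harness queries a model.
ScoreFn = Callable[[str, str], float]


def predict(question: str, choices: list[str], score: ScoreFn) -> int:
    """Return the index of the choice with the highest score."""
    scores = [score(question, choice) for choice in choices]
    return scores.index(max(scores))


def accuracy(items: list[dict], score: ScoreFn) -> float:
    """Fraction of items where the top-scored choice is the labeled answer."""
    correct = sum(
        predict(item["question"], item["choices"], score) == item["answer"]
        for item in items
    )
    return correct / len(items)


def toy_score(context: str, continuation: str) -> float:
    # Stand-in "model": prefers shorter continuations. Not a real LM.
    return -float(len(continuation))


# Tiny hand-made dataset; "answer" is the index of the correct choice.
items = [
    {"question": "2 + 2 =", "choices": ["4", "five"], "answer": 0},
    {"question": "Capital of France:", "choices": ["Marseille", "Paris"], "answer": 1},
    {"question": "Opposite of hot:", "choices": ["cold", "lukewarm"], "answer": 0},
    {"question": "Largest planet:", "choices": ["Mars", "Jupiter"], "answer": 1},
]

result = accuracy(items, toy_score)
```

Because the dataset, the scoring rule, and the metric are all fixed, anyone who plugs in the same model gets the same number, which is what makes results comparable against published baselines.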

# Wrapping Up (and a Gold Repository)

Every tool here has its own merits. DeepEval is good if you want to run tests locally and check for safety issues. Arize gives you deep visibility, with Phoenix for self-hosted setups and AX for enterprise scale. Opik is great for end-to-end testing and improving agent workflows. Langfuse makes it simple to trace calls and manage prompts. Finally, the LM Evaluation Harness is perfect for benchmarking across many standard academic tasks.

To make things even easier, the LLM evaluation repository by Andrei Lopatenko collects the main LLM evaluation tools, datasets, benchmarks, and resources in one place. If you want a single hub for testing, evaluating, and improving your models, this is it.

Kanwal Mehreen is a machine learning engineer and a technical writer with a deep passion for data science and the intersection of AI with medicine. She co-authored the eBook “Maximizing Productivity with ChatGPT”. As a Google Generation Scholar 2022 for APAC, she is an advocate for diversity and academic excellence. She has also been recognized as a Teradata Diversity in Tech Scholar, a Mitacs Globalink Research Scholar, and a Harvard WeCode Scholar. Kanwal is a strong advocate for change, having founded FEMCodes to empower women in STEM fields.