In machine learning and data science, evaluating a model is as important as building it. Accuracy is often the first metric people use, but it can be misleading when the data is imbalanced. For this reason, metrics such as precision, recall, and F1 score are widely used. This article focuses on F1 scores. It explains what the F1 Score is, why it matters, how to calculate it and when it should be used. The article also includes a practical Python example using scikit-learn and discusses common mistakes to avoid during model evaluation.

What is F1 score in machine learning?

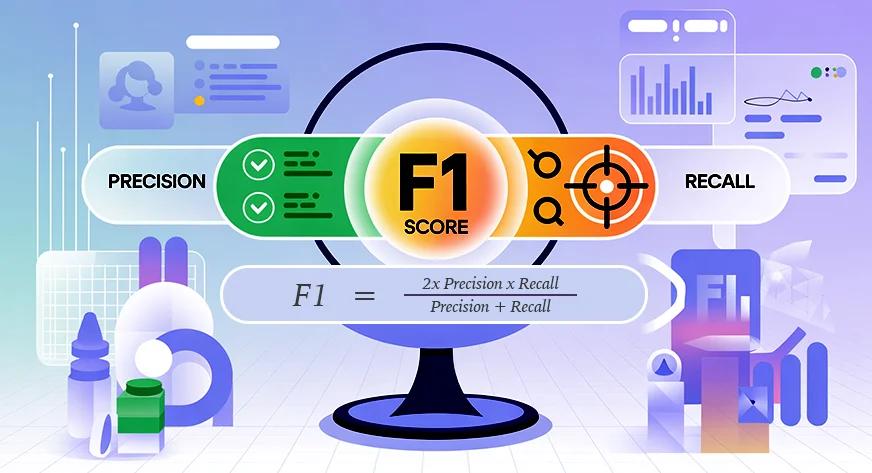

The F1 score, also known as the balanced F-score or F-measure, is a metric that evaluates a model by combining precision and recall into a single value. It is commonly used in classification problems, especially when the data are imbalanced or when both false positives and false negatives matter.

Precision measures how many predicted positive cases are actually positive. In simple terms, it answers the question: out of all predicted positive cases, how many are correct? Recall, also called sensitivity, measures how many actual positive cases the model correctly identifies. It answers the question: out of all actual positive cases, how many did the model detect?

There is often a trade-off between precision and recall. Improving one can reduce the other. The F1 score addresses this by using the harmonic mean, which gives more weight to lower values. As a result, the F1 score is high only when both precision and recall are high.

$$
F_1 = 2 \times \frac{\text{precision} \times \text{recall}}{\text{precision} + \text{recall}}
$$

The F1 score ranges from 0 to 1, or 0 to 100%. A score of 1 indicates perfect precision and recall. A score of 0 indicates that precision, recall, or both are zero. Because a high score requires both to be high, the F1 score is a reliable metric for evaluating classification models.


When should you use F1 Score?

The F1 score is used when accuracy alone cannot give a clear picture of model performance. This mostly happens with imbalanced data. In such situations, a model can achieve high accuracy simply by predicting the majority class, yet fail to identify the minority class altogether. The F1 score helps expose this problem because it takes both precision and recall into account.

The F1 score is also useful when both false positives and false negatives matter. It gives a single value that reflects how well a model balances these two kinds of error. To get a high F1 score, a model must perform well on both precision and recall, which makes F1 more reliable than accuracy in many real-world tasks.
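A small sketch makes the problem concrete. The data are made up for illustration: 95 negatives, 5 positives, and a hypothetical model that always predicts the majority class. Treating an undefined precision (0/0) as 0 is a convention assumed here.

```python
# Hypothetical imbalanced dataset: 95 negatives (0) and 5 positives (1).
y_true = [0] * 95 + [1] * 5
# A "model" that always predicts the majority (negative) class.
y_pred = [0] * 100

pairs = list(zip(y_true, y_pred))
tp = sum(1 for t, p in pairs if t == 1 and p == 1)
fp = sum(1 for t, p in pairs if t == 0 and p == 1)
fn = sum(1 for t, p in pairs if t == 1 and p == 0)
tn = sum(1 for t, p in pairs if t == 0 and p == 0)

accuracy = (tp + tn) / len(y_true)

# Assumption: an undefined ratio (0/0) is treated as 0.
precision = tp / (tp + fp) if tp + fp else 0.0
recall = tp / (tp + fn) if tp + fn else 0.0
f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0

print("Accuracy:", accuracy)  # high, because the majority class dominates
print("F1 score:", f1)        # zero, because no positive case is ever found
```

Accuracy looks strong here only because negatives dominate; the F1 score for the positive class reveals that the model never detects a single positive case.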

Real-world use cases of F1 scores

F1 scores are commonly used in the following situations:

- Imbalanced classification problems such as spam filtering, fraud detection, and medical diagnosis.

- Information retrieval and search systems, where relevant results must be found with as few false matches as possible.

- Model or threshold tuning, when both precision and recall are important.

When one type of error is significantly more costly than the other, the F1 score should not be used on its own. Recall may matter more when missing a positive case is costly. When false alarms are worse, precision may be the better focus. The F1 score is most appropriate when precision and recall are equally important.
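For the threshold-tuning use case listed above, one possible approach is to compute the F1 score at several decision thresholds and compare them. The sketch below is only an illustration: the labels, the predicted scores, the candidate thresholds, and the helper name `f1_at_threshold` are all assumptions made up for this example.

```python
def f1_at_threshold(y_true, scores, threshold):
    """F1 for the positive class when scores >= threshold count as positive."""
    y_pred = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    # Assumption: undefined ratios (0/0) are treated as 0.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Made-up labels and predicted probabilities, for illustration only.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.4, 0.7, 0.3, 0.2, 0.1]

results = {t: f1_at_threshold(y_true, scores, t) for t in (0.25, 0.5, 0.75)}
best_threshold = max(results, key=results.get)

for t, f1 in results.items():
    print(f"threshold={t:.2f}  F1={f1:.2f}")
print("Best threshold:", best_threshold)
```

In practice the threshold would be chosen on held-out validation data rather than on the data used to report the final score.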

How to Calculate F1 Score Step by Step

Once precision and recall are known, the F1 score can be calculated. In a binary classification problem, both metrics are derived from the confusion matrix.

Precision measures how many predicted positive cases are actually positive. It is defined as:

$$
\text{Precision} = \frac{\text{TP}}{\text{TP} + \text{FP}}
$$

Recall measures how many of the actual positive cases the model retrieves. It is defined as:

$$
\text{Recall} = \frac{\text{TP}}{\text{TP} + \text{FN}}
$$

Here, TP represents true positives, FP represents false positives, and FN represents false negatives.
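The two definitions translate directly into code. This is a minimal sketch; the counts are illustrative values assumed for the example, not results from a real model.

```python
# Illustrative confusion-matrix counts (assumed for this sketch).
tp, fp, fn = 3, 1, 2

precision = tp / (tp + fp)  # share of predicted positives that are correct
recall = tp / (tp + fn)     # share of actual positives that were found

print("Precision:", precision)
print("Recall:", recall)
```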

F1 Score Formula Using Precision and Recall

Knowing precision (P) and recall (R), the F1 score can be determined as the harmonic mean of the two:

$$
F_1 = \frac{2 \times P \times R}{P + R}
$$

The harmonic mean gives more weight to smaller values. As a result, the F1 score is pulled toward the lower of precision and recall. For example, if precision is 0.90 and recall is 0.10, the F1 score is 0.18, far below their arithmetic mean of 0.50. If precision and recall are both 0.50, then the F1 score is also 0.50.

This ensures that high F1 scores are achieved only when both precision and recall are high.
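The effect is easy to check numerically. The sketch below compares the harmonic mean with the ordinary arithmetic mean for the two cases described above; the helper name `f1_from_pr` is an assumption for this example, as is the convention of returning 0 when both inputs are zero.

```python
def f1_from_pr(precision, recall):
    """Harmonic mean of precision and recall; returns 0.0 if both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

for p, r in [(0.90, 0.10), (0.50, 0.50)]:
    arithmetic = (p + r) / 2
    print(f"P={p:.2f} R={r:.2f} arithmetic mean={arithmetic:.2f} F1={f1_from_pr(p, r):.2f}")
```

Both pairs have the same arithmetic mean, but only the balanced pair keeps an F1 score equal to it.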

F1 Score Formula Using Confusion Matrix

One can write the same formula using the terms of the confusion matrix:

$$
F_1 = \frac{2\,\text{TP}}{2\,\text{TP} + \text{FP} + \text{FN}}
$$
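The equivalence of the two forms can be checked by substituting the definitions of precision and recall into the harmonic-mean formula. The following short derivation is a sketch of that step, writing $P$ for precision and $R$ for recall:

$$
\begin{aligned}
P \times R &= \frac{\text{TP}^2}{(\text{TP} + \text{FP})(\text{TP} + \text{FN})} \\
P + R &= \frac{\text{TP}\,(2\,\text{TP} + \text{FP} + \text{FN})}{(\text{TP} + \text{FP})(\text{TP} + \text{FN})} \\
F_1 = \frac{2 \times P \times R}{P + R} &= \frac{2\,\text{TP}^2}{\text{TP}\,(2\,\text{TP} + \text{FP} + \text{FN})} = \frac{2\,\text{TP}}{2\,\text{TP} + \text{FP} + \text{FN}}
\end{aligned}
$$

True negatives do not appear anywhere in the result, which is why the F1 score is not inflated by a large majority class.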

For example, for a model with a precision of 0.75 and a recall of 0.60, the F1 score is:

$$
F_1 = \frac{2 \times 0.75 \times 0.60}{0.75 + 0.60} = \frac{0.90}{1.35} \approx 0.67
$$

In multi-class classification problems, the F1 score is calculated for each class separately and then averaged. Macro averaging treats all classes equally, while weighted averaging weights each class by its frequency. In highly imbalanced datasets, weighted F1 tracks overall performance more closely because frequent classes count for more, whereas macro F1 shows how the model does on rare classes. Always check the averaging method when comparing model performance.
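The difference between the two averages can be sketched in plain Python. The three-class labels below are made up for illustration, the helper name `per_class_f1` is an assumption, and a class with no true positives, false positives, or false negatives is given an F1 of 0 by convention.

```python
from collections import Counter

def per_class_f1(y_true, y_pred, label):
    """One-vs-rest F1 for a single class, using 2*TP / (2*TP + FP + FN)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != label and p == label)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == label and p != label)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

# Made-up labels: class 0 dominates, class 2 is rare and never predicted.
y_true = [0, 0, 0, 0, 0, 0, 1, 1, 1, 2]
y_pred = [0, 0, 0, 0, 0, 0, 1, 1, 0, 0]

labels = sorted(set(y_true))
support = Counter(y_true)  # number of true samples per class
scores = {c: per_class_f1(y_true, y_pred, c) for c in labels}

# Macro: plain average over classes. Weighted: average weighted by support.
macro_f1 = sum(scores.values()) / len(labels)
weighted_f1 = sum(scores[c] * support[c] for c in labels) / len(y_true)

print("Per-class F1:", {c: round(s, 3) for c, s in scores.items()})
print("Macro F1:", round(macro_f1, 3))
print("Weighted F1:", round(weighted_f1, 3))
```

The rare class that is never predicted drags the macro average down much more than the weighted one. In scikit-learn, `f1_score` accepts `average="macro"` and `average="weighted"` to compute these averages directly.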

Calculating F1 Score in Python using Scikit-Learn

The following binary classification example calculates precision, recall, and the F1 score with scikit-learn, showing how these metrics work in practice.

To start, import the required functions.

```python
from sklearn.metrics import precision_score, recall_score, f1_score, classification_report
```

Now, define the actual labels and model predictions for ten samples.

```python
# True labels (1 = positive, 0 = negative)
y_true = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]

# Predicted labels
y_pred = [1, 0, 1, 1, 0, 0, 0, 1, 0, 0]
```

Next, calculate the precision, recall, and F1 score for the positive class.

```python
precision = precision_score(y_true, y_pred, pos_label=1)
recall = recall_score(y_true, y_pred, pos_label=1)
f1 = f1_score(y_true, y_pred, pos_label=1)

print("Precision:", precision)
print("Recall:", recall)
print("F1 score:", f1)
```

You can also generate a full classification report.

```python
print("\nClassification Report:\n", classification_report(y_true, y_pred))
```

Running this code produces output like the following:

```
Precision: 0.75
Recall: 0.6
F1 score: 0.6666666666666666

Classification Report:
               precision    recall  f1-score   support

           0       0.67      0.80      0.73         5
           1       0.75      0.60      0.67         5

    accuracy                           0.70        10
   macro avg       0.71      0.70      0.70        10
weighted avg       0.71      0.70      0.70        10
```

Understanding Classification Report Output in Scikit-Learn

Let’s interpret these results.

For the positive class (label 1), precision is 0.75. This means that three-quarters of the samples predicted as positive were actually positive. Recall is 0.60, which indicates that the model correctly identified 60% of all actual positive samples. Combining these two values through the harmonic mean gives an F1 score of approximately 0.67.

For the negative class (label 0), recall is higher, at 0.80. This shows that the model is more effective at identifying negatives than positives. Overall accuracy is 70%, but that figure does not show how well the model performs on each class.

The classification report makes this easy to see. It presents precision, recall, and F1 for each class, along with macro and weighted averages. In this balanced case, with five samples per class, the macro and weighted F1 scores are the same. In more imbalanced datasets, the weighted F1 score puts more emphasis on the dominant class.

This practical example shows how to calculate and interpret F1 scores. In real projects, you would compute the F1 score on validation or test data to see how well your model balances false positives and false negatives.

Best Practices and Common Pitfalls in Using F1 Score

Choose F1 depending on your purpose:

- Use F1 when recall and precision are equally important.

- Avoid relying on F1 when one type of error is much more costly than the other.

- Use weighted F-score where necessary.

Don’t rely only on F1:

- F1 is a composite metric.

- It hides the trade-off between precision and recall.

- Always review precision and recall separately.
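The points above can be illustrated with two hypothetical models whose precision and recall are mirrored. The numbers are invented for this sketch, and `f1_from_pr` is a helper name assumed for the example.

```python
def f1_from_pr(precision, recall):
    """Harmonic mean of precision and recall; returns 0.0 if both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Two hypothetical models with very different error profiles.
model_a = {"precision": 0.90, "recall": 0.50}  # few false alarms, many misses
model_b = {"precision": 0.50, "recall": 0.90}  # few misses, many false alarms

f1_a = f1_from_pr(model_a["precision"], model_a["recall"])
f1_b = f1_from_pr(model_b["precision"], model_b["recall"])

print(f"Model A F1: {f1_a:.3f}")
print(f"Model B F1: {f1_b:.3f}")
```

Both models get the same F1 score even though they make different kinds of mistakes, so the single number cannot tell you which one suits your problem.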

Handle class imbalance carefully:

- F1 is more informative than accuracy on imbalanced data.

- Averaging methods affect the final score.

- Macro F1 treats all classes the same.

- Weighted F1 favors frequent classes.

- Choose the method that reflects your goals.

Watch for zero or missing predictions:

- F1 can be zero when a class is never predicted.

- This may indicate a model or data problem.

- Always inspect the confusion matrix.

Use F1 wisely for model selection:

- F1 works well for comparing models.

- Small differences may not be meaningful.

- Combine F1 with domain knowledge and other metrics.

Conclusion

The F1 score is a robust metric for evaluating classification models. It combines precision and recall into a single value and is especially useful when both types of errors matter. It is particularly effective for problems with imbalanced data.

Unlike accuracy, the F1 score highlights weaknesses that accuracy may hide. This article explained what the F1 score is, how it is calculated, and how to interpret it using a Python example.

Like any evaluation metric, the F1 score should be used with care. It works best when precision and recall are equally important. Always choose evaluation metrics based on your project goals. When used in the right context, the F1 score helps you build more balanced and reliable models.

Frequently Asked Questions

A. An F1 score of 0.5 indicates moderate performance. It means that precision, recall, or both are limited, and it is often acceptable only as a baseline, especially in imbalanced datasets or early-stage models.

A. A good F1 score depends on the problem. As a rough guide, scores above 0.7 are often considered good, above 0.8 strong, and above 0.9 excellent, especially in classification tasks with class imbalance.

A. No, a low F1 score indicates poor performance. Since F1 combines precision and recall, a higher value means the model makes fewer false positives and false negatives relative to its true positives.

A. The F1 score is used when class imbalance exists or when both false positives and false negatives matter. It provides a single metric that balances precision and recall, unlike accuracy, which can be misleading.

A. 80% accuracy can be good or bad depending on the context. It may be acceptable in balanced datasets, but in imbalanced problems, high accuracy may hide poor performance on minority classes.

A. Use accuracy for balanced datasets where all errors matter equally. Use the F1 score when dealing with class imbalance or when precision and recall are more important than overall accuracy.
