Image by author

# Introduction

As AI-generated media becomes more powerful and more common, it is getting harder to distinguish AI-generated content from human-generated content. In response to risks such as misinformation, deepfakes, and misuse of synthetic media, Google DeepMind developed SynthID, a collection of tools that embed imperceptible digital watermarks into AI-generated content and later enable robust identification of that content.

By incorporating watermarking directly into the content creation process, SynthID helps verify provenance and supports transparency and trust in AI systems. SynthID spans text, images, audio and video, with customized watermarking for each. In this article, I’ll explain what SynthID is, how it works, and how you can use it to place a watermark on text.

# What is SynthID?

At its core, SynthID is a digital watermarking and detection framework for AI-generated content. It injects imperceptible signals into AI-generated text, images, audio, and video, and these signals are designed to survive compression, resizing, cropping, and other common transformations. Unlike metadata-based approaches such as the Coalition for Content Provenance and Authenticity (C2PA) standard, SynthID operates at the model or pixel level. Instead of attaching metadata after generation, SynthID embeds a hidden signature within the content itself, encoded in a way that is invisible or inaudible to humans but detectable by algorithmic scanners.

The design goals of SynthID are to be invisible to users, resilient to distortion, and reliably detectable by software.

SynthID is integrated into Google’s AI models, including Gemini (text), Imagen (images), Lyria (audio), and Veo (video). It also powers tools such as the SynthID Detector portal, which checks uploaded content for watermarks.

// Why is SynthID important?

Generative AI can create highly realistic text, images, audio and video that are difficult to distinguish from human-generated content. This brings risks such as:

- Deepfake videos and manipulated media

- Misinformation and misleading content

- Unauthorized re-use of AI content in contexts where transparency is required

SynthID provides origin markers that help platforms, researchers, and users trace the origin of content and evaluate whether it has been produced synthetically.

// Technical principles of SynthID watermarking

SynthID’s watermarking approach is rooted in steganography – the art of hiding signals within other data so that the presence of the hidden information is invisible but can be recovered with a key or detector.

The main design goals are:

- Watermarks should not diminish the user-facing quality of the content

- Watermarks should survive common transformations such as compression, cropping, noise, and filters

- The watermark must reliably indicate that the content was generated by an AI model using SynthID

Below is how SynthID implements these goals across different media types.

# Text media

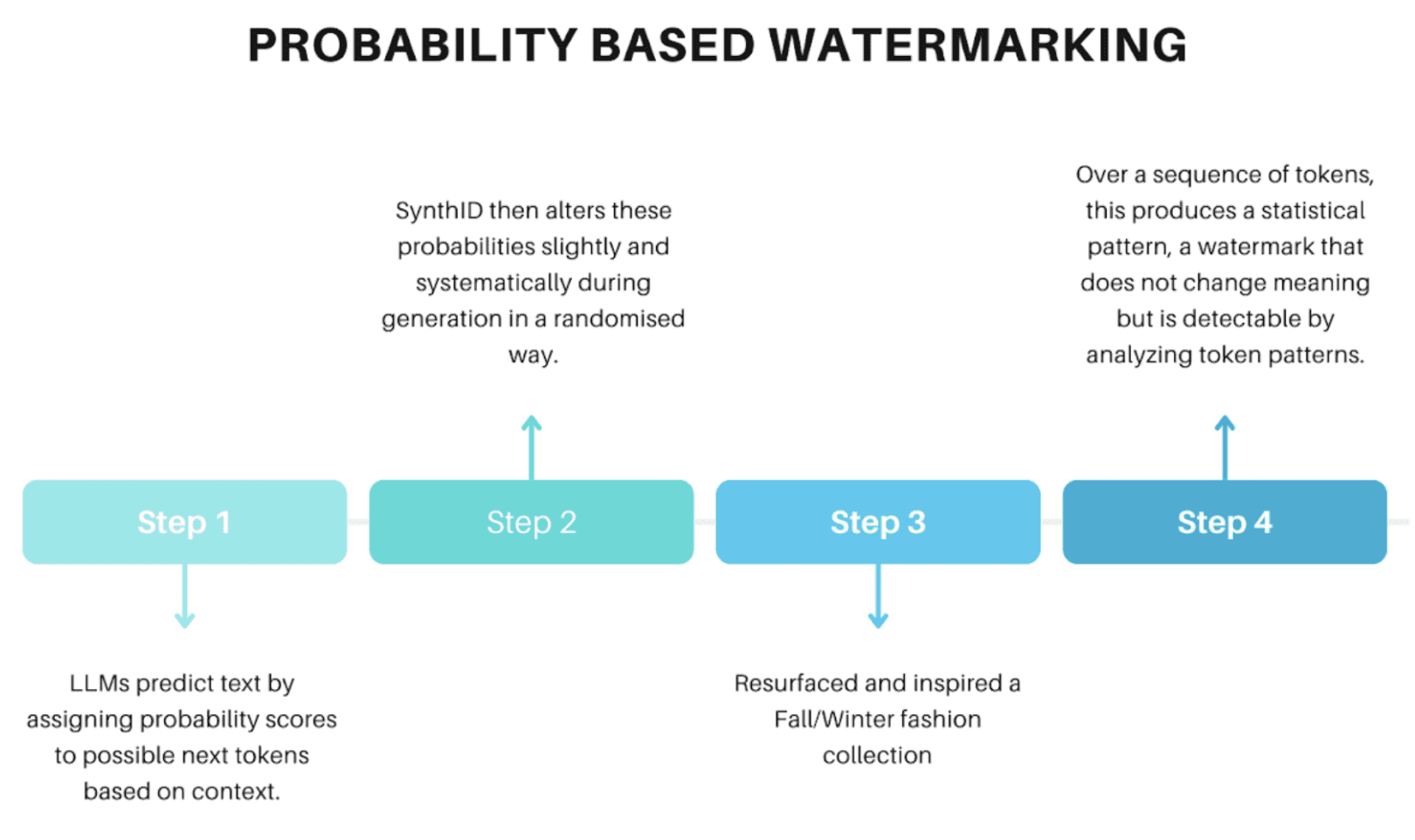

// Probability-Based Watermarking

SynthID embeds signals during text generation by adjusting the probability distribution that a large language model (LLM) uses when selecting the next token (a word or word fragment).

This method takes advantage of the fact that text generation is inherently probabilistic: small, controlled adjustments leave output quality largely unaffected while producing a statistical signature that a detector can trace.
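To make the idea concrete, here is a minimal sketch of keyed, probability-based watermarking. It is a toy and not SynthID's production algorithm: it uses a heavily simplified, single-round version of the tournament idea (draw two candidate tokens from the model's distribution and keep the one a secret key scores higher). The names `g_value`, `toy_model_probs`, `generate`, and `mean_g`, the tiny vocabulary, the uniform stand-in "model", and the key value are all assumptions made for illustration.

```python
import hashlib
import random

# Tiny hypothetical vocabulary; a real LLM has tens of thousands of tokens.
VOCAB = ["the", "a", "cat", "dog", "sat", "ran", "on", "mat", "fast", "slow"]


def g_value(key, context, token):
    """Pseudo-random bit derived from the secret key, the recent context, and a candidate token."""
    payload = f"{key}|{'|'.join(context)}|{token}".encode()
    return hashlib.sha256(payload).digest()[0] & 1


def toy_model_probs(context):
    """Stand-in for an LLM: a uniform next-token distribution (assumption for this sketch)."""
    return [1 / len(VOCAB)] * len(VOCAB)


def generate(n_tokens, rng, key=None, ngram_len=3):
    """Sample tokens; when a key is given, bias the choice toward tokens with g_value == 1."""
    tokens = []
    for _ in range(n_tokens):
        context = tuple(tokens[-ngram_len:])
        probs = toy_model_probs(context)
        if key is None:
            # Ordinary sampling, no watermark.
            choice = rng.choices(VOCAB, weights=probs)[0]
        else:
            # Draw two candidates from the model and keep the one the key prefers.
            a, b = rng.choices(VOCAB, weights=probs, k=2)
            choice = a if g_value(key, context, a) >= g_value(key, context, b) else b
        tokens.append(choice)
    return tokens


def mean_g(tokens, key, ngram_len=3):
    """Detection statistic: average keyed bit over the text (about 0.5 without a watermark)."""
    bits = [
        g_value(key, tuple(tokens[max(0, i - ngram_len):i]), tokens[i])
        for i in range(len(tokens))
    ]
    return sum(bits) / len(bits)


watermarked = generate(1000, random.Random(1), key=42)
plain = generate(1000, random.Random(2))
print("watermarked score:", mean_g(watermarked, key=42))
print("plain score:      ", mean_g(plain, key=42))
```

Every candidate still comes from the model's own distribution, which is why the adjustment is subtle, yet the watermarked text scores noticeably above 0.5 for the holder of the key while ordinary text stays close to 0.5.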

# Images and Video Media

// Pixel-level watermarking

For images and videos, SynthID embeds a watermark directly into the generated pixels. During generation (for example, by a diffusion model), SynthID subtly modifies pixel values at specific locations.

These changes fall below the threshold of human perception but encode machine-readable patterns. In video, the watermark is applied frame by frame, so it can still be detected over time even after transformations such as cropping, compression, added noise, or filtering.
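The principle of hiding a machine-readable pattern in small pixel changes can be sketched with a classic technique. The example below is an assumption-laden illustration, not SynthID's method: SynthID relies on learned models, whereas this sketch swaps in a simple additive spread-spectrum watermark (a keyed pattern of +1/-1 values added to the pixels and detected by correlation). The function names, the key, and the `strength` value are hypothetical.

```python
import random


def keyed_pattern(key, n):
    """Pseudo-random +1/-1 pattern that only the holder of the key can regenerate."""
    rng = random.Random(key)
    return [rng.choice((-1, 1)) for _ in range(n)]


def embed(pixels, key, strength=2):
    """Shift each 8-bit pixel by at most `strength` levels, following the keyed pattern."""
    pattern = keyed_pattern(key, len(pixels))
    return [min(255, max(0, p + strength * s)) for p, s in zip(pixels, pattern)]


def detect_score(pixels, key):
    """Correlate mean-centered pixels with the keyed pattern.

    The score is close to `strength` when the watermark is present
    and close to 0 when it is absent or the key is wrong.
    """
    pattern = keyed_pattern(key, len(pixels))
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) * s for p, s in zip(pixels, pattern)) / len(pixels)


# Flattened 256x256 grayscale "image" filled with random values (toy data).
rng = random.Random(0)
image = [rng.randint(30, 225) for _ in range(256 * 256)]

marked = embed(image, key=99)
print("max pixel change:", max(abs(a - b) for a, b in zip(image, marked)))
print("score, marked image:", detect_score(marked, key=99))
print("score, original image:", detect_score(image, key=99))
```

Because the signal is spread thinly across every pixel, no single pixel changes by more than two intensity levels, yet the correlation over the whole image separates marked from unmarked content. A learned watermark aims for the same trade-off with far better robustness to edits.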

# Audio media

// Spectrogram-based encoding

For audio content, the watermarking process takes advantage of the spectral representation of the audio.

- Convert audio waveform to time-frequency representation (spectrogram)

- Encode watermark patterns within the spectrogram using encoding techniques aligned with psychoacoustic (sound perception) properties

- Reconstruct the waveform from the modified spectrogram so that the embedded watermark is not noticeable to human listeners but can be detected by SynthID’s detector.

This approach is intended to keep the watermark detectable even after changes such as compression, added noise, or speed changes, although you should be aware that excessive changes may weaken detectability.
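The three steps above can be sketched in a few lines of pure Python. This is a toy under stated assumptions, not SynthID's audio watermark: it uses non-overlapping frames with a plain DFT instead of a proper spectrogram, applies a keyed gain of roughly plus or minus 10 percent to each frequency bin, and ignores psychoacoustic modeling entirely. `FRAME`, `strength`, the key, and all function names are hypothetical.

```python
import cmath
import math
import random

FRAME = 32  # samples per frame (toy value)
_TWIDDLE = [cmath.exp(-2j * math.pi * i / FRAME) for i in range(FRAME)]


def dft(frame):
    """Step 1: time domain to frequency domain for one frame."""
    return [sum(x * _TWIDDLE[(k * n) % FRAME] for n, x in enumerate(frame))
            for k in range(FRAME)]


def idft(spectrum):
    """Step 3: frequency domain back to a real-valued frame."""
    return [sum(X * _TWIDDLE[(-k * n) % FRAME] for k, X in enumerate(spectrum)).real / FRAME
            for n in range(FRAME)]


def keyed_signs(key, n_frames):
    """One keyed +1/-1 sign per frame and per frequency bin 1 .. FRAME/2 - 1."""
    rng = random.Random(key)
    return [[rng.choice((-1, 1)) for _ in range(1, FRAME // 2)] for _ in range(n_frames)]


def embed(samples, key, strength=0.1):
    """Step 2: scale each bin's magnitude up or down slightly, following the key."""
    n_frames = len(samples) // FRAME
    signs = keyed_signs(key, n_frames)
    out = []
    for f in range(n_frames):
        spec = dft(samples[f * FRAME:(f + 1) * FRAME])
        for j, s in enumerate(signs[f]):
            k = j + 1
            gain = 1 + strength * s
            spec[k] *= gain
            spec[FRAME - k] *= gain  # mirror bin, keeps the output real
        out.extend(idft(spec))
    return out + list(samples[n_frames * FRAME:])


def detect_score(samples, key):
    """Correlate mean-centered log-magnitudes with the keyed signs (about 0 without a watermark)."""
    n_frames = len(samples) // FRAME
    signs = keyed_signs(key, n_frames)
    log_mags, flat_signs = [], []
    for f in range(n_frames):
        spec = dft(samples[f * FRAME:(f + 1) * FRAME])
        for j, s in enumerate(signs[f]):
            log_mags.append(math.log(abs(spec[j + 1]) + 1e-12))
            flat_signs.append(s)
    mean = sum(log_mags) / len(log_mags)
    return sum(s * (m - mean) for s, m in zip(flat_signs, log_mags)) / len(log_mags)


# Toy "audio": a sine tone plus background noise.
rng = random.Random(0)
audio = [0.5 * math.sin(2 * math.pi * 4 * n / FRAME) + rng.gauss(0, 0.3)
         for n in range(FRAME * 512)]

marked = embed(audio, key=1234)
print("score, marked audio:", detect_score(marked, key=1234))
print("score, original audio:", detect_score(audio, key=1234))
```

A production system would choose which time-frequency regions to modify based on what listeners can actually hear, and would train the embedder and detector to tolerate edits. The sketch only shows why working in the frequency domain makes a keyed pattern recoverable.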

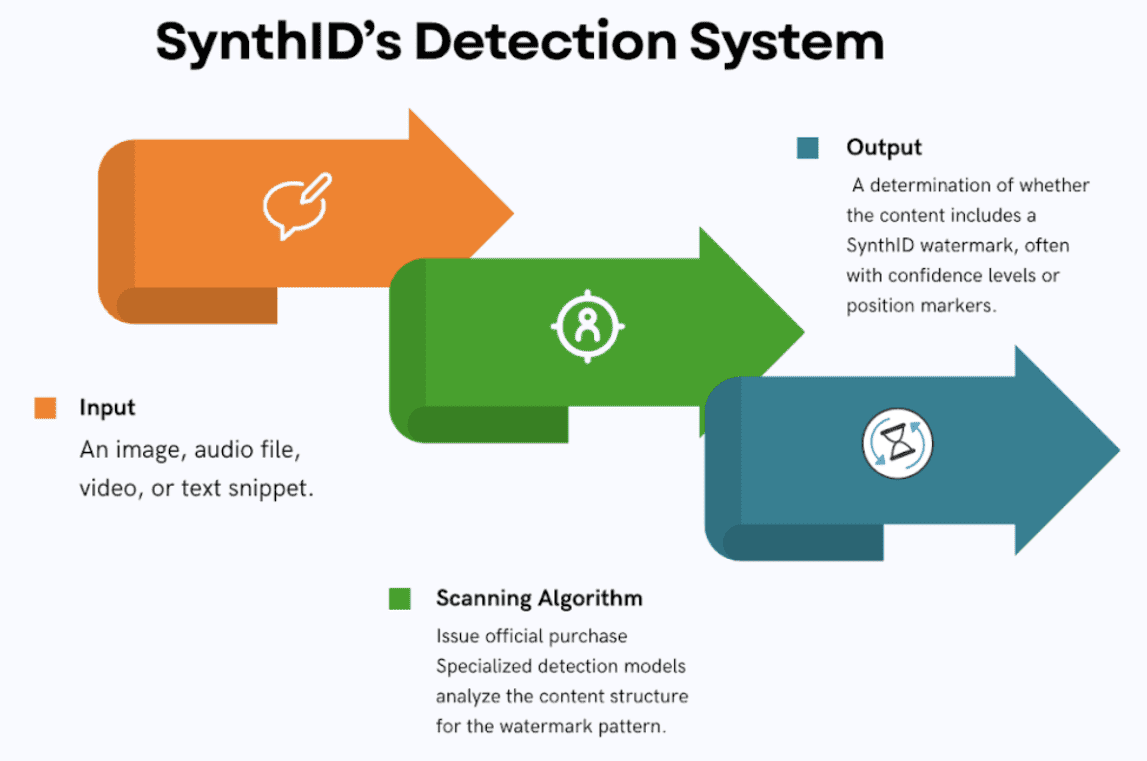

# Watermark detection and verification

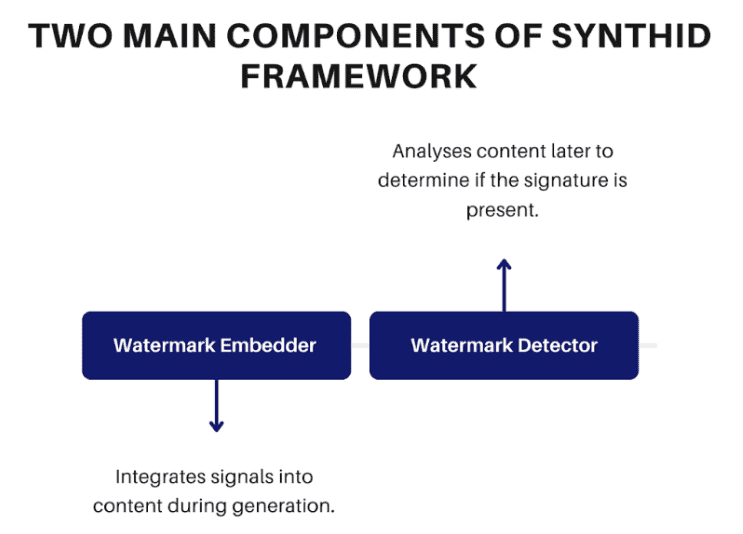

Once a watermark is embedded, SynthID’s detection system inspects a piece of content to determine whether the hidden signature is present.

Tools like the SynthID Detector portal allow users to upload media to be scanned for the presence of watermarks. Detection highlights the areas with the strongest watermark signal, allowing more granular provenance checks.
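One way to picture the decision step is as a statistical test. The sketch below is an illustration under assumptions, not SynthID's actual detector: it supposes the detector has extracted one keyed bit per token (or per pixel block) that behaves like a fair coin flip when no watermark is present, computes a z-score against that baseline, and maps it to one of three outcomes. The thresholds `upper` and `lower` and the function names are hypothetical.

```python
import math


def z_score(bits):
    """Standard deviations by which the share of 1-bits exceeds the 0.5 expected by chance."""
    n = len(bits)
    return (sum(bits) / n - 0.5) / (0.5 / math.sqrt(n))


def classify(bits, upper=4.0, lower=2.0):
    """Map the z-score to a verdict; the thresholds are illustrative only."""
    z = z_score(bits)
    if z >= upper:
        return "watermarked"
    if z <= lower:
        return "not watermarked"
    return "uncertain"


print(classify([1] * 75 + [0] * 25))  # 75% ones over 100 samples, z is about 5
print(classify([1] * 65 + [0] * 35))  # 65% ones over 100 samples, z is about 3
print(classify([1, 0] * 50))          # 50% ones over 100 samples, z is 0
```

Because the z-score grows with the square root of the sample count, longer texts and larger images give more confident verdicts, while short or heavily edited content is more likely to land in the uncertain band.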

# Strengths and limitations of SynthID

SynthID is designed to cope with typical content transformations, such as cropping, resizing, and image/video compression, as well as noise addition and audio format conversion. For text, it also tolerates light editing and paraphrasing.

However, significant changes such as heavy editing, aggressive paraphrasing, and non-AI transformations may reduce the detector's ability to find the watermark. Furthermore, SynthID detection works primarily for content generated by models that integrate the watermarking system, such as Google’s AI models. It cannot detect AI content from external models that lack SynthID integration.

# Applications and wider impact

Main use cases of SynthID include the following:

- Verifying content authenticity by separating AI-generated content from human-generated content

- Fighting misinformation, such as tracing the origins of synthetic media used in disinformation

- Supporting media provenance, helping news organizations, compliance platforms, and regulators track the origin of content

- Supporting research and academic integrity, reproducibility, and responsible AI use

By embedding consistent identifiers into AI outputs, SynthID increases transparency and trust in the generative AI ecosystem. As adoption grows, watermarking may become standard practice on AI platforms in industry and research.

# Conclusion

SynthID represents a notable advance in AI content traceability, embedding robust, imperceptible watermarks directly into generated media. By adjusting token probabilities for text, modifying pixels for images and video, and encoding patterns in the spectrogram for audio, SynthID aims for a practical balance of invisibility, robustness, and detectability without compromising content quality.

As the shift to generative AI continues, technologies like SynthID will play an increasingly central role in ensuring responsible deployment, countering misuse, and maintaining trust in a world where synthetic content is ubiquitous.

Shittu Olumide is a software engineer and technical writer who is passionate about leveraging cutting-edge technologies to craft compelling narratives, with a keen eye for detail and the ability to simplify complex concepts. You can also find Shittu on Twitter.