Zhipu AI has open-sourced the GLM-4.6V series, a pair of vision-language models that treat images, video, and tools as first-class inputs for agents rather than as afterthoughts layered on top of text.

Model Lineup and Context Length

The series contains two models. GLM-4.6V is a 106B-parameter foundation model for cloud and high-performance cluster workloads. GLM-4.6V-Flash is a 9B-parameter variant tuned for local deployment and low-latency use.

GLM-4.6V extends the training context window to 128K tokens. In practice, that is enough for roughly 150 pages of dense documents, 200 slide pages, or one hour of video in a single pass, because pages are encoded as images and consumed by the visual encoder.
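To put those capacity figures in perspective, the arithmetic below divides the context window across each kind of input. It is a rough sketch that assumes "128K" means 131,072 tokens and that the whole window is spent on visual content; neither assumption comes from the release notes, and `tokens_per_unit` is an illustrative helper, not part of any GLM tooling.

```python
# Back-of-envelope budget: how many tokens each page, slide, or second of
# video can use if the whole 128K window is spent on visual content.
CONTEXT_TOKENS = 128 * 1024  # assumption: "128K" means 131,072 tokens


def tokens_per_unit(units: int, context_tokens: int = CONTEXT_TOKENS) -> int:
    """Return the whole-token budget available per unit of input."""
    if units <= 0:
        raise ValueError("units must be positive")
    return context_tokens // units


budgets = {
    "document page (150 pages)": tokens_per_unit(150),
    "slide (200 slides)": tokens_per_unit(200),
    "video second (1 hour)": tokens_per_unit(60 * 60),
}

for label, budget in budgets.items():
    print(f"{label}: about {budget} tokens")
```

These are upper bounds, since the prompt and the generated answer share the same window.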

Native multimodal tool use

The main technical change is native multimodal function calling. Traditional tool use in LLM systems routes everything through text: images or pages are first converted into descriptions, the model calls tools with text arguments, and it then reads text responses. This loses information and adds latency.

GLM-4.6V introduces native multimodal function calling. Images, screenshots, and document pages are passed directly as tool parameters. Tools can return search result grids, charts, rendered web pages, or product images. The model consumes those visual outputs and fuses them with text in the same reasoning chain. This closes the loop from perception to execution, and Zhipu AI explicitly positions it as the bridge between visual perception and executable action for multimodal agents.
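The difference between the two approaches can be illustrated with a small sketch. The `ToolResult` type and the message layout below are hypothetical, loosely modeled on chat formats that split content into text and image parts; they are not confirmed to be GLM-4.6V's actual wire format.

```python
from dataclasses import dataclass, field


@dataclass
class ToolResult:
    """Hypothetical tool output: text plus zero or more image URLs."""
    tool_name: str
    text: str
    image_urls: list[str] = field(default_factory=list)


def to_text_only_message(result: ToolResult) -> dict:
    """Text-routed tool use: images are dropped, only the text survives."""
    return {"role": "tool", "name": result.tool_name, "content": result.text}


def to_multimodal_message(result: ToolResult) -> dict:
    """Multimodal tool use: images stay in the message as image parts."""
    parts = [{"type": "text", "text": result.text}]
    parts += [
        {"type": "image_url", "image_url": {"url": url}}
        for url in result.image_urls
    ]
    return {"role": "tool", "name": result.tool_name, "content": parts}


result = ToolResult(
    tool_name="product_search",  # illustrative tool name
    text="Found 2 matching products.",
    image_urls=["https://example.com/a.png", "https://example.com/b.png"],
)
text_msg = to_text_only_message(result)
mm_msg = to_multimodal_message(result)
print(text_msg["content"])
print(len(mm_msg["content"]), "content parts")
```

In the text-only path the two product images never reach the model, which is the information loss the article describes.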

To support this, Zhipu AI extends the Model Context Protocol (MCP) with URL-based multimodal handling. Tools receive and return URLs that identify specific images or frames, which avoids file size limits and allows precise selection within multi-image contexts.
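A minimal sketch of the idea, assuming a simple in-memory registry: tools exchange URLs instead of image bytes, so a crop of one page among several is addressed unambiguously. `ImageStore`, `crop_tool`, and the `example.com` URLs are all hypothetical and do not reflect the actual MCP extension.

```python
import itertools


class ImageStore:
    """Hypothetical in-memory registry mapping URLs to image records."""

    def __init__(self, base_url: str = "https://example.com/img") -> None:
        self._base_url = base_url
        self._records: dict[str, dict] = {}
        self._ids = itertools.count(1)

    def put(self, record: dict) -> str:
        """Store a record and return the URL that identifies it."""
        url = f"{self._base_url}/{next(self._ids)}"
        self._records[url] = record
        return url

    def get(self, url: str) -> dict:
        return self._records[url]


def crop_tool(store: ImageStore, image_url: str,
              box: tuple[int, int, int, int]) -> str:
    """Hypothetical tool: takes an image URL, returns the URL of the crop."""
    source = store.get(image_url)
    left, top, right, bottom = box
    inside = (0 <= left < right <= source["width"]
              and 0 <= top < bottom <= source["height"])
    if not inside:
        raise ValueError("crop box lies outside the source image")
    # Only metadata is tracked here; a real tool would also cut the pixels.
    return store.put({"width": right - left, "height": bottom - top,
                      "parent": image_url})


store = ImageStore()
page_1 = store.put({"width": 1000, "height": 1400, "parent": None})
page_2 = store.put({"width": 1000, "height": 1400, "parent": None})
chart = crop_tool(store, page_2, (100, 200, 600, 500))
print(chart, store.get(chart))
```

Because every image, including each derived crop, has its own URL, a later tool call can refer to exactly one of them without resending any pixel data.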

Rich text content, web search and frontend replication

The Zhipu AI research team describes four canonical scenarios:

First, rich-text content understanding and creation. GLM-4.6V reads mixed inputs such as papers, reports, or slide decks and produces structured, image-text interleaved output. It understands text, charts, figures, tables, and formulas in the same document. During generation it can crop relevant visuals or retrieve external images through tools, then run a visual audit step that filters out low-quality images and composes the final article with inline figures.

Second, visual web search. The model can detect user intent, plan which search tools to call, and combine text-to-image and image-to-text search. It then aligns the retrieved images and text, selects the relevant evidence, and outputs a structured answer, for example a visual comparison of products or places.

Third, frontend replication and visual interaction. GLM-4.6V is tuned for design-to-code workflows. From a UI screenshot, it reconstructs pixel-accurate HTML, CSS, and JavaScript. Developers can then mark a region on the screenshot and issue natural-language instructions, for example "move this button to the left" or "change the background of this card". The model maps those instructions back to the code and returns an updated snippet.
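One way to picture the visual interaction step is as a request that bundles the screenshot, the marked region, the instruction, and the current code. The sketch below is purely illustrative: the `Region` type, the normalized coordinates, and the field names are assumptions, not a documented GLM-4.6V request schema.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Region:
    """Marked rectangle in normalized screenshot coordinates (0.0 to 1.0)."""
    x0: float
    y0: float
    x1: float
    y1: float

    def __post_init__(self) -> None:
        ok = (0.0 <= self.x0 < self.x1 <= 1.0
              and 0.0 <= self.y0 < self.y1 <= 1.0)
        if not ok:
            raise ValueError("region must be a non-empty box inside the screenshot")


def build_edit_request(screenshot_url: str, region: Region,
                       instruction: str, current_code: str) -> dict:
    """Bundle everything needed to map a visual edit back to code."""
    return {
        "screenshot_url": screenshot_url,
        "region": asdict(region),
        "instruction": instruction,
        "current_code": current_code,
    }


request = build_edit_request(
    screenshot_url="https://example.com/ui.png",  # placeholder URL
    region=Region(0.5, 0.25, 0.75, 0.5),
    instruction="move this button to the left",
    current_code="<button class='cta'>Buy</button>",
)
print(request["region"])
```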

Fourth, long-context multimodal document understanding. GLM-4.6V can read multi-document inputs up to the 128K-token context limit by treating pages as images. The research team reports a case where the model processes financial reports from 4 public companies, extracts key metrics, and builds a comparison table, and another where it summarizes a full football match while remaining able to answer questions about specific goals and timestamps.

Architecture, data and reinforcement learning

The GLM-4.6V models belong to the GLM-V family and build on the technical reports for GLM-4.5V and GLM-4.1V-Thinking. The research team highlights three main technical ingredients.

First, long-sequence modeling. GLM-4.6V extends the training context window to 128K tokens and runs continual pre-training on large-scale long-context image-text corpora. It adopts compression alignment ideas from Glyph so that visual tokens carry dense information aligned with language tokens.

Second, world knowledge enhancement. The Zhipu AI team adds a billion-scale multimodal perception and world knowledge dataset at pre-training time. It covers layered encyclopedic concepts and everyday visual entities. The stated goal is to improve both basic perception and the completeness of cross-modal question answering, not only benchmark scores.

Third, agentic data synthesis and extended MCP. The research team generates large synthetic traces in which the model calls tools, processes visual outputs, and iterates on plans. They extend MCP with URL-based multimodal handling and an interleaved output mechanism. The generation stack follows a Draft, Image Selection, Final Polish sequence. The model can autonomously call cropping or search tools between these stages to place images at the right positions in the output.
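The three-stage generation sequence can be sketched as a small pipeline. Everything here is a stand-in: the stage functions, the `Candidate` type, and the numeric quality score used as the "visual audit" are assumptions chosen for illustration, and the real system uses model and tool calls at each stage.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    section: str    # draft section the image is meant for
    quality: float  # hypothetical audit score in [0, 1]


def draft(sections: list[str]) -> list[dict]:
    """Stage 1: produce text blocks with empty image slots."""
    return [{"title": s, "text": f"Draft text for {s}.", "image": None}
            for s in sections]


def select_images(doc: list[dict], candidates: list[Candidate],
                  min_quality: float = 0.5) -> list[dict]:
    """Stage 2: per section, keep the best candidate that passes the audit."""
    for block in doc:
        passing = [c for c in candidates
                   if c.section == block["title"] and c.quality >= min_quality]
        if passing:
            block["image"] = max(passing, key=lambda c: c.quality).url
    return doc


def polish(doc: list[dict]) -> str:
    """Stage 3: emit interleaved text and image references."""
    lines = []
    for block in doc:
        lines.append(block["text"])
        if block["image"]:
            lines.append(f"![{block['title']}]({block['image']})")
    return "\n".join(lines)


candidates = [
    Candidate("https://example.com/rev.png", "Revenue", 0.9),
    Candidate("https://example.com/rev2.png", "Revenue", 0.6),
    Candidate("https://example.com/blurry.png", "Outlook", 0.2),
]
article = polish(select_images(draft(["Revenue", "Outlook"]), candidates))
print(article)
```

In this run the low-scoring "Outlook" image is filtered out, so that section is emitted as text only.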

Tool invocation is part of the reinforcement learning objective. GLM-4.6V uses RL to align planning, instruction following, and format adherence across complex tool chains.


Key Takeaways

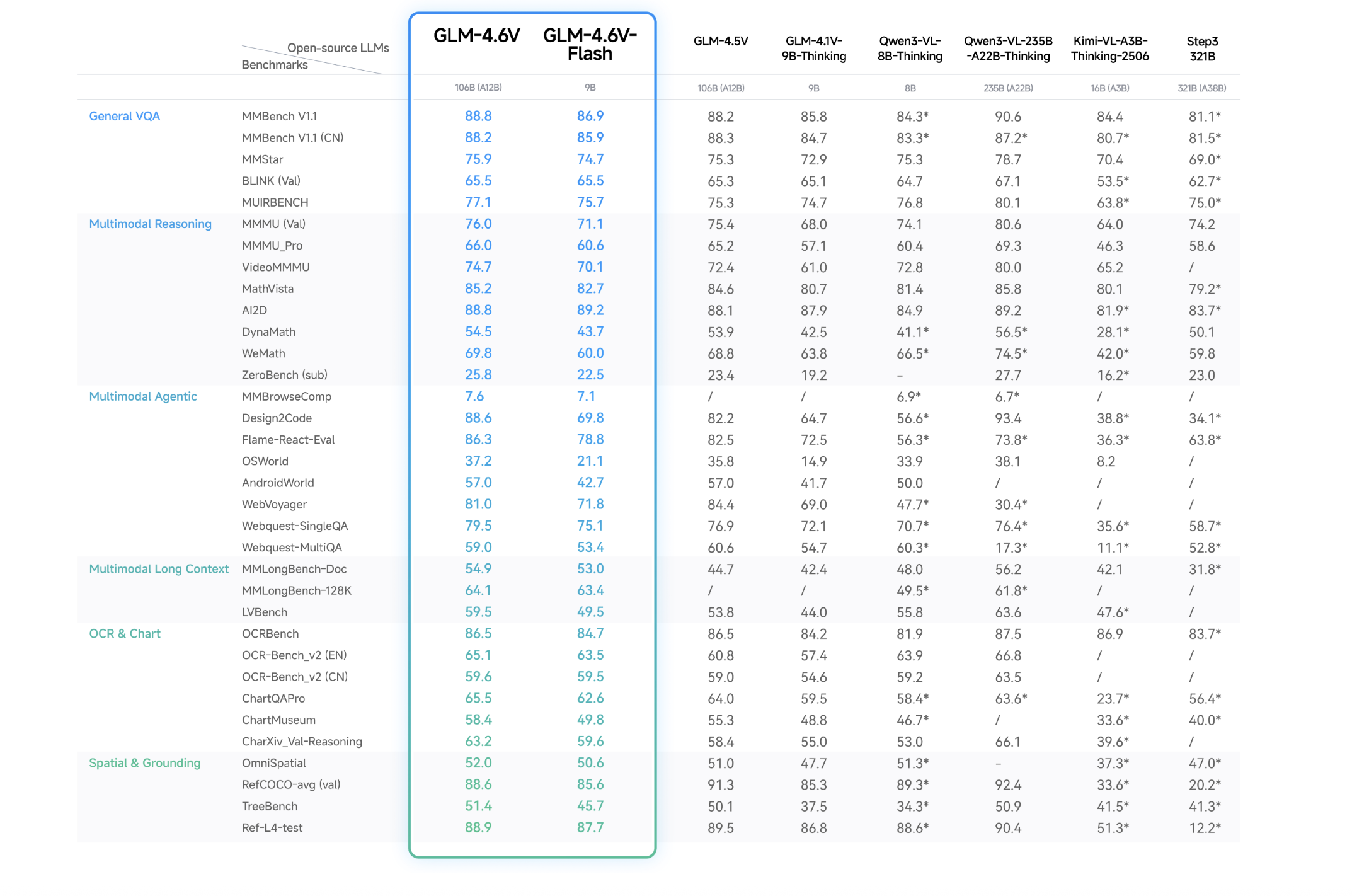

- GLM-4.6V is a 106B multimodal foundation model with a 128K token training context, and GLM-4.6V-Flash is a 9B variant optimized for local and low latency use.

- Both models support native multimodal function calling, so tools can directly consume and return images, video frames, and document pages, connecting visual perception to executable actions for agents.

- GLM-4.6V is trained for long-context multimodal understanding and interleaved generation, so it can read large mixed document sets and emit structured text with inline figures and tool-selected images in one pass.

- The series achieves state-of-the-art performance on major multimodal benchmarks among models of similar parameter scale and is released as open-source weights under the MIT license on Hugging Face and ModelScope.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of Marktechpost, an Artificial Intelligence media platform known for in-depth coverage of machine learning and deep learning news that is both technically sound and easily understood by a wide audience. The platform has over 2 million monthly views, which reflects its popularity among readers.